Simulating Reality: A Comprehensive Guide to Monte Carlo Methods for Modeling Particle Transport in Biological Tissues

This article provides a comprehensive guide for researchers and pharmaceutical professionals on applying Monte Carlo (MC) methods to simulate particle-tissue interactions.

Abstract

This article provides a comprehensive guide for researchers and pharmaceutical professionals on applying Monte Carlo (MC) methods to simulate particle-tissue interactions. We cover the foundational physics, from photon and electron transport to complex radiation chemistry, and detail the implementation of popular MC codes like Geant4, PENELOPE, and MCNP in biomedical contexts. We address critical challenges in modeling tissue heterogeneity and achieving computational efficiency, and present frameworks for validating simulations against experimental benchmarks. By comparing major MC toolkits, this guide aims to equip scientists with the knowledge to accurately model radiation therapy, diagnostic imaging, and nanoparticle drug delivery.

The Physics of Particle-Tissue Interactions: Core Principles for Monte Carlo Simulation

The deterministic paradigm, governed by ordinary differential equations (ODEs), has long been the cornerstone of modeling biological systems. However, this framework frequently fails at the mesoscopic scale of cellular processes within tissue. When Monte Carlo methods are used to simulate particle interactions in tissue (drug molecules, signaling proteins, or radiation tracks), the limitations of deterministic approaches become apparent. This whitepaper argues that stochastic methods are not merely an alternative but an essential framework for capturing the behavior of complex biological systems in which randomness is a feature, not noise.

The Limitation of Determinism in Biological Contexts

Deterministic models assume continuous concentrations and predictable rates, valid only when molecular populations are exceedingly high. In cellular and sub-cellular compartments, key regulators (e.g., transcription factors, regulatory RNAs) often exist in low copy numbers. A change of a few molecules can switch entire genetic programs. Furthermore, tissue heterogeneity ensures that even average behaviors are poor predictors of individual cellular outcomes, which is critical for understanding drug efficacy and toxicity.

Table 1: Comparison of Deterministic vs. Stochastic Modeling Outcomes for a Gene Regulatory Switch

| Aspect | Deterministic (ODE) Model | Stochastic (Gillespie) Model | Implication for Tissue Research |

|---|---|---|---|

| Bistable Switch Prediction | Predicts a precise, concentration-dependent switch point. | Reveals probabilistic switching and random transition times. | Explains heterogeneous cell fate decisions in a tissue. |

| Response to Low-Abundance Signal | Smooth, averaged response curve. | "All-or-nothing" stochastic bursts in individual cells. | Critical for modeling drug targeting of rare cell populations. |

| Extinction Events | Cannot simulate molecule count reaching zero. | Can accurately model molecular extinction. | Essential for simulating complete inhibitor efficacy or pathway blockade. |

The Stochastic Foundation: Master Equations and Monte Carlo

The rigorous foundation is the Chemical Master Equation (CME), which describes the time evolution of the probability distribution over the copy numbers of all molecular species. Because the CME is rarely analytically tractable for complex systems, stochastic simulation algorithms (SSA), a form of dynamic Monte Carlo, are used to generate statistically exact sample trajectories whose ensemble statistics approximate its solution.

Key Experimental Protocol: Stochastic Simulation Algorithm (Gillespie's Direct Method)

- System Definition: Define the set of N molecular species and M reaction channels. Specify the reaction rate constants \(k_j\) and the initial state vector \(X(t=0)\).

- Propensity Calculation: For the current state \(X(t)\), calculate the propensity function \(a_j\) for each reaction \(j\), where \(a_j\,dt\) is the probability that the reaction will occur in the next infinitesimal time interval \(dt\).

- Monte Carlo Step:

  - Generate two random numbers \(r_1\) and \(r_2\) uniformly from (0,1).

  - Calculate the time to the next reaction: \(\tau = (1/a_{tot}) \ln(1/r_1)\), where \(a_{tot} = \sum_{j=1}^{M} a_j\).

  - Determine the reaction index \(\mu\) such that \(\sum_{j=1}^{\mu-1} a_j < r_2 a_{tot} \leq \sum_{j=1}^{\mu} a_j\).

- Update: Update the system state: \(t = t + \tau\); \(X = X + \nu_\mu\), where \(\nu_\mu\) is the stoichiometric vector for reaction \(\mu\).

- Iterate: Return to the Propensity Calculation step (Step 2) until a termination condition is met.
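The protocol above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `gillespie_direct`, its argument layout, and the birth-death example with its rate constants are all assumptions introduced here.

```python
import math
import random


def gillespie_direct(x0, stoich, propensities, t_end, rng=None):
    """Run one SSA trajectory and return a list of (time, state) pairs.

    x0           -- initial copy numbers, one entry per species
    stoich       -- stoich[j] is the state-change vector of reaction j
    propensities -- propensities[j](x) returns a_j for the state x
    """
    rng = rng or random.Random()
    t, x = 0.0, list(x0)
    trajectory = [(t, tuple(x))]
    while True:
        a = [f(x) for f in propensities]
        a_tot = sum(a)
        if a_tot <= 0.0:
            break  # no reaction can fire any more
        r1 = 1.0 - rng.random()  # shifted to (0, 1] so the logarithm is finite
        r2 = rng.random()
        tau = math.log(1.0 / r1) / a_tot
        if t + tau > t_end:
            break  # next event would fall after the end of the simulation
        # Pick the first reaction whose cumulative propensity exceeds r2 * a_tot.
        target, cumulative, mu = r2 * a_tot, 0.0, len(a) - 1
        for j, a_j in enumerate(a):
            cumulative += a_j
            if target < cumulative:
                mu = j
                break
        t += tau
        x = [xi + d for xi, d in zip(x, stoich[mu])]
        trajectory.append((t, tuple(x)))
    return trajectory


if __name__ == "__main__":
    # Hypothetical birth-death system: 0 -> X (rate 5.0), X -> 0 (0.1 per molecule).
    path = gillespie_direct(
        x0=[0],
        stoich=[(+1,), (-1,)],
        propensities=[lambda x: 5.0, lambda x: 0.1 * x[0]],
        t_end=100.0,
        rng=random.Random(1),
    )
    print(len(path), path[-1])
```

Running many such trajectories with different seeds and histogramming the final states gives an empirical estimate of the distribution that the CME describes.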

Application to Particle Interactions in Tissue: A Monte Carlo Paradigm

This stochastic philosophy directly extends to the Monte Carlo modeling of physical particles in tissue. The trajectory of each photon, electron, or drug molecule is treated as a random walk, with interactions (scattering, absorption, reaction) governed by probability cross-sections.

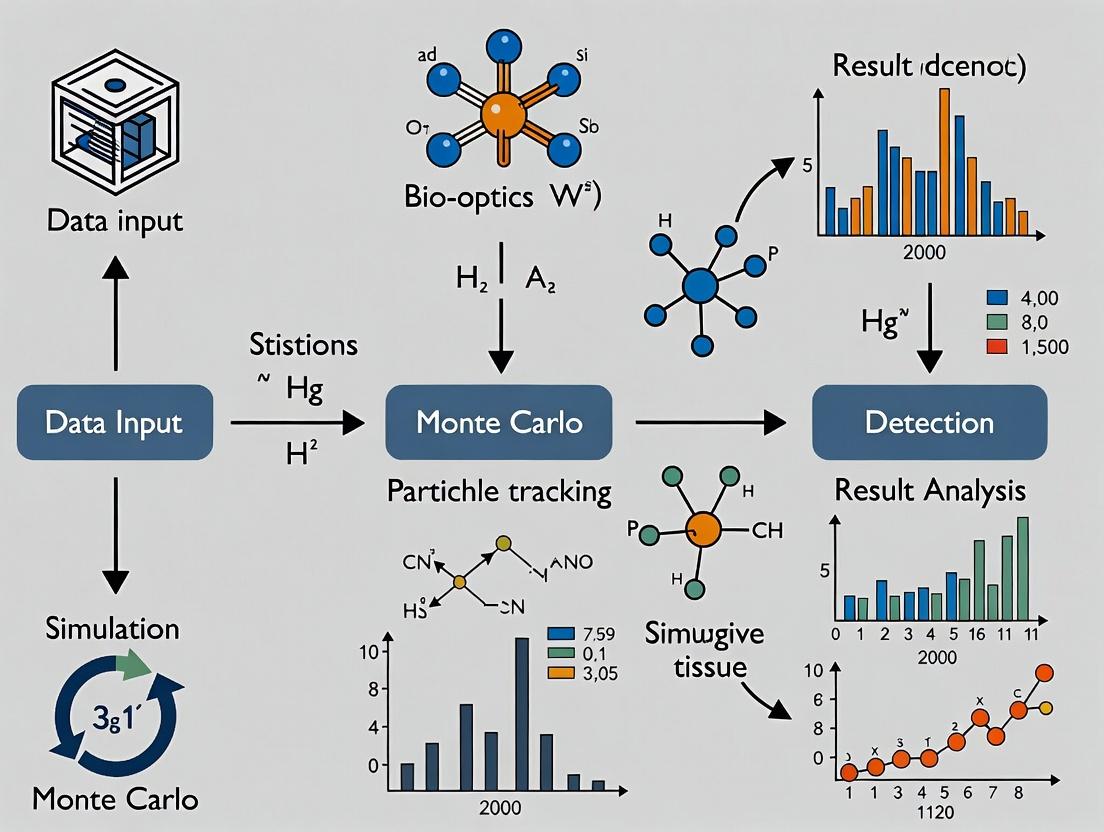

Title: Monte Carlo Particle Transport Workflow

Case Study: Stochasticity in EGFR Signaling and Drug Response

The Epidermal Growth Factor Receptor (EGFR) pathway exemplifies system complexity where stochastic methods are vital. Ligand binding, receptor dimerization, and trafficking events occur in low-copy numbers at the cell membrane, leading to significant cell-to-cell variability that influences tumor resistance to tyrosine kinase inhibitors (TKIs).

Title: Stochastic Events in the EGFR Signaling Pathway

Detailed Experimental Protocol: Simulating TKI Action with Stochastic Dynamics

- Model Construction: Define reaction network for EGFR: ligand association/dissociation, dimerization, phosphorylation, downstream adaptor binding, and recycling/degradation. Include TKI as a competitive binding reaction to the receptor kinase domain.

- Parameterization: Obtain kinetic rates from surface plasmon resonance (SPR) and fluorescence recovery after photobleaching (FRAP) data. Set initial conditions: ~10,000-100,000 surface EGFR molecules per cell, with variability.

- Stochastic Simulation: Use an accelerated SSA variant (e.g., tau-leaping) or a hybrid stochastic-deterministic scheme to simulate the network over 24 hours of simulated time, for 1000+ individual "cells."

- Perturbation: Introduce a pulse or constant concentration of TKI molecules, simulated as a distinct molecular species with its own diffusion and binding reactions.

- Output Analysis: Quantify the distribution of phosphorylated EGFR and downstream effector levels across the virtual cell population. Calculate the fraction of cells where signaling remains above a proliferation threshold despite treatment.

Table 2: Key Research Reagent Solutions for Stochastic Single-Cell Analysis

| Reagent / Material | Function in Stochastic Analysis |

|---|---|

| Fluorescent Reporters (FRET biosensors) | Enable live-cell, single-molecule imaging of protein activity (e.g., kinase activity, second messengers) to quantify stochastic fluctuations. |

| Microfluidic Cell Traps | Allow long-term, high-throughput imaging of individual cells under controlled perturbations for gathering statistical data on cell fate. |

| Single-Cell RNA-Seq Kits | Profile transcriptomic states of thousands of individual cells from a tissue to infer the underlying stochastic gene expression network. |

| Quantum Dots (QDs) | Photostable nanoparticles for tracking single receptor molecules over long durations on the live cell surface. |

| Stochastic Optical Reconstruction Microscopy (STORM) Dyes | Enable super-resolution imaging to visualize nanoscale spatial organization, a key modulator of stochastic reaction kinetics. |

The shift from deterministic to stochastic modeling is a fundamental necessity for meaningful research into particle interactions within tissue. Whether modeling the random walk of a radiation particle depositing energy or the probabilistic collision of a drug molecule with its target receptor, Monte Carlo and other stochastic methods embrace the inherent randomness that defines biological complexity at the cellular scale. This paradigm provides not just more realistic simulations, but also a framework to understand—and eventually predict—the heterogeneous outcomes observed in drug development and disease progression.

1. Introduction

This whitepaper details the core particle types employed in biomedical applications, with a specific focus on their physical interactions with biological tissue. The analysis is framed within the context of advancing Monte Carlo (MC) simulation methodologies, which provide the gold standard for stochastically modeling these interactions to predict energy deposition, dose distribution, and subsequent biological effects. Accurate MC modeling is critical for therapy optimization, diagnostic imaging refinement, and fundamental radiobiological research.

2. Particle Characteristics and Interactions

The key particles differ fundamentally in mass, charge, and their primary interaction mechanisms with matter, leading to distinct depth-dose profiles and biological impact.

Table 1: Fundamental Properties and Primary Interaction Mechanisms

| Particle | Rest Mass (MeV/c²) | Electric Charge | Primary Interaction Mechanisms in Tissue |

|---|---|---|---|

| Photon (X/Gamma-ray) | 0 | 0 | Compton Scattering, Photoelectric Effect, Pair Production |

| Electron | 0.511 | -1 | Collisional (Ionization/Excitation), Radiative (Bremsstrahlung) |

| Proton | 938.27 | +1 | Coulomb Interactions with Atomic Electrons (Ionization/Excitation), Multiple Coulomb Scattering, Nuclear Interactions (at high energy) |

| Heavy Ion (e.g., C-12) | ~11178 (for C-12) | +Z | Coulomb Scattering, Dense Ionization Tracks, Nuclear Fragmentation |

Table 2: Key Biomedical Applications and Dose Distribution Features

| Particle | Major Applications | Key Dose Distribution Feature | Relative Biological Effectiveness (RBE) Range |

|---|---|---|---|

| Photon | Radiotherapy (IMRT, VMAT), CT/PET Imaging | Exponentially attenuating; exit dose | 1.0 (Reference) |

| Electron | Superficial Tumors, Intraoperative Radiotherapy | Rapid dose fall-off beyond target depth | 1.0 - 1.5 |

| Proton | Particle Therapy (e.g., ocular, pediatric tumors) | Bragg Peak; sharp distal fall-off | 1.0 - 1.1 (in clinical use) |

| Heavy Ion (C-12) | Particle Therapy (radioresistant tumors) | Inverse Depth Dose; sharpest peak | 2.0 - 5.0 (variable with LET) |

3. Monte Carlo Simulation of Particle Transport

MC methods simulate individual particle histories through tissue, modeling stochastic interactions based on cross-section data. The general workflow for a particle-in-tissue MC code involves:

Experimental Protocol 1: Core Monte Carlo Simulation Cycle

- Geometry Definition: Model patient/tissue geometry (via CT voxels or mathematical phantoms).

- Source Definition: Initialize particle type, energy, and spatial/directional distribution.

- Step Length Calculation: Sample free path to next interaction point using total cross-section.

- Interaction Sampling: Determine interaction type (e.g., Compton vs. Photoelectric) probabilistically.

- Energy Deposition: Record energy deposited in local voxel from ionization/excitation.

- Secondary Particle Generation: Create and stack secondary particles (e.g., delta rays, characteristic X-rays).

- Particle Update: Adjust primary particle energy and direction post-interaction.

- Termination & History: Repeat steps 3-7 until particle energy falls below cutoff or exits geometry. Loop over millions of histories.

- Dose Scoring: Aggregate energy deposition per voxel, convert to dose (Gy).
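The cycle above can be illustrated with a deliberately simplified toy model. The sketch below is not how Geant4, MCNP, or PENELOPE work internally: it assumes a homogeneous 1D slab, energy-independent attenuation coefficients, isotropic scattering, and a fixed fractional energy loss per scatter. The function name `simulate_slab` and all parameter values are hypothetical.

```python
import math
import random


def simulate_slab(n_histories, mu_pe, mu_sc, thickness, n_bins, e0,
                  loss_fraction=0.3, e_cut=0.01, seed=0):
    """Toy analog photon transport through a homogeneous slab.

    mu_pe, mu_sc -- linear coefficients (1/cm) for absorption and scattering,
                    held constant with energy (a deliberate simplification)
    Returns (energy deposited per depth bin, escaped energy,
             number of photons transmitted without any interaction).
    """
    rng = random.Random(seed)
    mu_tot = mu_pe + mu_sc
    deposited = [0.0] * n_bins
    escaped, primaries_through = 0.0, 0
    for _ in range(n_histories):
        # Source definition: normal incidence at the slab surface.
        z, cos_theta, energy, collided = 0.0, 1.0, e0, False
        while True:
            # Step length: sample the free path from the total coefficient.
            step = -math.log(1.0 - rng.random()) / mu_tot
            z += step * cos_theta
            if z < 0.0 or z >= thickness:  # photon leaves the geometry
                escaped += energy
                if not collided and z >= thickness:
                    primaries_through += 1
                break
            collided = True
            voxel = min(n_bins - 1, int(z / thickness * n_bins))
            # Interaction sampling: absorption vs. scattering.
            if rng.random() < mu_pe / mu_tot:
                deposited[voxel] += energy  # full local absorption
                break
            # Scattering: secondary electron energy is deposited locally.
            deposited[voxel] += loss_fraction * energy
            energy *= 1.0 - loss_fraction
            cos_theta = 2.0 * rng.random() - 1.0  # isotropic new direction
            if energy < e_cut:  # termination below the energy cutoff
                deposited[voxel] += energy
                break
    return deposited, escaped, primaries_through


if __name__ == "__main__":
    dep, esc, through = simulate_slab(20000, 0.05, 0.15, 5.0, 10, 1.0)
    print(through / 20000, sum(dep), esc)
```

Two quick sanity checks are built into the return values: the unscattered transmitted fraction should approach exp(-μ_tot × thickness), and deposited plus escaped energy should equal the total source energy. Dividing each bin's energy by its mass would give dose.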

Title: Monte Carlo Particle Transport Simulation Workflow

4. Biological Effectiveness and Experimental Modeling

The variable biological effectiveness of particles is primarily quantified through cell survival assays, linked to the density of ionization events (Linear Energy Transfer, LET).

Experimental Protocol 2: Clonogenic Survival Assay for RBE Determination

- Cell Preparation: Seed mammalian cell lines (e.g., V79, H460) in culture flasks and grow to exponential phase.

- Irradiation: Trypsinize, count, and plate cells in known numbers. Irradiate plates with graded doses of reference (e.g., 250 kVp X-rays) and test particles (electrons, protons, ions). Include unirradiated controls. Use precise particle accelerators (cyclotron/synchrotron) with beam monitoring.

- Incubation: Place dishes in a 37°C, 5% CO₂ incubator for 10-14 days to allow colony formation.

- Staining & Counting: Fix colonies with methanol/acetic acid and stain with crystal violet. Count only colonies containing at least 50 cells.

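- Data Analysis: Calculate the surviving fraction (SF = colonies counted / (cells seeded × plating efficiency)), where plating efficiency is the colony-forming fraction of the unirradiated controls. Fit SF vs. dose data with the Linear-Quadratic (LQ) model: SF = exp(-αD - βD²). Calculate RBE at a specific SF (e.g., 10%) as RBE₁₀ = D_ref / D_test, the ratio of reference to test dose giving the same survival.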
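The data-analysis step can be sketched as follows. This is a minimal illustration that fits the LQ model by unweighted least squares on -ln(SF), which is a simplification of what dedicated fitting software does; the function names and the α, β values in the example are hypothetical.

```python
import math


def surviving_fraction(colonies, cells_seeded, plating_efficiency):
    """SF = colonies counted / (cells seeded x plating efficiency)."""
    return colonies / (cells_seeded * plating_efficiency)


def fit_lq(doses, surviving_fractions):
    """Least-squares fit of -ln(SF) = alpha*D + beta*D^2 (no intercept)."""
    y = [-math.log(sf) for sf in surviving_fractions]
    s11 = sum(d ** 2 for d in doses)
    s12 = sum(d ** 3 for d in doses)
    s22 = sum(d ** 4 for d in doses)
    b1 = sum(d * yi for d, yi in zip(doses, y))
    b2 = sum(d ** 2 * yi for d, yi in zip(doses, y))
    det = s11 * s22 - s12 ** 2
    alpha = (b1 * s22 - b2 * s12) / det
    beta = (s11 * b2 - s12 * b1) / det
    return alpha, beta


def dose_for_survival(alpha, beta, sf):
    """Dose D at which exp(-alpha*D - beta*D^2) equals sf."""
    target = -math.log(sf)
    if beta == 0.0:
        return target / alpha
    return (-alpha + math.sqrt(alpha ** 2 + 4.0 * beta * target)) / (2.0 * beta)


def rbe_at_survival(ref_params, test_params, sf=0.1):
    """RBE = D_ref / D_test at the same surviving fraction."""
    return dose_for_survival(*ref_params, sf) / dose_for_survival(*test_params, sf)


if __name__ == "__main__":
    # Hypothetical parameters (Gy^-1, Gy^-2) for reference X-rays and a test ion beam.
    print(rbe_at_survival((0.15, 0.05), (0.45, 0.03)))
```

In practice the fit would usually be weighted by the counting uncertainty of each dose point, since low surviving fractions rest on few colonies.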

Title: Particle-Induced DNA Damage and Repair Pathway

Table 3: The Scientist's Toolkit - Key Reagents & Materials for Radiobiology Experiments

| Item | Function in Experiment |

|---|---|

| Dulbecco's Modified Eagle Medium (DMEM) | Cell culture medium providing nutrients for growth pre- and post-irradiation. |

| Fetal Bovine Serum (FBS) | Serum supplement for culture media, providing essential growth factors and proteins. |

| Trypsin-EDTA Solution | Proteolytic enzyme used to detach adherent cells for counting and re-plating. |

| Crystal Violet Stain | Dye used to fix and stain formed cell colonies for visualization and counting. |

| Linear-Quadratic Model Fitting Software (e.g., Origin, R) | For analyzing survival curve data to extract α, β parameters and calculate RBE. |

| Radiochromic Film / Ionization Chamber | For absolute dosimetry and beam profile verification prior to biological experiments. |

| Monte Carlo Code (e.g., TOPAS/GEANT4, FLUKA, MCNP) | To simulate detailed particle transport and predict physical dose/LET distributions in silico. |

5. Conclusion

Photons, electrons, protons, and heavy ions form a versatile toolkit for biomedicine, each with unique physical and biological signatures. The continuous refinement of Monte Carlo methods, informed by precise experimental radiobiology protocols, is essential to fully exploit these differences. This synergy between simulation and experiment drives innovation in targeted radiotherapy and diagnostic imaging, ultimately aiming to improve therapeutic ratios and patient outcomes.

This technical guide details the three primary photon interaction mechanisms relevant to medical physics and therapeutic research: the photoelectric effect, Compton scattering, and pair production. Framed within the context of Monte Carlo simulation for modeling particle interactions in biological tissue, this whitepaper provides the foundational physics, quantitative data, experimental methodologies, and research tools necessary for accurate simulation in drug development and radiation therapy research.

In Monte Carlo simulations of radiation transport through tissue—a critical tool for radiotherapy planning, dosimetry, and radiopharmaceutical development—the accurate modeling of photon interactions is paramount. The dominant mechanisms by which photons deposit energy in tissue vary with photon energy and the atomic number (Z) of the absorbing material. This document provides an in-depth analysis of these core interactions to inform the development and validation of Monte Carlo codes like GEANT4, MCNP, and PENELOPE.

Core Interaction Mechanisms

Photoelectric Effect

The photoelectric effect describes the complete absorption of an incident photon by an atom. The photon ejects a bound electron (typically from an inner shell), with the photon's energy transferred to the electron as kinetic energy, minus the electron's binding energy. The resulting vacancy leads to characteristic X-ray emission or Auger electron ejection.

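Key Dependencies: The cross section (interaction probability) per atom scales approximately as Z⁴/Eᵧ³ (the Z exponent ranges from about 4 to 5 and the energy exponent from about 3 to 3.5, depending on the energy range), making it dominant for low-energy photons and high-Z materials.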

Compton Scattering

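Compton scattering is the inelastic scattering of a photon by a loosely bound or free electron. The photon transfers part of its energy to the electron and is deflected with reduced energy. The relationship between the scattering angle (θ) and the energy loss follows from the Compton kinematic relation, E' = E / (1 + (E/mₑc²)(1 - cos θ)), while the angular distribution of the scattered photons is given by the Klein-Nishina differential cross-section.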

Key Dependencies: Cross section per electron is nearly independent of Z. Dominant in soft tissue for intermediate photon energies (roughly 30 keV to 25 MeV).
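As a small worked example of the Compton relations, the sketch below evaluates the scattered photon energy and the Klein-Nishina differential cross-section per free electron. The function and constant names are introduced here for illustration; the physical constants are standard values.

```python
import math

ELECTRON_REST_ENERGY_MEV = 0.51099895
CLASSICAL_ELECTRON_RADIUS_CM = 2.8179403e-13


def compton_scattered_energy(e_mev, theta_rad):
    """Photon energy after scattering through angle theta off a free electron."""
    k = e_mev / ELECTRON_REST_ENERGY_MEV
    return e_mev / (1.0 + k * (1.0 - math.cos(theta_rad)))


def klein_nishina_dsigma_domega(e_mev, theta_rad):
    """Klein-Nishina differential cross-section per electron, in cm^2/sr."""
    ratio = compton_scattered_energy(e_mev, theta_rad) / e_mev
    return 0.5 * CLASSICAL_ELECTRON_RADIUS_CM ** 2 * ratio ** 2 * (
        ratio + 1.0 / ratio - math.sin(theta_rad) ** 2
    )


if __name__ == "__main__":
    # Cs-137 photons (0.662 MeV) at a few scattering angles.
    for degrees in (0, 45, 90, 180):
        theta = math.radians(degrees)
        print(degrees,
              compton_scattered_energy(0.662, theta),
              klein_nishina_dsigma_domega(0.662, theta))
```

At θ = 0 the photon keeps its full energy and the cross-section reduces to rₑ²; at low photon energies the expression approaches the Thomson form (rₑ²/2)(1 + cos²θ).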

Pair Production

Pair production occurs when a photon with energy exceeding 1.022 MeV (twice the rest mass of an electron) interacts with the strong electric field near a nucleus. The photon is converted into an electron-positron pair. Any excess photon energy above the threshold becomes kinetic energy of the created particles.

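Key Dependencies: Cross section scales as ~ Z² and increases approximately logarithmically with photon energy above threshold. In low-Z soft tissue it contributes noticeably above ~5 MeV but overtakes Compton scattering only at roughly 25 MeV; the crossover occurs at much lower energies in high-Z materials.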

The following tables consolidate key quantitative parameters for these interactions, essential for Monte Carlo cross-section libraries.

Table 1: Dominant Interaction Regions by Photon Energy in Soft Tissue (Z_eff ≈ 7.5)

| Photon Energy Range | Dominant Interaction | Approximate Probability Fraction | Key Monte Carlo Consideration |

|---|---|---|---|

| < 30 keV | Photoelectric Effect | Roughly 40% at 30 keV, rising to > 90% at 10 keV | Critical for imaging, low-dose regions |

| 30 keV - ~25 MeV | Compton Scattering | > 70% from ~50 keV to ~10 MeV | Main contributor to dose in radiotherapy |

| > ~25 MeV | Pair Production | > 50%, increasing with energy (roughly 20-25% at 10 MeV) | Secondary but non-negligible in high-energy therapy beams |

Table 2: Key Cross-Section Formulas and Dependencies

| Interaction | Atomic Cross-Section (σ) Proportionality | Energy Threshold | Primary Secondary Particles |

|---|---|---|---|

| Photoelectric | ~ Zⁿ/Eᵧ^3.5 (n ≈ 4-5) | > Electron Binding Energy | Photoelectron, Characteristic X-ray, Auger e⁻ |

| Compton (per atom) | ~ Z * Klein-Nishina (per electron) | None (free electron) | Recoil Electron, Scattered Photon |

| Pair Production (nuclear field) | ~ Z² * f(Eᵧ) | 1.022 MeV | Electron-Positron Pair |

Table 3: Typical Mean Free Paths in Water (cm)

| Photon Energy | Photoelectric (λ_pe) | Compton (λ_c) | Pair Production (λ_pp) |

|---|---|---|---|

| 50 keV | ~37 | ~5.5 | — |

| 200 keV | > 1000 | ~7.4 | — |

| 1 MeV | — | ~14 | — (below the 1.022 MeV threshold) |

| 10 MeV | — | ~58 | ~200 |

Experimental Protocols for Validation

Monte Carlo models require validation against empirical data. Below are summarized protocols for measuring interaction cross-sections.

Protocol: Measuring Compton Scattering Cross-Sections

Objective: To measure the differential cross-section for Compton scattering as a function of scattering angle.

Materials: Monoenergetic gamma source (e.g., Cs-137, 662 keV), High-Purity Germanium (HPGe) detector, Collimators, Scatterer (low-Z thin foil), Precision goniometer, Multi-channel analyzer.

Procedure:

- Mount the source and detector on the goniometer arms. Use collimators to define narrow beams.

- Place the thin scatterer at the center of the goniometer.

- Measure the primary beam intensity (I₀) without the scatterer.

- For angles θ from 10° to 150°, measure the energy and count rate (I_s) of the scattered photons.

- Correct for detector efficiency, air scattering, and absorption in the scatterer.

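- Calculate the measured differential cross-section from the corrected count rates, the incident intensity, the electron areal density of the scatterer, and the detector solid angle, then compare it with the Klein-Nishina prediction at each angle.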
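The final calculation step can be sketched under a thin-target assumption, in which the scattered count rate is proportional to the incident rate, the electron areal density of the foil, the detector solid angle, and the detector efficiency. The function names and the aluminium foil example are illustrative assumptions, not part of the protocol.

```python
AVOGADRO = 6.02214076e23  # atoms per mole


def electron_areal_density(density_g_cm3, thickness_cm, z, molar_mass_g_mol):
    """Electrons per cm^2 presented by a thin foil of a single element."""
    return density_g_cm3 * thickness_cm * AVOGADRO * z / molar_mass_g_mol


def measured_dsigma_domega(scattered_rate, incident_rate, electrons_per_cm2,
                           solid_angle_sr, efficiency):
    """Thin-target estimate of the per-electron cross-section (cm^2/sr).

    scattered_rate -- corrected count rate at the detector (1/s)
    incident_rate  -- photons per second striking the scatterer (1/s)
    efficiency     -- detector full-energy efficiency at the scattered energy
    """
    return scattered_rate / (
        incident_rate * electrons_per_cm2 * solid_angle_sr * efficiency
    )


if __name__ == "__main__":
    # Hypothetical setup: 1 mm aluminium foil, 1e-3 sr detector aperture.
    n_e = electron_areal_density(2.70, 0.1, 13, 26.98)
    print(n_e, measured_dsigma_domega(0.8, 1.0e6, n_e, 1.0e-3, 0.2))
```

The thin-target form ignores attenuation and multiple scattering inside the foil, which is why the preceding correction step matters before this formula is applied.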

Protocol: Verifying Photoelectric Effect Z-Dependence

Objective: To validate the ~Z⁴ dependence of the photoelectric cross-section.

Materials: Low-energy X-ray source (e.g., 59.5 keV from Am-241), Thin foils of known thickness and varying Z (e.g., Al, Cu, Sn, Pb), NaI(Tl) or HPGe detector.

Procedure:

- Measure the incident photon flux (I₀) with no absorber.

- For each foil, measure the transmitted flux (I).

- Calculate the linear attenuation coefficient (μ) for each material from I = I₀ exp(-μt).

- Subtract the theoretically calculated Compton and Rayleigh contributions to isolate μ_photoelectric.

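- Convert μ_pe to a per-atom cross-section, σ_pe = μ_pe · A / (ρ · N_A), so that differences in foil density do not mask the trend, and plot log(σ_pe) vs log(Z). The slope should be approximately 4, provided the photon energy lies above the K-edge of every foil (at 59.5 keV this holds for Al, Cu, and Sn but not for Pb, whose K-edge is near 88 keV).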
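The analysis in this protocol reduces to three small calculations, sketched below. The conversion to a per-atom cross-section is an added assumption of this sketch (the linear coefficient also depends on each foil's density), and the function names and synthetic data are hypothetical.

```python
import math

AVOGADRO = 6.02214076e23  # atoms per mole


def linear_attenuation(i0, i, thickness_cm):
    """mu from I = I0 * exp(-mu * t), in 1/cm."""
    return math.log(i0 / i) / thickness_cm


def atomic_cross_section(mu_per_cm, density_g_cm3, molar_mass_g_mol):
    """Per-atom cross-section (cm^2) from a linear coefficient (1/cm)."""
    return mu_per_cm * molar_mass_g_mol / (density_g_cm3 * AVOGADRO)


def loglog_slope(xs, ys):
    """Least-squares slope of log(y) against log(x)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    sxy = sum((a - mx) * (b - my) for a, b in zip(lx, ly))
    sxx = sum((a - mx) ** 2 for a in lx)
    return sxy / sxx


if __name__ == "__main__":
    # Synthetic cross-sections that follow an exact Z^4 law, for illustration only.
    z_values = [13, 29, 50]
    sigma = [1.0e-30 * z ** 4 for z in z_values]
    print(loglog_slope(z_values, sigma))
```

With real foil data, the fitted slope is sensitive to absorption edges, so points measured on opposite sides of an element's K-edge should not be fitted together.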

Monte Carlo Integration & Workflow

The modeling of these interactions within a Monte Carlo simulation for tissue involves a structured workflow.

Diagram Title: Monte Carlo Photon Interaction Decision & Sub-Process Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Experimental Validation Studies

| Item / Reagent | Function in Experiment | Key Specification / Note |

|---|---|---|

| Monoenergetic Gamma/X-ray Sources (Am-241, Cs-137, Co-60) | Provide well-defined photon beams for cross-section measurement. | Sealed sources with known activity and emission probability. |

| High-Purity Germanium (HPGe) Detector | High-resolution spectroscopy to distinguish photopeaks, Compton edges, and annihilation peaks. | Requires liquid nitrogen cooling. Efficiency calibration essential. |

| Tissue-Equivalent Phantoms | Simulate human tissue (e.g., lung, muscle, bone) for dose deposition studies. | Defined by ICRU/ICRP compositions; can be solid, liquid, or gel. |

| Radiochromic Films (e.g., EBT3) | Measure 2D dose distributions from complex photon interactions in phantom. | Self-developing, near tissue-equivalent, high spatial resolution. |

| Monte Carlo Code Package (GEANT4, MCNP6, TOPAS) | Simulate stochastic photon interactions using physics models validated against this data. | Requires accurate physics list selection (e.g., G4EmLivermorePhysics for low-E). |

| NIST Standard Reference Materials (e.g., SRM for X-ray Attenuation) | Calibrate experimental setups and validate simulated attenuation coefficients. | Provides certified μ/ρ values for specific materials and energies. |

| Precision Collimators & Diaphragms | Define narrow photon beams to control scatter and improve angular resolution. | Often made from high-Z materials (e.g., tungsten) to absorb unwanted photons. |

Accurate modeling of tissue properties is a foundational pillar in the application of Monte Carlo (MC) methods for simulating particle transport in biological systems. Within the broader thesis on advancing MC techniques for medical physics and radiation therapy, this technical guide details the critical parameters of tissue density, elemental composition, and their resultant energy-dependent interaction cross-sections. These parameters directly govern the stochastic processes of energy deposition, scattering, and nuclear interactions that MC algorithms are designed to simulate, ultimately determining the accuracy of dose calculations in radiotherapy, radioprotection studies, and biomedical imaging.

Core Tissue Properties and Their Quantification

Density (ρ)

Tissue density, mass per unit volume (g/cm³), is the primary scaling factor for macroscopic cross-sections in particle transport. It is not homogeneous and varies significantly between and within tissues.

Elemental Composition

The stoichiometric composition of a tissue, defined by the mass or fraction of constituent elements (e.g., H, C, N, O, P, Ca), dictates its microscopic interaction probabilities. Modern reference data are derived from techniques like cryo-mass spectrometry and prompt-gamma neutron activation analysis.

Energy-Dependent Cross-Sections (σ(E))

The probability of a specific interaction (e.g., Compton scattering, photoelectric absorption, elastic scattering) between an incident particle and a target atom is quantified by its cross-section (barns/atom), which is a strong function of particle energy (E). For compound materials like tissue, the effective cross-section is computed as the weighted sum of elemental cross-sections.
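The weighted sum can be made concrete with the mixture rule for mass attenuation coefficients, (μ/ρ)_mix = Σ wᵢ (μ/ρ)ᵢ, where wᵢ are elemental mass fractions. The sketch below applies it to water at 1 MeV. The elemental coefficients are approximate values quoted from memory of NIST tables and should be checked against the database before any real use; the function names are introduced here.

```python
def mixture_mass_attenuation(mass_fractions, elemental_mu_rho):
    """Mixture rule: sum of w_i * (mu/rho)_i over elements, in cm^2/g."""
    total = sum(mass_fractions.values())
    if abs(total - 1.0) > 1e-3:
        raise ValueError("mass fractions should sum to 1")
    return sum(w * elemental_mu_rho[el] for el, w in mass_fractions.items())


def linear_attenuation(mu_rho_cm2_g, density_g_cm3):
    """Macroscopic (linear) coefficient in 1/cm: density scales mu/rho."""
    return mu_rho_cm2_g * density_g_cm3


# Approximate elemental mu/rho at 1 MeV (cm^2/g); illustrative values only.
MU_RHO_1MEV = {"H": 0.1263, "O": 0.06372}

# Water by mass: about 11.19% hydrogen and 88.81% oxygen.
WATER = {"H": 0.1119, "O": 0.8881}

if __name__ == "__main__":
    mu_rho = mixture_mass_attenuation(WATER, MU_RHO_1MEV)
    print(mu_rho, linear_attenuation(mu_rho, 1.0))
```

The same function works for any tissue once its mass fractions and the elemental coefficients at the energy of interest are supplied; density then enters only through the linear coefficient.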

Reference Data Tables

Table 1: Density and Elemental Composition of Reference Tissues (Mass Fractions)

| Tissue Type | Density (g/cm³) | H | C | N | O | Other |

|---|---|---|---|---|---|---|

| Skeletal Muscle (ICRP 110) | 1.05 | 0.102 | 0.123 | 0.035 | 0.729 | Na, P, S, K (0.011) |

| Adipose Tissue (ICRP 110) | 0.95 | 0.114 | 0.598 | 0.007 | 0.278 | Na, P, S (0.003) |

| Cortical Bone (ICRP 110) | 1.92 | 0.047 | 0.144 | 0.042 | 0.435 | P, Ca, Mg (0.332) |

| Lung (Inflated, ICRP 110) | 0.26 | 0.103 | 0.105 | 0.031 | 0.749 | Na, P, S, Cl (0.012) |

| Brain (White Matter) | 1.04 | 0.107 | 0.145 | 0.022 | 0.712 | P, S, Cl, K (0.014) |

Table 2: Photon Interaction Cross-Section Data for Water (Analog for Soft Tissue) at Key Energies

| Energy (MeV) | Photoelectric (barns/atom) | Compton (barns/atom) | Pair Production (barns/atom) | Total μ/ρ (cm²/g) |

|---|---|---|---|---|

| 0.01 | 3.86E+02 | 5.12 | 0.00 | 5.33 |

| 0.1 | 1.55E-01 | 3.81E-01 | 0.00 | 0.171 |

| 1 | 1.58E-03 | 6.15E-02 | 0.00 | 0.0706 |

| 10 | 1.41E-05 | 2.48E-02 | 1.34E-02 | 0.0221 |

Data sourced from NIST XCOM database.
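To connect the tabulated coefficients to transport quantities, the sketch below converts the "Total μ/ρ" column into a mean free path and an uncollided (primary) fraction at depth. It assumes a water density of 1.0 g/cm³; the function names are introduced here for illustration.

```python
import math


def mean_free_path_cm(mass_atten_cm2_g, density_g_cm3):
    """Mean free path = 1 / (rho * mu/rho)."""
    return 1.0 / (mass_atten_cm2_g * density_g_cm3)


def uncollided_fraction(mass_atten_cm2_g, density_g_cm3, depth_cm):
    """Fraction of photons reaching a depth without interacting."""
    return math.exp(-mass_atten_cm2_g * density_g_cm3 * depth_cm)


# Total mu/rho values for water taken from the table above (cm^2/g).
TOTAL_MU_RHO_WATER = {0.01: 5.33, 0.1: 0.171, 1.0: 0.0706, 10.0: 0.0221}

if __name__ == "__main__":
    for energy_mev, mu_rho in TOTAL_MU_RHO_WATER.items():
        print(energy_mev,
              mean_free_path_cm(mu_rho, 1.0),
              uncollided_fraction(mu_rho, 1.0, 10.0))
```

In an MC code the same total coefficient is what the free-path sampling step uses, so these mean free paths set the typical step length between photon interactions.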

Experimental Protocols for Parameter Determination

Protocol for Experimental Density Measurement via Pycnometry

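Objective: To determine the density of a small, irregular tissue sample. Helium pycnometry measures the gas-inaccessible (skeletal) volume, so the result is the true density rather than a bulk density that includes open pores.

Materials: Helium pycnometer, microbalance, surgical tools, sample vials.

Procedure:

- Calibrate the pycnometer cell volume using a standard sphere of known volume.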

- Excise a tissue sample (~1 cm³), blot surface fluid, and immediately place in a sealed vial.

- Weigh the empty sample cup (m_cup).

- Transfer sample to cup and weigh (m_cup+sample).

- Place cup in pycnometer chamber. The instrument measures the pressure change of helium as it expands into the sample cell and then into a reference volume.

- The system software calculates the sample's solid volume (V_sample) by subtracting the displaced gas volume from the known cell volume.

- Calculate density: ρ = (m_cup+sample - m_cup) / V_sample.

- Perform in triplicate and average, reporting standard deviation.
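The last two steps amount to a short calculation, sketched here with hypothetical triplicate readings (the masses and volumes are invented for illustration).

```python
import statistics


def density_g_cm3(m_cup_g, m_cup_plus_sample_g, v_sample_cm3):
    """rho = (m_cup+sample - m_cup) / V_sample."""
    return (m_cup_plus_sample_g - m_cup_g) / v_sample_cm3


# Hypothetical triplicate readings: (m_cup, m_cup+sample, V_sample).
READINGS = [(5.000, 6.040, 1.000), (5.000, 6.060, 1.000), (5.000, 6.050, 1.000)]

densities = [density_g_cm3(*reading) for reading in READINGS]
mean_density = statistics.mean(densities)
sd_density = statistics.stdev(densities)  # sample standard deviation (n - 1)

if __name__ == "__main__":
    print(f"{mean_density:.3f} +/- {sd_density:.3f} g/cm^3")
```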

Protocol for Elemental Composition via Inductively Coupled Plasma Mass Spectrometry (ICP-MS)

Objective: To quantify trace element concentrations in digested tissue samples.

Materials: ICP-MS instrument, high-purity nitric acid, microwave digester, precision pipettes, certified elemental standards.

Procedure:

- Digestion: Accurately weigh ~0.5g of lyophilized, homogenized tissue into a Teflon digestion vessel. Add 5 mL of concentrated HNO3. Digest using a microwave system with a ramped temperature program (e.g., to 180°C over 20 min, hold for 15 min).

- Dilution: After cooling, quantitatively transfer digestate to a 50 mL volumetric flask and dilute to mark with ultrapure water (18.2 MΩ·cm). A further 1:10 or 1:100 dilution may be required based on expected concentrations.

- Calibration: Prepare a series of calibration standards (e.g., 1, 10, 100, 1000 ppb) from multi-element stock solutions for target elements (P, S, Ca, Fe, Zn, etc.).

- Analysis: Introduce standards and samples into the ICP-MS via a peristaltic pump and nebulizer. The instrument atomizes and ionizes the sample; ions are separated by mass/charge ratio and counted.

- Quantification: Generate calibration curves (signal intensity vs. concentration) for each element. Use internal standards (e.g., Scandium-45) to correct for matrix effects and instrumental drift. Calculate elemental mass in original sample and convert to mass fraction.
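The quantification step can be sketched as a linear calibration followed by a back-calculation to the original sample. This is a simplified illustration: it assumes a linear response, treats ppb as µg/L (reasonable for dilute aqueous solutions), and uses hypothetical signal ratios (analyte counts divided by internal-standard counts). The function names are introduced here.

```python
def fit_line(xs, ys):
    """Ordinary least-squares line y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def concentration_from_signal(signal_ratio, slope, intercept):
    """Invert the calibration curve to get concentration in ug/L."""
    return (signal_ratio - intercept) / slope


def mass_fraction(conc_ug_per_l, final_volume_l, dilution_factor, sample_mass_g):
    """Element mass in the original sample divided by the sample mass."""
    element_mass_ug = conc_ug_per_l * final_volume_l * dilution_factor
    return element_mass_ug * 1.0e-6 / sample_mass_g


if __name__ == "__main__":
    standards_ppb = [1.0, 10.0, 100.0, 1000.0]
    ratios = [0.012, 0.030, 0.210, 2.010]  # hypothetical internal-standard-corrected signals
    slope, intercept = fit_line(standards_ppb, ratios)
    conc = concentration_from_signal(0.210, slope, intercept)
    # 0.5 g sample, 50 mL flask, further 1:10 dilution.
    print(conc, mass_fraction(conc, 0.050, 10.0, 0.5))
```

For the example numbers, 100 µg/L in the measured solution corresponds to 50 µg in the original 0.5 g sample, i.e. a mass fraction of 1e-4.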

Visualizations

Diagram 1: Data flow for MC particle transport.

Diagram 2: Workflow for determining tissue composition.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Tissue Property Experiments

| Item | Function | Example/Note |

|---|---|---|

| Helium Pycnometer | Measures the true volume (and thus density) of porous or irregular solids by gas displacement. | AccuPyc II (Micromeritics); uses inert, non-adsorbing He gas. |

| Inductively Coupled Plasma Mass Spectrometer (ICP-MS) | Detects and quantifies trace elemental concentrations at parts-per-billion levels in digested solutions. | Agilent 7900 or PerkinElmer NexION; requires high-purity argon gas. |

| Microwave Digestion System | Rapidly and completely dissolves organic tissue matrices using controlled heat and pressure with acids. | CEM Mars 6 or Milestone Ethos UP; uses Teflon vessels. |

| High-Purity Nitric Acid (TraceMetal Grade) | Primary digestion acid for ICP-MS; oxidizes organic matter and keeps elements in solution. | Fisher Optima Grade or an equivalent Sigma-Aldrich trace-analysis grade; minimizes background contamination. |

| Certified Multi-Element Standard Solutions | Used to calibrate the ICP-MS for accurate quantification across the periodic table. | Inorganic Ventures; supplied with certificates of analysis for concentration. |

| Cryomill/Homogenizer | Pulverizes and homogenizes frozen tissue to a fine powder for representative sub-sampling. | SPEX SamplePrep 6870 Freezer/Mill; uses liquid nitrogen to prevent degradation. |

| NIST Standard Reference Material (SRM) | Certified tissue (e.g., SRM 1577c Bovine Liver) used for quality control and method validation. | Provides benchmark values for composition to assess analytical accuracy. |

| Monte Carlo Code with Tissue Libraries | Software that implements particle transport using cross-section databases and tissue parameters. | GEANT4, MCNP, FLUKA, EGSnrc; require correctly formatted input files. |

This whitepaper serves as a core technical guide within a broader thesis investigating Monte Carlo (MC) simulations for modeling particle-tissue interactions. The central challenge in predictive radiobiology and targeted drug development lies in accurately translating macroscopic absorbed dose (Gy) into microscopic spatial patterns of energy deposition. This document bridges that gap by detailing the physical principles of Track Structure and Linear Energy Transfer (LET), which are fundamental inputs for advanced MC codes like Geant4-DNA, TOPAS-nBio, and FLUKA. For researchers in radiation oncology and pharmaceutical development, mastering these concepts is critical for predicting biological outcomes, from DNA lesion complexity to the efficacy of radiopharmaceuticals.

Core Concepts: From Dose to Discrete Events

Macroscopic Dose: Absorbed dose is an average energy deposition per unit mass, a bulk quantity that fails to describe the stochastic, heterogeneous nature of particle interactions at cellular and sub-cellular scales.

Microscopic Track Structure: This refers to the detailed, stochastic spatial distribution of inelastic interactions (ionizations and excitations) along and around the path of a single charged particle. It is the explicit output of track-structure MC simulations.

Linear Energy Transfer (LET): Defined as the average energy locally imparted to the medium per unit track length by a charged particle (keV/µm). LET serves as a crucial, albeit simplified, descriptor of radiation quality, correlating with the density of ionizations along a track.

- LET∞ (Unrestricted): Considers all energy transfers.

- LETΔ (Restricted): Considers only energy transfers below a cutoff Δ, excluding high-energy δ-rays, providing a more localized measure.

The Monte Carlo Link: Track-structure MC methods simulate individual interaction cross-sections to build up a stochastic picture of energy deposition, explicitly modeling secondary electron (δ-ray) spectra. LET is a derived statistical quantity from these simulations.
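As a minimal illustration of how LET can be derived from simulated track data, the sketch below sums the energy transfers recorded along a track segment and divides by the segment length, optionally excluding transfers at or above a cutoff Δ. The event list is hypothetical, and real track-structure codes score these quantities with more care (for example in how secondary electron energies are attributed).

```python
def let_kev_per_um(energy_transfers_kev, track_length_um, cutoff_kev=None):
    """LET over a track segment, in keV/um.

    cutoff_kev=None gives the unrestricted LET; otherwise only transfers
    below the cutoff are counted (restricted LET).
    """
    if cutoff_kev is None:
        total = sum(energy_transfers_kev)
    else:
        total = sum(e for e in energy_transfers_kev if e < cutoff_kev)
    return total / track_length_um


if __name__ == "__main__":
    # Hypothetical energy transfers (keV) along a 1 um segment; 0.4 keV is a delta-ray.
    events = [0.02, 0.05, 0.03, 0.40, 0.01]
    print(let_kev_per_um(events, 1.0))                  # unrestricted
    print(let_kev_per_um(events, 1.0, cutoff_kev=0.1))  # restricted, cutoff 100 eV
```

The gap between the two values shows how much of the energy loss is carried away from the track core by energetic secondary electrons.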

Quantitative Data on Radiation Qualities

Table 1: Characteristic LET and Track Structure Parameters for Common Radiation Types

| Radiation Type | Particle & Energy | Typical LET∞ (keV/µm) in Water | Approx. Mean Interaction Spacing (nm) | Primary Track Width (nm, core) | Key Biological Implication |

|---|---|---|---|---|---|

| Photons / Electrons (Low-Energy) | 250 keV e⁻ | ~0.3 | ~1000 | Diffuse, wide | Sparse, isolated lesions; high repairability. |

| Protons | 150 MeV (Therapeutic) | ~0.5 | ~600 | 1-10 | Moderately dense tracks; pronounced Bragg peak. |

| Carbon Ions | 270 MeV/u (Therapeutic) | ~15 | ~20 | ~10 | Very dense core; complex clustered damage. |

| Alpha Particles | 5 MeV (from Rn decay) | ~90 | < 10 | < 0.1 | Extremely dense, short range; high RBE. |

| Neutrons (Fast) | 1 MeV (indirect) | Spectrum via recoil protons | Variable | Variable | Mixed field; produces proton tracks of varying LET. |

Table 2: Key MC Codes for Track Structure Simulation

| Code Name | Primary Application | Scale Modeled | Key Strength | Typical Input/Output |

|---|---|---|---|---|

| Geant4-DNA | Nano-/micro-dosimetry | Physics & Chemistry (ps-ns) | Open-source, detailed processes. | Particle type/energy → Interaction points, species yields. |

| TOPAS-nBio | Radiobiology extension | Physics to Biology (ps-hours) | User-friendly TOPAS interface. | Particle track → DNA damage score, cell survival. |

| PARTRAC | DNA damage modeling | Physics to Chromatin (ps-min) | Integrated DNA structure model. | Radiation field → DSB yield and complexity. |

| FLUKA | Mixed-field dosimetry | Macroscopic to microscopic | High-energy to NMRC coupling. | Complex field → Dose, LET spectra. |

Experimental Protocols for Validation

Validating MC-predicted track structure and LET requires correlating simulation with physical and biological experiments.

Protocol 1: Nanodosimetry with Track-Etch Detectors

- Objective: Measure the microscopic pattern of ionizations.

- Materials: CR-39 (allyl diglycol carbonate) plastic, etching solution (NaOH/KOH), microscope.

- Method:

- Irradiate CR-39 detector with charged particles of known type/energy.

- Chemically etch the plastic. Material is removed faster along the radiation-damaged latent track.

- Under a microscope, measure the resulting etch-pit geometry (diameter, length).

- Correlate pit morphology (conical shape for high-LET) with simulated ionization density and LET.

Protocol 2: Determining Relative Biological Effectiveness (RBE)

- Objective: Link LET to biological outcome, validating biological MC models.

- Materials: Cell line (e.g., V79, HSG), clonogenic assay reagents, particle irradiation facility.

- Method:

- Irradiate cell monolayers with reference radiation (e.g., 250 kVp X-rays) and test particles (e.g., protons, C-ions) across a dose range.

- For each beam, perform a clonogenic survival assay: trypsinize, plate at low density, incubate for 1-2 weeks, fix/stain colonies.

- Plot survival curves (log(SF) vs. dose). Calculate RBE at a specific survival level (e.g., SF=0.1): RBE = Dref / Dtest.

- Plot experimental RBE vs. MC-simulated LET to establish the relationship for the model system.
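The RBE calculation in this protocol can be sketched as follows, assuming the survival curves have already been fitted with a linear-quadratic model, SF = exp(-(αD + βD²)). The linear-quadratic form and the function names are assumptions for illustration; the protocol itself only requires reading the iso-effective doses off the measured curves.

```python
import math


def dose_at_survival(alpha, beta, sf):
    """Dose (Gy) at which exp(-(alpha*D + beta*D**2)) equals the survival fraction sf."""
    target = -math.log(sf)
    if beta == 0:
        return target / alpha
    # Positive root of beta*D^2 + alpha*D - target = 0
    return (-alpha + math.sqrt(alpha ** 2 + 4.0 * beta * target)) / (2.0 * beta)


def rbe(alpha_ref, beta_ref, alpha_test, beta_test, sf=0.1):
    """RBE = D_ref / D_test at the same survival level."""
    d_ref = dose_at_survival(alpha_ref, beta_ref, sf)
    d_test = dose_at_survival(alpha_test, beta_test, sf)
    return d_ref / d_test
```

Because the curves generally have different shapes, the RBE obtained this way depends on the chosen survival level, so the level (here SF = 0.1 by default) should always be reported with the value.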

Protocol 3: Microscopic Imaging of DNA Damage Foci

- Objective: Visualize spatial correlation of damage with particle traversal.

- Materials: Immunofluorescence-labeled cells (γ-H2AX, 53BP1 antibodies), confocal microscope, particle microbeam.

- Method:

- Irradiate cell nuclei precisely using a particle microbeam (e.g., 1-10 α-particles/nucleus).

- Fix, permeabilize, and immuno-stain for DNA double-strand break (DSB) markers.

- Image with confocal microscopy. Analyze linear clusters (foci) along simulated particle tracks.

- Quantify foci density and size, comparing to predicted ionization clusters from track-structure simulations.

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Research Reagents and Solutions

| Item/Category | Example Product/Specification | Primary Function in Research Context |

|---|---|---|

| Track-Etch Material | CR-39 Plastic Sheets | Records latent particle tracks for visualization and nanodosimetric measurement. |

| Cell Culture for RBE | V79 (Chinese Hamster Lung) Cells | Standardized, high-plating-efficiency cell line for clonogenic survival assays. |

| DNA Damage Stain | Anti-γ-H2AX (Phospho-S139) Antibody | Immunofluorescence marker for microscopic visualization of DNA double-strand breaks. |

| Monte Carlo Code | Geant4-DNA Toolkit | Open-source software for simulating particle track structure in liquid water. |

| Microbeam System | Particle Microbeam (e.g., SNAKE, GSI) | Allows targeted irradiation of single cells or sub-cellular compartments for precise correlation. |

| LET Spectrometer | Silicon Semiconductor Detector (e.g., ΔE-E telescope) | Measures energy loss of individual particles to derive experimental LET spectra. |

| Biological Target Model | DNA Geometry Packages (e.g., PARTRAC Nucleosome Model) | Provides structural data (atomic coordinates) for MC simulation of direct DNA damage. |

Visualizing Concepts and Workflows

Title: Relationship Between Dose, Track Structure, LET, and Biology

Title: Monte Carlo Track Structure Simulation Workflow

Implementing Monte Carlo Simulations: Codes, Workflows, and Biomedical Use Cases

Within the broader thesis on Monte Carlo (MC) methods for simulating particle interactions in biological tissue, the selection of an appropriate simulation toolkit is foundational. This guide provides an in-depth technical comparison of four major, general-purpose MC codes: Geant4, MCNP, PENELOPE/PRIMO, and TOPAS. These toolkits are indispensable for research in radiation therapy, radiobiology, medical imaging, and drug development, enabling the precise tracking of particle transport and energy deposition at macroscopic to microscopic scales.

Core Toolkit Architectures and Methodologies

Geant4

Geant4 (Geometry and Tracking) is an open-source C++ toolkit developed and maintained by a worldwide collaboration. Its object-oriented architecture provides unparalleled flexibility. Users build their simulation by composing geometry, defining physics processes, and creating particle sources from modular components. It offers a vast library of physics models covering electromagnetic and hadronic interactions from eV to TeV energies, including specialized packages for low-energy physics (Livermore, PENELOPE) and optical photon transport.

MCNP

MCNP (Monte Carlo N-Particle), developed at Los Alamos National Laboratory, is a legacy code written in FORTRAN. It uses a continuous-energy generalized geometry system. Its primary strengths are its mature, validated nuclear data libraries for neutrons, photons, and electrons, and its efficient transport algorithms. It is widely used for radiation shielding, criticality safety, and reactor physics, with growing applications in medical physics via its MCNPX and MCNP6 variants.

PENELOPE/PRIMO

PENELOPE (Penetration and Energy Loss of Positrons and Electrons) is a FORTRAN-based code system and set of physics models designed for simulating coupled electron-photon transport in the 50 eV to 1 GeV range with high accuracy. It uses a mixed simulation scheme that classifies interactions as "hard" (simulated individually) or "soft" (condensed) for computational efficiency. PRIMO is a specialized, user-friendly software package that incorporates the PENELOPE engine within a graphical interface, pre-configured for clinical linear accelerator simulation and voxelized patient dose calculation.

TOPAS

TOPAS (TOol for PArticle Simulation) is an open-source extension layered atop Geant4. Written in C++, it provides a scripting interface (using a custom parameter system) that abstracts much of the Geant4 coding complexity. It is specifically designed for translational research in particle therapy and medical physics, offering built-in components for beam lines, patients, and scoring. It combines Geant4's power with significantly reduced development time for complex simulations.

Quantitative Comparison of Toolkit Capabilities

Table 1: Core Characteristics and Technical Specifications

| Feature | Geant4 | MCNP6 | PENELOPE/PRIMO | TOPAS |

|---|---|---|---|---|

| Primary Dev. Language | C++ | FORTRAN | FORTRAN/C++ | C++ (Geant4 wrapper) |

| License & Cost | Open Source (Free) | Proprietary (Paid) | PENELOPE: Free / PRIMO: Free | Open Source (Free) |

| Primary Particle Types | e-/e+, γ, p, n, ions, μ, π, etc. | n, γ, e- (primary focus) | e-, e+, γ | All Geant4 particles |

| Typical Energy Range | eV – TeV | Thermal – GeV | 50 eV – 1 GeV | eV – TeV (inherited) |

| Key Strength | Flexibility, breadth of physics | Validated nuclear data, neutronics | Accuracy in e-/γ transport | Ease of use in medical physics |

| Typical Application | HEP, space, medical physics | Shielding, reactors, detectors | Radiotherapy dose calculation | Particle therapy, translational research |

| Learning Curve | Very Steep | Steep | Moderate (PRIMO) / Steep (PENELOPE) | Moderate for medical physics |

Table 2: Performance and Usability in Tissue Research Context

| Aspect | Geant4 | MCNP6 | PENELOPE/PRIMO | TOPAS |

|---|---|---|---|---|

| Voxelized Geometry | Yes (via GDCM, DICOM) | Yes (lattice/universe system) | Yes (in PRIMO) | Yes (native, optimized) |

| DNA/Damage Scoring | Yes (via Geant4-DNA) | Limited | Possible with customization | Yes (via extensions) |

| Pre-built Medical Beam Lines | No (must be coded) | No | Yes (in PRIMO for linacs) | Yes (extensive library) |

| Validation in Medical Physics | Extensive, ongoing | Strong for neutron/photon | Excellent for kV/MV beams | Extensive for proton therapy |

| User Interface | Code/script | Text input file | GUI (PRIMO) / Text input | Text parameter files |

Detailed Experimental Protocol: Benchmarking Dose Deposition in a Water Phantom

A critical step in any MC study for tissue research is validating the toolkit's output against measured or benchmark data. Below is a generalized protocol for benchmarking absorbed dose in a water phantom.

1. Objective: To validate the electromagnetic physics models of a chosen MC toolkit by simulating depth-dose curves in a water phantom and comparing to trusted reference data (e.g., IAEA TRS-398).

2. Materials & Software:

- MC Toolkit (Geant4, MCNP, PRIMO, or TOPAS installation).

- Reference beam data for a 6 MV photon beam or a 150 MeV proton beam.

- Computing cluster or high-performance workstation.

3. Methodology:

- Geometry Definition: Construct a cubic water phantom (e.g., 40x40x40 cm³). Define a scoring plane along the central axis for depth-dose measurement.

- Source Definition: Model a clinical radiotherapy source.

- For Photons: Define a phase-space file or a virtual linac head above the phantom.

- For Protons: Define a pencil beam with the nominal energy, an appropriate energy spread, and a lateral profile.

- Physics List Selection: Activate the relevant electromagnetic physics processes. For low-energy electrons in water, ensure models like "Livermore" or "PENELOPE" (in Geant4/TOPAS) or appropriate cross-section libraries (in MCNP) are selected.

- Scoring Setup: Implement a "dose scorer" to record energy deposited per unit mass in thin slabs (e.g., 1 mm or 2 mm thick) along the beam axis.

- Simulation Execution: Run a sufficient number of primary particle histories to achieve a statistical uncertainty of <0.5% in the high-dose region.

- Data Analysis: Normalize the simulated depth-dose curve to its maximum value. Calculate the percentage difference at key points (e.g., depth of maximum dose, R50) against the reference data. Use gamma-index analysis (e.g., 2%/2mm criteria) for a composite evaluation.

4. Expected Output: A depth-dose curve (e.g., Bragg peak for protons, exponential fall-off for photons) that aligns with reference data within accepted tolerances, confirming the accuracy of the toolkit's physics models for water (a tissue surrogate).
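The gamma-index comparison named in the data-analysis step can be sketched in one dimension as below. This is a simplified, global-normalization version that searches only the sampled points of the evaluated curve (no interpolation); the function names and default criteria are assumptions for illustration, not a clinical implementation.

```python
import math


def gamma_1d(ref_pos, ref_dose, eval_pos, eval_dose, dose_tol=0.02, dta_mm=2.0):
    """Gamma value for every reference point of a 1D depth-dose curve.

    dose_tol is a fraction of the maximum reference dose (global normalization);
    positions are in mm.
    """
    dose_norm = dose_tol * max(ref_dose)
    gammas = []
    for x_ref, d_ref in zip(ref_pos, ref_dose):
        # Minimum combined dose/distance deviation over all evaluated samples
        gamma = min(
            math.sqrt(((d_ev - d_ref) / dose_norm) ** 2 + ((x_ev - x_ref) / dta_mm) ** 2)
            for x_ev, d_ev in zip(eval_pos, eval_dose)
        )
        gammas.append(gamma)
    return gammas


def pass_rate(gammas):
    """Percentage of points with gamma <= 1."""
    return 100.0 * sum(g <= 1.0 for g in gammas) / len(gammas)
```

In practice the evaluated curve is usually interpolated to a finer grid before the search, since a coarse grid can overestimate gamma in steep gradients such as the distal edge of a Bragg peak.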

Logical Workflow for Toolkit Selection in Tissue Research

Title: Decision Workflow for Selecting a Monte Carlo Toolkit

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational & Data Resources for MC Tissue Research

| Item | Function in Research |

|---|---|

| High-Performance Computing (HPC) Cluster | Enables parallel processing of billions of particle histories in a feasible time, essential for low-uncertainty results in complex geometries. |

| Anatomically Realistic Voxel Phantom (e.g., ICRP 110) | Digital models of human anatomy derived from CT/MRI, used as simulation geometry to estimate organ doses and study radiation effects in specific tissues. |

| Reference Clinical Beam Data | Benchmark datasets (depth-dose, profiles) for standard accelerator beams, required for validating and tuning the MC simulation's source model. |

| Tissue Composition Database (e.g., ICRU 44) | Tables of elemental mass fractions and densities for various tissues (muscle, bone, lung, etc.), critical for defining material properties in the simulation. |

| Phase-Space File | A pre-recorded file containing the state (energy, position, direction) of particles crossing a plane, used as a validated source to save computation time. |

| DICOM RT Suite | Standard medical images (CT) and structure sets, used to import patient-specific geometries and contours for treatment planning studies. |

| Statistical Analysis Package (e.g., Python SciPy, R) | Software for post-processing simulation output: statistical comparison, gamma-index analysis, curve fitting, and data visualization. |

| Cross-Section Library (e.g., ENDF, EPDL97) | Comprehensive databases of particle interaction probabilities with different elements, the fundamental data driving the MC physics. |

In the context of a broader thesis on Monte Carlo methods for modeling particle interactions (e.g., photons, electrons, protons) in biological tissue, geometric phantoms serve as the foundational digital representation of anatomy. Accurate simulation of radiation dose deposition, light propagation in tissues, or radiopharmaceutical biodistribution depends fundamentally on the quality of this anatomical model. The choice between voxelized and parameterized phantoms directly impacts the accuracy, computational efficiency, and flexibility of the Monte Carlo simulation.

Core Model Architectures: Definitions and Technical Foundations

Voxelized Phantoms are derived from segmented medical imaging data (CT, MRI). The anatomy is discretized into a three-dimensional grid of volume elements (voxels), each assigned a specific tissue or material index. This creates a highly realistic, non-uniform model that precisely mirrors the scanned anatomy.

Parameterized (or Mathematical) Phantoms use simple geometric primitives (e.g., ellipsoids, cylinders, cones) and analytical formulas to describe anatomical boundaries. Organs and structures are defined by equations with adjustable parameters (center coordinates, radii, angles), offering a smooth, continuous representation.

Quantitative Comparison of Model Characteristics

The following table summarizes the core quantitative and qualitative differences critical for Monte Carlo applications in tissue research.

Table 1: Comparison of Phantom Model Characteristics for Monte Carlo Simulation

| Characteristic | Voxelized Phantom | Parameterized Phantom |

|---|---|---|

| Anatomical Basis | Direct segmentation of CT/MRI data; patient-specific. | Based on reference anatomical data (e.g., ICRP publications); population-averaged. |

| Spatial Representation | Discrete, stair-stepped boundaries at high resolution. | Continuous, smooth surfaces defined by equations. |

| Model Flexibility | Low; morphology is fixed to the source image. | High; organ size, shape, and position can be altered via parameters. |

| Computational Memory | High (scales with resolution, e.g., 512³ voxels). | Very Low (stores only equation coefficients). |

| Monte Carlo Navigation | Complex; requires boundary-crossing logic per voxel. | Simple; direct ray-geometry intersection calculations. |

| Typical Use Case | Patient-specific dosimetry, validation studies. | Protocol development, comparative studies, investigating anatomical variability. |

| Common Formats | DICOM, RAW matrix. | BREP (Boundary Representation), CAD scripts, PHITS/EGS++ native formats. |

Experimental Protocols for Phantom Implementation & Evaluation

Protocol 1: Constructing a Voxelized Phantom from Clinical CT

- Image Acquisition: Obtain a high-resolution (≤1 mm slice thickness) DICOM CT series. Ensure the scan covers the entire volume of interest.

- Segmentation: Use a software toolkit (e.g., 3D Slicer, ITK-SNAP) to manually or semi-automatically label voxels according to tissue type. Assign a unique integer index to each tissue (e.g., 1=lung, 2=bone, 3=soft tissue).

- Material Assignment: Create a correspondence table linking each tissue index to specific material properties (density, elemental composition) relevant to your Monte Carlo code (e.g., Geant4, MCNP, GATE).

- Grid Export: Convert the segmented label map into a binary or ASCII file format readable by your Monte Carlo engine, preserving the 3D matrix dimensions and voxel resolution.
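The material-assignment step can be illustrated with a small lookup table and a consistency check. The indices follow the example above (1=lung, 2=bone, 3=soft tissue); the densities are placeholder values chosen for illustration and should be replaced with values from a tissue composition reference. The nested-list label map and function name are likewise assumptions.

```python
from collections import Counter

# Placeholder densities in g/cm^3 (illustrative only, not taken from the source).
MATERIALS = {
    1: ("lung", 0.26),
    2: ("bone", 1.92),
    3: ("soft_tissue", 1.04),
}


def tissue_masses(labels, voxel_size_mm, materials=MATERIALS):
    """Mass in grams per tissue for a 3D nested-list label map [z][y][x]."""
    dx, dy, dz = voxel_size_mm
    voxel_cm3 = dx * dy * dz / 1000.0  # mm^3 -> cm^3
    counts = Counter(v for plane in labels for row in plane for v in row)
    # Every label in the phantom must have an entry in the correspondence table.
    missing = set(counts) - set(materials)
    if missing:
        raise KeyError(f"labels without a material: {sorted(missing)}")
    return {materials[i][0]: n * voxel_cm3 * materials[i][1] for i, n in counts.items()}
```

Checking for unmapped labels before export catches a common failure mode, in which a stray segmentation index silently becomes vacuum or a default material inside the Monte Carlo engine.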

Protocol 2: Implementing a Parameterized Phantom (e.g., Ellipsoidal Torso)

- Reference Definition: Define a world coordinate origin (e.g., center of the body). Reference standard anatomical dimensions (e.g., from ICRP Publication 110).

- Organ Modeling: For each organ, define its bounding surface using a combination of primitives. Example (Liver as ellipsoid):

- Equation: (x-x0)²/a² + (y-y0)²/b² + (z-z0)²/c² = 1

- Parameters: (x0, y0, z0) = center coordinates; (a, b, c) = semi-axis lengths.

- Hierarchical Placement: Define organs relative to body landmarks. Nesting (e.g., heart inside lung cavity) can be managed via Boolean operations (union, intersection, subtraction).

- Code Integration: Implement the geometry directly within the Monte Carlo code's geometry constructor or use a dedicated scripting language (e.g., GDML for Geant4). Ensure each organ volume is assigned its correct material properties.
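To make the ellipsoid definition concrete, the sketch below tests whether a point lies inside an ellipsoidal organ and computes where a straight particle path enters and leaves it, which is the kind of ray-geometry intersection a Monte Carlo navigator performs. The function names are hypothetical and the code is independent of any specific toolkit.

```python
import math


def inside_ellipsoid(point, center, semi_axes):
    """True if the point satisfies (x-x0)^2/a^2 + (y-y0)^2/b^2 + (z-z0)^2/c^2 <= 1."""
    return sum(((p - c) / s) ** 2 for p, c, s in zip(point, center, semi_axes)) <= 1.0


def ray_ellipsoid_distances(origin, direction, center, semi_axes):
    """Return (t_in, t_out) such that origin + t*direction lies on the surface.

    Returns None if the line misses the ellipsoid. Negative values mean the
    intersection lies behind the origin.
    """
    # Scale coordinates so the ellipsoid becomes a unit sphere at the origin.
    o = [(oi - ci) / si for oi, ci, si in zip(origin, center, semi_axes)]
    d = [di / si for di, si in zip(direction, semi_axes)]
    a = sum(x * x for x in d)
    b = 2.0 * sum(x * y for x, y in zip(o, d))
    c = sum(x * x for x in o) - 1.0
    disc = b * b - 4.0 * a * c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    return ((-b - root) / (2.0 * a), (-b + root) / (2.0 * a))


# A ray along +x hitting an ellipsoid with semi-axes (2, 1, 1) centered at the origin.
hit = ray_ellipsoid_distances((-10.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 0.0), (2.0, 1.0, 1.0))
# hit == (8.0, 12.0): the ray enters at x = -2 and exits at x = +2
```

Because the scaling is linear, the parameter t is the same in scaled and unscaled coordinates, so the returned distances apply directly to the original ray when the direction vector has unit length.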

Protocol 3: Comparative Dosimetry Experiment

- Simulation Setup: Model the same radiation source (e.g., a 6 MV photon beam or a distributed radionuclide) in two otherwise identical Monte Carlo simulations that differ only in phantom geometry (voxelized vs. parameterized, at equivalent scale).

- Dose Scoring: Define a consistent 3D dose scoring mesh (voxelized tally) encompassing the target region in both simulations.

- Execution: Run simulations with a sufficient number of particle histories (e.g., 10⁸) to achieve a statistical uncertainty of <1% in regions of interest.

- Analysis: Calculate dose-volume histograms (DVHs) for critical organs. Compute global metrics like the 3D gamma index (e.g., 2%/2mm criteria) to quantify the spatial agreement of dose distributions between the two phantom models.
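A cumulative dose-volume histogram for one organ can be sketched as below. The function assumes all voxels have equal volume and that the organ's voxel doses have already been extracted from the scoring mesh into a flat list; both the name and the input format are illustrative assumptions.

```python
def cumulative_dvh(organ_doses, dose_levels):
    """Percentage of organ volume receiving at least each dose level.

    organ_doses: flat list of voxel doses (Gy) inside the organ, equal voxel volumes assumed.
    dose_levels: dose values (Gy) at which to evaluate the histogram.
    """
    n = len(organ_doses)
    return [100.0 * sum(d >= level for d in organ_doses) / n for level in dose_levels]


# Four hypothetical voxel doses evaluated at three dose levels.
dvh = cumulative_dvh([1.0, 2.0, 3.0, 4.0], [0.0, 2.5, 5.0])
# dvh == [100.0, 50.0, 0.0]
```

Computing the DVH with identical dose levels for both phantom models allows the two curves to be subtracted directly, which complements the voxel-by-voxel gamma comparison.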

Visualization of Phantom Construction Workflows

Diagram Title: Voxelized Phantom Construction Pipeline

Diagram Title: Parameterized Phantom Modeling Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Data Resources for Phantom Development

| Tool/Resource | Category | Primary Function in Phantom Development |

|---|---|---|

| 3D Slicer | Open-Source Software | Platform for medical image segmentation and 3D model generation from DICOM data. |

| ITK-SNAP | Open-Source Software | Specialized tool for semi-automatic segmentation of anatomical structures in 3D. |

| ICRP Publication 110 | Reference Data | Provides reference adult male and female voxel phantom datasets and tissue compositions. |

| Geant4 | Monte Carlo Toolkit | Provides flexible geometry packages (CSG, BREP) for implementing both phantom types directly in C++ code. |

| GATE/OpenGATE | Monte Carlo Platform | Built on Geant4, includes specialized features for voxelized import and patient-specific dosimetry. |

| MCNP / PHITS | Monte Carlo Code | Supports lattice and universe structures for voxelized phantoms and native combinatorial geometry for parameterized models. |

| Python (NumPy, PyVista) | Programming Library | For scripting custom phantom creation, manipulating voxel arrays, and converting between file formats. |

| NRRD/NIfTI Format | File Format | Common, standardized formats for storing and exchanging labeled voxel phantom data. |

Defining Source Characteristics for Radiotherapy and Imaging Beams

Accurate definition of radiation source characteristics is the foundational step for any Monte Carlo (MC) simulation of particle interactions in tissue. Within the broader thesis on advancing MC methods for biomedical applications, this guide details the technical specifications and experimental protocols required to define primary beams for radiotherapy (e.g., megavoltage photons/electrons, protons) and medical imaging (e.g., kV x-rays, CT). The fidelity of downstream dose deposition, image contrast, and secondary particle generation models is directly contingent on this initial source characterization.

The essential parameters for beam definition vary by modality. The data below, synthesized from current clinical and research literature, must be incorporated into the MC simulation's source model.

Table 1: Key Characteristics for Radiotherapy Beams

| Beam Type | Typical Energy Spectrum | Focal Spot Size | Angular Divergence/Scanning Pattern | Dose Rate | Key Contaminants |

|---|---|---|---|---|---|

| Linac MV Photons | Bremsstrahlung spectrum (e.g., 6 MV: max ~6 MeV, mean ~1.5-2 MeV) | 1-3 mm (FWHM) | Defined by primary collimator & flattening filter | 100-2400 MU/min | Electron, photon scatter from flattening filter |

| Linac Electrons | Quasi-monoenergetic (e.g., 6, 9, 12, 18 MeV) with low-energy tail | 1-3 mm (FWHM) | Scattered by scattering foils | 100-2400 MU/min | Bremsstrahlung photons |

| Proton Pencil Beam | Spread-Out Bragg Peak (SOBP) via energy stacking (~70-250 MeV) | 3-10 mm σ (in air) | Magnetically scanned across target volume | ~2-10 Gy/min | Secondary electrons, neutrons |

| MV-IMRT/VMAT | As above, with intensity modulation | As above | Dynamic MLC sequence | As above | As above |

Table 2: Key Characteristics for Imaging Beams

| Beam Type | Typical Energy Spectrum | Focal Spot Size | Source-Detector Distance (SDD) | Filtration | Half-Value Layer (HVL) |

|---|---|---|---|---|---|

| Diagnostic X-ray (kV) | Polychromatic (e.g., 50-140 kVp) | 0.6-1.2 mm | 100-180 cm | 2.5-4 mm Al eq. | 2.5-5 mm Al |

| Cone-Beam CT (CBCT) | Polychromatic (80-140 kVp) | 0.3-0.8 mm | ~150 cm | Bowtie filter + Cu/Al | Specific to system |

| Micro-CT/Preclinical | 20-100 kVp | <50 µm | 10-50 cm | Optional Be, Al, Cu | <1 mm Al |

Experimental Protocols for Source Characterization

To populate the MC source model with the data from Tables 1 & 2, the following experimental methodologies are employed.

Protocol 1: Energy Spectrum Measurement for kV X-rays using a Cadmium Telluride (CdTe) Spectrometer

- Equipment Setup: Place a high-resolution CdTe spectrometer (e.g., Amptek XR-100CdTe) on the beam central axis at a known distance from the focal spot. Use a collimator to limit the beam to the active detector area.

- Calibration: Energy calibrate the spectrometer using known radioactive sources (e.g., Am-241, Co-57).

- Data Acquisition: Acquire pulse-height spectra for the x-ray tube at multiple kVp settings (e.g., 50, 80, 120 kVp) and tube currents. Ensure count rates are within the linear range of the detector to avoid pile-up.

- Correction & Deconvolution: Correct the raw spectrum for detector effects (e.g., charge trapping, escape peaks) using manufacturer-provided software or a deconvolution algorithm (e.g., iterative Lucy-Richardson) based on the detector response function.

- HVL Validation: Use the derived spectrum to calculate the Half-Value Layer (HVL) computationally and validate it against a physical measurement using aluminum filters and an ionization chamber.

Protocol 2: Focal Spot Size Measurement using the Pin-Hole Camera Technique

- Pinhole Fabrication: Utilize a tungsten or platinum disk with a laser-drilled pinhole of known, precise diameter (typically 10-30 µm). The pinhole diameter should be ≤ 1/3 of the expected focal spot size.

- Imaging Geometry: Position the pinhole between the x-ray source and a high-resolution digital detector (e.g., CCD-based imager with a scintillator). For a pinhole camera, the magnification (M) of the focal spot image is given by M = b/a, where 'a' is the source-to-pinhole distance and 'b' is the pinhole-to-detector distance.

- Image Acquisition: Acquire a short, high-exposure image of the focal spot. Ensure the pinhole is accurately aligned to the beam central axis.

- Analysis: Measure the full width at half maximum (FWHM) of the magnified spot image on the detector. Calculate the true focal spot size: Focal Spot FWHM = (Image FWHM) / M.
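The analysis step can be sketched as follows: the FWHM of a sampled, single-peaked image profile is found by linear interpolation at half maximum and then divided by the magnification. The function names are hypothetical, and the sketch assumes the background has already been subtracted from the profile.

```python
def fwhm(xs, ys):
    """FWHM of a single-peaked sampled profile, using linear interpolation."""
    half = max(ys) / 2.0
    above = [i for i, y in enumerate(ys) if y >= half]
    first, last = above[0], above[-1]

    def crossing(i_lo, i_hi):
        # Position where the straight line between two samples equals half maximum
        x0, y0, x1, y1 = xs[i_lo], ys[i_lo], xs[i_hi], ys[i_hi]
        return x0 + (half - y0) * (x1 - x0) / (y1 - y0)

    left = xs[first] if first == 0 else crossing(first - 1, first)
    right = xs[last] if last == len(xs) - 1 else crossing(last, last + 1)
    return right - left


def focal_spot_fwhm(image_fwhm, magnification):
    """True focal spot size = image FWHM / M."""
    return image_fwhm / magnification
```

If the profile does not fall below half maximum at one edge of the sampled range, the function falls back to the edge position, so the field of view should be checked before trusting the result.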

Protocol 3: Proton Pencil Beam Characterization in Air

- Setup: In an air-scanning tank, position a large-area parallel-plate ionization chamber at isocenter (typically 100 cm from source) to measure integrated charge per spot. Simultaneously, position a pixelated scintillation detector (e.g., Lynx, IBA) downstream to acquire 2D lateral dose profiles.

- Spot Scanning: For each nominal beam energy in the clinical range, instruct the accelerator to deliver a single spot at isocenter.

- Profile Measurement: Record the 2D dose profile. Extract the in-air sigma (σair) in both X and Y directions by fitting a single Gaussian function to the central region of each profile.

- Energy Calibration: Relate the nominal energy to the measured practical range in water, establishing the energy calibration curve for the MC source model.
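The spot-size extraction in the profile-measurement step can be illustrated with a log-linearized Gaussian fit restricted to the central region of the profile. This swaps in a simple quadratic least-squares fit of ln(y) for a full nonlinear fit, so it is a sketch under the assumption of low-noise, background-free data; the function name and the `central_fraction` parameter are hypothetical.

```python
import math


def fit_gaussian_sigma(xs, ys, central_fraction=0.5):
    """Return (mu, sigma) from a fit of ln(y) = c0 + c1*x + c2*x^2.

    Only samples with y >= central_fraction * max(y) are used.
    """
    peak = max(ys)
    pts = [(x, math.log(y)) for x, y in zip(xs, ys) if y >= central_fraction * peak]
    if len(pts) < 3:
        raise ValueError("need at least three points in the central region")
    # Normal equations of the quadratic least-squares problem (augmented 3x4 matrix)
    s = [sum(x ** k for x, _ in pts) for k in range(5)]
    t = [sum((x ** k) * ly for x, ly in pts) for k in range(3)]
    m = [[s[0], s[1], s[2], t[0]],
         [s[1], s[2], s[3], t[1]],
         [s[2], s[3], s[4], t[2]]]
    # Gaussian elimination with partial pivoting
    for col in range(3):
        piv = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, 3):
            f = m[r][col] / m[col][col]
            for k in range(col, 4):
                m[r][k] -= f * m[col][k]
    c = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        c[i] = (m[i][3] - sum(m[i][j] * c[j] for j in range(i + 1, 3))) / m[i][i]
    if c[2] >= 0:
        raise ValueError("profile is not peaked")
    sigma = math.sqrt(-1.0 / (2.0 * c[2]))  # since c2 = -1 / (2 sigma^2)
    mu = -c[1] / (2.0 * c[2])               # since c1 = mu / sigma^2
    return mu, sigma
```

For noisy detector data, a weighted nonlinear fit is generally preferable because the logarithm amplifies noise in low-signal samples; restricting the fit to the central region limits that effect and also excludes the non-Gaussian tails of the spot.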

Visualization of Methodological Relationships

Beam Characterization Workflow for MC

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Source Characterization Experiments

| Item / Reagent Solution | Function in Characterization |

|---|---|

| High-Purity CdTe or Si(Li) Spectrometer | Direct measurement of photon energy spectra; essential for kV and MV spectral definition. |

| Precision Pin-Hole Apertures (Tungsten, 10-30 µm) | Creates a magnified image of the focal spot for size measurement via pin-hole camera technique. |

| High-Resolution Digital Imager (CCD/CMOS + Scintillator) | Detects the magnified focal spot image or high-resolution beam profiles. |

| Parallel-Plate Ionization Chamber (e.g., Markus type) | Measures integrated dose for proton/carbon beam spots or for HVL measurements. |

| Pixelated Scintillation Detector Array (e.g., Lynx) | Provides fast, high-resolution 2D profiles of scanning particle beams (protons, ions). |

| Step Wedge & Solid Water Phantoms | Used for HVL measurement and beam profile/depth-dose validation in water-equivalent media. |

| Radioactive Calibration Sources (Am-241, Co-57) | Provides known emission lines for precise energy calibration of spectroscopic detectors. |

| Monte Carlo Code (e.g., Geant4, TOPAS, MCNP, EGSnrc) | Platform for implementing the characterized source model and simulating particle transport. |

This whitepaper details the critical application of Monte Carlo (MC) methods within the broader research thesis investigating stochastic simulations of particle interactions in biological tissue. The accurate calculation of dose deposition from external photon and electron beams is a cornerstone of modern, precise radiotherapy. MC techniques, by explicitly simulating the random nature of particle transport and energy loss, provide the most accurate method for modeling these complex interactions within heterogeneous human anatomy, serving as a gold standard against which faster, deterministic algorithms are benchmarked.

Core Principles of Monte Carlo Dose Calculation

The fundamental process involves tracking millions of individual primary and secondary particles (photons, electrons, positrons) through a patient geometry derived from CT data. Key interactions simulated include:

- Photoelectric Effect: Complete absorption of photon, ejecting an electron.

- Compton Scattering: Inelastic scattering of a photon by a loosely bound electron.

- Pair Production: Photon conversion into an electron-positron pair near a nucleus.

- Electron Collisions: Continuous and discrete energy loss via ionization and excitation.

- Electron Bremsstrahlung: Radiation emission due to electron deflection by a nucleus.

The probability of each interaction is sampled from known cross-sections, making the simulation intrinsically linked to tissue composition and density.
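The sampling described above can be sketched in two steps: draw the distance to the next interaction from an exponential distribution governed by the total attenuation coefficient, then choose the interaction type in proportion to the partial coefficients. The function names are hypothetical, and any coefficient values passed in are placeholders to be replaced with cross-section data for the material and energy of interest.

```python
import math
import random


def sample_free_path(mu_total, rng):
    """Distance (cm) to the next interaction for total attenuation coefficient mu_total (1/cm)."""
    # rng.random() is in [0, 1), so the argument of log stays in (0, 1]
    return -math.log(1.0 - rng.random()) / mu_total


def sample_interaction(partial_mu, rng):
    """Pick an interaction type with probability proportional to its partial coefficient."""
    total = sum(partial_mu.values())
    u = rng.random() * total
    running = 0.0
    for name, mu in partial_mu.items():
        running += mu
        if u < running:
            return name
    return name  # guard against floating-point round-off at the upper edge


# Placeholder coefficients (1/cm), for illustration only.
rng = random.Random(42)
mu_parts = {"photoelectric": 0.01, "compton": 0.08, "pair_production": 0.01}
step = sample_free_path(sum(mu_parts.values()), rng)
kind = sample_interaction(mu_parts, rng)
```

In a heterogeneous patient geometry the coefficients change from voxel to voxel, so a production code must either resample at each boundary or use a technique such as Woodcock (delta) tracking; this sketch covers only the homogeneous case.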

Quantitative Data on Algorithm Performance

Table 1: Comparison of Dose Calculation Algorithm Accuracy in Heterogeneous Media

| Algorithm Type | Principle | Computation Speed | Dosimetric Accuracy in Heterogeneity (vs. Measurement) | Key Limitation |

|---|---|---|---|---|

| Monte Carlo (e.g., EGSnrc, Geant4, PENELOPE) | Stochastic simulation of particle tracks | Very Slow (Hours) | High (~1-2% deviation) | Prohibitive computational cost for routine planning |

| Collapsed Cone Convolution/Superposition | Pre-calculated energy deposition kernels | Medium (Minutes) | Medium (~2-4% deviation) | Kernels approximated for heterogeneity |

| Pencil Beam Convolution | Simplified 1D kernels along ray lines | Fast (Seconds) | Low (>5% deviation in lung/bone) | Fails in severe electronic disequilibrium |

| Analytical Anisotropic Algorithm (AAA) | Modeling of photon scatter | Fast-Medium (Minutes) | Medium (~2-3% deviation) | Empirical scaling of pencil beams |

Table 2: Example MC Simulation Parameters for a 6 MV Photon Beam

| Parameter | Typical Value/Range | Impact on Calculation |

|---|---|---|

| Number of Histories (Particles) | 10^7 - 10^9 | Statistical uncertainty ∝ 1/√(N). Higher N reduces noise. |

| Energy Cutoff (ECUT, PCUT) | ECUT: 0.7 MeV (e-; total energy including rest mass in EGSnrc), PCUT: 0.01 MeV (γ) | Particles falling below the cutoff are stopped and deposit their remaining energy locally. Affects the speed/accuracy trade-off. |

| Voxel Size (in patient CT) | 2.0 x 2.0 x 2.0 mm^3 | Finer resolution increases geometry detail and computation time. |

| Variance Reduction Techniques | Particle splitting, Russian Roulette | Greatly increases efficiency but requires careful implementation. |
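The 1/√N scaling in the table gives a quick way to plan run length from a short pilot simulation. The helper below is a minimal sketch with a hypothetical name; it assumes an analog simulation in which the uncertainty is purely statistical and no variance reduction settings change between the pilot and the production run.

```python
import math


def histories_for_target(pilot_histories, pilot_rel_unc, target_rel_unc):
    """Histories needed to reach target_rel_unc, given a pilot run.

    Uses uncertainty proportional to 1/sqrt(N), so N scales with the squared
    ratio of uncertainties. Both uncertainties must use the same units
    (for example, percent).
    """
    ratio = pilot_rel_unc / target_rel_unc
    return math.ceil(pilot_histories * ratio ** 2)


# A pilot run of 10^6 histories with 2% uncertainty, aiming for 0.5%.
needed = histories_for_target(1_000_000, 2.0, 0.5)
# needed == 16_000_000
```

Halving the uncertainty therefore costs four times the histories, which is why variance reduction and parallel execution matter more than raw run length once uncertainties reach the percent level.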

Experimental Protocol: MC Commissioning and Validation

Protocol 1: Beam Modeling and Commissioning for a Linac

- Data Acquisition: Measure percent depth dose (PDD) and profiles (in-plane, cross-plane, diagonal) for a clinical linear accelerator (e.g., Varian TrueBeam) using a water phantom and ionization chambers for multiple field sizes (e.g., 3x3 cm² to 40x40 cm²) and energies (e.g., 6 MV, 10 MV).

- Geometry Definition: Accurately model the Linac head (target, primary collimator, flattening filter, jaws, multi-leaf collimators) in the MC code (e.g., BEAMnrc component module).

- Source Parameterization: Iteratively adjust the initial electron beam parameters (energy, spot size) incident on the target until the simulated PDD and profiles match measured data within criteria (e.g., 1% / 1mm in high gradient regions).

- Phase-Space File Generation: Store the position, direction, energy, and particle type of particles exiting the Linac head for reuse in patient simulations.

Protocol 2: Patient-Specific QA Validation Using MC

- Plan Import: Export the treatment plan (beam angles, MLC sequences, monitor units) from the Treatment Planning System (TPS).

- MC Recalculation: Use the commissioned beam model and the patient's CT geometry to recalculate the 3D dose distribution using the MC engine.

- Comparison Metric: Calculate a 3D gamma index (e.g., 2% dose difference / 2 mm distance-to-agreement) between the TPS dose (often from a faster algorithm) and the MC-recalculated dose.

- Analysis: Identify regions where gamma pass rates fall below clinical tolerance (e.g., <95%), indicating potential limitations of the TPS algorithm in areas of high heterogeneity or small fields.

Visualizing the MC Workflow in Radiotherapy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for MC-Based Radiotherapy Research

| Item / Solution | Function in Research | Key Considerations |
|---|---|---|
| Monte Carlo Code Suite (e.g., EGSnrc, TOPAS/Geant4, MCNP) | Core simulation engine for modeling radiation transport. | Choice depends on user expertise, desired particle types, and available support. EGSnrc is dominant in clinical photon/electron research. |
| Clinical Linear Accelerator Beam Data | Ground truth for beam model commissioning and validation. | Requires precise measurement with calibrated ionization chambers in water phantom. |
| Patient CT Datasets (Anonymized) | Provides the 3D geometry and density map for dose calculation. | Must include Hounsfield Unit to material/density conversion protocol. |
| Dosimetry Detectors (e.g., Ion Chamber, Diode, Radiochromic Film, 3D Scanner) | For experimental validation of simulated dose distributions. | Film and 3D scanners provide high spatial resolution for complex fields. |
| High-Performance Computing (HPC) Cluster | Enables practical simulation times for clinical cases via parallel processing. | Essential for running millions of particle histories across many beam angles. |
| DICOM-RT Interface Tools | Enables import/export of RT Plan, RT Structure, and RT Dose objects between TPS and MC systems. | Critical for clinical workflow integration. |
| Analysis Software (e.g., Python with SciPy/NumPy, MATLAB, 3D Slicer) | For statistical analysis, visualization, and gamma comparison of 3D dose matrices. | Custom scripts are often needed for advanced research metrics. |

This whitepaper details the clinical and experimental applications of nuclear medicine imaging and therapy, framed within a research thesis on Monte Carlo (MC) methods for simulating particle interactions in tissue. MC techniques are foundational for modeling the stochastic transport of gamma rays (SPECT), annihilation photons (PET), and beta/alpha particles (therapy) through heterogeneous human anatomy. Accurate MC simulations, which require detailed anatomical phantoms and precise interaction cross-sections, are critical for optimizing scanner design, image reconstruction, dosimetry, and ultimately, patient outcomes.

Quantitative Comparison of Modalities

The core quantitative characteristics of SPECT, PET, and Radiopharmaceutical Therapy are summarized in Table 1.

Table 1: Quantitative Comparison of Nuclear Medicine Modalities

| Parameter | SPECT | PET | Radiopharmaceutical Therapy |
|---|---|---|---|
| Primary Radiation | Single gamma-ray (γ) | Two 511 keV annihilation photons (γ) | Beta (β⁻), Alpha (α), or Auger electrons |
| Typical Isotopes | Tc-99m (141 keV), In-111 (171, 245 keV) | F-18, Ga-68, Cu-64 | I-131, Lu-177 (β⁻); Ac-225, Ra-223 (α) |
| Spatial Resolution (Clinical) | 8-12 mm | 4-7 mm | N/A (Therapeutic) |
| Sensitivity | Low (~10⁻⁴ counts/sec/Bq) | High (~10⁻² counts/sec/Bq) | N/A |
| Attenuation Correction | Required, using CT or transmission sources | Required, more straightforward (coincidences) | Critical for dose planning |
| Key Quantitative Metric | Activity concentration (kBq/cc) | Standardized Uptake Value (SUV) | Absorbed dose (Gy) |
| Monte Carlo Code Examples | GATE, SIMIND, MCNP | GATE, FLUKA, Geant4 | GATE, MIRDcalc, VARSKIN |

Detailed Methodologies & Experimental Protocols

Protocol for Preclinical PET/CT Imaging with [⁶⁸Ga]Ga-PSMA-11

Objective: To quantify tumor uptake in a murine xenograft model.

- Radiopharmaceutical Preparation: Synthesize [⁶⁸Ga]Ga-PSMA-11 via a Ge-68/Ga-68 generator and automated synthesis module. Perform quality control (HPLC, pH, radiochemical purity >95%).

- Animal Model: Subcutaneous prostate cancer (LNCaP) xenografts in NOD/SCID mice (tumor volume ~200-500 mm³).

- Injection & Uptake: Inject ~5-10 MBq of [⁶⁸Ga]Ga-PSMA-11 via the tail vein. Allow a 60-minute biodistribution period under anesthesia (isoflurane/O₂).

- Image Acquisition: Place mouse in prone position in preclinical PET/CT scanner. Acquire: a) CT scan for attenuation correction and anatomy, b) 10-minute static PET scan.

- Image Reconstruction & Analysis: Reconstruct PET data using OSEM algorithm with CT-based attenuation correction. Co-register PET/CT images. Draw volumetric regions of interest (ROIs) over tumor and major organs. Calculate mean and maximum SUV (SUVmean/max).
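The SUV calculation in the final step can be sketched as below. This is a minimal example under stated assumptions: the body-weight SUV definition is used, tissue density is taken as 1 g/mL, the ROI concentration is expressed at scan time, and the injected activity is decay-corrected to that time using the 68-minute Ga-68 half-life quoted in this article. The function names and numerical inputs are hypothetical.

```python
import math

GA68_HALF_LIFE_MIN = 68.0  # half-life as quoted in this article


def decay_correct(activity_bq, elapsed_min, half_life_min):
    """Activity remaining after elapsed_min of physical decay."""
    return activity_bq * math.exp(-math.log(2) * elapsed_min / half_life_min)


def suv_body_weight(conc_bq_per_ml, injected_bq, weight_g, elapsed_min,
                    half_life_min=GA68_HALF_LIFE_MIN):
    """Body-weight SUV (dimensionless, assuming 1 g/mL tissue density).

    conc_bq_per_ml is the ROI activity concentration at scan time;
    injected_bq is the activity at injection time.
    """
    remaining_bq = decay_correct(injected_bq, elapsed_min, half_life_min)
    return conc_bq_per_ml / (remaining_bq / weight_g)


# Hypothetical inputs: 25 g mouse, 7.5 MBq injected, 60 min uptake,
# tumor ROI mean concentration of 300 kBq/mL at scan time.
suv_mean = suv_body_weight(300e3, 7.5e6, 25.0, 60.0)
print(f"SUVmean = {suv_mean:.2f}")
```

SUVmax follows from the same function by passing the maximum voxel concentration in the ROI instead of the mean.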

Protocol for Patient-Specific Dosimetry for [¹⁷⁷Lu]Lu-DOTATATE Therapy

Objective: To estimate absorbed doses to tumors and organs at risk.

- Quantitative SPECT/CT Imaging: Administer ~7.4 GBq of [¹⁷⁷Lu]Lu-DOTATATE to the patient. Acquire serial quantitative SPECT/CT scans at multiple time points (e.g., 4, 24, 72, 168 h post-injection).

- Image Calibration & Segmentation: Calibrate SPECT system using a known activity phantom. For each time point, segment tumors, kidneys, liver, spleen, and bone marrow on CT/SPECT images.

- Time-Activity Curve (TAC) Fitting: For each ROI, plot the measured activity versus time, retaining physical decay so that the curve reflects effective clearance. Fit an exponential or bi-exponential function to the data.

- Dose Calculation (MC-Based): Integrate the TAC to obtain the total number of decays (time-integrated activity, also called cumulated activity). Input this data, along with patient-specific segmented CT anatomy (voxelized phantom), into a Monte Carlo code (e.g., GATE). Simulate particle transport (β⁻ emissions of Lu-177) to calculate the absorbed dose distribution in Gy per administered GBq.

- Dose-Response Correlation: Record administered activity and cumulative organ doses. Monitor patient response (e.g., tumor shrinkage, hematological toxicity) for dose-response analysis.
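The TAC fitting and integration steps can be sketched with a mono-exponential model. This is an illustrative example only, assuming the input activities are the values at each imaging time with physical decay included (decay-corrected values would need physical decay reapplied before integration) and that a single exponential describes the ROI. The function names and the synthetic data are hypothetical; a bi-exponential model would require a nonlinear fit.

```python
import math


def fit_mono_exponential(times_h, activities_mbq):
    """Least-squares fit of ln(A) = ln(A0) - lam * t.

    Returns (A0 in MBq, effective decay constant lam in 1/h).
    """
    n = len(times_h)
    logs = [math.log(a) for a in activities_mbq]
    t_mean = sum(times_h) / n
    y_mean = sum(logs) / n
    sxx = sum((t - t_mean) ** 2 for t in times_h)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_h, logs))
    slope = sxy / sxx
    a0 = math.exp(y_mean - slope * t_mean)
    return a0, -slope


def time_integrated_activity(a0_mbq, lam_per_h):
    """Analytic integral of A0 * exp(-lam * t) from 0 to infinity, in MBq*h."""
    if lam_per_h <= 0:
        raise ValueError("effective decay constant must be positive")
    return a0_mbq / lam_per_h


# Synthetic kidney TAC at the imaging times from the protocol (hypothetical).
times = [4.0, 24.0, 72.0, 168.0]
activities = [100.0 * math.exp(-0.01 * t) for t in times]
a0, lam = fit_mono_exponential(times, activities)
tia_mbq_h = time_integrated_activity(a0, lam)
n_decays = tia_mbq_h * 1e6 * 3600.0  # MBq*h -> number of decays
print(f"A0 = {a0:.1f} MBq, lam = {lam:.4f} 1/h, decays = {n_decays:.3e}")
```

The resulting number of decays per ROI is the quantity that scales the per-decay dose maps produced by the Monte Carlo simulation.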

Visualization of Workflows and Pathways

Diagram 1: PET/CT Quantitative Imaging Workflow

Diagram 2: Patient-Specific Dosimetry via Monte Carlo

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Research Reagents and Materials

| Item | Function & Explanation |
|---|---|
| Ge-68/Ga-68 Generator | Long-lived parent (Ge-68, t₁/₂ = 271 d) producing short-lived PET isotope Ga-68 (t₁/₂ = 68 min) for on-demand radiopharmaceutical synthesis. |
| PSMA-11 / DOTATATE Precursor | High-purity, GMP-grade targeting molecule (peptide) that chelates the radionuclide (Ga-68, Lu-177) for specific tumor binding. |
| Automated Synthesis Module | Closed, shielded system for reproducible, high-activity radiochemistry, ensuring operator safety and compliance with GMP. |
| Radio-TLC/HPLC System | Critical for quality control; measures radiochemical purity and identity of the final product prior to administration. |
| Multimodal Imaging Phantoms | Physical objects with known geometries and activity concentrations for calibrating SPECT/PET scanners and validating MC simulations. |
| Voxelized Computational Phantoms | Digital models (e.g., ICRP reference phantoms) derived from CT/MRI, used in MC simulations for dose estimation and protocol optimization. |
| Monte Carlo Software (GATE/Geant4) | Gold-standard platform for simulating particle transport through complex geometries, integral for scanner design and dosimetry. |
| Dosimetry Software (OLINDA/EXM) | Implements the MIRD formalism to calculate organ-level absorbed doses from Time-Activity Data, often used alongside MC. |

This whitepaper details advanced applications of radiation therapy modeling, framed within a broader doctoral thesis investigating Monte Carlo (MC) methods for simulating particle interactions in biological tissue. The core hypothesis is that MC techniques provide the high-fidelity computational framework required to model the physical and radiochemical processes in two emerging modalities: nanoparticle-enhanced radiotherapy (NPRT) and FLASH radiotherapy. MC codes such as Geant4, TOPAS, and FLUKA enable the explicit simulation of radiation transport, energy deposition at nanometer scales, and the subsequent radiochemical cascade, which is critical for optimizing these therapies.

Nanoparticle-Enhanced Radiotherapy (NPRT) Modeling

NPRT utilizes high-Z nanoparticles (NPs) to locally augment radiation dose. MC modeling is indispensable for quantifying the complex physical enhancement mechanisms.

Core Enhancement Mechanisms

- Physical Dose Enhancement: Photoelectric absorption cross-section scales with ~Z⁴. NPs increase localized photoelectron and Auger electron emission.

- Chemical Enhancement: NPs act as catalysts for the radiolysis of water, increasing radical yield (•OH).

- Biological Enhancement: NPs can induce complex DNA damage and interfere with cellular repair signaling.

Monte Carlo Simulation Protocol for NPRT

Objective: To calculate the dose enhancement factor (DEF) around a gold nanoparticle in a water phantom under kV x-ray irradiation.

- Geometry Definition: Model a single spherical gold nanoparticle (e.g., 50 nm diameter) suspended in a cubic water voxel (e.g., 1 µm³).

- Physics List: Utilize a detailed electromagnetic physics list extending down to low energies (e.g., Geant4's G4EmLivermorePhysics). Enable explicit production of Auger electrons and characteristic x-rays.

- Source Definition: Define a parallel beam of monoenergetic or spectrum-defined photons (e.g., 50-300 kVp) incident on the volume.

- Scoring: Implement a dose-scoring mesh (voxel size ~5-10 nm) concentric around the NP to record energy deposition (eV/g/particle).

- Simulation Execution: Run ≥10⁹ primary histories to achieve statistical uncertainty <2% in the NP vicinity.

- Analysis: Calculate the DEF as $\mathrm{DEF}(r) = D_{\text{water+NP}}(r) / D_{\text{water only}}(r)$, where $r$ is the radial distance from the NP surface.
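The analysis step can be sketched as a ratio of two radial dose profiles scored on the same shells. This is a minimal illustration, assuming the with-NP and water-only simulations are independent runs scored on identical radial bins, so that relative uncertainties add in quadrature. The function names and dose values are hypothetical and do not come from any cited study.

```python
import math


def dose_enhancement_factor(dose_with_np, dose_water_only):
    """Shell-by-shell ratio D(water+NP) / D(water only).

    Shells with zero water-only dose are returned as NaN.
    """
    if len(dose_with_np) != len(dose_water_only):
        raise ValueError("profiles must use the same radial bins")
    return [d_np / d_w if d_w > 0 else float("nan")
            for d_np, d_w in zip(dose_with_np, dose_water_only)]


def def_relative_uncertainty(rel_unc_np, rel_unc_water):
    """Relative uncertainty of the ratio for two independent simulations."""
    return math.sqrt(rel_unc_np ** 2 + rel_unc_water ** 2)


# Hypothetical radial dose profiles (arbitrary units per primary particle).
radii_nm = [5.0, 15.0, 25.0, 35.0]
d_np = [40.0, 12.0, 6.0, 4.0]
d_water = [2.0, 2.0, 2.0, 2.0]
for r, value in zip(radii_nm, dose_enhancement_factor(d_np, d_water)):
    print(f"r = {r:5.1f} nm  DEF = {value:.1f}")
print(f"relative uncertainty: {def_relative_uncertainty(0.02, 0.02):.3f}")
```

Because the ratio combines two uncertainties, each run needs a per-shell uncertainty below the 2% target for the DEF itself to approach it.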

Key Quantitative Data: NPRT

Table 1: Monte Carlo-Derived Dose Enhancement Factors (DEF) for Gold Nanoparticles

| NP Diameter (nm) | X-ray Energy (kVp) | DEF at NP Surface | DEF at 100 nm Distance | Key Simulation Code | Reference (Year) |
|---|---|---|---|---|---|
| 50 | 100 | ~180 | ~4.5 | Geant4-DNA | Schuemann et al. (2016) |
| 100 | 250 | ~45 | ~2.8 | TOPAS-nBio | Lin et al. (2017) |
| 30 | 50 | ~250 | ~6.0 | PENELOPE | McMahon et al. (2011) |
| 20 (Cluster) | 120 | ~300 (local peak) | ~3.0 | MCNP6 | Recent Study (2023) |

FLASH Radiotherapy Modeling

FLASH therapy involves ultra-high dose rate irradiation (>40 Gy/s), which exhibits a protective effect on normal tissue (FLASH effect) while maintaining tumor response. MC modeling is critical to disentangle the physical and chemical origins.

The Oxygen Depletion Hypothesis & MC Modeling

The leading hypothesis is that FLASH irradiation rapidly depletes dissolved oxygen in normal tissue, reducing the yield of permanent, oxygen-fixed peroxyl-radical damage. MC codes coupled with chemical reaction-diffusion solvers (e.g., CHEM in TOPAS-nBio) are used to model this temporal radiochemistry.

Monte Carlo Simulation Protocol for FLASH Chemistry

Objective: To model the time-dependent depletion and reoxygenation of oxygen in a capillary tissue model under FLASH vs. conventional dose rates.

- Physical Stage: Use MC to simulate the stochastic physical energy depositions (spurs, blobs, tracks) in a defined volume (e.g., a 1 µm³ voxel of water representing tissue) for a single pulse.

- Chemical Stage (Pre-solution): Define initial concentrations of chemical species (e.g., [O₂] = 50 µM, [•OH scavengers]).

- Chemical Stage (Reaction-Diffusion): For each time step (picoseconds to milliseconds), solve the system of coupled differential equations (e.g., Smoluchowski diffusion-reaction) for all radical interactions, including the oxygen-consuming reactions e⁻(aq) + O₂ → O₂•⁻ and H• + O₂ → HO₂•.

- Pulse Structure: Repeat physical and chemical stages for the pulse train of the FLASH beam (e.g., 1 µs pulses, 100 Hz) versus a continuous conventional beam.

- Output: Track the temporal evolution of [O₂] and the yield of hydrogen peroxide (H₂O₂) as a proxy for fixed damage.
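The dose-rate dependence that this protocol is meant to resolve can be illustrated with a deliberately simplified kinetic model. This sketch is not the MC or reaction-diffusion calculation described above: it assumes a well-mixed volume, constant oxygen consumption per unit dose, and first-order reoxygenation toward the initial concentration, i.e. dC/dt = -g·R + k·(C₀ - C). The depletion constant `g` and reoxygenation rate `k` are illustrative placeholders, and the output is not intended to reproduce the values in Table 2.

```python
import math


def oxygen_after_irradiation(c0_um, dose_gy, dose_rate_gy_s,
                             depletion_um_per_gy, reox_rate_per_s):
    """[O2] (in µM) at the end of a constant-dose-rate irradiation.

    Analytic solution of dC/dt = -g*R + k*(C0 - C) with C(0) = C0.
    The result is clamped at zero, a crude fix for the fact that the
    toy model ignores the [O2] dependence of the consumption term.
    """
    t_irr = dose_gy / dose_rate_gy_s
    # Steady-state concentration that a long irradiation would approach.
    c_steady = c0_um - depletion_um_per_gy * dose_rate_gy_s / reox_rate_per_s
    c_end = c_steady + (c0_um - c_steady) * math.exp(-reox_rate_per_s * t_irr)
    return max(c_end, 0.0)


# Illustrative parameters (assumptions, not fitted values).
C0 = 50.0   # µM, initial oxygen concentration used in the protocol
G = 0.4     # µM consumed per Gy (placeholder)
K = 10.0    # 1/s, reoxygenation rate (placeholder, ~100 ms time scale)

conventional = oxygen_after_irradiation(C0, 10.0, 0.1, G, K)
flash = oxygen_after_irradiation(C0, 10.0, 100.0, G, K)
print(f"conventional: {conventional:.3f} µM, FLASH: {flash:.3f} µM")
```

In this toy picture, reoxygenation keeps pace with consumption at conventional dose rates, whereas at FLASH dose rates the dose is delivered before oxygen can be replenished. The full simulation replaces both rate constants with explicit track chemistry and diffusion.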

Key Quantitative Data: FLASH Therapy

Table 2: Monte Carlo-Simulated Chemical Yields for FLASH vs. Conventional Dose Rate

| Parameter | Conventional (0.1 Gy/s) | FLASH (>100 Gy/s) | Simulated Tissue Model | Key Finding |
|---|---|---|---|---|
| Initial O₂ Concentration | 50 µM | 50 µM | Capillary (10 µm diam.) | Identical starting conditions |
| O₂ Depletion per 10 Gy | ~15% | >95% | Homogeneous tissue | FLASH induces near-complete depletion |
| Net H₂O₂ Yield | High | Low | Homogeneous tissue | Critical reduction in fixed damage yield |
| Time for O₂ Replenishment | N/A | ~10-100 ms | Vascularized model | Depends on diffusion coefficient & distance |

Integrated Modeling and Pathways

Logical Workflow for Combined NPRT & FLASH MC Study

Integrated MC Workflow for NPRT-FLASH Studies

Key Signaling Pathways Influenced by NPRT & FLASH

Cellular Signaling in NPRT & FLASH Therapy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for NPRT & FLASH Experimental Validation

| Item Name & Category | Primary Function in Research | Example Use-Case / Rationale |
|---|---|---|
| Gold Nanoparticles (Citrate-capped) | High-Z radiosensitizer for NPRT | In vitro proof-of-concept for dose enhancement under kV irradiation. |
| Hypoxia Probes (e.g., Pimonidazole HCl) | Immunohistochemical detection of hypoxic cells | Validate MC-predicted oxygen depletion in FLASH-irradiated normal tissue. |
| γ-H2AX Antibody Kit | Marker for DNA double-strand breaks (DSBs) | Quantify and compare DNA damage complexity after NPRT vs. conventional RT. |
| Reactive Oxygen Species (ROS) Detection Kit (CellROX) | Fluorescent detection of intracellular ROS | Measure the temporal and spatial increase in oxidative stress post-NPRT. |
| 3D Tissue Phantom (Gelatin-based) | Anatomically realistic test medium for dosimetry | Experimental validation of MC-predicted dose distributions around NPs. |