Optimizing Optical Design with Open-Source Algorithms: A Comprehensive Guide for Biomedical Researchers


Abstract

This article explores the transformative potential of open-source optimization algorithms in optical design, with a specific focus on applications in biomedical research and drug development. It provides a foundational understanding of key algorithms, details methodological approaches for implementation, offers solutions for common troubleshooting and optimization challenges, and establishes frameworks for rigorous validation and comparative analysis. Aimed at researchers and scientists, this guide bridges the gap between theoretical optical design and practical, reproducible research tools, enabling the development of advanced imaging systems, diagnostic devices, and analytical instruments.

Understanding Open-Source Algorithms in Optical Design

The Critical Role of Optimization in Modern Optical Systems for Biomedical Applications

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: My optical simulation results do not match experimental data. What could be wrong?

- Potential Cause: Stray light within your optical system is introducing unwanted signal noise [1].

- Solution:

- Simulate Stray Light: Use Monte Carlo ray tracing in software like TracePro to identify problematic reflections and scattering points [1].

- Mitigate in Design: Apply anti-reflective coatings to lenses, use baffles and light shields to block unintended paths, and select materials with low surface scatter (low BRDF values) [1].

- Validate Experimentally: Ensure your physical setup includes adequate shielding and that all optical surfaces are clean.
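Fresnel's normal-incidence reflectance puts a number on why uncoated surfaces matter for stray light. The sketch below assumes normal incidence, non-absorbing glass of index 1.5, the six air-glass surfaces of a triplet, and a hypothetical 0.5 % residual reflectance per AR-coated surface. It ignores multiple reflections, so it only budgets the light that leaves the main beam at each surface. Some of that light can return to the detector as ghosts or stray light.

```python
def fresnel_reflectance(n1, n2):
    """Normal-incidence power reflectance of an uncoated interface between indices n1 and n2."""
    return ((n1 - n2) / (n1 + n2)) ** 2


def transmitted_fraction(reflectance, n_surfaces):
    """Single-pass fraction of light left after n_surfaces partial reflections (no absorption)."""
    return (1.0 - reflectance) ** n_surfaces


r_bare = fresnel_reflectance(1.0, 1.5)     # air to n = 1.5 glass: 0.04 per surface
t_bare = transmitted_fraction(r_bare, 6)   # a triplet has 6 air-glass surfaces
t_ar = transmitted_fraction(0.005, 6)      # assumed 0.5 % residual reflectance per coated surface
print(f"uncoated: R = {r_bare:.3f}, T = {t_bare:.3f}; AR-coated: T = {t_ar:.3f}")
```

Under these assumptions about 22 % of the light is reflected out of the main beam when the lenses are uncoated (T ≈ 0.783). With the assumed coating the figure drops to about 3 % (T ≈ 0.970).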

FAQ 2: My lens design optimization is stuck in a local minimum and performance is poor.

- Potential Cause: The optimization algorithm is not effectively exploring the parameter space, a common issue with local optimization methods [2].

- Solution:

- Switch Algorithms: For a starting design far from optimal, use a global optimizer like Differential Evolution or a genetic algorithm [2].

- Hybrid Approach: Once a global optimizer finds a better starting point, refine the design with a faster local optimizer like SLSQP or Nelder-Mead Simplex [2].

- Check Merit Function: Ensure your merit function accurately reflects all key performance targets and constraints.
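Here is a minimal sketch of the hybrid approach using SciPy's `differential_evolution` followed by `minimize(method="SLSQP")`. The Rastrigin function stands in for a real merit function, which is an assumption of this example. Like many lens-design landscapes, it has many local minima, and these trap a purely local optimizer. In practice `merit` would call a ray tracer.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize


def merit(x):
    """Toy multimodal merit function (Rastrigin), standing in for a lens merit function."""
    x = np.asarray(x, dtype=float)
    return 10.0 * x.size + float(np.sum(x**2 - 10.0 * np.cos(2.0 * np.pi * x)))


bounds = [(-5.12, 5.12)] * 4   # hypothetical bounds on four design variables

# Global stage: population-based search. polish=False keeps the local stage explicit.
glob = differential_evolution(merit, bounds, seed=1, polish=False)

# Local stage: SLSQP refinement starting from the best global candidate.
loc = minimize(merit, glob.x, method="SLSQP", bounds=bounds)

# For comparison: SLSQP alone from a poor starting design.
naive = minimize(merit, x0=[3.0, -3.0, 2.5, -2.5], method="SLSQP", bounds=bounds)
print(f"DE + SLSQP: {loc.fun:.3g}   SLSQP alone: {naive.fun:.3g}")
```

By default, `differential_evolution` already polishes its result with a local optimizer (`polish=True`). Disabling that here simply makes the two stages visible.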

FAQ 3: My biomedical optical device performs well in the lab but fails in clinical testing. What should I consider?

- Potential Cause: The device may not have been validated under real-world environmental and usage conditions, a key challenge in clinical translation [3] [4].

- Solution:

- Multiphysics Analysis: Use simulation tools (e.g., Ansys Optics) to model thermal and mechanical stresses on optical components to ensure performance stability [4].

- Incorporate Tolerances: Integrate manufacturing tolerances directly into the optimization loop to ensure the "as-built" system is robust [2].

- Early Regulatory Planning: Use simulation data to build the extensive documentation required for regulatory submissions to bodies like the FDA [4].
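One simple way to bring tolerances into the optimization loop is to optimize a Monte Carlo estimate of the expected merit over random "as-built" perturbations, rather than the nominal merit. The sketch below makes three assumptions: a hypothetical one-variable merit landscape, a 0.1-unit Gaussian manufacturing error, and a grid search in place of an optimizer to keep the example short.

```python
import numpy as np


def merit(x):
    """Hypothetical 1-D merit landscape (lower is better): a deep but narrow
    well at x = 0 and a shallower, wider well at x = 2."""
    return -1.0 * np.exp(-(x / 0.05) ** 2) - 0.8 * np.exp(-((x - 2.0) / 0.5) ** 2)


def toleranced_merit(x, sigma=0.1, n_trials=2000, seed=0):
    """Expected merit of the as-built design when x carries a Gaussian error of
    standard deviation sigma (assumed). The fixed seed reuses the same error
    samples for every x, so the estimate is deterministic and smooth in x."""
    rng = np.random.default_rng(seed)
    return float(np.mean(merit(x + rng.normal(0.0, sigma, n_trials))))


grid = np.linspace(-1.0, 3.0, 801)
best_nominal = grid[np.argmin(merit(grid))]
best_robust = grid[np.argmin([toleranced_merit(x) for x in grid])]
print(f"nominal optimum x = {best_nominal:.2f}, toleranced optimum x = {best_robust:.2f}")
```

The nominal optimum falls in the narrow well. The toleranced objective instead prefers the wide well, because small errors there cost little. Analytically, the expected merit is about -0.33 at x = 0 versus about -0.77 at x = 2.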

FAQ 4: How can I generate a lithography mask for a custom diffractive optical element?

- Solution: Use an open-source, end-to-end software package, like the one developed by INL, which can translate an optical design directly into a binary or multilevel lithography file (GDSII, DXF) compatible with standard microfabrication tools [5].

Experimental Protocols & Methodologies

Protocol 1: Optimizing a Triplet Lens Using Open-Source Algorithms

This protocol is based on research comparing open-source optimization algorithms for optical design [2].

- Define the System: Start with a Cooke triplet lens design as a baseline (e.g., 50 mm effective focal length, f/4) [2].

- Construct Merit Function: Define a function that combines system constraints (e.g., effective focal length, total track length) with performance targets (e.g., wavefront error, spot size) [2].

- Select Optimization Variables: Typical variables include surface curvatures, element thicknesses, airspaces, and glass types [2].

- Choose and Run Algorithm:

- For local optimization, use the SLSQP algorithm.

- For global optimization, use Differential Evolution.

- Interface the algorithm with optical design software (e.g., Zemax OpticStudio) via Python to automate ray-tracing and merit function calculation [2].

- Validate Results: Analyze the optimized design using standard metrics like Modulation Transfer Function (MTF) and root-mean-square (RMS) wavefront error.
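The merit-function and constraint steps can be prototyped before a full ray tracer is connected. The sketch below is a paraxial stand-in, not the Zemax-coupled workflow of the protocol. It assumes:

- three thin lenses with fixed 10 mm air spaces;
- a common index of 1.62;
- the 50 mm EFL as an equality constraint, computed from ray-transfer (ABCD) matrices;
- the thin-lens Petzval sum as a simple performance target.

SLSQP then adjusts the three element powers.

```python
import numpy as np
from scipy.optimize import minimize

EFL_TARGET = 50.0                      # mm, the protocol's baseline focal length
GAPS = (10.0, 10.0)                    # mm, assumed fixed air spaces between thin lenses
INDEX = np.array([1.62, 1.62, 1.62])   # assumed refractive indices (used in the Petzval sum)


def system_matrix(powers):
    """Paraxial ray-transfer (ABCD) matrix of thin lenses (powers in 1/mm) separated by GAPS."""
    m = np.eye(2)
    for i, phi in enumerate(powers):
        m = np.array([[1.0, 0.0], [-phi, 1.0]]) @ m             # refraction at a thin lens
        if i < len(GAPS):
            m = np.array([[1.0, GAPS[i]], [0.0, 1.0]]) @ m      # transfer to the next lens
    return m


def total_power(powers):
    """System power in 1/mm; the C element of the ABCD matrix equals minus the power."""
    return -system_matrix(powers)[1, 0]


def petzval_sum(powers):
    """Thin-lens Petzval sum (1/mm); zero means no Petzval field curvature."""
    return float(np.sum(np.asarray(powers) / INDEX))


def objective(powers):
    """Performance target: the dimensionless Petzval sum, driven toward zero."""
    return (EFL_TARGET * petzval_sum(powers)) ** 2


def efl_constraint(powers):
    """System constraint: EFL equals EFL_TARGET (written via power for numerical stability)."""
    return EFL_TARGET * total_power(powers) - 1.0


x0 = np.array([0.03, -0.05, 0.03])     # positive-negative-positive starting powers
res = minimize(objective, x0, method="SLSQP",
               constraints=[{"type": "eq", "fun": efl_constraint}],
               bounds=[(0.0, 0.1), (-0.2, 0.0), (0.0, 0.1)],
               options={"ftol": 1e-12, "maxiter": 200})
efl = 1.0 / total_power(res.x)
print("element focal lengths (mm):", np.round(1.0 / res.x, 2), " EFL (mm):", round(efl, 3))
```

To move toward the structure described in the protocol, replace `objective` with a ray-traced wavefront or spot-size operand, and add thicknesses and glass choices as variables. The constraint dictionary keeps the same form.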

Protocol 2: Designing a Hologram Phase Mask for Pattern Projection

This methodology is enabled by open-source software for micro-optics [5].

- Define Target Pattern: Specify the desired far-field light distribution.

- Compute Phase Mask: Use an algorithm like Gerchberg-Saxton to iteratively calculate the phase profile that will generate the target pattern [5].

- Discretize for Fabrication: Convert the continuous phase profile into discrete levels compatible with your multilevel lithography process [5].

- Simulate Performance: Use the software's built-in simulation tools to validate optical field propagation in both near- and far-field ranges [5].

- Export Mask File: Generate and export the final lithography mask in GDSII or DXF format [5].
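The compute and discretize steps can be sketched in NumPy. The sketch assumes a square pixelated mask, uniform unit-amplitude illumination, and far-field (Fraunhofer) propagation modelled by a single FFT. The 64 x 64 grid and the square target are hypothetical, and the code does not reproduce the INL package's API. "Efficiency" here means the fraction of far-field power that falls inside the target.

```python
import numpy as np


def gerchberg_saxton(target_amplitude, n_iter=100, seed=0):
    """Phase-only mask whose far field (modelled as a 2-D FFT) approximates target_amplitude."""
    rng = np.random.default_rng(seed)
    phase = rng.uniform(0.0, 2.0 * np.pi, target_amplitude.shape)   # random starting phase
    for _ in range(n_iter):
        far = np.fft.fft2(np.exp(1j * phase))                # mask plane -> far field
        far = target_amplitude * np.exp(1j * np.angle(far))  # impose the target amplitude
        phase = np.angle(np.fft.ifft2(far))                  # back to the mask; keep phase only
    return np.mod(phase, 2.0 * np.pi)


def quantize_phase(phase, levels):
    """Map a [0, 2*pi) phase profile to integer lithography levels 0 .. levels-1."""
    step = 2.0 * np.pi / levels
    return np.round(phase / step).astype(int) % levels


def efficiency(phase, target_amplitude):
    """Fraction of far-field power that lands inside the target region."""
    far = np.abs(np.fft.fft2(np.exp(1j * phase))) ** 2
    return float(far[target_amplitude > 0].sum() / far.sum())


target = np.zeros((64, 64))
target[10:18, 20:28] = 1.0                          # hypothetical off-axis square pattern

phase = gerchberg_saxton(target)
levels2, levels8 = quantize_phase(phase, 2), quantize_phase(phase, 8)
e_cont = efficiency(phase, target)
e2 = efficiency(levels2 * np.pi, target)            # 2 levels: 0 or pi
e8 = efficiency(levels8 * (np.pi / 4.0), target)    # 8 levels: multiples of pi/4
print(f"continuous {e_cont:.2f}, 8-level {e8:.2f}, binary {e2:.2f}")
```

A binary (2-level) mask has a real-valued transmittance, so its far field is point-symmetric. At most half of the light can therefore reach an off-axis target, and the rest forms a mirrored twin image. Adding levels recovers most of the continuous-phase efficiency. The package-specific export to GDSII or DXF is not shown.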

Data Presentation

Performance of Open-Source Optimization Algorithms

The table below summarizes the performance of various open-source algorithms in optimizing a triplet lens design, providing a guide for algorithm selection [2].

Table 1: Comparison of Open-Source Optimization Algorithms for a Triplet Lens

| Algorithm Name | Algorithm Type | Key Performance Findings | Best Use Case |
|---|---|---|---|
| SLSQP | Local (gradient-based) | Fastest convergence; lowest number of merit function evaluations (2,958) [2] | Refining a design that is already close to its optimum. |
| Nelder-Mead Simplex | Local (derivative-free) | Reliable convergence; more merit function evaluations (12,635) [2] | Local optimization when gradients are difficult to compute. |
| Differential Evolution | Global (population-based) | Effective at escaping local minima; finds a good starting point for refinement [2] | Exploring new design forms when the starting point is poor. |
| SHG (Simple Genetic Algorithm) | Global (population-based) | Found a viable solution but was less efficient than Differential Evolution [2] | Global search where population-based methods are preferred. |

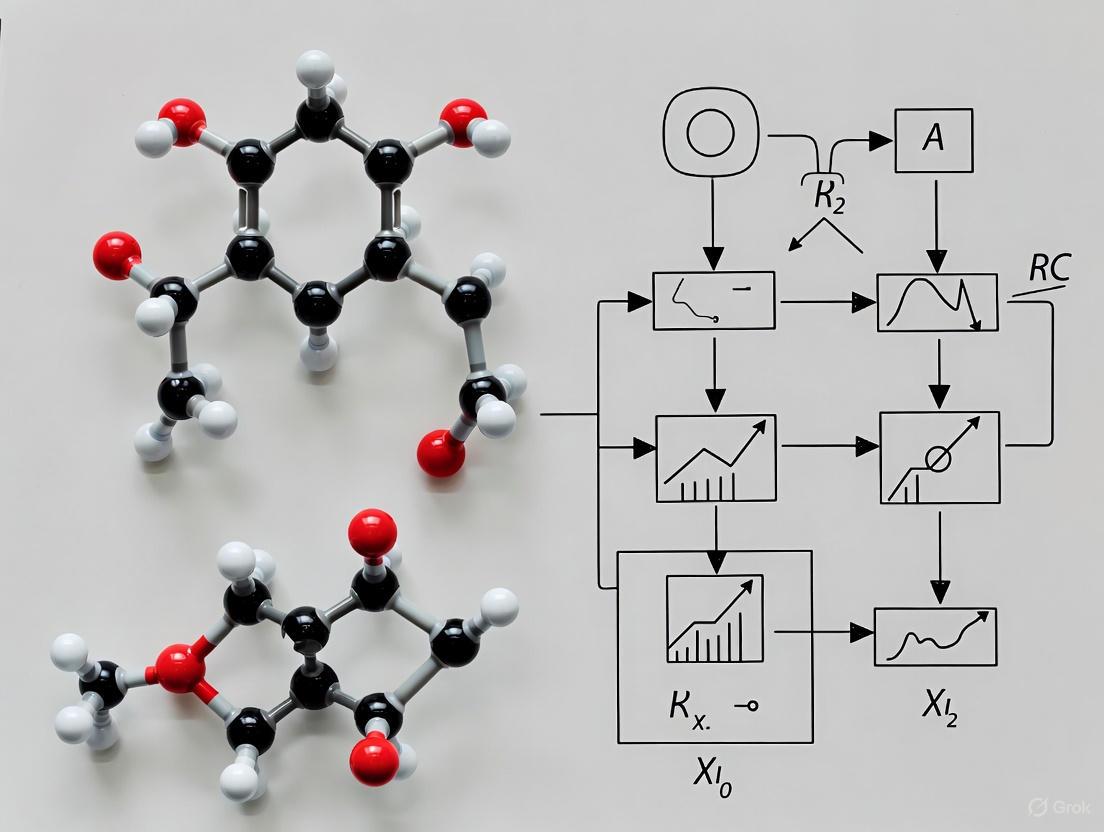

Visualizations

Figure: Workflow for Optimizing an Optical System

Figure: Integrated System Validation Approach

The Scientist's Toolkit

Table 2: Essential Tools and Materials for Optical System Development

| Tool / Material | Function / Explanation |
|---|---|
| Open-Source Python Software (INL) | An end-to-end tool for designing, simulating, and generating lithography masks for micro-optical elements [5]. |
| Optiland | An open-source optical design platform in Python for building, optimizing (with traditional or differentiable methods), and analyzing optical systems [6]. |
| Ansys Optics / Zemax OpticStudio | Commercial optical design software used for high-fidelity simulation, ray tracing, stray light analysis, and system integration [2] [4]. |
| Gerchberg-Saxton Algorithm | An iterative algorithm used to compute hologram phase masks for projecting specific patterns in the far field [5]. |
| Anti-Reflective (AR) Coatings | Thin films applied to optical surfaces to reduce reflections and stray light, thereby improving image contrast and system throughput [1] [7]. |
| Baffles & Light Shields | Physical structures placed inside an optical system to block stray light from reaching the image plane or detector [1]. |

This technical support center provides troubleshooting guides and FAQs for researchers and scientists, framed within a broader thesis on optimizing optical design with open-source algorithms.

Frequently Asked Questions

What are the primary functional differences between proprietary and free optical design software? Free software often provides core functionalities like ray tracing and basic optimization but may lack advanced features found in proprietary solutions. Key capabilities and their common limitations in free software are summarized below [8].

| Capability | Description | Common Limitations in Free Software |
|---|---|---|
| Ray Tracing | Simulates light path through optical systems; reveals aberrations and image formation [8]. | May struggle with complex geometries or wavelength-dependent effects [8]. |
| Aberration Analysis | Quantifies imperfections like spherical aberration and coma [8]. | May use simplified models, potentially underestimating aberration severity [8]. |
| Optimization Algorithms | Automatically adjusts design parameters to meet performance criteria (e.g., minimizing aberrations) [8]. | Algorithms may be less sophisticated, leading to longer computation times or suboptimal designs [8]. |
| System Simulation | Evaluates overall performance under various conditions, including thermal changes and component tolerances [8]. | Simulation speed can be slower; tolerance analysis may be rudimentary [8]. |

Which open-source or free software packages are recommended for optical design? Community feedback and software databases highlight several packages suitable for different needs [8] [9].

| Software Name | Key Characteristics | Noted Application Context |
|---|---|---|
| OpticsWorkbench | Free and open-source; integrated into FreeCAD; useful for teaching demos and basic geometry design [9]. | Creating teaching demos (e.g., compound microscope) [9]. |
| Geopter | Open-source; reported to be one of the closest open-source equivalents to Zemax [9]. | General optical system design [9]. |
| Pyrate | A Python package for optical design [9]. | Suitable for problems amenable to Python scripting [9]. |
| RayTracing | A reasonably intuitive and easy-to-use Python package [9]. | Optical system design [9]. |
| OpticsPy | Uses the refractive index database as its glass catalog [9]. | Promising for lens design and analysis [9]. |
| WinLens3D Basic | Free version of a commercial software [9]. | General optical design [9]. |
| 3DOptix | Free, cloud-based optical design and simulation tool; no installation required [9]. | Versatile optical designs using a component library [9]. |
| OSLO EDU | Free, educational version of OSLO (Lambda Research); limited to 10 surfaces [9]. | Basic design and optimization [9]. |

What are the common file compatibility challenges with free software? A significant limitation of free software is poor support for industry-standard file formats (e.g., Zemax and CODE V lens files). This can impede collaboration and data exchange. Designs may then need manual conversion or reconstruction, which introduces a risk of errors and inefficiencies. Using software that supports open or widely adopted formats is critical for project sustainability [8].

What accuracy limitations should I be aware of in free optical design software? The accuracy of simulations is paramount and can be limited in free software in several key areas [8]:

| Aspect of Accuracy | Potential Issue |
|---|---|
| Ray Tracing Precision | Inaccuracies can accumulate in high-numerical-aperture or complex systems, causing predicted performance to deviate from actual performance [8]. |
| Aberration Calculation Fidelity | Simplified models may misrepresent severity of aberrations, leading to designs that simulate well but perform poorly in practice [8]. |
| Material Model Accuracy | Incomplete refractive index data across wavelengths can lead to errors in chromatic aberration correction [8]. |
| Tolerance Analysis | Rudimentary tolerance analysis may not model complex manufacturing variations, resulting in an overly optimistic performance assessment [8]. |

Troubleshooting Guides

Guide 1: Troubleshooting Software Selection and Workflow Integration

Problem: Difficulty selecting appropriate open-source software and integrating it into an effective workflow.

Solution: Follow a structured methodology to evaluate and deploy software.

Step-by-Step Protocol:

- Define Problem Domain: Clearly outline your optical design problem (e.g., lens design, beam propagation, metasurface simulation) as open-source tools often focus on specific domains [9].

- Map Capabilities to Needs: Compare available software against the capabilities table above. For example, use Geopter for comprehensive lens design or RayTracing for more straightforward, Python-integrated tasks [9].

- Verify File Compatibility: Check software documentation for supported import/export formats to ensure seamless data exchange with collaborators or other tools [8].

- Establish a Validation Method: Given potential accuracy limitations, plan to validate simulation results with experimental measurements or benchmark against trusted software when possible [8].

Software Selection and Validation Workflow

Guide 2: Troubleshooting Common Optical Alignment Issues in Simulation and Experiment

Problem: Optical systems designed in simulation suffer from performance degradation when built, often due to alignment issues.

Solution: Understand and account for common alignment problems during the design and experimental validation phases [10].

Step-by-Step Protocol:

- Incorporate Tolerance Analysis: During the design phase, use available software tools to perform tolerance analysis. This identifies which component misalignments (decenter, tilt) are most critical to system performance [11] [8].

- Design for Stability: Opt for simple, modular optical designs to reduce "alignment complexity." Use robust mechanical mounts in your experiment to improve "alignment stability" against thermal fluctuations and vibration [10].

- Verify Alignment Experimentally: Use precise alignment techniques such as autocollimation, interferometry, or alignment lasers to correct for "misalignment errors" [10].

- Iterate and Optimize: Be prepared to make alignment trade-offs, balancing factors like cost, time, and performance to achieve a functional system [10].

Alignment Troubleshooting and Validation Cycle

Guide 3: Leveraging AI and Advanced Algorithms in Open-Source Contexts

Problem: How to achieve state-of-the-art optimization results, like those enabled by AI in proprietary tools, using open-source approaches.

Solution: Integrate modern algorithmic strategies such as AI-driven optimization and space-efficient design into your workflow [11] [12].

Step-by-Step Protocol:

- Implement Automated Optimization: Develop or utilize open-source algorithms for lens system optimization. AI techniques, such as evolutionary algorithms or gradient-based optimizers, can explore vast design spaces automatically to minimize aberrations, moving beyond tedious manual adjustment [11].

- Explore Inverse Design: Frame problems using inverse design, where desired optical performance is specified and the algorithm works backward to find a device structure. This can discover non-intuitive, high-performance designs [11].

- Apply Space-Efficiency Principles: For compact optical computing or on-chip systems, apply structural sparsity constraints inspired by neural network pruning. This can reduce device footprint to 1%-10% of conventional designs with minimal performance loss [12].

- Utilize Surrogate Models: To accelerate computationally expensive simulations, train machine learning surrogate models. These models act as fast approximations of physics-based simulations, enabling rapid design iteration and tolerance analysis [11].
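Here is a minimal sketch of the surrogate idea. It assumes SciPy's `RBFInterpolator` (SciPy 1.7 or later) as the surrogate, and a cheap hypothetical function in place of the slow simulation. Sample designs are evaluated once and the interpolant is fitted to them. The optimizer then queries only the interpolant, and the result is checked with a single true evaluation.

```python
import numpy as np
from scipy.interpolate import RBFInterpolator
from scipy.optimize import minimize


def expensive_merit(x):
    """Hypothetical stand-in for a slow physics simulation; x has shape (n_points, 2)."""
    x = np.atleast_2d(x)
    return (x[:, 0] - 0.3) ** 2 + 2.0 * (x[:, 1] + 0.2) ** 2 + 0.1 * np.sin(3.0 * x[:, 0])


rng = np.random.default_rng(0)
samples = rng.uniform(-1.0, 1.0, size=(60, 2))   # designs "simulated" once, up front
values = expensive_merit(samples)

surrogate = RBFInterpolator(samples, values)     # thin-plate-spline RBF (SciPy's default kernel)


def surrogate_merit(x):
    """Near-instant approximation used inside the optimizer."""
    return float(surrogate(x.reshape(1, -1))[0])


res = minimize(surrogate_merit, x0=np.zeros(2), method="L-BFGS-B", bounds=[(-1.0, 1.0)] * 2)
print("surrogate optimum:", res.x, " true merit there:", expensive_merit(res.x)[0])
```

In a real workflow the final check matters. If the surrogate and the simulation disagree, add the new point to `samples` and refit the surrogate.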

The Scientist's Toolkit: Essential Computational and Diagnostic Tools

This table details key computational and material solutions used in advanced optical design research.

| Item Name | Function / Explanation |
|---|---|
| AI Optimization Algorithms | Algorithms that automate the exploration of lens parameters to minimize aberrations and meet design targets, drastically reducing design time [11]. |
| Inverse Design Algorithms | Computational methods that start from a desired optical function and solve for the physical structure that will produce it, enabling novel component designs [11]. |
| Surrogate Models | Machine-learning models trained to approximate slow, physics-based simulations, allowing for near-instant performance evaluation during design exploration [11]. |
| Structural Sparsity Constraints | Design constraints motivated by wave physics that enforce local connectivity patterns, enabling dramatic size reductions in optical computing devices [12]. |
| Digital Diagnostic Monitoring (DDM) | A feature in modern optical transceivers that provides real-time data on parameters like transmit/receive power, crucial for troubleshooting physical links [13]. |
| Optical Time-Domain Reflectometer (OTDR) | A tool that provides a graphical "map" of an optical fiber, used to locate faults like breaks or poor splices in physical fiber optic links [13]. |

Optimizing an optical system involves adjusting its parameters to achieve the best possible performance, which is quantified by a "merit function." This function is a mathematical representation of the system's performance, often including factors like image sharpness, distortion, and aberration. The choice of optimization algorithm is critical, as it determines how efficiently the software can navigate the complex landscape of possible designs to find the optimum configuration. Broadly, these algorithms fall into two categories: local optimizers, which refine an existing design, and global optimizers, which search the entire parameter space for the best possible solution [14].

The transition to cloud computing has enabled the use of massively parallel processing for optical design problems. This approach allows researchers to evaluate countless system configurations simultaneously, making it feasible to apply global optimization algorithms that were previously too computationally expensive [15].

Core Algorithm Families: Local vs. Global

Local Optimization Algorithms

Local optimization algorithms are designed for refinement. They require a starting point—an initial optical design—and then perform a targeted search of the nearby parameter space to find a local minimum in the merit function. They are highly efficient at converging to the nearest optimum but can become trapped in a "good enough" solution if the design landscape is complex.

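- Principle of Operation: Gradient-based methods iteratively use derivatives of the merit function (its gradient, or the Jacobian of the individual operands) to choose a descent direction from the current design point. They then take a step in that direction to improve the merit function. Derivative-free local methods, such as Nelder-Mead, instead compare merit values at nearby trial designs.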

- Common Techniques: Damped Least Squares (DLS) is a classic and widely used local method in optical design due to its rapid convergence. Sequential Least Squares Programming (SLSQP) is another powerful local method for constrained optimization [14].

- Typical Workflow: A designer provides a starting lens design, and the local optimizer adjusts surface curvatures, thicknesses, and material types to minimize aberrations.
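Here is a bare-bones damped least squares (Levenberg-Marquardt-style) loop. It is written from the textbook update (J^T J + lambda I) dx = -J^T r and is not taken from any particular design program. A large damping lambda gives short steps close to the steepest-descent direction -J^T r, while a small lambda approaches the Gauss-Newton step. Everything problem-specific here is an assumption chosen only to give the loop something optical to solve: the two operands (a power target and the thin-lens achromat condition for a cemented doublet) and the approximate Abbe numbers.

```python
import numpy as np

V_CROWN, V_FLINT = 64.17, 36.37   # assumed Abbe numbers (N-BK7-like crown, F2-like flint)


def residuals(p):
    """Operand errors for a cemented thin doublet; p = element powers / target power."""
    return np.array([
        p[0] + p[1] - 1.0,                   # total power equals the target
        p[0] + p[1] * V_CROWN / V_FLINT,     # achromat: sum(p_i / V_i) = 0, scaled by V_CROWN
    ])


def numerical_jacobian(fun, x, h=1e-7):
    """Forward-difference Jacobian of the residual vector."""
    r0 = fun(x)
    jac = np.empty((r0.size, x.size))
    for j in range(x.size):
        dx = np.zeros_like(x)
        dx[j] = h
        jac[:, j] = (fun(x + dx) - r0) / h
    return jac


def damped_least_squares(fun, x0, damping=1e-2, n_iter=50):
    """Minimize sum(fun(x)**2) with damped least-squares steps."""
    x = np.asarray(x0, dtype=float)
    for _ in range(n_iter):
        r = fun(x)
        jac = numerical_jacobian(fun, x)
        # DLS step: solve (J^T J + damping * I) dx = -J^T r
        step = np.linalg.solve(jac.T @ jac + damping * np.eye(x.size), -jac.T @ r)
        if np.sum(fun(x + step) ** 2) < np.sum(r**2):
            x, damping = x + step, damping * 0.5   # accepted: trust the linear model more
        else:
            damping *= 10.0                         # rejected: shorter, more gradient-like step
    return x


p = damped_least_squares(residuals, x0=[0.5, 0.5])
print("normalized powers:", p)
```

Both operands here are linear in the powers, so the loop converges quickly to p1 = V1/(V1 - V2) ≈ 2.31 and p2 = -V2/(V1 - V2) ≈ -1.31. Real operands from a ray tracer are nonlinear, and that is where the adaptive damping matters.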

Global Optimization Algorithms

Global optimization algorithms explore a much wider range of the design space. They are less reliant on the quality of the starting point and are specifically designed to avoid becoming trapped in local minima. This makes them ideal for exploring novel optical configurations or when a good starting point is not known.

- Principle of Operation: Many of these algorithms maintain a population of candidate solutions, balancing exploitation of good designs with exploration of new regions. Others, such as simulated annealing, evolve a single candidate but accept occasional uphill moves.

- Common Techniques:

- Genetic Algorithms (GAs): Mimic natural selection by creating a population of lens systems, "mating" the best performers to create offspring, and introducing random "mutations" to explore new designs [16].

- Particle Swarm Optimization (PSO): A population-based method where candidate designs ("particles") move through the parameter space based on their own best-known position and the best-known position of the entire swarm.

- Simulated Annealing: Inspired by the annealing process in metallurgy, this method occasionally allows moves to worse designs, helping it escape local minima [14].
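A population-based global method needs little machinery, as this minimal particle swarm optimizer in NumPy shows. It follows the description above: each particle is pulled toward its own best-known position and toward the swarm's best. The inertia and acceleration coefficients (w = 0.7, c1 = c2 = 1.5) are common textbook-style choices assumed here, not tuned values. `merit` is any function that maps a design vector to a scalar.

```python
import numpy as np


def particle_swarm(merit, bounds, n_particles=30, n_iter=200, w=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimal particle swarm optimizer (global-best topology) on a box-bounded space."""
    rng = np.random.default_rng(seed)
    lo, hi = np.array(bounds, dtype=float).T
    pos = rng.uniform(lo, hi, size=(n_particles, lo.size))
    vel = np.zeros_like(pos)
    pbest, pbest_f = pos.copy(), np.array([merit(p) for p in pos])   # each particle's best
    g = pbest[np.argmin(pbest_f)].copy()                               # the swarm's best
    for _ in range(n_iter):
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        # Inertia + pull toward the particle's own best + pull toward the swarm's best.
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (g - pos)
        pos = np.clip(pos + vel, lo, hi)
        f = np.array([merit(p) for p in pos])
        improved = f < pbest_f
        pbest[improved], pbest_f[improved] = pos[improved], f[improved]
        g = pbest[np.argmin(pbest_f)].copy()
    return g, float(pbest_f.min())


def sphere(x):
    """Hypothetical smooth merit function used only for the demonstration."""
    return float(np.sum(np.asarray(x) ** 2))


best_x, best_f = particle_swarm(sphere, bounds=[(-5.0, 5.0)] * 3)
print(best_x, best_f)
```

For simulated annealing, SciPy already provides `scipy.optimize.dual_annealing`, which combines annealing with a local search.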

Hybrid Optimization Strategies

Given the strengths and weaknesses of both approaches, a highly effective strategy is to combine them. A hybrid workflow uses a global algorithm to perform a broad exploration of the design space and identify promising regions. The best result from the global search is then passed to a local optimizer for fine-tuning and rapid convergence to the nearest precise optimum [14] [16]. This approach balances comprehensive exploration with efficient refinement.

Table 1: Comparison of Local and Global Optimization Algorithms

| Feature | Local Optimization | Global Optimization |
|---|---|---|
| Primary Strength | High speed and precision for refining a design | Ability to escape local minima and discover novel designs |
| Dependence on Starting Point | High; requires a good starting design | Low; can start from a random or poor design |
| Risk of Trap in Local Minima | High | Low |
| Computational Cost | Lower per iteration | Significantly higher; benefits from parallel processing |
| Typical Methods | Damped Least Squares (DLS), SLSQP [14] | Genetic Algorithms, Particle Swarm, Simulated Annealing [14] [16] |
| Best Use Case | Final design refinement, small perturbations | Initial design phases, innovative system design |

Experimental Protocols & Workflows

A Standard Hybrid Optimization Workflow

The following diagram and protocol outline a robust method for optimizing an optical system using a hybrid global-local approach, as demonstrated in research [16].

Diagram 1: Hybrid Global-Local Optimization Workflow

Protocol: Hybrid Genetic and Bisection Optimization for Optical Systems

1. System Definition and Merit Function Setup

- Objective: Define the optical system's initial parameters and the metric for performance.

- Procedure:

- Input the starting optical layout (e.g., lens curvatures, thicknesses, materials).

- Define the merit function. This includes:

- Operands: Specify which optical properties to optimize (e.g., spot size, wavefront error, distortion).

- Weights: Assign a weight factor to each operand based on its importance in the final design [16].

- Set constraints (e.g., minimum edge thickness, maximum total length).
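Step 1 can be expressed as a weighted root-mean-square of operand errors, MF = sqrt(sum w_i (v_i - t_i)^2 / sum w_i). This is one common convention in lens-design programs and is assumed here. The operand values are hypothetical numbers that a ray tracer would normally supply. Because operands with different units are summed, the weights also carry the unit scaling.

```python
import math
from dataclasses import dataclass


@dataclass
class Operand:
    name: str       # what is being controlled
    value: float    # current value reported by the ray tracer
    target: float   # desired value
    weight: float   # relative importance; 0 disables the operand


def merit_function(operands):
    """Weighted RMS of operand errors: sqrt(sum(w * (v - t)**2) / sum(w))."""
    total_weight = sum(op.weight for op in operands)
    weighted_sq = sum(op.weight * (op.value - op.target) ** 2 for op in operands)
    return math.sqrt(weighted_sq / total_weight)


operands = [
    Operand("EFL (mm)", value=50.4, target=50.0, weight=1.0),
    Operand("RMS spot radius (um)", value=6.0, target=0.0, weight=0.5),
    Operand("total track (mm)", value=61.0, target=60.0, weight=0.2),
]
print(f"merit = {merit_function(operands):.4f}")
```

In this example the spot-size term dominates (0.5 x 36 versus 0.16 for the EFL error). Constraints such as minimum edge thickness are often added as further operands that contribute only when violated.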

2. Global Optimization via Genetic Algorithm

- Objective: Broadly explore the design space to find a region near the global optimum.

- Procedure:

- Configure GA Parameters: Set the population number (number of design variants) and the size of the search area for each variable [16].

- Run Iterations: Allow the genetic algorithm to run, applying selection, crossover, and mutation over multiple generations.

- Monitor for Abort Criterion: Continue until a predefined condition is met, such as a maximum number of generations or a lack of improvement in the merit function.
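The GA stage can be sketched in a few lines of NumPy. This is a generic real-coded GA assumed for illustration, with tournament selection, blend crossover, Gaussian mutation, and elitism. It is not the specific implementation in [16]. The parameters map onto the steps above as follows:

- `pop_size` is the population number;
- `bounds` defines the search area of each variable;
- `max_gen` and `patience` implement the two abort criteria.

```python
import numpy as np


def genetic_algorithm(merit, bounds, pop_size=40, max_gen=200, mutation_rate=0.1,
                      mutation_scale=0.1, patience=30, seed=0):
    """Minimal real-coded GA. Stops after max_gen generations or after `patience`
    consecutive generations without improvement of the best merit."""
    rng = np.random.default_rng(seed)
    lo, hi = np.array(bounds, dtype=float).T
    pop = rng.uniform(lo, hi, size=(pop_size, lo.size))
    fit = np.array([merit(ind) for ind in pop])
    best_f, stall = fit.min(), 0
    for gen in range(max_gen):
        # Selection: binary tournaments keep the parent with the lower merit.
        a, b = rng.integers(pop_size, size=(2, pop_size))
        parents = np.where((fit[a] < fit[b])[:, None], pop[a], pop[b])
        # Crossover: random blend of each parent with a shuffled partner.
        partners = parents[rng.permutation(pop_size)]
        alpha = rng.random((pop_size, 1))
        children = alpha * parents + (1.0 - alpha) * partners
        # Mutation: perturb a fraction of genes by a fraction of the search range.
        mask = rng.random(children.shape) < mutation_rate
        children += mask * rng.normal(0.0, mutation_scale * (hi - lo), children.shape)
        children = np.clip(children, lo, hi)
        # Elitism: carry the current best individual over unchanged.
        children[0] = pop[np.argmin(fit)]
        pop, fit = children, np.array([merit(ind) for ind in children])
        # Abort criterion: stop when the best merit stops improving.
        if fit.min() < best_f - 1e-12:
            best_f, stall = fit.min(), 0
        else:
            stall += 1
            if stall >= patience:
                break
    i = np.argmin(fit)
    return pop[i], float(fit[i]), gen + 1


def sphere(x):
    """Hypothetical merit function used only for the demonstration."""
    return float(np.sum(np.asarray(x) ** 2))


best_x, best_f, n_gen = genetic_algorithm(sphere, bounds=[(-5.0, 5.0)] * 3)
print(best_x, best_f, n_gen)
```

The returned best design is what step 3 would hand to the local optimizer. Raising `pop_size` or `mutation_rate` is the exploration adjustment mentioned in the troubleshooting FAQs.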

3. Local Refinement via Bisection Method

- Objective: Precisely converge to the local optimum from the design found by the GA.

- Procedure:

- Select Candidate: Take the best-performing design candidate from the GA's final population.

- Apply Local Optimizer: Use a deterministic local optimizer like the bisection method or Damped Least Squares to fine-tune the parameters [16].

- Check Convergence: Iterate until the merit function improvement falls below a specified tolerance, indicating a local minimum has been found.

The Scientist's Toolkit: Essential Open-Source Software

Table 2: Key Open-Source Software for Optical Design & Optimization

| Tool Name | Primary Function | Role in Optimization |

|---|---|---|

| Pyrate [9] | Optical ray tracing and design. | Provides the engine for evaluating the merit function of a given optical design during optimization. |

| OpticsPy [9] | Python-based optical design package. | Offers a scripting environment to define optimization problems and link ray tracing with algorithm libraries. |

| RayTracing [9] | A Python package for optical system design. | Used for rapid prototyping and analysis of optical systems within an optimization loop. |

| Geopter [9] | An open-source optical design tool. | Functions as a close, free alternative to commercial tools like Zemax, featuring various optimization algorithms. |

| Meep [17] | Finite-difference time-domain (FDTD) simulation. | Simulates light propagation in complex structures; often used to evaluate and optimize nanophotonic devices. |

| RSoft Device University Bundle [17] | Suite for photonic device simulation. | Includes "MOST," a multi-variable optimization tool, for automating design sweeps of photonic components. |

Troubleshooting Common Optimization Issues

FAQ 1: The optimizer is not improving my design. The merit function is stuck. What should I do?

- Problem: The algorithm is trapped in a local minimum.

- Solution:

- Switch to a Global Algorithm: If you started with a local optimizer, your initial design might be in a poor region of the design space. Restart the optimization using a global algorithm like a Genetic Algorithm or Particle Swarm Optimization to find a better starting point [14].

- Adjust Algorithm Parameters: For a Genetic Algorithm, try increasing the population size or the mutation rate to encourage more exploration [16].

- Check Constraints: Overly strict constraints can prevent the optimizer from finding a better solution. Review your boundary conditions and minimum/maximum values.

FAQ 2: The optimization process is taking too long. How can I speed it up?

- Problem: The computational cost of evaluating the merit function is too high.

- Solution:

- Leverage Parallel Computing: Cloud computing can be utilized to run optimization algorithms in a massively parallel way. Ensure your software and algorithm can distribute ray-tracing evaluations across multiple cores or machines [15].

- Simplify the Merit Function: Reduce the number of field points, wavelengths, or rays traced per evaluation to get faster feedback from the merit function.

- Use a Hybrid Approach: A pure global optimization can be slow to converge. Use a global optimizer for a limited number of iterations to get into the right region, then switch to a faster local optimizer for final convergence [14] [16].

FAQ 3: The optimized design is theoretically good but cannot be manufactured. What went wrong?

- Problem: The optimization did not account for real-world manufacturing tolerances.

- Solution:

- Perform Tolerancing Analysis: After optimization, use your software's tolerancing features to model how performance degrades with expected manufacturing variations (e.g., curvature errors, thickness variations, misalignments) [14].

- Include Tolerancing in the Merit Function: Some advanced workflows can incorporate tolerancing sensitivity directly into the merit function, pushing the optimizer toward designs that are not only high-performing but also robust.

FAQ 4: How do I choose the right weights for my merit function operands?

- Problem: The optimizer is sacrificing one important performance metric to improve another.

- Solution:

- Prioritize System Requirements: Assign higher weights to operands that are critical for your application (e.g., distortion for a metrology system, MTF for an imaging system).

- Iterate and Review: Optimization is often an iterative process. Run the optimizer, review the resulting design, and if a specific operand is underperforming, increase its weight and re-run the optimization [16].

Advanced Topics & Best Practices

Managing Optical Aberrations through Optimization

A primary goal of optical design optimization is to control and minimize aberrations. The optimizer works to balance various aberrations across the field of view and spectrum.

- Strategy: The optimization merit function should include specific operands that target known aberrations, such as spherical aberration, coma, and astigmatism. The weight of these operands can be adjusted based on their impact on the final image quality [14].

- Challenge: Correcting one aberration can often exacerbate another. The optimization algorithm's role is to find the best possible compromise given the system's constraints.

Algorithm Selection Guide

Use the following decision diagram to select an appropriate optimization strategy for your problem.

Diagram 2: Algorithm Selection Guide

This technical support center provides troubleshooting guides and FAQs for researchers using key Python libraries—NumPy, SciPy, and PyTorch—in optical design experiments. The content supports a thesis on optimizing optical design with open-source algorithms, offering practical solutions for computational challenges.

Frequently Asked Questions (FAQs)

Q1: My gradient-based optimization for a lens system is stuck in a poor local minimum. How can I improve the design?

A1: This is a common challenge in classical optimization. We recommend implementing a curriculum learning strategy, as used in the DeepLens framework [18].

- Methodology: Break down the full lens design task into milestones of increasing complexity. Start optimization with a small aperture size and a narrow field of view (FoV). Once a stable solution is found, strategically and gradually increase the aperture size and FoV in subsequent optimization runs [18].

- Libraries Used: This approach is effectively implemented using PyTorch, which provides the automatic differentiation needed for gradient-based optimization and allows for easy manipulation of model parameters during training [18].

- Additional Tip: Incorporate optical regularization terms in your loss function to prevent degenerate, non-physical lens geometries (e.g., self-intersecting surfaces) [18].
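The curriculum idea does not depend on PyTorch. What matters is solving an easy version of the problem first and warm-starting each harder stage from the previous solution. The sketch below is not the DeepLens implementation. It applies the idea to a hypothetical singlet (index 1.5, 100 mm thin-lens focal length, 5 mm thick) using a small meridional real-ray trace in NumPy and SciPy's Nelder-Mead. The variables are the shape factor q = (c1 + c2)/(c1 - c2) and the image-plane position. The semi-aperture grows from 2.5 mm to 10 mm across stages.

```python
import numpy as np
from scipy.optimize import minimize

N_GLASS, F_THIN, THICK = 1.5, 100.0, 5.0   # assumed index, thin-lens focal length (mm), thickness (mm)


def curvatures(q):
    """Surface curvatures (1/mm) for shape factor q at fixed thin-lens power."""
    k = 1.0 / (F_THIN * (N_GLASS - 1.0))   # c1 - c2
    return 0.5 * (q + 1.0) * k, 0.5 * (q - 1.0) * k


def refract(y, z, dy, dz, c, z_vertex, mu):
    """Intersect meridional rays with a spherical surface (curvature c, vertex at z_vertex)
    and refract them with Snell's law; mu is the index ratio n_before / n_after."""
    zr = z - z_vertex                              # ray start relative to the vertex
    b = c * (y * dy + zr * dz) - dz
    cc = c * (y * y + zr * zr) - 2.0 * zr
    s = cc / (np.sqrt(b * b - c * cc) - b)         # stable root of the ray-sphere quadratic
    y, zr = y + s * dy, zr + s * dz                # intersection point
    ny, nz = -c * y, 1.0 - c * zr                  # unit surface normal, pointing along +z
    cos_i = ny * dy + nz * dz
    cos_t = np.sqrt(1.0 - mu * mu * (1.0 - cos_i * cos_i))
    kappa = cos_t - mu * cos_i
    return y, zr + z_vertex, mu * dy + kappa * ny, mu * dz + kappa * nz


def spot_ms(params, semi_aperture, n_rays=32):
    """Mean-square ray height (um^2) at the image plane for an on-axis fan of parallel rays."""
    q, z_image = params
    c1, c2 = curvatures(q)
    y = np.linspace(0.0, semi_aperture, n_rays)
    z, dy, dz = np.full_like(y, -1.0), np.zeros_like(y), np.ones_like(y)
    with np.errstate(invalid="ignore", divide="ignore"):
        y, z, dy, dz = refract(y, z, dy, dz, c1, 0.0, 1.0 / N_GLASS)   # air -> glass
        y, z, dy, dz = refract(y, z, dy, dz, c2, THICK, N_GLASS)       # glass -> air
        y_img = y + (z_image - z) / dz * dy
        value = float(np.mean((1e3 * y_img) ** 2))
    return value if np.isfinite(value) else 1e12   # penalize missed surfaces or TIR


def curriculum(x0, apertures):
    """Solve easy (small-aperture) stages first, warm-starting each harder stage."""
    x = np.asarray(x0, dtype=float)
    for h in apertures:
        x = minimize(spot_ms, x, args=(h,), method="Nelder-Mead",
                     options={"xatol": 1e-8, "fatol": 1e-10, "maxiter": 4000}).x
    return x


x_start = np.array([0.0, 104.0])   # biconvex start; image plane near the paraxial focus
x_final = curriculum(x_start, apertures=[2.5, 5.0, 7.5, 10.0])
print(f"shape factor q = {x_final[0]:.3f}, image plane z = {x_final[1]:.3f} mm")
print(f"full-aperture MS spot: start {spot_ms(x_start, 10.0):.1f} um^2, "
      f"final {spot_ms(x_final, 10.0):.1f} um^2")
```

For a two-variable problem like this the curriculum is not strictly necessary. Its value grows with the number of variables and with the roughness of the full-aperture landscape. As a rough check, third-order theory puts the minimum-spherical-aberration shape factor for n = 1.5 with the object at infinity near q ≈ 0.71.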

Q2: How can I accelerate the simulation of large-scale optical systems for deeper design exploration?

A2: You can leverage hardware acceleration and scalable algorithms.

- GPU Acceleration: Utilize PyTorch for its seamless GPU support. Porting your core optical calculations (e.g., wave propagation, matrix multiplications) to PyTorch tensors can dramatically reduce computation time [19].

- Efficient Linear Algebra: For fundamental numerical computations, use the optimized routines in NumPy and SciPy, which are highly tuned for CPU performance. GPU execution is limited to some SciPy operations [20] [21].

- Parametric Design: Use parametric modeling tools, such as those in LaserCAD, to quickly iterate and visualize designs without recalculating everything from scratch [22].
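The angular spectrum method in NumPy is an example of a wave-propagation kernel worth moving to accelerated array code. It assumes a square grid and monochromatic scalar light, and it discards evanescent components. The method uses only FFTs and elementwise operations. The same structure should therefore map onto `torch.fft.fft2`/`torch.fft.ifft2` with GPU tensors, although that port is not shown. The Gaussian-beam numbers are hypothetical test values.

```python
import numpy as np


def angular_spectrum_propagate(field, dx, wavelength, z):
    """Propagate a square, sampled complex field by distance z (all lengths in one unit)."""
    n = field.shape[0]
    k = 2.0 * np.pi / wavelength
    kx = 2.0 * np.pi * np.fft.fftfreq(n, d=dx)                     # angular spatial frequencies
    kx2, ky2 = np.meshgrid(kx**2, kx**2, indexing="ij")
    kz_sq = k**2 - kx2 - ky2
    kz = np.sqrt(np.maximum(kz_sq, 0.0))
    transfer = np.where(kz_sq > 0.0, np.exp(1j * kz * z), 0.0)     # drop evanescent waves
    return np.fft.ifft2(np.fft.fft2(field) * transfer)


# Hypothetical test case: Gaussian beam, lengths in micrometres.
n, dx, wavelength, w0 = 256, 1.0, 0.5, 20.0
x = (np.arange(n) - n / 2) * dx
X, Y = np.meshgrid(x, x, indexing="ij")
beam = np.exp(-(X**2 + Y**2) / w0**2)
z_rayleigh = np.pi * w0**2 / wavelength
out = angular_spectrum_propagate(beam, dx, wavelength, z_rayleigh)


def rms_width(field):
    """Intensity-weighted RMS width along x."""
    intensity = np.abs(field) ** 2
    return float(np.sqrt(np.sum(intensity * X**2) / np.sum(intensity)))


print(rms_width(out) / rms_width(beam))   # Gaussian-beam theory predicts sqrt(2) at z = z_R
```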

Q3: I need to move from a theoretical optical model to a physical component. How can I generate the necessary files for microfabrication?

A3: This transition requires software that bridges optical design and nanofabrication.

- Solution: Use specialized open-source packages like the one developed by INL researchers [5].

- Workflow:

- Design: Create your desired optical function (e.g., a Fresnel lens or a phase mask for holography).

- Simulate: Validate the optical performance in both near-field and far-field using the package's simulation tools.

- Export: Generate lithography-ready mask files directly from the designed topography. The software can export industry-standard formats like GDSII and DXF, which are compatible with grayscale lithography tools [5].

Troubleshooting Guides

Issue 1: Managing Spatial Complexity in Optical Neural Networks (ONNs)

Problem: A free-space optical neural network (ONN) design is becoming physically too large (spatially complex) to be practical for its intended operation, such as image classification [12].

Diagnosis: The physical size of an optical computing system is governed by its "spatial complexity." The thickness t of a free-space optical device is fundamentally bounded by the "overlapping nonlocality" (the number of independent sideways communication channels C required), the free-space wavelength λ₀, the refractive index n, and the maximum ray angle θ [12]. The relationship is given by:

t ≥ max(C) * λ₀ / [2(1 - cos θ)n] (for a 1D system) [12].

An overly large design indicates inefficient use of these communication channels.
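To get a feel for the scale this bound implies, the function below evaluates the 1D expression exactly as written above. The numbers in the usage line are illustrative assumptions, not values taken from [12].

```python
import math

def min_thickness_1d(max_channels, wavelength, n, theta_max_rad):
    """Lower bound on device thickness: t >= max(C) * λ0 / [2 (1 - cos θ) n]."""
    return max_channels * wavelength / (2 * (1 - math.cos(theta_max_rad)) * n)

# Illustrative: max(C) = 100 channels, λ0 = 0.5 µm, n = 1.5, θ = 30°
t_um = min_thickness_1d(100, 0.5, 1.5, math.radians(30))
print(f"t >= {t_um:.1f} µm")   # t >= 124.4 µm
```

The bound is linear in max(C), so halving the number of overlapping channels halves the minimum thickness. That is why the solution targets this parameter.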

Solution: Apply a physics-informed neural network pruning technique [12].

- Define the ONN: Model your optical system as a neuromorphic network (e.g., an Optical Neural Network) whose kernel operator is represented by a matrix D [12].

- Impose Structural Sparsity: During training, enforce a "local sparse" structure on the kernel. This constraint limits the connections between input and output ports, effectively reducing the max(C) parameter [12].

- Prune and Retrain: Use standard neural network pruning methods to remove non-essential connections within this sparsity constraint, then retrain the network to recover accuracy [12].

- Outcome: This method has been shown to reduce the device footprint to 1%-10% of conventional designs with minimal performance loss [12].
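The sparsity and pruning steps can be pictured on a plain matrix. The sketch below is a generic illustration, not the method of [12]. It builds a banded "local sparse" mask that only lets output port i connect to inputs within a hypothetical half-width, then applies magnitude pruning inside that band. Retraining after pruning is left to the training framework.

```python
import numpy as np

def local_band_mask(n_out, n_in, half_width):
    """True where |i - j| <= half_width, i.e. only nearby ports may connect."""
    i = np.arange(n_out)[:, None]
    j = np.arange(n_in)[None, :]
    return np.abs(i - j) <= half_width

def prune_within_band(D, half_width, keep_fraction):
    """Zero out-of-band entries, then keep only the largest-magnitude in-band ones."""
    band = local_band_mask(*D.shape, half_width)
    in_band = np.abs(D[band])
    k = max(1, int(round(keep_fraction * in_band.size)))
    threshold = np.sort(in_band)[-k]          # k-th largest in-band magnitude
    mask = band & (np.abs(D) >= threshold)
    return D * mask, mask

rng = np.random.default_rng(0)
D = rng.normal(size=(64, 64))                 # stand-in for a trained kernel matrix
D_pruned, mask = prune_within_band(D, half_width=4, keep_fraction=0.5)
```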

Issue 2: High Memory Usage During Differentiable Ray Tracing

Problem: Differentiable ray tracing, used for end-to-end lens design optimization, consumes excessive GPU memory, limiting model resolution and complexity [18].

Diagnosis: This occurs because tracking the gradients (for backward pass) of a high-resolution ray bundle through multiple optical surfaces requires storing a very large computation graph.

Solution: Implement memory-control strategies [18].

- Gradient Checkpointing: Instead of storing all intermediate activations, save only select checkpoints during the forward pass. Recompute the intermediate values between checkpoints during the backward pass. This trades computation time for reduced memory usage.

- Mixed-Precision Training: Use lower-precision data types (e.g., 16-bit floating point, float16) for certain operations while keeping critical parts in full precision (float32) to maintain stability.

- Library: These strategies are natively supported in deep learning frameworks like PyTorch.
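Here is a minimal PyTorch sketch of gradient checkpointing. It assumes a toy `Surface` module as a stand-in for one differentiable surface interaction; the module and its math are hypothetical and are not DeepLens code. `torch.utils.checkpoint.checkpoint` discards each surface's intermediate activations during the forward pass and recomputes them during backward. Mixed precision (via `torch.autocast`) is not shown.

```python
import torch
from torch.utils.checkpoint import checkpoint

class Surface(torch.nn.Module):
    """Hypothetical stand-in for one differentiable surface interaction."""

    def __init__(self):
        super().__init__()
        self.curvature = torch.nn.Parameter(torch.tensor(0.01))

    def forward(self, rays):
        # Toy smooth per-ray update; a real tracer would intersect and refract here.
        return rays + self.curvature * torch.sin(rays)

surfaces = torch.nn.ModuleList([Surface() for _ in range(8)])

def trace(rays, use_checkpoint=True):
    for surface in surfaces:
        if use_checkpoint:
            # Activations inside `surface` are not stored; they are recomputed in backward.
            rays = checkpoint(surface, rays, use_reentrant=False)
        else:
            rays = surface(rays)
    return rays

torch.manual_seed(0)
rays = torch.randn(10_000, 2)          # hypothetical ray bundle, (x, y) per ray
loss = trace(rays).pow(2).mean()
loss.backward()                        # gradients land in each surface.curvature.grad
```

Checkpointing changes only memory use and compute time, not the result. Gradients with and without it should match.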

Experimental Protocols

Protocol 1: End-to-End Optimization of an Extended Depth-of-Field (EDoF) Lens

This protocol outlines the methodology for designing a computational imaging system where the optics and the processing algorithm are co-optimized [18].

- Objective: Automatically design a compact EDoF lens and associated image reconstruction network.

- Hypothesis: A curriculum learning strategy combined with deep learning optimization can overcome local minima and discover novel, high-performance lens designs from scratch.

1. Materials & Software (The Research Reagent Solutions)

| Item Name | Function in the Experiment | Library/Framework |
|---|---|---|
| DeepLens Framework | Provides the core environment for automated lens design using differentiable ray tracing [18]. | PyTorch |
| Differentiable Ray Tracer | Calculates light propagation through optical surfaces and enables gradient flow for optimization [18]. | PyTorch |
| Curriculum Scheduler | Manages the progressive increase of aperture size and field of view during training [18]. | Custom Scripts |
| Optical Regularizer | Penalizes non-physical lens geometries in the loss function to ensure manufacturable designs [18]. | PyTorch |
| Image Reconstruction CNN | A neural network that deconvolves the captured EDoF image; co-optimized with the lens [18]. | PyTorch |

2. Workflow Diagram

3. Step-by-Step Instructions

1. Initialization: Start the optimization with flat optical surfaces [18].

2. Set Curriculum Milestone: Begin with a small aperture and a narrow field of view [18].

3. Forward Simulation:

   - Perform differentiable ray tracing for a set of training scenes and depths [18].

   - Simulate the image formation on the sensor.

4. Image Reconstruction: Pass the simulated, blurry image through the trainable reconstruction CNN [18].

5. Loss Calculation: Compute the loss between the reconstructed image and the ground truth. Add optical regularization terms to the loss to penalize invalid lens shapes [18].

6. Backward Pass & Optimization: Use PyTorch's autograd to compute gradients and update the lens parameters and the CNN parameters [18].

7. Curriculum Update: Once the loss has converged for the current milestone, increase the aperture size and/or FoV according to the scheduler and return to Step 3 [18].

8. Termination: The process completes when the final milestone is reached and the performance target is met [18].
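The curriculum logic (milestones plus a convergence check) can be sketched independently of the optics. The class below is a hypothetical scheduler, not DeepLens's API. It holds a list of (aperture, field-of-view) milestones and advances when the loss has not improved by more than a relative tolerance for `patience` consecutive steps. The milestone values are placeholders.

```python
from dataclasses import dataclass, field

@dataclass
class CurriculumScheduler:
    """Hypothetical milestone scheduler that grows aperture and field of view."""

    milestones: list            # [(aperture_mm, half_fov_deg), ...], easiest first
    patience: int = 5           # non-improving steps before a milestone counts as converged
    rel_tol: float = 1e-3       # relative improvement below this counts as no improvement
    stage: int = field(default=0, init=False)
    best: float = field(default=float("inf"), init=False)
    stale: int = field(default=0, init=False)

    @property
    def current(self):
        """(aperture, field of view) of the active milestone."""
        return self.milestones[self.stage]

    @property
    def finished(self):
        """True once the final milestone has also converged."""
        return self.stage == len(self.milestones) - 1 and self.stale >= self.patience

    def step(self, loss):
        """Record one loss value; return True if training moved to the next milestone."""
        if loss < self.best * (1 - self.rel_tol):
            self.best, self.stale = loss, 0
        else:
            self.stale += 1
        if self.stale >= self.patience and self.stage < len(self.milestones) - 1:
            self.stage += 1
            self.best, self.stale = float("inf"), 0   # fresh baseline for the harder stage
            return True
        return False


sched = CurriculumScheduler(milestones=[(2.0, 5.0), (4.0, 10.0), (8.0, 20.0)], patience=3)
for loss in [1.0, 0.5, 0.5, 0.5, 0.5]:
    if sched.step(loss):
        print("advanced to", sched.current)
```

Resetting the baseline at each new milestone matters. The loss typically jumps when the aperture grows, and comparing against the previous stage's best value would otherwise look like a lack of progress.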

Protocol 2: From Optical Function to Lithography Mask Generation

This protocol describes the process of designing a micro-optical element and generating the files required for its fabrication [5].

- Objective: Create a functional micro-optical element (e.g., a diffractive lens) and produce its corresponding binary or multilevel lithography mask.

- Hypothesis: An open-source Python package can provide an end-to-end solution for the design, simulation, and mask generation of micro-optical elements, democratizing access to advanced optics fabrication.

1. Materials & Software (The Research Reagent Solutions)

| Item Name | Function in the Experiment | Library/Framework |
|---|---|---|
| INL Micro-Optics Package | The core open-source software for design, simulation, and mask generation [5]. | Python |
| Phase/Height Profile Generator | Creates the computational design for optical elements like Fresnel or Alvarez lenses [5]. | Custom (INL Package) |
| Gerchberg-Saxton Algorithm | A computational method for generating hologram phase masks for pattern projection [5]. | Custom (INL Package) |
| Near/Far-Field Simulator | Validates the optical performance of the designed element before fabrication [5]. | Custom (INL Package) |
| Mask Exporter | Converts the computed design into industry-standard lithography file formats [5]. | Custom (INL Package) |
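The Gerchberg-Saxton entry in the table refers to an iterative phase-retrieval loop. Below is a minimal NumPy sketch. It assumes a uniformly illuminated, phase-only element and a far-field (Fraunhofer) target computed with a single FFT. The function names and parameters are hypothetical and do not reflect the INL package's interface.

```python
import numpy as np

def gerchberg_saxton(target_amplitude, n_iter=100, seed=0):
    """Return a phase mask whose far field approximates target_amplitude."""
    rng = np.random.default_rng(seed)
    phase = rng.uniform(-np.pi, np.pi, target_amplitude.shape)   # random starting phase
    for _ in range(n_iter):
        far = np.fft.fft2(np.exp(1j * phase))                    # element -> far field
        far = target_amplitude * np.exp(1j * np.angle(far))       # impose target amplitude
        near = np.fft.ifft2(far)                                  # far field -> element
        phase = np.angle(near)                                    # keep phase (uniform illumination)
    return phase

def far_field_correlation(phase, target_amplitude):
    """Pearson correlation between achieved and target far-field amplitudes."""
    achieved = np.abs(np.fft.fft2(np.exp(1j * phase)))
    return np.corrcoef(achieved.ravel(), target_amplitude.ravel())[0, 1]

# Target: a bright square ring in the (unshifted) Fourier plane.
n = 64
target = np.zeros((n, n))
target[16:48, 16:48] = 1.0
target[24:40, 24:40] = 0.0
mask_phase = gerchberg_saxton(target)
print(f"far-field correlation: {far_field_correlation(mask_phase, target):.3f}")
```

For display, `np.fft.fftshift` moves the zero-frequency term to the centre of the far-field image.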

2. Workflow Diagram

3. Step-by-Step Instructions

1. Define Function: Specify the intended optical function (e.g., focus light to a point, project a specific pattern) [5].

2. Generate Profile: Use the software's generators (e.g., for a Fresnel lens) or algorithms (e.g., Gerchberg-Saxton for holograms) to compute the required surface relief or phase profile [5].

3. Discretize into Masks: The package will automatically discretize the continuous topography into the required number of binary or multilevel mask layers compatible with specific microfabrication processes like grayscale lithography [5].

4. Simulate and Validate: Use the integrated simulation tools to model the optical fields in the near- and far-field to ensure the design performs as expected [5].

5. Iterate: If performance is unsatisfactory, adjust the design parameters and return to Step 2.

6. Export Mask: Once validated, export the final mask design as a GDSII or DXF file, which is ready for use in standard microfabrication tools [5].
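The profile-generation and discretization steps can be illustrated with a radially symmetric diffractive lens. This NumPy sketch makes three assumptions:

- a hyperbolic focusing phase wrapped to [0, 2π);
- uniform quantization into a chosen number of phase levels;
- a surface-relief height of h = φλ / (2π(n − 1)).

The function names and parameter values are illustrative, not the INL package's API. GDSII/DXF export is out of scope here.

```python
import numpy as np

def diffractive_lens_phase(r, wavelength, focal_length):
    """Wrapped phase in [0, 2π) of a lens focusing a normally incident plane wave."""
    phi = -2 * np.pi / wavelength * (np.sqrt(r**2 + focal_length**2) - focal_length)
    return np.mod(phi, 2 * np.pi)

def quantize_phase(phase, levels):
    """Snap a wrapped phase to `levels` equally spaced values in [0, 2π)."""
    step = 2 * np.pi / levels
    return (np.round(phase / step) % levels) * step   # the top level wraps back to 0

def phase_to_height(phase, wavelength, n_material):
    """Relief height (same units as wavelength) that imparts `phase` in transmission."""
    return phase * wavelength / (2 * np.pi * (n_material - 1))

# Illustrative values: 633 nm, f = 5 mm, 1 mm aperture, 1 µm radial sampling, n = 1.46.
wavelength, f, n_mat = 0.633e-6, 5e-3, 1.46
r = np.arange(0, 0.5e-3, 1e-6)
phase8 = quantize_phase(diffractive_lens_phase(r, wavelength, f), levels=8)
height = phase_to_height(phase8, wavelength, n_mat)
```

With 2^N levels, the quantized profile can in principle be fabricated with N binary masks or with a single grayscale exposure. The choice depends on the process the package targets.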

Quantitative Comparison of Key Python Libraries

The table below summarizes the core quantitative data for the key Python libraries discussed, providing a clear comparison of their roles and metrics in optical design.

Table 1: Core Python Libraries for Optical Design and Scientific Computing

| Library | Primary Role in Optical Design | Key Metrics (GitHub Stars / Downloads) | Example Use-Case in Optics |
|---|---|---|---|
| NumPy [20] | Foundation for numerical computation; handling multidimensional arrays and linear algebra. | 25K Stars / 2.4B Downloads [20] | Representing wavefronts, sensor data, and performing Fourier transforms for wave propagation. |
| SciPy [21] | Building on NumPy with advanced algorithms for optimization, integration, and linear algebra. | Not reported | Solving optimization problems for lens parameters, signal processing for optical coherence tomography. |
| PyTorch [23] [21] | Enabling differentiable optical simulations and end-to-end optimization of optical systems and AI models. | Not reported | Differentiable ray tracing (DeepLens) [18], implementing and training Optical Neural Networks (ONNs) [12]. |
| Scikit-learn [20] | Providing traditional machine learning tools for data analysis and pattern recognition. | 57K Stars / 703M Downloads [20] | Classifying image quality metrics, clustering types of optical aberrations in a dataset. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the key differences between local and cloud-based computational approaches for optical design optimization?

Local computing uses a single workstation or desktop computer, where all ray tracing, analysis, and optimization processes occur on local hardware. This approach offers immediate feedback and direct control but is limited by the computer's processing power, memory, and storage capacity. Cloud-based computing distributes these tasks across multiple virtual machines or processors in the cloud, enabling massively parallel processing that can significantly accelerate optimization, particularly for complex systems with many variables or when running multiple design variations simultaneously [2].

FAQ 2: Which open-source optimization algorithms are most suitable for different types of optical design problems?

The choice of algorithm depends on your specific design problem and available computational resources. For local optimization where a reasonable starting point is known, gradient-based algorithms like SLSQP are efficient, requiring fewer merit function calculations [2]. For global optimization problems where the optimal solution isn't nearby, algorithms like Differential Evolution or SHGO perform better at exploring the entire design space. Population-based algorithms like CMA-ES can be implemented with generalized island models for parallelization, making them well-suited for cloud environments [2].
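As a rough illustration of the local/global distinction, the sketch below runs three SciPy routines on a toy two-variable merit function with two basins: `minimize(method="SLSQP")`, `differential_evolution` and `shgo`. The function is a hypothetical stand-in for a lens merit function. On a real design, each evaluation would be a ray trace.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize, shgo

def toy_merit(x):
    """Double well in x[0] (global minimum near x[0] = -1.04), quadratic in x[1]."""
    return (x[0] ** 2 - 1) ** 2 + 0.3 * x[0] + x[1] ** 2

bounds = [(-2.0, 2.0), (-2.0, 2.0)]

# Local: typically settles in the basin around the start point (here x[0] near +1).
local = minimize(toy_merit, x0=[1.0, 0.5], method="SLSQP", bounds=bounds)
# Global: explore the whole bounded design space.
de = differential_evolution(toy_merit, bounds, seed=1)
gl = shgo(toy_merit, bounds, n=64, sampling_method="sobol")

for name, res in [("SLSQP", local), ("DE", de), ("SHGO", gl)]:
    print(f"{name:6s} x={np.round(res.x, 3)} f={res.fun:.4f} nfev={res.nfev}")
```

Comparing `nfev` across the three runs gives a first feel for the cost of global exploration relative to a single local descent.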

FAQ 3: How can I determine when to transition from desktop to cloud-based computing for my optical design projects?

Consider transitioning to cloud-based computing when you encounter: (1) optimization runtimes exceeding practical timeframes on your desktop, (2) designs with numerous variables (e.g., multi-element systems with high-order aspherical surfaces), (3) requirements for extensive tolerance analyses, or (4) needs for running multiple optimizations simultaneously with different parameters [2] [8]. The transition is also warranted when implementing advanced techniques like integrating manufacturing tolerances directly into optimization or incorporating computational photography steps at the design stage [2].

FAQ 4: What file compatibility issues should I anticipate when using open-source optical design tools?

Many free optical design programs have limited support for proprietary file formats used in commercial software like Zemax or CODE V [8]. This can impede collaboration and data exchange. To mitigate these issues: (1) use standard interchange formats like STEP or IGES when possible, (2) verify specific import/export capabilities before selecting software, and (3) maintain documentation of optical specifications in standardized formats to facilitate manual recreation if necessary [8].

FAQ 5: How do optimization algorithms in open-source tools compare to proprietary implementations in commercial optical design software?

Open-source algorithms provide flexibility and transparency but may lack the specialized refinements of commercial implementations. Proprietary algorithms in software like CODE V, OpticStudio, and SYNOPSYS have been specifically tuned for optical design problems over many years [2]. For instance, SYNOPSYS implements the PSD III method claimed to be the fastest lens optimization available, while CODE V has introduced Step Optimization for faster convergence [2]. Open-source alternatives can achieve good results but may require more computational time or parameter tuning.

Troubleshooting Guides

Problem 1: Slow Optimization Convergence

Symptoms: Optimization processes take excessively long to converge to a solution, with minimal improvement in merit function value over many iterations.

Solution:

- Verify Algorithm Selection: Ensure you're using an appropriate algorithm for your problem type. For local optimization, use SLSQP or Nelder-Mead Simplex. For global optimization, use Differential Evolution or SHGO [2].

- Adjust Algorithm Parameters: For population-based algorithms, increase population size for more complex problems. For gradient-based methods, adjust tolerance settings.

- Simplify Merit Function: Reduce unnecessary operands in your merit function that may not significantly impact final performance.

- Check Variable Constraints: Review constraints to ensure they're not overly restrictive and preventing convergence.

- Utilize Parallel Processing: Implement population-based algorithms with island models for parallelization on cloud platforms [2].

Problem 2: Insufficient Computational Resources

Symptoms: Software crashes, excessive swap file usage, or dramatically slowed performance during ray tracing or optimization.

Solution:

- Desktop Scaling:

- Upgrade RAM to handle larger optical systems and ray sets

- Utilize multi-core processors for parallel ray tracing

- Employ solid-state drives for faster data access

- Cloud Scaling:

- Implement parallelizable optimization algorithms that can run on scalable cloud computing systems [2]

- Use generalized island models for population-based algorithms

- Distribute different design variations across multiple cloud instances

- Algorithm Optimization:

- For local optimization, the SLSQP algorithm requires fewer merit function calculations (e.g., 2,958 vs. 12,635 for Nelder-Mead in testing) [2]

- Adjust ray density settings based on required precision

Problem 3: Poor Optimization Results

Symptoms: Optimization fails to produce usable designs, gets stuck in local minima, or produces designs that cannot be manufactured.

Solution:

- Implement Hybrid Approach: Use global optimization to identify promising regions of the solution space, then refine with local optimization [2].

- Multi-start Strategy: Run multiple optimizations from different starting points to identify the best solution.

- Review Manufacturing Constraints: Ensure all physical constraints (center thickness, edge thickness, air spacing) are properly implemented in the merit function.

- Adjust Merit Function Weights: Rebalance weights to prioritize critical performance parameters.

- Verify Variable Selection: Ensure appropriate parameters are set as variables for the optimization problem.
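The hybrid approach can be sketched with SciPy in two stages. A short, unpolished `differential_evolution` search first locates a promising region. SLSQP then refines from that point, enforcing a constraint as it does so. The merit function and the constraint `x[0] + x[1] <= 1` are hypothetical placeholders for a real merit function and a manufacturing limit.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize

def toy_merit(x):
    """Hypothetical multimodal merit: cosine ripples on a quadratic bowl, minimum 0 at x = 0."""
    return float(np.sum(x**2 - 2.0 * np.cos(2 * np.pi * x)) + 2.0 * x.size)

bounds = [(-3.0, 3.0)] * 3
# Stand-in manufacturing limit x0 + x1 <= 1, written as g(x) >= 0 for SLSQP.
constraints = [{"type": "ineq", "fun": lambda x: 1.0 - x[0] - x[1]}]

# Stage 1: coarse global exploration (no gradient polish, loose tolerance).
coarse = differential_evolution(toy_merit, bounds, tol=1e-2, polish=False, seed=3)

# Stage 2: constrained local refinement from the best global candidate.
fine = minimize(toy_merit, coarse.x, method="SLSQP", bounds=bounds,
                constraints=constraints)
print(f"global stage f={coarse.fun:.3e}, refined f={fine.fun:.3e}, x={np.round(fine.x, 6)}")
```

Turning off DE's built-in polish keeps the two roles separate, so the local stage can use whatever constraints and tolerances the real problem needs.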

Problem 4: Software Compatibility and Data Transfer Issues

Symptoms: Inability to import/export designs between different software platforms, loss of data during transfer, or missing features after conversion.

Solution:

- Use Standard File Formats: Convert designs to STEP, IGES, or SAT formats when moving between platforms [24] [8].

- Maintain Design Documentation: Keep detailed records of optical specifications, materials, and performance data independent of proprietary file formats.

- Verify Critical Parameters: After conversion, manually check that all optical surfaces, materials, and system parameters transferred correctly.

- Implement Intermediate Scripts: Develop Python or other scripts to translate between different software data formats [2].

Experimental Protocols

Protocol 1: Computational Resource Benchmarking

Objective: Quantify the performance of different computational setups for specific optical design tasks to inform resource allocation decisions.

Methodology:

- Select a standardized test case (e.g., triplet lens optimization with defined variables and constraints) [2].

- Establish performance metrics: optimization time, number of iterations, final merit function value, and computational resource utilization.

- Run identical optimization problems on:

- Standard desktop workstation (baseline)

- High-performance desktop with maximum resources

- Cloud-based single instance comparable to desktop

- Cloud-based parallel processing configuration

- Execute each configuration multiple times to establish statistical significance.

- Document resource scaling factors and performance improvements.

Expected Outcomes: Quantitative comparison of computational approaches informing optimal resource allocation for different project types.
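Below is a minimal timing harness for the repeated-runs step. It assumes each computational configuration can be wrapped as a Python callable that runs one optimization and returns a SciPy `OptimizeResult`. The `benchmark` helper is hypothetical, and SciPy's Rosenbrock function stands in for the triplet test case.

```python
import statistics
import time

from scipy.optimize import minimize, rosen

def benchmark(run_once, repeats=5):
    """Run `run_once` several times; summarise wall time, evaluations and final merit."""
    times, nfevs, merits = [], [], []
    for _ in range(repeats):
        start = time.perf_counter()
        result = run_once()
        times.append(time.perf_counter() - start)
        nfevs.append(result.nfev)
        merits.append(result.fun)
    return {
        "time_mean_s": statistics.mean(times),
        "time_stdev_s": statistics.stdev(times) if repeats > 1 else 0.0,
        "nfev_mean": statistics.mean(nfevs),
        "best_merit": min(merits),
    }

summary = benchmark(lambda: minimize(rosen, [1.3, 0.7], method="SLSQP"))
print(summary)
```

On a cloud configuration, the same harness would wrap whatever call submits and collects the remote job. Queueing and data-transfer time are then included in the wall-clock figure.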

Protocol 2: Open-source Algorithm Performance Evaluation

Objective: Systematically evaluate different open-source optimization algorithms for optical design applications.

Methodology:

- Define test optical system with complete specifications (e.g., Cooke triplet with 50 mm effective focal length, f/4, 12.5 mm entrance pupil diameter) [2].

- Implement multiple optimization algorithms using Python programming language interfaced with optical design software [2].

- For each algorithm, track:

- Convergence rate (merit function vs. iterations)

- Computational time per iteration

- Final optical performance achieved

- Stability and reliability across multiple runs

- Test both local (SLSQP, Nelder-Mead Simplex) and global (Differential Evolution, SHGO) optimization algorithms [2].

- Compare results against proprietary algorithms when possible.

Expected Outcomes: Algorithm selection guidelines for different optical design scenarios based on quantitative performance data.
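For the convergence-rate metric, SciPy's `minimize` accepts a `callback` that is called once per iteration with the current parameter vector. Recording the merit value there yields a merit-versus-iteration curve for each algorithm. The sketch below uses SciPy's Rosenbrock function as a stand-in merit function, not a Cooke triplet.

```python
from scipy.optimize import minimize, rosen

def run_with_history(method, x0):
    """Minimise `rosen` and record the merit value after every iteration."""
    history = []
    result = minimize(rosen, x0, method=method,
                      callback=lambda xk: history.append(float(rosen(xk))))
    return result, history

x0 = [1.3, 0.7]
for method in ("SLSQP", "Nelder-Mead"):
    result, history = run_with_history(method, x0)
    print(f"{method:12s} iterations={len(history):4d} nfev={result.nfev:5d} "
          f"final={result.fun:.2e}")
```

Plotting each `history` list on a log scale makes differences in convergence rate visible at a glance.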

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Optical Design Research |
|---|---|
| Open-Source Optimization Algorithms | Provides the core mathematical routines for automatically improving optical designs by minimizing aberrations while satisfying constraints [2] [8]. |
| Python Programming Interface | Enables customization and automation of optical design workflows, allowing researchers to implement and test novel optimization approaches [2]. |
| Ray Tracing Engine | Calculates how light propagates through optical systems, providing the fundamental data for evaluating design quality and computing merit functions [2] [8]. |
| Merit Function Framework | Quantifies optical system performance through a weighted sum of aberrations and constraint violations, guiding the optimization process [2]. |
| Cloud Computing Platform | Provides scalable computational resources for running parallel optimizations and handling complex designs that exceed desktop capabilities [2]. |
| Material Database | Contains refractive index and dispersion information for optical materials, essential for accurate simulation of light propagation [8]. |
| Analysis Tools | Evaluate specific optical properties including spot diagrams, MTF, wavefront error, and illumination patterns for comprehensive design assessment [24]. |

Computational Workflow Diagram

Optical Design Computational Pathway: This workflow illustrates the decision process for selecting computational approaches in optical design optimization, showing both desktop and cloud-based pathways.

Computational Resource Profiling Table

| Analysis Type | Desktop Resources | Cloud Scaling | Optimization Approach |
|---|---|---|---|
| Simple Lens Optimization | 8-16 GB RAM, multi-core CPU | Usually unnecessary | Local optimization (SLSQP, Nelder-Mead) [2] |
| Global Optimization | 16-32 GB RAM, high-speed CPU | Beneficial for population-based algorithms | Differential Evolution, SHGO [2] |
| Tolerance Analysis | 16-32 GB RAM, fast storage | Highly recommended for Monte Carlo | Parallel sampling across instances [8] |
| Illumination Design | 32+ GB RAM, GPU acceleration | Essential for non-sequential ray tracing | Interactive optimization with parallel ray tracing [24] |

Implementing Open-Source Algorithms in Your Optical Design Workflow

Core Concepts in Optical Design Structuring

Structuring an optical design problem effectively requires a clear definition of its three fundamental components: the variables the software can adjust, the constraints that must be obeyed, and the merit function that quantifies performance. This structured approach is vital for leveraging open-source optimization algorithms efficiently, guiding them to produce a viable design that meets specifications.

Variables are the adjustable parameters in your optical system. Common examples include:

- Surface Curvatures: The radii of lens surfaces.

- Thicknesses: The distances between optical elements.

- Material Properties: The glass or optical material types.

- Aspheric Coefficients: Parameters defining non-spherical surfaces.

Constraints are the boundaries and conditions that a valid design must satisfy. They ensure the design is physically realizable and meets system requirements. Typical constraints include:

- Physical Realizability: Positive edge thicknesses for lenses, minimum center thickness.

- System Packaging: Overall system length, element diameters.

- Performance Specifications: Focal length, field of view, or distortion limits.

The Merit Function (or Error Function) is a single numerical value that quantifies the performance of the current optical system configuration. The goal of the optimization algorithm is to minimize this value. It is typically constructed from a weighted sum of squares of specific operands that measure aberrations or deviations from target specifications.
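A weighted sum-of-squares merit function of this kind takes only a few lines. The sketch assumes each operand is reported as a (current value, target, weight) triple. The operand names and numbers are illustrative.

```python
from dataclasses import dataclass

@dataclass
class Operand:
    name: str
    current: float
    target: float
    weight: float

def merit(operands):
    """Weighted sum of squared deviations from target; lower is better, 0 is ideal."""
    return sum(op.weight * (op.current - op.target) ** 2 for op in operands)

operands = [
    Operand("EFL (mm)", current=101.5, target=100.0, weight=10.0),
    Operand("Spherical aberration (waves)", current=0.8, target=0.0, weight=1.0),
    Operand("Distortion (%)", current=1.2, target=0.0, weight=0.5),
]
print(merit(operands))
```

Because operands carry different units, the weights also act as unit conversions. That is one reason poorly chosen weights can let a single operand dominate.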

Troubleshooting Common Optimization Issues

Q1: The optimization algorithm fails to converge or produces a design with poor performance. What are the primary causes?

A: Poor convergence often stems from an improperly formulated problem. Key issues include:

- Over-constrained System: Having too many constraints can severely limit the solution space, making it difficult for the algorithm to find a valid design. Review constraints and remove any that are not strictly necessary.

- Poor Starting Point: The initial system configuration may be too far from a viable solution. The optimization algorithm, especially a local optimizer, may get stuck in a poor local minimum. Consider simplifying the system or using a different, more robust starting point.

- Inadequate Variables: The set of variables may be insufficient to correct the dominant aberrations in the system. Ensure that critical parameters, such as key curvatures and material choices, are included as variables.

- Ill-conditioned Merit Function: An improperly weighted merit function can give undue importance to one aberration while neglecting others. Review and rebalance the weights of the operands in your merit function.

Q2: The optimized design is difficult or impossible to manufacture. How can this be avoided?

A: This is a common pitfall, often resulting from a lack of manufacturing constraints during optimization. To prevent this:

- Incorporate Manufacturing Constraints from the Start: Include constraints on element center and edge thicknesses, minimum allowable radius of curvature, and realistic glass materials from available catalogs. Do not add these as an afterthought [25].

- Understand the Application: A design that meets every performance specification on paper but is overly sensitive to manufacturing tolerances is not a good design. Consider the environmental conditions (temperature, vibration) and specify surface roughness and wavefront requirements appropriately, as over-specification drastically increases cost and production time [25].

- Consult with Manufacturing Experts: During the design phase, consult with optical engineers and technicians to identify potential assembly and alignment issues, such as problems related to tilt, decenter, and the use of adhesives or retaining rings [25].

Experimental Protocol: Structuring a Simple Singlet Lens Design Problem

Objective: To define a well-structured optimization problem for a single-element lens to achieve a target focal length with minimal spherical aberration.

Materials & Setup:

- Software: An open-source optical design platform like OptiLand, which provides a Python API for constructing, optimizing, and analyzing optical systems [6].

- Initial Configuration: A single lens element defined by two spherical surfaces.

Procedure:

- Define Variables:

- Set the front (R1) and back (R2) surface curvatures as variables.

- Set the lens thickness as a variable.

- Define Constraints:

- Impose a minimum center thickness constraint (e.g., CT > 2.0 mm).

- Impose a minimum edge thickness constraint (e.g., ET > 1.0 mm).

- Set the effective focal length (EFL) as a fixed target value (e.g., EFL = 100 mm).

- Construct the Merit Function:

- The primary goal is to minimize spherical aberration. Use the SPHA operand, or trace multiple rays and minimize the RMS spot size at the image plane.

- Use an operand to enforce the focal length constraint, applying a high weight to ensure it is met.

- The merit function Φ is constructed as Φ = w1 * (SPHA)^2 + w2 * (Current_EFL - Target_EFL)^2, where w1 and w2 are weighting factors.

- Execute Optimization:

- Run a local optimization algorithm (e.g., Damped Least Squares) to minimize the merit function.

- Analyze the resulting design. If performance is inadequate, consider relaxing constraints, adding more variables (e.g., by making the lens aspheric), or changing the starting point.
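The following is a self-contained sketch of this protocol using SciPy instead of a dedicated lens-design package; it is not OptiLand's API. A two-surface meridional ray trace (Snell's law in vector form) supplies the aberration term: the mean squared transverse ray error at the paraxial focus, used here in place of an SPHA operand. The EFL target and edge-thickness limit are passed to SLSQP as hard constraints rather than as weighted penalty operands. SLSQP is swapped in for Damped Least Squares because it handles those constraints directly. The glass index, aperture, bounds and start point are illustrative assumptions.

```python
import numpy as np
from scipy.optimize import minimize

N_GLASS = 1.5168        # assumed refractive index (BK7-like, single wavelength)
TARGET_EFL = 100.0      # mm
SEMI_AP = 10.0          # mm, assumed entrance semi-aperture (about f/5)

def unpack(p):
    # Curvatures are optimised in units of 1/(100 mm) so all variables are O(1).
    return p[0] / 100.0, p[1] / 100.0, p[2]

def efl(c1, c2, t, n=N_GLASS):
    """Paraxial effective focal length of a thick lens in air (lensmaker's equation)."""
    return 1.0 / ((n - 1) * (c1 - c2 + (n - 1) * t * c1 * c2 / n))

def refract(z, y, L, M, z_vertex, c, n1, n2):
    """Intersect meridional rays with a sphere (curvature c, vertex at z_vertex), then refract."""
    z0 = z - z_vertex
    B = c * (z0 * L + y * M) - L
    C = c * (z0**2 + y**2) - 2 * z0
    s = C / (-B + np.sqrt(B**2 - c * C))      # path length to the near intersection
    z0, y = z0 + s * L, y + s * M
    nz, ny = 1 - c * z0, -c * y               # unit surface normal, pointing along +z
    cos_i = L * nz + M * ny
    mu = n1 / n2
    cos_t = np.sqrt(1 - mu**2 * (1 - cos_i**2))
    k = cos_t - mu * cos_i                    # vector form of Snell's law
    return z0 + z_vertex, y, mu * L + k * nz, mu * M + k * ny

def ray_errors_at_focus(p, n_rays=7):
    """Transverse ray errors (mm) at the paraxial focus for an on-axis collimated beam."""
    c1, c2, t = unpack(p)
    h = np.linspace(0.1, 1.0, n_rays) * SEMI_AP
    z, y = np.full_like(h, -1.0), h.copy()
    L, M = np.ones_like(h), np.zeros_like(h)
    z, y, L, M = refract(z, y, L, M, 0.0, c1, 1.0, N_GLASS)
    z, y, L, M = refract(z, y, L, M, t, c2, N_GLASS, 1.0)
    z_focus = t + efl(c1, c2, t) * (1 - (N_GLASS - 1) * t * c1 / N_GLASS)  # vertex + BFL
    return y + (z_focus - z) * M / L

def merit(p):
    return float(np.mean(ray_errors_at_focus(p) ** 2))   # mm²

def edge_thickness(p):
    c1, c2, t = unpack(p)
    sag = lambda c: c * SEMI_AP**2 / (1 + np.sqrt(1 - (c * SEMI_AP) ** 2))
    return t - sag(c1) + sag(c2)

x0 = np.array([0.97, -0.97, 5.0])            # roughly equiconvex starting point
constraints = [
    {"type": "eq", "fun": lambda p: efl(*unpack(p)) - TARGET_EFL},
    {"type": "ineq", "fun": lambda p: edge_thickness(p) - 1.0},   # ET >= 1 mm
]
bounds = [(-4, 4), (-4, 4), (2.0, 10.0)]      # |R| >= 25 mm; CT >= 2 mm
res = minimize(merit, x0, method="SLSQP", bounds=bounds, constraints=constraints,
               options={"ftol": 1e-12, "maxiter": 200})
print(res.x, efl(*unpack(res.x)), merit(x0), merit(res.x))
```

Starting from an equiconvex lens, the optimizer should bend the singlet toward the classical best form for an object at infinity. In that form the more strongly curved surface faces the incoming collimated light.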

Visualization of the Optical Design Workflow

The following diagram illustrates the logical workflow and iterative feedback loop of structuring and solving an optical design problem.

Optical Design Optimization Feedback Loop

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and "reagents" for computational optical design research.

Table: Essential Resources for Optical Design Research

| Resource / Tool | Function / Description | Example in Open-Source Context |
|---|---|---|
| Design Software | Platform for building optical models, ray tracing, optimization, and analysis. | OptiLand: An open-source platform in Python for classical and computational optics, supporting tolerancing and optimization [6]. |
| Educational Texts | Foundational knowledge on principles, techniques, and historical context of lens design. | Kingslake's "Lens Design Fundamentals": Covers core principles, ray tracing, and various lens types with practical examples [26]. Smith's "Modern Lens Design": A comprehensive guide on modern design principles, aberrations, and advanced techniques [26]. |
| Optimization Algorithm | The mathematical engine that adjusts variables to minimize the merit function. | Open-source libraries (e.g., SciPy) or built-in algorithms in platforms like OptiLand, which may support traditional methods and GPU-accelerated, differentiable models [6]. |
| Material Catalog | A database of optical glasses and materials with refractive indices, dispersion, and other properties. | Integrated GlassExpert module in OptiLand or open data sets of glass properties for accurate material selection and substitution [6]. |
| Analysis Tools | Modules for quantifying system performance against requirements. | Tools within OptiLand for analyzing paraxial properties, wavefront errors, Point Spread Functions (PSF), and Modulation Transfer Function (MTF) [6]. |
| Online Communities | Forums for discussion, troubleshooting, and knowledge sharing with peers and experts. | ELE Optics Community: A forum for discussing all facets of optics, from history to cutting-edge research and practical applications [26]. |

Core Concepts of the SLSQP Algorithm

Sequential Quadratic Programming (SQP) is an iterative method for constrained nonlinear optimization. The SLSQP (Sequential Least Squares Programming) variant solves a sequence of quadratic programming (QP) subproblems to find the optimal solution [27] [28].

The Fundamental Principle

At each iteration $k$, SLSQP solves a constrained least-squares subproblem to generate a search direction $d_k$ [29] [30]. The algorithm optimizes successive second-order (quadratic/least-squares) approximations of the objective function, with first-order (affine) approximations of the constraints [29].

Mathematical Foundation

For a nonlinear programming problem of the form:

- Minimize: $f(x)$

- Subject to: $h(x) \geq 0$, $g(x) = 0$ [28]

The Lagrangian is:

$$\mathcal{L}(x, \lambda, \sigma) = f(x) + \lambda h(x) + \sigma g(x)$$

where $\lambda$ and $\sigma$ are Lagrange multipliers [28].
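Written with the same symbols, the quadratic subproblem solved at iterate $x_k$ has the standard SQP form shown below. In SLSQP, the Hessian of the Lagrangian is replaced by a quasi-Newton (BFGS) approximation $B_k \approx \nabla^2_{xx}\mathcal{L}(x_k, \lambda_k, \sigma_k)$, and the step length $\alpha_k$ comes from a line search on a merit function.

```latex
\begin{aligned}
d_k = \operatorname*{arg\,min}_{d}\; & \nabla f(x_k)^{\mathsf{T}} d + \tfrac{1}{2}\, d^{\mathsf{T}} B_k\, d \\
\text{subject to}\; & h(x_k) + \nabla h(x_k)^{\mathsf{T}} d \geq 0, \\
& g(x_k) + \nabla g(x_k)^{\mathsf{T}} d = 0,
\end{aligned}
\qquad x_{k+1} = x_k + \alpha_k d_k
```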

Frequently Asked Questions (FAQs)

Algorithm Behavior and Convergence

Q: Why does SLSQP get stuck at local minima? A: SLSQP is a local optimization algorithm that converges to a local minimum near the starting point [31]. High-dimensional parameter spaces, such as the 250-parameter problem discussed in [31], often contain multiple valleys, so different starting points converge to different local solutions [31].

Mitigation Strategies:

- Implement a multi-start approach with different initial points

- Use global optimization techniques like basinhopping with SLSQP as the local minimizer

- Analyze the problem structure to identify better starting points [31]
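The first two mitigation strategies map onto standard SciPy calls. The sketch uses a hypothetical two-basin objective. The multi-start loop runs SLSQP from scattered initial points and keeps the best result. `basinhopping` randomly perturbs the current point and runs SLSQP (passed through `minimizer_kwargs`) from each perturbed start. No seed is passed to `basinhopping`, so its path varies from run to run.

```python
import numpy as np
from scipy.optimize import basinhopping, minimize

def objective(x):
    """Hypothetical two-basin objective: global minimum near x[0] = -1.04."""
    return (x[0] ** 2 - 1) ** 2 + 0.3 * x[0] + x[1] ** 2

# Multi-start: independent SLSQP runs from scattered initial points; keep the best.
rng = np.random.default_rng(0)
starts = rng.uniform(-2, 2, size=(10, 2))
runs = [minimize(objective, x0, method="SLSQP") for x0 in starts]
best = min(runs, key=lambda r: r.fun)

# Basin hopping with SLSQP as the local minimizer; stepsize sized to cross between wells.
bh = basinhopping(objective, x0=[1.0, 0.5], niter=30, stepsize=2.0,
                  minimizer_kwargs={"method": "SLSQP"})
print("multi-start:", np.round(best.x, 3), best.fun)
print("basinhopping:", np.round(bh.x, 3), bh.fun)
```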

Q: How can I improve SLSQP convergence under numerical noise? A: SLSQP is generally more stable than standard SQP under numerical noise [30]. However, for better convergence:

- Provide analytical gradients instead of using numerical approximations

- Implement proper scaling of variables and constraints

- Use the revised search directions with improved least squares solvers [30]
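The sketch below supplies an analytical gradient to SciPy's SLSQP. It uses SciPy's Rosenbrock function and its exact gradient `rosen_der` as a stand-in merit function. Comparing `nfev` against the finite-difference run shows where the savings come from: each finite-difference gradient costs extra function evaluations, one per variable for a forward difference.

```python
from scipy.optimize import minimize, rosen, rosen_der

x0 = [1.3, 0.7, 0.8, 1.9, 1.2]
fd = minimize(rosen, x0, method="SLSQP")                    # gradient by finite differences
exact = minimize(rosen, x0, method="SLSQP", jac=rosen_der)  # analytical gradient

print("finite differences:", fd.nfev, "function evaluations")
print("analytical gradient:", exact.nfev, "function evaluations,", exact.njev, "gradients")
```

Analytical gradients also remove the truncation error of finite differences. That error matters when the merit function itself carries numerical noise.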

Practical Implementation Issues

Q: What are the computational limitations of SLSQP? A: SLSQP uses dense-matrix methods (ordinary BFGS), which implies:

- O(n²) storage and O(n³) time in n dimensions [29]

- Reduced practicality beyond a few thousand parameters [29]

For large-scale applications, consider problem dimension-reduction techniques.
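To put the O(n²) storage figure in concrete terms, a dense n-by-n matrix of float64 entries, such as a quasi-Newton Hessian approximation, needs 8n² bytes. Storing only a triangular factor roughly halves that. The arithmetic below ignores the solver's other work arrays, so real usage is higher.

```python
def dense_matrix_mib(n, bytes_per_entry=8):
    """Memory for one dense n x n matrix of float64 entries, in MiB."""
    return n * n * bytes_per_entry / 2**20

for n in (100, 1_000, 10_000):
    print(f"n = {n:>6}: {dense_matrix_mib(n):10.1f} MiB")
```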

Q: How do I handle infeasible QP subproblems? A: Practical implementations address this through:

- Merit functions or filter methods to assess progress toward constrained solutions

- Trust region or line search methods to manage model deviations

- Feasibility restoration phases or L1-penalized subproblems [28]
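The L1-penalty idea is easiest to see on the merit-function side. Constraint violations are added to the objective with weight μ, so progress can still be measured when the linearized constraints have no feasible solution. The sketch uses the convention above, h(x) ≥ 0 and g(x) = 0. The value of μ and the example functions are illustrative.

```python
import numpy as np

def l1_merit(f, h, g, x, mu):
    """Exact L1 penalty merit: f(x) + mu * (sum |g_i(x)| + sum max(0, -h_j(x)))."""
    equality_violation = np.sum(np.abs(g(x)))
    inequality_violation = np.sum(np.maximum(0.0, -h(x)))
    return f(x) + mu * (equality_violation + inequality_violation)

def f(x):
    return x[0] ** 2 + x[1] ** 2

def h(x):
    return np.array([x[0] - 1.0])            # inequality: requires x0 >= 1

def g(x):
    return np.array([x[0] + x[1] - 2.0])     # equality: requires x0 + x1 = 2

print(l1_merit(f, h, g, np.array([0.5, 0.5]), mu=10.0))
```

At a feasible point, the penalty terms vanish and the merit equals f(x). For a sufficiently large μ, a minimizer of this merit coincides with the constrained solution.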

Troubleshooting Common Experimental Issues

Performance Optimization Techniques

Table 1: SLSQP Performance Tuning Strategies

| Issue | Symptoms | Solution | Expected Improvement |
|---|---|---|---|
| Local Minima | Same output with relaxed bounds | Multi-start with different initial points [31] | Better objective value |
| Slow Convergence | Many iterations with minimal progress | Implement analytical gradients [31] | Faster convergence with fewer function evaluations; [30] reports 10-90% shorter run times for an improved SLSQP implementation |
| Infeasible Subproblems | Algorithm fails to find feasible direction | Use L1-penalized subproblems [28] | Restored convergence |
| Numerical Instability | Gradient errors or constraint violations | Improved LSQ solver with proper conditioning [30] | Increased stability |

Computational Requirements

Table 2: SLSQP Computational Characteristics

| Dimension (n) | Storage Complexity | Time Complexity | Practical Limit |
|---|---|---|---|
| Small (n < 100) | O(n²) | O(n³) | Easily manageable |
| Medium (100 < n < 1000) | O(n²) | O(n³) | Requires substantial memory |
| Large (n > 1000) | O(n²) | O(n³) | Becomes impractical [29] |

Experimental Protocols for Optical Design Applications

Integration with Optical System Optimization

In optical design research, SLSQP enables efficient optimization of complex systems. The algorithm's constraint-handling capability is particularly valuable for practical optical engineering constraints [32].

Typical Optical Design Variables:

- Surface curvatures and thicknesses

- Material properties and dispersion coefficients

- Aspheric coefficients and freeform surface parameters [32]

Common Optical Constraints:

- Focal length specifications

- Field of view requirements

- Back focal length limits

- Manufacturing tolerances [32]
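As a minimal sketch of how such variables and constraints map onto SciPy's SLSQP interface, the example below optimizes a single thick lens with variables x = [c1, c2, t] (surface curvatures and center thickness). All numbers are assumptions for illustration: a refractive index of 1.5168 (roughly N-BK7 at the d-line), a 100 mm target effective focal length (EFL), a back focal length (BFL) of at least 95 mm, and a hypothetical merit function that penalizes surface bending as a crude stand-in for aberrations; a real design would use a ray-traced merit function. EFL and BFL follow from the standard thick-lens relations φ = φ₁ + φ₂ - (t/n)φ₁φ₂ and BFL = (1 - tφ₁/n)/φ. Analytical gradients are supplied for the merit function and the EFL constraint through `jac`.

```python
import numpy as np
from scipy.optimize import minimize

N_GLASS = 1.5168     # assumed refractive index (approx. N-BK7 at 587.6 nm)
TARGET_EFL = 100.0   # mm, hypothetical focal length specification
MIN_BFL = 95.0       # mm, hypothetical back focal length limit


def lens_power(x):
    """Thick-lens power (1/mm); x = [c1, c2, t], curvatures in 1/mm, thickness in mm."""
    c1, c2, t = x
    phi1 = (N_GLASS - 1.0) * c1   # front surface power
    phi2 = (1.0 - N_GLASS) * c2   # rear surface power
    return phi1 + phi2 - (t / N_GLASS) * phi1 * phi2


def back_focal_length(x):
    """Distance from the rear vertex to the paraxial focus (mm)."""
    c1, _, t = x
    phi1 = (N_GLASS - 1.0) * c1
    return (1.0 - (t / N_GLASS) * phi1) / lens_power(x)


def merit(x):
    """Hypothetical merit: penalize strong bending, plus a small weight on thickness."""
    c1, c2, t = x
    return (100.0 * c1) ** 2 + (100.0 * c2) ** 2 + 0.01 * t


def merit_grad(x):
    """Analytical gradient of merit()."""
    c1, c2, _ = x
    return np.array([2.0e4 * c1, 2.0e4 * c2, 0.01])


def efl_residual_jac(x):
    """Analytical gradient of the EFL residual TARGET_EFL * power - 1."""
    c1, c2, t = x
    phi1 = (N_GLASS - 1.0) * c1
    phi2 = (1.0 - N_GLASS) * c2
    dphi_dc1 = (N_GLASS - 1.0) * (1.0 - (t / N_GLASS) * phi2)
    dphi_dc2 = (1.0 - N_GLASS) * (1.0 - (t / N_GLASS) * phi1)
    dphi_dt = -phi1 * phi2 / N_GLASS
    return TARGET_EFL * np.array([dphi_dc1, dphi_dc2, dphi_dt])


constraints = [
    # Equality: EFL equals the target, written as a dimensionless residual.
    {"type": "eq", "fun": lambda x: TARGET_EFL * lens_power(x) - 1.0,
     "jac": efl_residual_jac},
    # Inequality (SciPy convention fun(x) >= 0): BFL must be at least MIN_BFL.
    {"type": "ineq", "fun": lambda x: back_focal_length(x) - MIN_BFL},
]
bounds = [(-0.05, 0.05), (-0.05, 0.05), (2.0, 10.0)]  # c1, c2 (1/mm); t (mm)

res = minimize(merit, x0=np.array([0.01, -0.01, 5.0]), jac=merit_grad,
               method="SLSQP", bounds=bounds, constraints=constraints,
               options={"ftol": 1e-9, "maxiter": 200})

efl = 1.0 / lens_power(res.x)
print(res.success, res.x, efl, back_focal_length(res.x))
```

With this toy merit function the thickness is driven to its lower bound and the two curvatures become equal and opposite (an equiconvex lens); the BFL constraint stays inactive.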

Implementation Example: Process Optimization

A recent study applied an improved SLSQP algorithm to a large-scale process optimization problem with:

- 12,151 equality constraints and 17 inequality constraints

- 13,661 decision variables in the full model

- 122 decision variables in the reduced space [30]

The improved SLSQP algorithm reduced computational time by 10-90% while producing better solutions than existing implementations [30].

Research Reagent Solutions: Essential Computational Tools

Table 3: Essential Software Tools for SLSQP Implementation

| Tool/Software | Function | Application Context |
|---|---|---|
| SciPy | De facto standard for scientific Python with scipy.optimize.minimize(method='SLSQP') [28] | General-purpose optimization |
| NLopt | C/C++ implementation with interfaces to Julia, Python, R, MATLAB/Octave [29] [28] | Cross-platform research |
| ALGLIB | SQP solver with C++, C#, Java, Python API [28] | Multi-language applications |
| acados | SQP method tailored to optimal control problems [28] | Specialized control applications |

Advanced Implementation Strategies

Line Search Improvements

Modern SLSQP implementations enhance performance through:

- Formula-based initial step length instead of fixed full-step length

- Wolfe conditions, with the Armijo (sufficient decrease) condition as a fallback

- Relaxed line search criteria for specific iterations [30]
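The first two ideas can be illustrated on a generic smooth merit function. The sketch below is hypothetical and is not the implementation from [30]. It first asks `scipy.optimize.line_search` for a step satisfying the strong Wolfe conditions, passing the previous function value as `old_old_fval` (SciPy uses it to choose an interpolated initial step instead of a full step). If that search fails, it falls back to Armijo backtracking from the initial-step formula α₀ = min(1, 1.01·2(f_k - f_{k-1})/∇f_kᵀp_k) described by Nocedal and Wright. The helper names `initial_step` and `wolfe_then_armijo` are illustrative.

```python
import warnings

import numpy as np
from scipy.optimize import line_search, rosen, rosen_der


def initial_step(f_k, f_prev, slope):
    """Interpolated first trial step; falls back to 1.0 when no usable history exists."""
    if f_prev is None or slope >= 0.0:
        return 1.0
    alpha0 = 1.01 * 2.0 * (f_k - f_prev) / slope
    return min(1.0, alpha0) if alpha0 > 0.0 else 1.0


def wolfe_then_armijo(f, grad, x, p, f_prev=None, c1=1e-4, c2=0.9,
                      shrink=0.5, max_backtracks=40):
    """Return a step length along descent direction p (strong Wolfe, else Armijo)."""
    g = grad(x)
    fx = f(x)
    slope = float(np.dot(g, p))  # directional derivative, negative for a descent direction
    # 1) Strong Wolfe search; SciPy warns and returns alpha=None on failure.
    with warnings.catch_warnings():
        warnings.simplefilter("ignore")
        alpha = line_search(f, grad, x, p, gfk=g, old_fval=fx,
                            old_old_fval=f_prev, c1=c1, c2=c2)[0]
    if alpha is not None:
        return alpha
    # 2) Fallback: Armijo backtracking starting from the formula-based step.
    alpha = initial_step(fx, f_prev, slope)
    for _ in range(max_backtracks):
        if f(x + alpha * p) <= fx + c1 * alpha * slope:
            return alpha
        alpha *= shrink
    return alpha


x0 = np.array([-1.2, 1.0])
p = -rosen_der(x0)  # steepest-descent direction on the Rosenbrock test function
step = wolfe_then_armijo(rosen, rosen_der, x0, p)
print(step, rosen(x0), rosen(x0 + step * p))
```

Inside SLSQP the same logic would be applied to the constrained merit function rather than to the raw objective.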

Hybrid Approaches for Challenging Problems

For high-dimensional optimization problems, consider combining SLSQP with:

- Tensor sampling methods for better initial points

- Global exploration followed by local refinement

- Domain decomposition for complex systems [33]
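One way to sketch "global exploration followed by local refinement" with open-source tools is to run SciPy's `differential_evolution` without its built-in polishing step and then refine the best candidate with SLSQP. Differential evolution is used here as a stand-in global method (it is not the tensor-sampling approach of [33]), and the two-dimensional Rastrigin function is a hypothetical test problem with many local minima and a global minimum of 0 at the origin.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize


def rastrigin(x):
    """Multimodal test function; global minimum f = 0 at x = 0."""
    x = np.asarray(x)
    return 10.0 * x.size + np.sum(x ** 2 - 10.0 * np.cos(2.0 * np.pi * x))


bounds = [(-5.12, 5.12)] * 2

# Global exploration: population-based search over the whole box, no polishing.
coarse = differential_evolution(rastrigin, bounds, seed=1, polish=False, maxiter=200)

# Local refinement: SLSQP started from the best global candidate.
fine = minimize(rastrigin, coarse.x, method="SLSQP", bounds=bounds,
                options={"ftol": 1e-12})
print("global phase:", coarse.x, coarse.fun)
print("local phase: ", fine.x, fine.fun)
```

The split keeps expensive gradient-based iterations for the final basin only; in an optical workflow the global phase would typically run on a cheaper or coarser model.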

The continued development of SLSQP algorithms ensures their relevance for solving challenging optimization problems in optical design, process optimization, and scientific research, particularly when leveraging open-source implementations within comprehensive research frameworks.

Nelder-Mead Algorithm FAQs

What is the Nelder-Mead algorithm and when should I use it?

The Nelder-Mead algorithm is a popular direct search method for minimizing nonlinear functions of several variables. Unlike gradient-based nonlinear minimization methods, it is derivative-free: it does not require gradient information. This makes it particularly valuable for optimizing complex systems whose objective function is noisy or discontinuous, or whose derivatives are unknown or difficult to compute [34] [35].

Key characteristics and ideal use cases include:

- Nonlinear problems where gradient calculation is impractical

- Optical design problems such as Raman amplifiers and hollow-core fibers [36]

- Engineering parameter estimation where system behavior is modeled by complex simulations

- Searches where the minimum is not necessarily bracketed by the initial guess (a "global" search in the sense of [34], although Nelder-Mead itself converges only locally)

What are the common failure modes of Nelder-Mead?

Despite its widespread use, the Nelder-Mead algorithm has known limitations and failure modes that researchers should recognize:

- Convergence to non-stationary points: The simplex vertices may converge to a point that isn't a stationary point of the objective function [37]

- Limit simplex of positive diameter: The simplex sequence may converge to a limit simplex with positive diameter rather than a single point [37]

- Stagnation in local optima: The algorithm may oscillate near a local minimum without converging to a single point [35]

- Unbounded simplex sequence: Function values may converge while the simplex sequence itself is unbounded [37]

Recent research has identified these convergence behaviors through rigorous mathematical analysis, providing examples of each failure mode [37].

How do I implement proper termination criteria?

Robust termination criteria are essential for effective Nelder-Mead implementation. Avoid basing termination solely on the "rate of improvement" as this can lead to premature termination when the algorithm is predominantly reshaping the simplex without significant objective function improvement [34].

Recommended termination criteria include:

- Maximum iteration limit: Prevent infinite loops

- Function value variation: Bound the variation of function values across all vertices

- Simplex size: Upper bound on distances between centroid and all vertices [34]
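To show how the three criteria combine, here is a compact Nelder-Mead sketch in Python/NumPy. The function `nelder_mead` is a hypothetical helper, not a library API; it uses the standard coefficients (reflection 1, expansion 2, contraction 0.5, shrink 0.5) and stops when the iteration limit is reached, or when both the spread of function values across the vertices and the largest centroid-to-vertex distance fall below their tolerances.

```python
import numpy as np


def nelder_mead(f, x0, step=0.1, alpha_r=1.0, alpha_e=2.0, alpha_c=0.5, sigma=0.5,
                max_iter=5000, f_tol=1e-10, x_tol=1e-8):
    """Minimize f from x0; returns (x_best, f_best, iterations, converged)."""
    x0 = np.asarray(x0, dtype=float)
    n = x0.size
    # Initial simplex: x0 plus one vertex displaced along each coordinate axis.
    simplex = np.vstack([x0] + [x0 + step * np.eye(n)[i] for i in range(n)])
    fvals = np.array([f(v) for v in simplex])

    for it in range(max_iter):                      # criterion 1: iteration limit
        order = np.argsort(fvals)
        simplex, fvals = simplex[order], fvals[order]
        f_spread = fvals[-1] - fvals[0]              # criterion 2: function-value variation
        centre = simplex.mean(axis=0)
        size = np.max(np.linalg.norm(simplex - centre, axis=1))  # criterion 3: simplex size
        if f_spread <= f_tol and size <= x_tol:
            return simplex[0], fvals[0], it, True

        c = simplex[:-1].mean(axis=0)                # centroid of all but the worst vertex
        xr = c + alpha_r * (c - simplex[-1])         # reflection
        fr = f(xr)
        if fr < fvals[0]:
            xe = c + alpha_e * (xr - c)              # expansion
            fe = f(xe)
            simplex[-1], fvals[-1] = (xe, fe) if fe < fr else (xr, fr)
        elif fr < fvals[-2]:
            simplex[-1], fvals[-1] = xr, fr          # accept the reflected point
        else:
            # Outside contraction if xr beats the worst vertex, inside contraction otherwise.
            target = xr if fr < fvals[-1] else simplex[-1]
            xc = c + alpha_c * (target - c)
            fc = f(xc)
            if fc < min(fr, fvals[-1]):
                simplex[-1], fvals[-1] = xc, fc
            else:
                # Shrink every vertex toward the best one.
                simplex[1:] = simplex[0] + sigma * (simplex[1:] - simplex[0])
                fvals[1:] = [f(v) for v in simplex[1:]]
    return simplex[0], fvals[0], max_iter, False


def rosenbrock(x):
    return (1.0 - x[0]) ** 2 + 100.0 * (x[1] - x[0] ** 2) ** 2


x_best, f_best, iters, ok = nelder_mead(rosenbrock, [-1.2, 1.0])
print(x_best, f_best, iters, ok)
```

Requiring both the function-value and the simplex-size tests avoids stopping while the simplex is still being reshaped with little change in the objective.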

Troubleshooting Common Implementation Issues

Problem: Poor Convergence in High-Dimensional Spaces

Symptoms: Slow convergence, stagnation in clearly non-optimal regions, or excessive computation time when the number of variables increases.

Solutions:

- Hybrid approaches: Combine Nelder-Mead with global search algorithms like Genetic Algorithms (GA) or Particle Swarm Optimization (PSO) [38] [39]. The GANMA framework demonstrates how GA's global exploration complements NM's local refinement [38].

- Dimensionality reduction: Apply machine learning techniques like Principal Component Analysis (PCA) to reduce problem dimensionality before optimization [40].

- Parameter tuning: Experiment with reflection, expansion, and contraction coefficients. Research suggests αR=1, αE=3, and αQ=-1/2 as potential starting points [35].
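When experimenting with coefficients in higher dimensions, SciPy's Nelder-Mead implementation offers an `adaptive` option that replaces the fixed standard values with the dimension-dependent coefficients of Gao and Han (2012): expansion 1 + 2/n, contraction 0.75 - 1/(2n), and shrink 1 - 1/n. The comparison below on a 10-dimensional Rosenbrock function (`scipy.optimize.rosen`) is only illustrative; the starting point and evaluation budget are arbitrary assumptions, and results on a real optical merit function will differ.

```python
import numpy as np
from scipy.optimize import minimize, rosen

n = 10
x0 = np.full(n, 1.3)  # arbitrary start away from the optimum at all-ones
opts = {"maxfev": 20000, "xatol": 1e-6, "fatol": 1e-8}

standard = minimize(rosen, x0, method="Nelder-Mead", options=opts)
adaptive = minimize(rosen, x0, method="Nelder-Mead", options={**opts, "adaptive": True})

# Coefficients used by the adaptive variant for this n (Gao and Han, 2012).
expansion, contraction, shrink = 1 + 2 / n, 0.75 - 1 / (2 * n), 1 - 1 / n
print("standard:", standard.fun, standard.nfev)
print("adaptive:", adaptive.fun, adaptive.nfev)
print("adaptive coefficients:", expansion, contraction, shrink)
```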

Problem: Handling Constraints

Symptoms: Algorithm suggests infeasible solutions that violate physical or system constraints.

Solutions:

- Penalty functions: Transform constraints into penalty functions that severely penalize regions outside constraints [41]. This "soft constraint" approach is implemented in Flanagan's Scientific Library [41].

- Alternative algorithms: Consider constraint-aware algorithms like COBYLA (Constrained Optimization BY Linear Approximation) for problems with significant nonlinear constraints [41].

- Feasibility checks: Implement explicit feasibility checks before evaluating objective functions, rejecting infeasible trial points [35].
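The penalty and COBYLA options can be compared on a small hypothetical problem: minimize (x - 2)² + (y - 2)² subject to x + y ≤ 2, whose exact solution is (1, 1). The quadratic penalty weight `mu = 1e4` is an assumption. A finite weight leaves a small constraint violation (for this problem the penalized optimum sits at x = y = (2 + 2mu)/(1 + 2mu), just outside the feasible region), whereas SciPy's COBYLA handles the constraint explicitly.

```python
import numpy as np
from scipy.optimize import minimize


def objective(x):
    return (x[0] - 2.0) ** 2 + (x[1] - 2.0) ** 2


def constraint(x):
    """Feasible when >= 0 (SciPy's 'ineq' convention): encodes x + y <= 2."""
    return 2.0 - x[0] - x[1]


def penalized(x, mu=1e4):
    """Soft constraint: quadratic penalty on the violation max(0, -constraint(x))."""
    violation = max(0.0, -constraint(x))
    return objective(x) + mu * violation ** 2


x0 = np.array([0.0, 0.0])
nm = minimize(penalized, x0, method="Nelder-Mead",
              options={"xatol": 1e-8, "fatol": 1e-10, "maxiter": 5000})
cobyla = minimize(objective, x0, method="COBYLA",
                  constraints=[{"type": "ineq", "fun": constraint}])
print("Nelder-Mead + penalty:", nm.x, "violation:", max(0.0, -constraint(nm.x)))
print("COBYLA:               ", cobyla.x, "violation:", max(0.0, -constraint(cobyla.x)))
```

Raising `mu` shrinks the residual violation but makes the penalized landscape more ill-conditioned, which slows Nelder-Mead; this trade-off is one reason to prefer a constraint-aware method when constraints are significant.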

Problem: Excessive Computation Time per Iteration

Symptoms: Each iteration takes prohibitively long, making optimization impractical.

Solutions:

- Surrogate modeling: Use machine learning to create fast surrogate models of computationally expensive simulations [40].

- Mesh optimization: For computational fluid dynamics applications, balance mesh size and accuracy. Research shows meshes of ~20 million elements can maintain errors below 0.05% while improving computational efficiency [39].

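- Parallel evaluation: The n + 1 vertices of the initial simplex, and the n new vertices produced by a shrink step, can be evaluated independently and in parallel; reflection, expansion, and contraction steps remain sequential because each depends on the previous function value.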

Experimental Protocols for Optical Design Optimization

Protocol 1: Raman Amplifier Gain Flatness Optimization

This protocol details the methodology for optimizing Raman amplifier designs to achieve flat on-off gain profiles using the Nelder-Mead algorithm [36].

Materials and Setup:

- Software Tools: MATLAB with fminsearch function [36]

- Specialized Solvers: Custom optical solvers for Raman amplification physics

- Design Variables: Pump powers, wavelengths, fiber parameters

- Objective Function: Deviation from flat gain profile across target wavelength range

Procedure:

- Initial Simplex Construction: Define initial guess for design parameters based on physical constraints

- Gain Profile Simulation: For each simplex vertex, compute gain spectrum using specialized optical solver

- Flatness Evaluation: Calculate objective function as root-mean-square deviation from target flat gain

- Simplex Transformation: Apply Nelder-Mead operations (reflect, expand, contract) based on gain flatness

- Termination Check: Continue until gain variation falls below threshold or maximum iterations reached

- Validation: Verify optimal design with full-wave simulation
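The simulate-evaluate-transform loop of this procedure can be sketched with SciPy's Nelder-Mead in place of MATLAB's fminsearch. The gain model below is a deliberately simplified, hypothetical stand-in for a Raman solver: each pump is assumed to add a Gaussian-shaped gain band (in dB) whose peak scales linearly with pump power, and the centre wavelengths, bandwidth, efficiency, and target gain are made-up values. Only pump powers are optimized; the `bounds` argument for Nelder-Mead requires SciPy 1.7 or later.

```python
import numpy as np
from scipy.optimize import minimize

WAVELENGTHS = np.linspace(1530.0, 1565.0, 71)      # nm, target signal band
GAIN_CENTRES = np.array([1532.0, 1547.0, 1562.0])  # nm, hypothetical per-pump gain peaks
BANDWIDTH = 12.0                                   # nm, hypothetical Gaussian width
EFFICIENCY = 0.05                                  # dB per mW, hypothetical
TARGET_GAIN = 10.0                                 # dB, flat on-off gain target


def gain_spectrum(powers_mw):
    """Toy on-off gain (dB): sum of Gaussian bands scaled by pump power."""
    bands = np.exp(-(((WAVELENGTHS[:, None] - GAIN_CENTRES[None, :]) / BANDWIDTH) ** 2))
    return bands @ (EFFICIENCY * np.asarray(powers_mw))


def flatness_error(powers_mw):
    """Objective: RMS deviation (dB) from the flat target gain."""
    return np.sqrt(np.mean((gain_spectrum(powers_mw) - TARGET_GAIN) ** 2))


p0 = np.array([100.0, 100.0, 100.0])  # initial guess (mW)
res = minimize(flatness_error, p0, method="Nelder-Mead",
               bounds=[(0.0, 500.0)] * 3,
               options={"xatol": 1e-3, "fatol": 1e-6, "maxiter": 5000})
print("initial RMS error (dB):", flatness_error(p0))
print("optimized pump powers (mW):", res.x, "RMS error (dB):", res.fun)
```

In a real study, `gain_spectrum` would call the physics-based Raman solver, and pump wavelengths could be added to the design vector.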

Expected Outcomes: Significant improvement in gain flatness across operational bandwidth compared to initial design [36].

Protocol 2: Hollow-Core Fiber Loss Minimization

This protocol describes the optimization of anti-resonant hollow-core fibers to minimize confinement and scattering losses [36].

Materials and Setup:

- Optimization Framework: Nelder-Mead implementation (MATLAB fminsearch) [36]

- Simulation Tools: Custom fiber mode solvers for loss calculation

- Design Variables: Core geometry, tube thickness, arrangement parameters

- Objective Function: Weighted sum of confinement and scattering losses

Procedure:

- Parameter Space Definition: Establish feasible ranges for geometric parameters based on fabrication constraints

- Initial Design Selection: Choose starting simplex vertices covering diverse regions of parameter space

- Loss Calculation: For each vertex, compute confinement and scattering losses using specialized solvers

- Multi-Objective Optimization: Apply Nelder-Mead to minimize composite loss function

- Pareto Frontier Exploration: Repeat with different weightings to map trade-off between loss mechanisms

- Fabrication Feasibility Check: Ensure optimal designs are manufacturable
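The multi-objective and Pareto steps amount to a weighted-sum scan. In the sketch below, `confinement_loss` and `scattering_loss` are hypothetical convex placeholders for the mode-solver outputs, with conflicting optima at (1, 1) and (-1, -1) in normalized design coordinates, and each weighting is minimized with SciPy's Nelder-Mead. A weighted sum can only recover the convex part of a Pareto front, so non-convex trade-offs would need a different scalarization.

```python
import numpy as np
from scipy.optimize import minimize


def confinement_loss(x):
    """Hypothetical placeholder for a mode-solver confinement-loss estimate."""
    return (x[0] - 1.0) ** 2 + (x[1] - 1.0) ** 2


def scattering_loss(x):
    """Hypothetical placeholder for a surface-roughness scattering-loss estimate."""
    return (x[0] + 1.0) ** 2 + (x[1] + 1.0) ** 2


front = []
x_start = np.zeros(2)  # normalized design coordinates (hypothetical)
for w in np.linspace(0.0, 1.0, 6):
    def composite(x, w=w):
        """Weighted sum of the two loss mechanisms for this weighting."""
        return w * confinement_loss(x) + (1.0 - w) * scattering_loss(x)

    res = minimize(composite, x_start, method="Nelder-Mead",
                   options={"xatol": 1e-8, "fatol": 1e-10,
                            "maxiter": 5000, "maxfev": 10000})
    front.append((w, confinement_loss(res.x), scattering_loss(res.x)))

for w, lc, ls in front:
    print(f"w={w:.1f}  confinement={lc:.4f}  scattering={ls:.4f}")
```

Each (confinement, scattering) pair is one point on the trade-off curve; increasing the weight on confinement loss moves the design toward the confinement optimum at the expense of scattering loss.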

Expected Outcomes: Substantial reduction in both confinement and scattering losses while maintaining other performance metrics [36].

Nelder-Mead Performance Characteristics

Table 1: Nelder-Mead Algorithm Parameters and Operations

| Parameter/Option | Standard Value | Function |
|---|---|---|
| Reflection (αR) | 1 | Reflects worst point through centroid |
| Expansion (αE) | 2-3 | Extends reflection further in promising directions |
| Contraction (αQ) | 0.5 | Contracts toward centroid when reflection is poor |
| Shrinkage | 0.5 | Reduces simplex size around best point |

Table 2: Hybrid Algorithm Performance Comparison

| Hybrid Method | Application Domain | Performance Advantages | Limitations |
|---|---|---|---|
| GA-NM (GANMA) [38] | General optimization, parameter estimation | Improved convergence speed, balanced exploration/exploitation | Scalability in high dimensions, parameter sensitivity |
| PSO-NM [39] | Turbine flow efficiency | 4 percentage point efficiency improvement in gas-steam turbine | Computational demands with complex simulations |
| JAYA-NM [35] | PEMFC parameter estimation | Satisfactory convergence speed and accuracy | Limited to specific problem domains |
| DNMRIME [42] | Photovoltaic parameter estimation | Superior performance on CEC 2017 benchmarks | Recent method requiring further validation |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Nelder-Mead Optimization

| Tool/Resource | Function | Example Implementation |
|---|---|---|
| MATLAB fminsearch | Built-in Nelder-Mead implementation | Optical device design optimization [36] |
| Custom Optical Solvers | Physics-based performance evaluation | Raman amplifier and hollow-core fiber simulation [36] |
| Synopsys Sentaurus TCAD | Device modeling and calibration | Photonic power converter design [40] |
| Rigorous Coupled Wave Analysis (RCWA) | Optical simulation | Absorption calculation in multi-junction devices [40] |
| TracePro | Non-imaging optical design | Optical system optimization with built-in Nelder-Mead [43] |
| Dimensionality Reduction (PCA) | Design space simplification | Knowledge discovery in photonic power converters [40] |


Advanced Implementation Notes

Modern Variants and Improvements

Recent research has addressed several limitations of the original Nelder-Mead algorithm:

- Ordered Nelder-Mead: Lagarias et al. developed an ordered version with better convergence properties than the original method [37]

- Hybrid approaches: The DNMRIME algorithm combines a dynamic multi-dimensional random mechanism with the Nelder-Mead simplex method and shows superior performance on the CEC 2017 benchmarks [42]

- Matrix representations: Modern analyses use transformation matrices to represent simplex operations, enabling better theoretical understanding [37]

Application-Specific Considerations

For optical design problems specifically:

- Computational expense: Balance simulation accuracy against computational cost. ML-enhanced dimensionality reduction has been reported to yield over 20× more optimal designs at a 15% lower computational cost [40]

- Multi-objective optimization: Many optical systems require balancing competing objectives. The reduced dimensionality space enables efficient multi-parameter sweeps [40]

- Fabrication constraints: Ensure optimal parameters respect manufacturing tolerances and physical realizability