From Lab to Clinic: How AI-Powered Optical Biosensors Are Revolutionizing Point-of-Care Diagnostics


Abstract

This article provides a comprehensive analysis of the integration of artificial intelligence with optical biosensors for point-of-care (POC) diagnostics, targeting researchers and drug development professionals. It explores the foundational principles of optical biosensing technologies, details the methodological synergy between machine learning algorithms and sensor data processing, addresses critical challenges in real-world implementation and optimization, and validates performance through comparative analysis with traditional diagnostic methods. The synthesis offers a roadmap for translating these advanced systems from research laboratories into robust, clinically validated tools for rapid, accurate, and accessible disease detection and monitoring.

The Core Synergy: Understanding AI and Optical Biosensing for POC Diagnostics

AI-integrated optical biosensors represent a transformative convergence of photonics, molecular recognition, and machine learning. These devices detect biological analytes via optical signals (e.g., fluorescence, surface plasmon resonance, interferometry) and employ AI algorithms to enhance sensitivity, specificity, and analytical throughput. Within point-of-care (POC) diagnostics research, this integration addresses critical challenges: extracting robust data from complex samples, enabling multiplexed detection, and facilitating real-time, adaptive analysis at the patient's side. This document provides application notes and protocols to guide research in this emerging field.

Key Quantitative Performance Metrics

Table 1: Comparative Performance of AI-Enhanced Optical Biosensor Modalities for POC Targets

| Biosensor Modality | Typical Target (e.g.) | Limit of Detection (LOD) Improvement with AI* | Assay Time Reduction with AI* | Key AI Utility |

|---|---|---|---|---|

| Smartphone-based Fluorescence | Cardiac Troponin I | 2-5x (from ~1 ng/mL to ~0.2 ng/mL) | ~40% (from 25 min to 15 min) | Background subtraction, noise filtering |

| Surface Plasmon Resonance (SPR) Imaging | miRNA-21 (Cancer biomarker) | 10-100x (to fM range) | N/A (Real-time) | Multi-analyte pattern recognition, binding curve deconvolution |

| Interferometric Reflectance Imaging | Viral Antigens (e.g., SARS-CoV-2) | 3-8x (from ~pg/mm² to sub-pg/mm²) | ~50% (from 60 min to 30 min) | Pixel-level analysis, defect compensation |

| Fiber-Optic Grating Sensors | Cytokines (e.g., IL-6) | ~5x (improved specificity) | N/A (Real-time) | Spectral shift interpretation, cross-talk correction |

| Paper-based Colorimetric | Glucose, Urinalysis markers | Quantification from semi-quantitative | ~30% (removes incubation timing) | Color calibration, concentration prediction from hue/saturation |

* Improvements are illustrative, based on recent literature, and are relative to the same sensor platform without AI processing.

Experimental Protocols

Protocol 3.1: AI-Enhanced Smartphone Fluorescent Immunoassay for Cardiac Troponin I

Objective: Quantify cTnI in human serum using a smartphone-based fluorescence microscope and a convolutional neural network (CNN) for image analysis.

Materials: See "The Scientist's Toolkit" (Table 2).

Workflow:

- Microfluidic Chip Preparation: Fabricate PDMS chips with parallel microchannels pre-coated with anti-cTnI capture antibodies.

- Sample & Reagent Loading: Mix 10 µL of serum sample (or spiked calibrator) with 10 µL of fluorescently labeled detection antibody (Alexa Fluor 647). Load the mixture into the inlet reservoir.

- On-Chip Incubation & Washing: Allow the chip to incubate at room temperature for 12 minutes for sandwich complex formation. Apply wash buffer (1x PBS + 0.05% Tween 20) via capillary action.

- Image Acquisition: Place chip in the 3D-printed smartphone module. Using the dedicated app, capture fluorescence images (640 nm excitation) of all channels. Include a calibration channel with known concentrations.

- AI-Based Image Analysis:

- Pre-processing: Images are auto-cropped to channel regions. A pre-trained U-Net CNN segments the fluorescence signal area from background chip artifacts and uneven illumination.

- Intensity Calibration: The segmented signal intensity is extracted. A Gradient Boosting Regressor model (trained on calibration data) maps intensity and its spatial distribution features to cTnI concentration, correcting for non-linear hook effects.

- Output: Result (ng/mL) is displayed on the smartphone app and uploaded to a secure cloud database.
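The intensity-extraction step above can be illustrated with a minimal NumPy sketch. It assumes the segmentation network has already produced a boolean signal mask; the function name, the choice of features, and the synthetic image are hypothetical and stand in for whatever the trained pipeline actually uses.

```python
import numpy as np

def extract_intensity_features(image, signal_mask):
    """Return background-corrected intensity features for one channel image.

    image: 2D array of pixel intensities.
    signal_mask: boolean 2D array, True where segmentation marked signal.
    """
    image = np.asarray(image, dtype=float)
    signal_mask = np.asarray(signal_mask, dtype=bool)
    signal = image[signal_mask]
    background = image[~signal_mask]
    if signal.size == 0 or background.size == 0:
        raise ValueError("mask must contain both signal and background pixels")
    # The median is robust to a few bright artifacts in the background.
    bg_level = np.median(background)
    corrected = signal - bg_level
    return {
        "mean_intensity": float(corrected.mean()),
        "integrated_intensity": float(corrected.sum()),
        # Coefficient of variation as a simple spatial-distribution feature.
        "spatial_cv": float(signal.std() / signal.mean()),
        "signal_area_px": int(signal.size),
    }

# Synthetic example: flat background of 10 with a 4x4 signal patch of 60.
demo_image = np.full((8, 8), 10.0)
demo_image[2:6, 2:6] = 60.0
demo_mask = demo_image > 30  # stand-in for the U-Net output
features = extract_intensity_features(demo_image, demo_mask)
```

Features of this kind would then be passed to the regression model that maps them to concentration.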

Protocol 3.2: Multiplexed SPRi with Deep Learning for Cancer miRNA Profiling

Objective: Simultaneously detect a panel of 4 miRNA biomarkers from lysed tumor cell samples.

Materials: SPRi chip with 4-plex array of DNA probes; SPRi imaging system; AI workstation with Python/TensorFlow.

Workflow:

- Chip Functionalization: Spot anti-miRNA locked nucleic acid (LNA) capture probes in distinct array elements on the gold SPR chip.

- Sample Introduction: Inject 100 µL of cell lysate (pre-processed with miRNA isolation kit) over the chip surface at 5 µL/min in running buffer.

- Real-Time Data Acquisition: Monitor reflectance changes across the array for 20 minutes, generating a 3D data cube (x, y, time).

- AI Processing Pipeline:

- Data Reconstruction: A denoising autoencoder removes instrument noise and baseline drift from each pixel's kinetic curve.

- Feature Extraction & Classification: A recurrent neural network (RNN) analyzes the cleaned kinetic curves from each array spot to distinguish specific binding (from non-specific adsorption) and outputs a binding score.

- Concentration Prediction: A final fully connected layer, pre-trained on synthetic data of mixed miRNA concentrations, regresses the binding score for each miRNA to a concentration.

- Validation: Compare results with parallel qRT-PCR analysis.
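Before any network sees the data, the (x, y, time) cube is usually reduced to one kinetic curve per array spot. The sketch below shows one plausible way to do this with NumPy, assuming rectangular regions of interest and a nearby reference region for drift subtraction; the ROI layout and the synthetic cube are illustrative assumptions, not part of the protocol.

```python
import numpy as np

def spot_kinetic_curve(cube, spot_roi, reference_roi):
    """Reduce an (x, y, time) reflectance cube to one referenced curve.

    Each ROI is a tuple (x_start, x_stop, y_start, y_stop).
    """
    x0, x1, y0, y1 = spot_roi
    u0, u1, v0, v1 = reference_roi
    # Average all pixels of each region at every time point.
    spot = cube[x0:x1, y0:y1, :].mean(axis=(0, 1))
    reference = cube[u0:u1, v0:v1, :].mean(axis=(0, 1))
    curve = spot - reference      # removes drift common to both regions
    return curve - curve[0]       # zero the curve at the first frame

# Synthetic cube: common drift of 0.1 per frame everywhere,
# plus a binding signal of 2.0 per frame inside the spot.
frames = np.arange(5.0)
demo_cube = np.zeros((6, 6, 5)) + 0.1 * frames
demo_cube[0:3, 0:3, :] += 2.0 * frames
curve = spot_kinetic_curve(demo_cube, (0, 3, 0, 3), (3, 6, 3, 6))
```

Referencing against an unfunctionalized region removes bulk refractive index changes before the denoising and classification stages.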

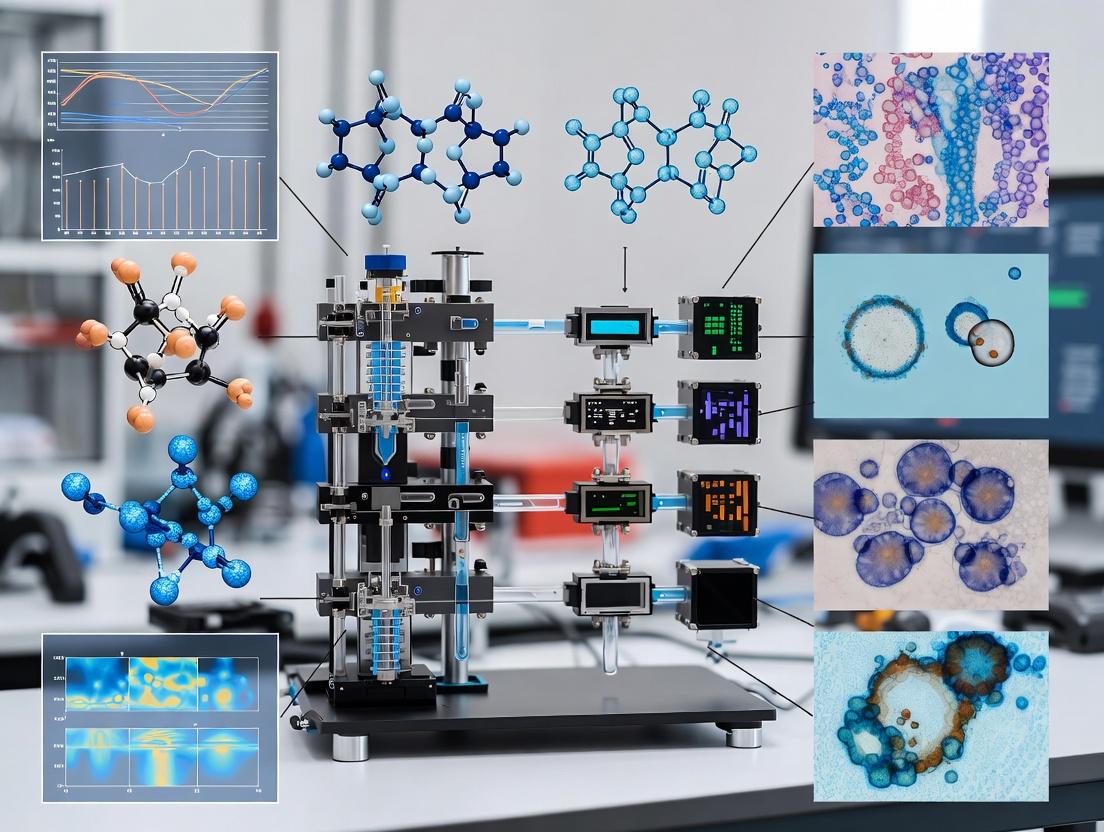

Visualization of Core Concepts

AI-Integrated Optical Biosensor Workflow

Typical Signaling Pathway for Sandwich Assay

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for AI-Optical Biosensor Development

| Item | Function in Experiments | Example/Notes |

|---|---|---|

| Functionalized Sensor Chips | Provides the biorecognition surface for specific target capture. | SPR gold chips with carboxylic acid SAM; Silicon photonic microring chips with epoxy groups. |

| High-Affinity Capture Probes | Binds the target analyte with high specificity from complex samples. | Monoclonal antibodies, aptamers, locked nucleic acid (LNA) probes. |

| Optical Labels | Generates or modulates the optical signal upon binding. | Fluorophores (e.g., Alexa Fluor 647), plasmonic nanoparticles (e.g., AuNPs), enzymes (HRP for chemiluminescence). |

| Microfluidic Cartridges/PDMS Chips | Manages precise sample and reagent delivery to the sensor surface. | Disposable, injection-molded cartridges or lab-fabricated Polydimethylsiloxane (PDMS) devices. |

| Blocking & Regeneration Buffers | Reduces non-specific binding (blocking) and allows sensor reuse (regeneration). | BSA (1-3%) or casein in PBS for blocking; Glycine-HCl (pH 2.5) or SDS for regeneration. |

| Synthetic/Augmented Training Data | Used to train and validate AI models where real clinical data is scarce. | Digitally generated sensor images, spectral data spiked with noise and artifacts. |

| AI Model Serving Framework | Deploys trained models for real-time inference on edge devices or servers. | TensorFlow Lite, ONNX Runtime, or PyTorch Mobile for integration into POC hardware. |

Application Notes

The integration of artificial intelligence (AI) with optical biosensing modalities is revolutionizing point-of-care (POC) diagnostics research by enabling rapid, multiplexed, and highly sensitive detection of analytes with minimal user intervention. Combining the optical techniques described below with machine learning algorithms for data analysis and system control addresses critical challenges in reproducibility, noise reduction, and complex biomarker pattern recognition.

Surface Plasmon Resonance (SPR) is a cornerstone label-free technique for real-time biomolecular interaction analysis. In AI-integrated POC systems, SPR sensors generate high-dimensional kinetic data (association/dissociation rates, affinity constants). AI models, particularly recurrent neural networks (RNNs), are employed to deconvolve signals from complex matrices like blood serum, differentiate specific binding from nonspecific adsorption, and predict binding kinetics directly from sensorgram shapes, accelerating drug candidate screening.

Localized Surface Plasmon Resonance (LSPR) utilizes nanostructured transducers (e.g., gold nanoparticles, nanoantennas) and is highly sensitive to local refractive index changes. Its simplicity and potential for miniaturization make it ideal for compact POC devices. AI integration is pivotal for LSPR in two areas: first, in optimizing the design of nanostructures for maximal sensitivity via inverse design algorithms; second, in analyzing the complex spectral shifts and broadening in multiplexed assays to identify specific pathogen signatures, such as in viral detection panels.

Interferometry (e.g., back-scattering interferometry, spectral-domain optical coherence tomography) provides highly sensitive phase-based measurements of biomolecular binding. AI transforms these systems by compensating for environmental noise (temperature, vibration) in real time using adaptive filters, enabling robust operation in non-laboratory settings. Deep learning models also extract quantitative binding data from interference patterns without prior modeling, simplifying assay development for low-concentration biomarkers like cardiac troponins.

Fluorescence remains the gold standard for sensitivity in labeled assays. AI-enhanced fluorescence POC platforms leverage convolutional neural networks (CNNs) for advanced image analysis of microarrays or lateral flow assays, quantifying signals too faint to distinguish by eye. Furthermore, AI-driven fluidics control optimizes wash steps to reduce background, and predictive models correct for photobleaching, supporting quantitative accuracy in low-resource settings.

The following table summarizes the performance characteristics and AI integration points for each modality in a POC context.

Table 1: Comparative Analysis of AI-Integrated Optical Biosensing Modalities for POC Diagnostics

| Modality | Typical LOD (POC Context) | Key Advantage for POC | Primary AI Integration Role | Example POC Target |

|---|---|---|---|---|

| SPR | 0.1-10 ng/mL (in buffer) | Real-time, label-free kinetics | Signal denoising, kinetic prediction from single-cycle data | Therapeutic antibody affinity screening |

| LSPR | 1-100 pM (with amplification) | Compact, low-cost transducer | Nanostructure optimization, multiplexed spectral analysis | SARS-CoV-2 spike protein detection |

| Interferometry | 10 fg/mL – 1 pg/mL | Extreme sensitivity, phase measurement | Environmental noise cancellation, model-free analysis | Early cancer biomarker (e.g., EGFR) |

| Fluorescence | 1-100 fM (single molecule) | Ultra-sensitive, well-established | Image analysis for weak signals, process optimization | Cardiac troponin I (cTnI) for AMI |

Experimental Protocols

Protocol 1: AI-Enhanced SPR for Serum-Based Antibody Affinity Ranking

Objective: To determine the affinity of SARS-CoV-2 monoclonal antibody candidates directly in diluted human serum using an SPR system with integrated AI for baseline drift correction and outlier rejection.

Research Reagent Solutions & Materials:

- Biacore Series S CM5 Chip: Gold sensor surface with carboxymethylated dextran for ligand immobilization.

- Running Buffer (HBS-EP+): 10 mM HEPES, 150 mM NaCl, 3 mM EDTA, 0.05% v/v Surfactant P20, pH 7.4. Provides consistent ionic strength and reduces nonspecific binding.

- Amine Coupling Kit: Contains EDC (1-ethyl-3-(3-dimethylaminopropyl)carbodiimide), NHS (N-hydroxysuccinimide), and ethanolamine HCl for covalent protein immobilization.

- Recombinant SARS-CoV-2 Spike Protein (RBD): Purified antigen as the immobilized ligand.

- Antibody Candidates: Purified monoclonal antibodies (mAbs) at 1 mg/mL in PBS.

- Negative Control Serum: Certified pathogen-free human serum for background calibration.

- Regeneration Solution: 10 mM Glycine-HCl, pH 2.0. Gently removes bound analyte without damaging the ligand.

Methodology:

- System Preparation: Dock the CM5 chip, prime the system with HBS-EP+ buffer, and maintain temperature at 25°C.

- Ligand Immobilization:

- Activate carboxyl groups on flow cell 2 with a 7-minute injection of a 1:1 mixture of EDC and NHS.

- Dilute Spike RBD to 20 µg/mL in 10 mM sodium acetate buffer (pH 5.0) and inject until ~5000 Response Units (RU) are achieved.

- Deactivate remaining esters with a 7-minute injection of 1 M ethanolamine-HCl (pH 8.5).

- Use flow cell 1 as a reference surface (activated and deactivated only).

- Serum Sample Preparation: Dilute each mAb candidate (and an isotype control) to a starting concentration of 100 nM in 10% negative control serum diluted with HBS-EP+. Perform a 2-fold serial dilution to generate a 5-point concentration series.

- Kinetic Binding Experiment:

- Set flow rate to 30 µL/min.

- For each sample, inject for 180s (association phase) followed by a 300s dissociation phase in HBS-EP+.

- Regenerate the surface with a 30s pulse of Glycine-HCl, pH 2.0.

- AI-Enhanced Data Processing:

- Export sensorgrams for all concentrations.

- Apply a trained Long Short-Term Memory (LSTM) network to pre-process the data:

- Input: Raw sensorgram data (time, RU).

- Process: The LSTM model filters instrument noise and corrects for systematic baseline drift common in complex matrices.

- Output: Corrected sensorgrams, with any injection artifacts flagged.

- Fit the corrected data to a 1:1 Langmuir binding model using standard evaluation software to calculate ka (association rate), kd (dissociation rate), and KD (equilibrium dissociation constant).
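To make the dilution series and the 1:1 Langmuir model concrete, the following sketch generates the 5-point, 2-fold series from 100 nM and simulates the expected sensorgram shape for the 180 s association and 300 s dissociation phases. The rate constants and Rmax are hypothetical values chosen only for illustration; real values come from fitting the corrected data.

```python
import numpy as np

def serial_dilution(start_nM, fold, n_points):
    """Concentrations of an n-point serial dilution, highest first."""
    return [start_nM / fold**i for i in range(n_points)]

def langmuir_sensorgram(t_s, conc_M, ka, kd, rmax, t_assoc_s):
    """Response (RU) of a 1:1 interaction: association, then dissociation."""
    t_s = np.asarray(t_s, dtype=float)
    kobs = ka * conc_M + kd                 # observed association rate (1/s)
    req = rmax * ka * conc_M / kobs         # steady-state response
    assoc = req * (1.0 - np.exp(-kobs * np.minimum(t_s, t_assoc_s)))
    r_end = req * (1.0 - np.exp(-kobs * t_assoc_s))
    dissoc = r_end * np.exp(-kd * np.maximum(t_s - t_assoc_s, 0.0))
    return np.where(t_s <= t_assoc_s, assoc, dissoc)

# Hypothetical kinetic parameters, for illustration only.
KA = 1.0e5        # association rate constant, 1/(M*s)
KD_RATE = 1.0e-3  # dissociation rate constant, 1/s
RMAX = 100.0      # saturation response, RU

concs_nM = serial_dilution(100.0, 2, 5)     # 100, 50, 25, 12.5, 6.25 nM
kd_equilibrium_nM = KD_RATE / KA * 1e9      # KD = kd / ka, in nM
t = np.arange(0, 481)                       # 180 s association + 300 s dissociation
curves = {c: langmuir_sensorgram(t, c * 1e-9, KA, KD_RATE, RMAX, 180.0)
          for c in concs_nM}
```

Simulated curves like these are also a convenient sanity check for the fitting software: fitting them should return the input ka and kd.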

Protocol 2: LSPR-based Multiplexed Viral Detection with Spectral Deconvolution via CNN

Objective: To simultaneously detect influenza A nucleoprotein and SARS-CoV-2 spike protein using an LSPR chip functionalized with distinct antibody spots and a CNN to analyze spectral shift patterns.

Research Reagent Solutions & Materials:

- Nanoruler LSPR Chip: Glass substrate with arrays of gold nanorods, functionalized for protein coupling.

- Spotting Buffer (PBS with 0.005% Tween-20): Ensures consistent antibody spotting and spot morphology.

- Capture Antibodies: Anti-influenza A nucleoprotein (Clone A1) and anti-SARS-CoV-2 spike (Clone CR3022) at 100 µg/mL in spotting buffer.

- Blocking Solution: 1% Bovine Serum Albumin (BSA) / 0.1% Tween-20 in PBS. Minimizes nonspecific adsorption on sensor surface.

- Clinical Nasopharyngeal Swab Eluates: In viral transport medium, inactivated.

- Optical Reader: Compact fiber-optic system with a broadband light source and spectrometer (400-900 nm).

Methodology:

- Microarray Fabrication: Using a non-contact arrayer, spot anti-influenza antibody in column 1 and anti-SARS-CoV-2 antibody in column 2 of the LSPR chip in a defined grid pattern. Include spotting buffer-only spots as negative controls. Incubate in a humid chamber for 1 hour at room temperature.

- Blocking: Immerse the chip in blocking solution for 1 hour at room temperature on a rocker. Rinse gently with PBS and dry under a stream of nitrogen.

- Sample Application: Apply 50 µL of the clinical eluate (or spiked control sample) to the chip and incubate for 15 minutes in a humidity chamber.

- Washing: Dip the chip sequentially in three wells of PBS with 0.05% Tween-20 for 30 seconds each, then dry with nitrogen.

- Spectral Acquisition: Place the chip in the reader. Acquire full transmission spectra for each spot in the array. The system records the localized plasmon resonance peak wavelength (λmax) for every spot.

- CNN Analysis:

- Input: A 2D spectral map is generated, with spot position on one axis, wavelength on the other, and intensity as the value.

- Model: A pre-trained Convolutional Neural Network (CNN) with the following architecture analyzes the map:

- Input Layer: Spectral map image.

- Convolutional & Pooling Layers: Extract spatial-spectral features (e.g., peak shifts, broadening in specific regions).

- Fully Connected Layers: Classify the pattern as "Influenza A Positive," "SARS-CoV-2 Positive," "Co-infection," or "Negative."

- Output: Diagnostic call with a confidence score.
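The per-spot peak wavelength (λmax) that feeds the spectral map can be estimated in several ways. A minimal sketch of one common approach, a parabolic fit around the sampled maximum, is given below. It assumes the transmission spectra have already been converted to extinction so that the resonance appears as a peak; the window size and the Gaussian test spectra are illustrative assumptions.

```python
import numpy as np

def peak_wavelength(wavelengths_nm, extinction, half_window=5):
    """Estimate the resonance peak by fitting a parabola near the maximum."""
    wavelengths_nm = np.asarray(wavelengths_nm, dtype=float)
    extinction = np.asarray(extinction, dtype=float)
    i = int(np.argmax(extinction))
    lo = max(i - half_window, 0)
    hi = min(i + half_window + 1, len(extinction))
    # Center the x-axis on the sampled maximum for numerical stability.
    x = wavelengths_nm[lo:hi] - wavelengths_nm[i]
    a, b, _ = np.polyfit(x, extinction[lo:hi], 2)
    if a >= 0:
        # No downward-opening parabola: fall back to the sampled maximum.
        return float(wavelengths_nm[i])
    # Vertex of a*x^2 + b*x + c lies at x = -b / (2a).
    return float(wavelengths_nm[i] - b / (2.0 * a))

# Synthetic spectra sampled at 1 nm over 400-900 nm.
wl = np.arange(400.0, 901.0, 1.0)

def gaussian(center_nm, width_nm=40.0):
    return np.exp(-((wl - center_nm) ** 2) / (2.0 * width_nm**2))

before = peak_wavelength(wl, gaussian(650.3))
after = peak_wavelength(wl, gaussian(652.8))
shift_nm = after - before   # peak shift attributed to binding
```

The sub-pixel fit matters because binding-induced shifts are often smaller than the spectrometer's sampling interval.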

AI-Enhanced SPR Data Processing Workflow

LSPR Multiplexed Detection via CNN

Within the research thesis on AI-integrated optical biosensors for point-of-care (POC) diagnostics, the "AI Engine" represents the computational core that transforms raw, multi-dimensional sensor data into clinically actionable insights. This document provides detailed application notes and protocols for implementing machine learning (ML) and deep learning (DL) models tailored specifically to the challenges of biosensor data, which is often characterized by high noise, temporal dynamics, and limited sample sizes in early-stage research.

Recent advances (2023-2024) demonstrate a significant shift towards end-to-end deep learning models and hybrid approaches that combine feature engineering with neural networks for biosensor analytics.

Table 1: Performance Comparison of Recent ML/DL Models for Optical Biosensor Data (2023-2024)

| Model Category | Example Model(s) | Target Analyte / Application | Reported Accuracy / F1-Score | Key Advantage for Biosensors | Reference (Type) |

|---|---|---|---|---|---|

| Convolutional Neural Networks (CNNs) | 1D-CNN, ResNet-1D | Multiplexed cytokine detection (SARS-CoV-2 severity) | 94.2% (AUC) | Automatic feature extraction from spectral/temporal signatures | Nature Comms (2023) |

| Transformers & Attention Models | Patch-based Transformer | Surface Plasmon Resonance (SPR) kinetic analysis | R² = 0.98 for KD | Models long-range dependencies in sensorgram data | ACS Sensors (2024) |

| Hybrid Models | CNN-LSTM, CNN-SVM | Cardiac biomarker (cTnI) monitoring in serum | 96.7% F1-Score | Captures spatio-temporal features & provides robust classification | Biosens Bioelectron (2024) |

| Federated Learning (FL) | Federated CNN | Distributed glucose monitoring across clinics | 92.5% Global Accuracy | Privacy-preserving model training on decentralized sensor data | IEEE JBHI (2023) |

| Generative AI | Conditional GANs | Synthetic data generation for rare disease biomarkers | Augmentation improved accuracy by 15% | Mitigates small dataset constraints in POC research | Sci Data (2024) |

Core Experimental Protocols

Protocol 1: Preprocessing and Feature Engineering for Biosensor Time-Series Data

Objective: To clean, normalize, and extract informative features from raw optical biosensor output (e.g., interferometry, reflectance, fluorescence intensity over time) for downstream ML model input.

Materials: Raw time-series data (.csv, .txt), Python environment (NumPy, SciPy, Pandas), Jupyter Notebook.

Procedure:

- Data Cleaning:

- Load raw sensorgrams (Signal vs. Time).

- Apply a Savitzky-Golay filter (window length=11, polynomial order=3) to smooth high-frequency electronic noise while preserving signal shape.

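- Identify and correct for baseline drift using asymmetric least squares smoothing (ALS) with λ=10^5 and p=0.95. Check which convention your ALS implementation uses for p: in the common Eilers-Boelens formulation, p weights points lying above the fitted baseline, and values of 0.001-0.1 are typical when the signal rises above the baseline.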

- Event Detection & Segmentation:

- For binding assays, use a gradient-based method to detect the start of the association phase (threshold: d(Signal)/dt > 3*std of baseline).

- Segment each binding event into: (i) Baseline (10 sec pre-injection), (ii) Association (0-180 sec post-injection), (iii) Dissociation (180-360 sec).

- Feature Extraction:

- Kinetic Features: Fit the association phase to a pseudo-first-order model using non-linear least squares (Levenberg-Marquardt):

dR/dt = ka * C * (Rmax - R) - kd * R

Extract ka (association rate) and kd (dissociation rate).

- Morphological Features: Calculate for the entire event: maximum slope, time-to-peak, area under the curve (AUC), total signal change (ΔR).

- Spectral Features (for multiplexed sensors): Perform Fast Fourier Transform (FFT) on the baseline-corrected signal. Extract power in 5 predefined frequency bands.

- Data Structuring:

- Compile all features into a structured Pandas DataFrame, with columns for each feature and rows for each sample/binding event.

- Save as processed_features.csv for model training.
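The event-detection and morphological-feature steps of this protocol can be sketched with NumPy alone. The example below interprets the threshold as three times the standard deviation of the baseline slope, which is one reasonable reading of the criterion above; the function names and the synthetic sensorgram (with a small deterministic ripple standing in for noise) are hypothetical.

```python
import numpy as np

def detect_injection(time_s, signal, n_baseline=10, k=3.0):
    """Index of the first sample whose slope exceeds k x std of baseline slope."""
    slope = np.gradient(signal, time_s)
    threshold = k * np.std(slope[:n_baseline])
    above = np.flatnonzero(slope[n_baseline:] > threshold)
    if above.size == 0:
        return None
    return int(above[0] + n_baseline)

def morphological_features(time_s, signal, start_idx):
    """Shape descriptors of one binding event, relative to its start."""
    t = time_s[start_idx:] - time_s[start_idx]
    r = signal[start_idx:] - signal[start_idx]
    slope = np.gradient(r, t)
    return {
        "max_slope": float(slope.max()),
        "time_to_peak_s": float(t[np.argmax(r)]),
        # Area under the curve by the trapezoid rule.
        "auc": float(np.sum((r[1:] + r[:-1]) / 2.0 * np.diff(t))),
        "delta_r": float(r.max() - r[0]),
    }

# Synthetic sensorgram at 1 Hz: 10 s baseline, 180 s association,
# 180 s dissociation, plus a small deterministic ripple.
time_s = np.arange(0.0, 370.0)
assoc = 100.0 * (1.0 - np.exp(-0.02 * np.clip(time_s - 10.0, 0.0, 180.0)))
decay = np.exp(-0.005 * np.clip(time_s - 190.0, 0.0, None))
signal = assoc * decay + 0.02 * np.sin(time_s)

start = detect_injection(time_s, signal)
features = morphological_features(time_s, signal, start)
```

Each dictionary returned by `morphological_features` maps directly onto one row of the feature table described in the data structuring step.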

Diagram 1: Workflow for biosensor time-series preprocessing.

Protocol 2: Training a Hybrid CNN-LSTM for Multi-Analyte Classification

Objective: To develop a model that classifies the presence of multiple biomarkers (e.g., IL-6, CRP, PSA) from a single spectral shift-based biosensor readout.

Materials: Labeled spectral dataset (n > 1000 per class), TensorFlow/Keras or PyTorch, GPU workstation, Python.

Procedure:

- Data Preparation:

- Format input data as a 3D array: [Samples, Timepoints, Wavelengths]. Normalize per wavelength to [0,1].

- Encode multi-label targets as multi-hot binary vectors (e.g., [1,0,1] for IL-6+ & PSA+).

- Split data: 70% Train, 15% Validation, 15% Test.

- Model Architecture (Keras Sequential API):

- Training:

- Compile model with optimizer='adam', loss='binary_crossentropy', metrics=['accuracy', tf.keras.metrics.AUC()].

- Train with batch_size=32, epochs=100. Use EarlyStopping(monitor='val_loss', patience=15) and ModelCheckpoint to save the best model.

- Evaluation:

- Evaluate on test set. Report per-analyte AUC, precision, recall, and F1-score.

- Generate a multi-label confusion matrix.
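For the evaluation step, per-analyte precision, recall, F1-score, and a 2x2 confusion matrix per label can be computed directly from the predicted probabilities. The following is a minimal NumPy sketch under the assumption of a fixed 0.5 decision threshold; the function name and the toy predictions are hypothetical, and AUC is omitted for brevity.

```python
import numpy as np

def per_analyte_metrics(y_true, y_prob, names, threshold=0.5):
    """Per-label confusion matrix, precision, recall and F1 for multi-label output."""
    y_true = np.asarray(y_true, dtype=int)
    y_pred = (np.asarray(y_prob, dtype=float) >= threshold).astype(int)
    report = {}
    for j, name in enumerate(names):
        t, p = y_true[:, j], y_pred[:, j]
        tp = int(np.sum((t == 1) & (p == 1)))
        fp = int(np.sum((t == 0) & (p == 1)))
        fn = int(np.sum((t == 1) & (p == 0)))
        tn = int(np.sum((t == 0) & (p == 0)))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2.0 * precision * recall / denom if denom else 0.0
        report[name] = {
            "confusion": [[tn, fp], [fn, tp]],  # rows: true 0/1, cols: predicted 0/1
            "precision": precision,
            "recall": recall,
            "f1": f1,
        }
    return report

# Toy example with four samples and three analytes.
names = ["IL-6", "CRP", "PSA"]
y_true = [[1, 0, 1], [0, 1, 0], [1, 1, 0], [0, 0, 1]]
y_prob = [[0.9, 0.2, 0.8], [0.4, 0.7, 0.1], [0.3, 0.6, 0.2], [0.1, 0.55, 0.9]]
report = per_analyte_metrics(y_true, y_prob, names)
```

Reporting one 2x2 matrix per analyte is a common way to present a "multi-label confusion matrix", since the labels are not mutually exclusive.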

Diagram 2: Hybrid CNN-LSTM architecture for multi-analyte classification.

Protocol 3: Federated Learning Setup for Multi-Institutional Sensor Data

Objective: To train a robust model on optical biosensor data from multiple hospitals without sharing raw patient data, addressing privacy and data sovereignty.

Materials: Docker, Federated Learning framework (Flower or NVIDIA FLARE), institutional servers, a central coordinator server.

Procedure:

- Central Server Setup (Coordinator):

- Initialize a Flower start_server script. Define the global model architecture (e.g., a CNN from Protocol 2).

- Configure federated averaging (FedAvg) strategy with min_fit_clients=3, min_available_clients=5.

- Client Setup (Each Hospital/Lab):

- At each institution, package the data loading and model training code into a Flower Client class.

- The fit method should: load local biosensor data, train the received global model for 5 local epochs with a small learning rate, and return the updated model weights.

- Run the client script, pointing it to the coordinator server's IP address.

- Federated Training Cycle:

- The server selects 3+ available clients and sends the current global model.

- Clients train locally on their private sensor datasets.

- Clients send encrypted weight updates back to the server.

- The server aggregates the weights (under FedAvg, an average weighted by each client's number of training examples) to form a new, improved global model.

- Model Deployment:

- After 100 rounds or convergence, the final global model is validated on a held-out central test set or via a secure validation protocol.

- The model is distributed to all clients for POC use.
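The aggregation step at the heart of this cycle is simple enough to show in a few lines. The sketch below implements the FedAvg weighted average in plain NumPy rather than through the Flower API, so it illustrates the arithmetic only; the client weights and example counts are made up.

```python
import numpy as np

def federated_average(client_updates):
    """Weighted average of client model weights (FedAvg aggregation).

    client_updates: list of (weights, n_examples) pairs, where weights is a
    list of NumPy arrays (one per layer) with matching shapes across clients.
    """
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("clients reported zero training examples")
    n_layers = len(client_updates[0][0])
    # Each client contributes in proportion to its local dataset size.
    return [
        sum(weights[layer] * (n / total) for weights, n in client_updates)
        for layer in range(n_layers)
    ]

# Three hypothetical hospitals with a single-layer "model".
updates = [
    ([np.array([1.0, 2.0])], 100),
    ([np.array([3.0, 4.0])], 300),
    ([np.array([5.0, 6.0])], 600),
]
global_weights = federated_average(updates)
```

Only these weight arrays and example counts leave each institution; the raw sensor data stay local.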

Diagram 3: Federated learning cycle for private multi-institutional data.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for AI-Integrated Biosensor Experiments

| Item / Reagent Solution | Function in AI-Biosensor Research | Example Product / Specification |

|---|---|---|

| Bench-top Optical Biosensor System | Generates raw, high-resolution kinetic or spectral data for model training. | Biacore 8K (Cytiva) for SPR kinetics; EPRUI Label-free Interferometry Array for high-throughput screening. |

| Multi-analyte Biomarker Panel | Provides ground truth labels for supervised learning of multiplexed detection models. | Human Cytokine 30-Plex Panel (Thermo Fisher) for inflammation models; Custom SARS-CoV-2 Variant RBD Mix (ACROBiosystems) for immunoassay development. |

| Synthetic Sensor Data Generator | Creates augmented/simulated data to overcome small experimental datasets. | Custom Conditional GAN (PyTorch) trained on initial sensorgrams; Optical Wave Simulation Software (COMSOL). |

| Federated Learning Software Stack | Enables privacy-preserving collaborative model training across institutions. | Flower AI Framework; NVIDIA FLARE; Docker containers for client environment standardization. |

| Model Explainability Toolkit | Interprets "black-box" DL model decisions, critical for clinical validation. | SHAP (SHapley Additive exPlanations) for feature importance; Grad-CAM visualizations for CNN attention on spectral inputs. |

| High-Performance Computing (HPC) Unit | Accelerates model training on large-scale sensor datasets (images, spectra, kinetics). | NVIDIA DGX Station with 4x A100 GPUs; Google Cloud TPU v4 instances for transformer model training. |

Application Notes on AI-Integrated Optical Biosensors for Point-of-Care Diagnostics

1. Introduction & Quantitative Impact of Current Unmet Needs

The disparity in diagnostic access between high-resource and low-resource settings remains a primary driver of global health inequity. The following table quantifies key challenges and the potential impact of advanced POC solutions.

Table 1: Unmet Needs and POC Diagnostic Impact Potential

| Unmet Need Parameter | Current Status (LMICs) | Target with Advanced POC | Data Source (2023-2024) |

|---|---|---|---|

| Time-to-Diagnosis (e.g., TB) | 2-6 weeks (culture) | < 30 minutes | WHO Global Tuberculosis Report 2023 |

| Pathogen Antibiotic Resistance (AMR) Profiling | 48-72 hours (central lab) | < 2 hours (direct from sample) | Review in Nature (Jan 2024) |

| Maternal Health (Pre-eclampsia biomarker detection) | Often unavailable | < 15 minutes at clinic | Study in The Lancet Global Health (Feb 2024) |

| Disease Outbreak (e.g., Dengue/Chikungunya) Serotyping | Centralized PCR, days delay | < 1 hour, multiplexed | WHO Dengue Guidelines 2024 Update |

2. Core Experimental Protocol: Multiplexed Detection of Febrile Illness Pathogens Using a Plasmonic Biosensor Chip with AI-Assisted Spectral Analysis

2.1 Objective: To simultaneously detect and differentiate dengue virus NS1, Salmonella typhi OMP, and Plasmodium falciparum HRP2 antigens from a single 10 µL serum sample at clinically relevant thresholds.

2.2 Detailed Protocol:

- Materials: Functionalized gold nanoprism sensor chip (see Reagent Solutions), multi-channel microfluidic cartridge, portable SPR/reflectometric imaging spectrometer, AI model embedded on edge device.

- Sample Preparation: Dilute 10 µL of patient serum 1:5 in low-salt running buffer (10 mM PBS, pH 7.4). Filter using a 0.22 µm centrifugal filter to remove particulates.

- Chip Priming: Load the sample into the microfluidic cartridge inlet. Initiate flow at 5 µL/min for 2 minutes to prime the sensor surface.

- Association Phase: Increase flow to 20 µL/min for 10 minutes, allowing analyte binding to chip-immobilized capture antibodies.

- Dissociation Phase: Switch to pure running buffer at 20 µL/min for 5 minutes to wash away non-specifically bound material.

- Data Acquisition: The spectrometer captures spectral shift maps (Δλmax) for each functionalized sensor spot at 0.1 Hz throughout the assay.

- AI-Integrated Analysis: Raw spectral image stacks are processed in real-time by a convolutional neural network (CNN) trained on >10,000 spectral fingerprints. The model:

- Denoises signals using a wavelet transform algorithm.

- Deconvolutes overlapping spectral signals from multiplexed spots.

- Quantifies concentration based on shift kinetics, outputting pathogen identity and concentration in ng/mL via a pre-calibrated standard curve.
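The final quantification step relies on a pre-calibrated standard curve. One simple way to apply such a curve is interpolation on a log-concentration axis, sketched below with NumPy. The six calibration points are invented for illustration, and the dilution factor of 5 assumes that "1:5" means a five-fold dilution; both should be replaced with assay-specific values.

```python
import numpy as np

def concentration_from_shift(shift_nm, cal_conc_ng_ml, cal_shift_nm):
    """Map a spectral shift to concentration via a monotonic standard curve.

    Interpolates linearly in log10(concentration). Returns None when the
    shift falls outside the calibrated range instead of extrapolating.
    """
    cal_conc = np.asarray(cal_conc_ng_ml, dtype=float)
    cal_shift = np.asarray(cal_shift_nm, dtype=float)
    order = np.argsort(cal_shift)
    cal_shift, cal_conc = cal_shift[order], cal_conc[order]
    if np.any(np.diff(cal_shift) <= 0):
        raise ValueError("calibration shifts must be strictly increasing")
    if shift_nm < cal_shift[0] or shift_nm > cal_shift[-1]:
        return None
    log_conc = np.interp(shift_nm, cal_shift, np.log10(cal_conc))
    return float(10.0 ** log_conc)

# Hypothetical six-point calibration (ng/mL vs. shift in nm).
CAL_CONC = [1.0, 3.0, 10.0, 30.0, 100.0, 300.0]
CAL_SHIFT = [0.2, 0.5, 1.0, 1.6, 2.1, 2.4]
DILUTION_FACTOR = 5.0  # assumed meaning of the 1:5 sample dilution

on_point = concentration_from_shift(1.0, CAL_CONC, CAL_SHIFT)
between = concentration_from_shift(1.3, CAL_CONC, CAL_SHIFT)
out_of_range = concentration_from_shift(3.0, CAL_CONC, CAL_SHIFT)
serum_conc = on_point * DILUTION_FACTOR  # back-calculated to undiluted serum
```

Refusing to extrapolate is a deliberate choice here: readings beyond the calibrated range are better flagged for re-testing than reported as numbers.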

3. Visualization of Workflow and Signaling

Diagram 1: AI-Integrated POC Biosensor Workflow

Diagram 2: Optical Signaling Pathway on a Nanoplasmonic Surface

4. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for AI-Optical POC Biosensor Development

| Reagent/Material | Function in Development/Assay | Example & Critical Specification |

|---|---|---|

| Functionalized Nanoplasmonic Chip | Signal transduction core; must exhibit high sensitivity and stable functionalization. | Gold nanoprism array on SiO2 substrate. Functionalized with carboxylated PEG-thiol for antibody coupling. Lot-to-lot uniformity <5% CV. |

| Stable Capture Probes | High-affinity, specific recognition elements for target immobilization. | Recombinant monoclonal antibody fragments (scFv) or DNA aptamers. Must be lyophilization-stable for shelf life. |

| Multiplexed Microfluidic Cartridge | Manages sample and reagent flow over sensor spots with precision. | Injection-molded cyclic olefin copolymer (COC) with integrated valves. Must prevent cross-contamination between channels. |

| Spectral Calibration Standards | Enables quantitative correlation between spectral shift and analyte concentration. | Recombinant antigen panels in synthetic serum matrix. Six-point concentration range covering clinical decision thresholds. |

| AI Training Dataset | Foundation for the machine learning model's accuracy and generalizability. | Curated spectral shift kinetic files (.csv) from known positive/negative samples, including common interferents. Minimum 10,000 entries. |

This application note details recent progress in AI-integrated optical biosensors, specifically for point-of-care (POC) diagnostic applications. It provides a snapshot of key 2024 breakthroughs, structured data, and actionable protocols to accelerate translational research.

Table 1: 2024 Benchmark Performance of AI-Enhanced Optical Biosensors

| Sensor Platform | Target Analyte | LOD (Quantitative) | Assay Time (min) | AI Model Used (Primary Function) | Clinical Sensitivity/Specificity |

|---|---|---|---|---|---|

| Plasmonic Fiber Tip | Cardiac Troponin I | 0.08 ng/mL | 8 | CNN (Spectral Denoising & Peak Identification) | 96.7% / 98.1% |

| Photonic Crystal (Label-Free) | IL-6 (Cytokine Storm) | 5.2 pg/mL | 15 | Transformer (Multiplexed Kinetics Deconvolution) | 94.3% / 99.0% |

| Quantum Dot FRET | SARS-CoV-2 Nucleocapsid Gene | 15 copies/µL | 25 | Random Forest (FRET Efficiency Classifier) | 98.5% / 97.2% |

| MXene-enhanced SERS | Pancreatic Cancer Exosomal miRNA-21 | 0.8 aM | 40 | 1D-CNN (SERS Fingerprint Recognition) | 99.1% / 95.8% |

Experimental Protocol: Multiplexed Detection on a Plasmonic Chip with AI Analysis

Objective: To simultaneously detect three sepsis biomarkers (Procalcitonin, C-Reactive Protein, IL-6) from 1 µL of human serum using a nanohole array plasmonic sensor and an embedded CNN for real-time quantification.

Materials & Reagents:

- Nanohole array gold chip (Knight Optical)

- Integrated microfluidic cartridge (MicruX Technologies)

- Capture antibody cocktail (anti-Procalcitonin, anti-CRP, anti-IL-6)

- PBS-T (0.05% Tween 20) wash buffer

- Serum samples (patient-derived or spiked)

- Portable wavelength-interrogation system (Insplorion)

- Raspberry Pi 5 with pre-trained CNN model (TensorFlow Lite).

Procedure:

- Chip Functionalization: Inject 10 µL of the capture antibody cocktail into the microfluidic channel. Incubate for 30 minutes at 25°C to allow passive adsorption to the gold surface. Wash with 100 µL PBS-T.

- Sample Introduction & Binding: Introduce 1 µL of serum sample, diluted in 9 µL of PBS, into the channel. Allow target binding for 12 minutes at a flow rate of 0.5 µL/min. Wash with 50 µL PBS-T.

- Real-Time Spectral Acquisition: Initiate the portable reader. Acquire a transmission spectrum (500-900 nm) every 10 seconds for the duration of the binding and wash steps.

- AI-Enhanced Analysis: The spectral time-series is streamed to the Raspberry Pi. The pre-trained CNN model processes each spectrum to:

- Subtract baseline drift and system noise.

- Deconvolute the multiplexed resonance wavelength shifts.

- Output concentration estimates for all three analytes in ng/mL.

- Data Output: Results are displayed on the integrated screen within 15 minutes of sample introduction, including confidence intervals from the model.
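As a classical point of comparison for the CNN-based analysis, the resonance shift can also be tracked directly from each transmission spectrum. The sketch below is not the CNN described in the protocol; it assumes a single, well-separated transmission dip and uses a centroid estimate, and the function names, the `depth_fraction` threshold, and the synthetic spectra are illustrative assumptions only.

```python
import numpy as np

def dip_centroid(wavelengths_nm, transmission, depth_fraction=0.5):
    """Estimate the resonance wavelength as the centroid of the transmission dip."""
    w = np.asarray(wavelengths_nm, dtype=float)
    t = np.asarray(transmission, dtype=float)
    depth = t.max() - t  # dip depth relative to the spectrum maximum
    # Keep only the deepest part of the dip so the flat wings do not bias the centroid
    weights = np.where(depth >= depth_fraction * depth.max(), depth, 0.0)
    return float(np.sum(w * weights) / np.sum(weights))

def resonance_shift_series(wavelengths_nm, spectra, n_baseline=6):
    """Resonance shift (nm) of every spectrum relative to the first n_baseline spectra."""
    peaks = np.array([dip_centroid(wavelengths_nm, s) for s in spectra])
    return peaks - peaks[:n_baseline].mean()

# Synthetic demo (assumed data): a Gaussian dip that red-shifts by 2 nm over 60 spectra
wl = np.linspace(500.0, 900.0, 801)
centers = 700.0 + np.linspace(0.0, 2.0, 60)
spectra = [1.0 - 0.4 * np.exp(-0.5 * ((wl - c) / 15.0) ** 2) for c in centers]
shifts = resonance_shift_series(wl, spectra, n_baseline=1)
```

A trace like `shifts` is useful mainly as a sanity check: if the learned model and this simple tracker disagree strongly on clean, single-analyte samples, the acquisition or the training data deserve a closer look.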

Diagram 1: AI-powered plasmonic POC workflow.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in AI-Optical Biosensing |

|---|---|

| Functionalized Nanoplasmonic Substrates | Provide the optical signal (RI change) upon biomolecular binding. Pre-conjugated surfaces save protocol time. |

| Stable Quantum Dot-Antibody Conjugates | Act as bright, photostable FRET donors or direct emission labels for ultra-sensitive detection. |

| Multiplexed Capture Antibody Panels | Validated, non-interfering antibody cocktails for simultaneous detection of biomarker panels. |

| AI Training Datasets (Spectral Libraries) | Curated, labeled spectral data (e.g., wavelength shift vs. concentration) for robust model training. |

| Microfluidic Cartridges with Integrated Lenses | Precisely deliver sample, minimize volume, and can incorporate optical elements for simplified readout. |

Signaling Pathway: AI-Driven SERS for Cancer Exosome Analysis

Exosomal surface proteins and internal miRNA cargo are key targets. This diagram illustrates the logical and analytical pathway from sample to diagnosis using a SERS-based sensor.

Diagram 2: AI-SERS exosome analysis pathway.

Building the Future: Design, Integration, and Real-World Applications

This application note details the hardware-software co-design methodologies enabling the development of a high-sensitivity, AI-integrated optical biosensor platform for point-of-care (POC) diagnostics. The co-design approach is critical for achieving the requisite performance, miniaturization, and intelligent data analysis at the edge.

Core System Architecture and Quantitative Performance Targets

The system architecture is designed around a feedback loop where hardware specifications inform algorithm design and vice-versa. Key quantitative targets are summarized below.

Table 1: Co-Design Performance Specifications & Outcomes

| Subsystem | Design Parameter | Target Specification | Co-Design Implication |

|---|---|---|---|

| Optical Sensor | Detector Resolution | 12 MP, 3.45 µm pixel pitch | Determines max spatial sampling for multiplexing; defines raw data volume. |

| | Light Source Stability | <0.5% intensity fluctuation | Informs software normalization and denoising algorithm requirements. |

| | Signal-to-Noise Ratio (SNR) | >30 dB at 1 fM target conc. | Sets minimum threshold for AI model's classification confidence. |

| Embedded Processor | Compute Performance | ≥ 2 TOPS (INT8) | Constrains complexity of deployable neural network (e.g., # of layers, ops). |

| | Memory Bandwidth | >50 GB/s | Limits batch size and input resolution for real-time inference. |

| | Power Consumption | < 5 W for full assay | Dictates thermal design and battery life for portable use. |

| AI/Software | Inference Latency | < 3 seconds per sample | Drives hardware accelerator selection (e.g., NPU vs. GPU). |

| | Quantization Scheme | INT8 Post-Training Quantization | Requires hardware support for low-precision arithmetic. |

| | Limit of Detection (LoD) | 0.1 fM in 10% serum | Joint outcome of sensor SNR and algorithm robustness. |
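To make the "raw data volume" implication concrete, the arithmetic can be sketched as follows. The 12 MP resolution comes from the table; the 2 bytes per pixel (16-bit storage), the 2 Hz frame rate, and the 300 s acquisition window are assumptions chosen for illustration, and the helper name is hypothetical.

```python
def raw_data_rate_mb_s(megapixels, bytes_per_pixel, frames_per_second):
    """Uncompressed full-frame data rate in MB/s (megapixels * bytes/pixel * frames/s)."""
    return megapixels * bytes_per_pixel * frames_per_second

# Assumed values: 12 MP sensor, 16-bit pixels, 2 frames per second, 300 s assay
rate_mb_s = raw_data_rate_mb_s(12, 2, 2.0)
total_gb = rate_mb_s * 300 / 1000

print(f"{rate_mb_s:.1f} MB/s, {total_gb:.1f} GB per assay")
```

Under these assumptions a full-frame stream would be far larger than what needs to reach the model, which is one reason region-of-interest extraction is usually placed early in the on-device pipeline.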

Experimental Protocol: End-to-End Co-Design Validation

Protocol Title: Iterative Validation of Sensor Fabrication, Data Acquisition, and AI Model Performance.

Objective: To holistically validate the system by measuring the final diagnostic outcome (LoD, specificity) as a function of co-designed parameters.

Materials & Reagents:

- Functionalized photonic crystal (PC) sensor chips.

- Target analyte (e.g., IL-6 protein) and isotype control in serially diluted spiked human serum.

- Optical biosensor prototype with embedded compute module (e.g., Jetson Orin Nano).

- Reference bench-top spectrometer.

Procedure:

- Hardware Characterization:

- Mount a pristine, functionalized sensor chip.

- Acquire 100 baseline interferometric images using the integrated sensor under controlled temperature.

- Calculate per-pixel mean intensity and temporal noise (standard deviation) to generate a system noise map.

- Biochemical Assay & Data Acquisition:

- Introduce samples (n=5 per concentration) across a 3-log range (1 fM to 1 pM) onto the sensor surface.

- For each sample, initiate the embedded acquisition software. The software triggers image capture at 2 Hz for 300 seconds.

- Simultaneously, raw image data is streamed to both the embedded processor and (via USB) a host PC for reference.

- On-Device vs. Server-Grade Processing:

- On-Device: The embedded AI pipeline executes in real-time: (a) Image ROI extraction and noise-filtering using a pre-loaded calibration map, (b) Feature extraction (wavelength shift, intensity change), (c) Inference via a quantized neural network, (d) Output of concentration and confidence score on the integrated display.

- Host PC: Raw data is processed offline using a high-precision (FP32) server-grade AI model and classical curve-fitting algorithms.

- Co-Design Analysis:

- Compare the LoD and specificity results from the on-device and host-PC pipelines in Step 3.

- Correlate any performance discrepancy (>10%) with specific hardware bottlenecks (e.g., quantization error, memory-limited model architecture).

- Refine the quantized model or adjust acquisition parameters (e.g., frame averaging) and iterate the validation.
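The noise-map step and the discrepancy check in the co-design analysis can be sketched in a few lines of NumPy. This is a minimal illustration on simulated frames, not the platform's acquisition code; `noise_map`, `relative_discrepancy`, and the example LoD values are hypothetical.

```python
import numpy as np

def noise_map(frames):
    """Per-pixel mean and temporal noise from a stack shaped (n_frames, height, width)."""
    stack = np.asarray(frames, dtype=float)
    return stack.mean(axis=0), stack.std(axis=0, ddof=1)

def relative_discrepancy(on_device_value, reference_value):
    """Relative difference between the on-device result and the host-PC reference."""
    return abs(on_device_value - reference_value) / abs(reference_value)

# Simulated baseline stack (assumed): 100 frames, mean level 100, temporal noise sigma 2
rng = np.random.default_rng(0)
frames = 100.0 + rng.normal(0.0, 2.0, size=(100, 32, 32))
mean_img, std_img = noise_map(frames)

# Hypothetical LoDs in fM: quantized on-device model vs. FP32 host model
needs_iteration = relative_discrepancy(0.13, 0.10) > 0.10
```

The resulting `std_img` can serve as the calibration map used for noise filtering, and `needs_iteration` flags a gap above the 10% threshold that would trigger another refinement round.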

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 2: Essential Components for Co-Design Implementation

| Item | Function in Co-Design Context |

|---|---|

| Photonic Crystal Sensor Chip | The transducing element. Its quality factor (Q-factor) and functionalization chemistry directly determine the signal magnitude and noise floor, bounding achievable AI performance. |

| Tunable Monochromatic LED Source | Provides precise excitation. Software-controlled tuning enables spectral sweep acquisition, generating rich datasets for spectral-feature-based AI models. |

| Embedded AI Compute Module (e.g., NVIDIA Jetson, Coral Dev Board) | Prototyping platform containing CPU, GPU, and/or NPU. Allows direct benchmarking of different AI model architectures against power/performance constraints. |

| High-Fidelity Simulation Software (e.g., Lumerical, COMSOL) | Models optical field distribution and sensor response in silico before fabrication. Used to generate synthetic training data for AI models, de-risking hardware development. |

| Microfluidic Flow Cell & Precision Pump | Enables automated, repeatable sample introduction. Integration with software via GPIO/API is essential for validating complete "sample-to-answer" workflows. |

System Workflow and Signaling Pathway Visualizations

Title: Co-Designed Optical Biosensor Data Pathway

Title: Iterative Hardware-Software Co-Design Protocol

This protocol details the construction of a robust data pipeline, a critical component for a broader thesis on AI-integrated optical biosensors for point-of-care (POC) diagnostics. The pipeline transforms raw, often noisy, optical signals (e.g., from surface plasmon resonance, interferometry, or fluorescence-based biosensors) into structured, curated datasets suitable for training machine learning (ML) and artificial intelligence (AI) models. The goal is to enable robust disease biomarker detection and quantification directly at the POC, accelerating diagnostics and drug development research.

Title: Data Pipeline Workflow for Optical Biosensor AI Integration

Detailed Protocols & Application Notes

Protocol: Acquisition & Pre-Processing of Raw Optical Sensor Signal

Objective: To acquire and condition raw optical biosensor data for downstream analysis, minimizing instrumental and environmental noise.

Materials & Equipment:

- AI-integrated optical biosensor (e.g., SPR, optical waveguide, CMOS image sensor-based).

- Data acquisition (DAQ) system (e.g., National Instruments).

- Microfluidic flow cell and precision pump.

- Buffer solutions (PBS, HEPES).

- Computing workstation with Python (NumPy, SciPy, Pandas) or MATLAB.

Procedure:

- Sensor Calibration: Prior to sample injection, collect a 5-minute baseline signal in running buffer at a stable temperature (±0.1°C). Record the mean (µ_baseline) and standard deviation (σ_baseline).

- Sample Injection & Data Acquisition: Using the automated pump, inject the analyte sample (e.g., serum spiked with a target biomarker) over the sensor surface. Synchronize the injection trigger with the DAQ system.

- Data Logging: Record the primary signal (e.g., resonance wavelength shift, intensity, or phase) at a minimum sampling rate of 10 Hz. Save data in a lossless format (e.g., .tdms, .h5, or .csv with timestamps).

- Basic Pre-Processing:

- Offset Correction: Subtract µ_baseline from the entire signal trace.

- Denoising: Apply a Savitzky-Golay filter (window length=21, polynomial order=3) to smooth high-frequency electronic noise without significantly distorting the binding kinetics.

- Drift Correction: For long runs, fit a linear or polynomial model to control (buffer-only) regions and subtract it from the entire dataset.

- Output: A cleaned time-series vector of the optical response, ready for feature extraction.
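The three pre-processing steps can be chained as in the sketch below, which uses `scipy.signal.savgol_filter` with the window length and polynomial order given in the procedure. The function name, the use of one mask for both offset and drift estimation, and the synthetic trace are assumptions made for illustration.

```python
import numpy as np
from scipy.signal import savgol_filter

def preprocess_trace(t, signal, baseline_mask, window_length=21, polyorder=3, drift_order=1):
    """Offset-correct, smooth, and drift-correct a single sensor trace."""
    t = np.asarray(t, dtype=float)
    signal = np.asarray(signal, dtype=float)
    # Offset correction: subtract the mean of the buffer-only baseline
    corrected = signal - signal[baseline_mask].mean()
    # Denoising: Savitzky-Golay smoothing
    smoothed = savgol_filter(corrected, window_length=window_length, polyorder=polyorder)
    # Drift correction: fit a low-order polynomial to buffer-only points, subtract everywhere
    coeffs = np.polyfit(t[baseline_mask], smoothed[baseline_mask], deg=drift_order)
    return smoothed - np.polyval(coeffs, t)

# Synthetic demo (assumed): 10 Hz sampling, 60 s baseline, then a binding curve with drift
rng = np.random.default_rng(1)
t = np.arange(0.0, 180.0, 0.1)
binding = np.where(t > 60.0, 1.0 - np.exp(-(t - 60.0) / 20.0), 0.0)
raw = 5.0 + 0.002 * t + binding + rng.normal(0.0, 0.02, t.size)
clean = preprocess_trace(t, raw, baseline_mask=t < 60.0)
```

Extrapolating a drift model fitted only on the pre-injection baseline becomes less reliable for long runs, so a post-dissociation buffer segment may be worth including in `baseline_mask` when it is available.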

Table 1: Common Pre-Processing Techniques & Parameters

| Technique | Primary Function | Typical Parameters | Applicable Sensor Type |

|---|---|---|---|

| Savitzky-Golay Filter | Smoothing, Noise Reduction | Window: 15-25 pts, Poly Order: 2-3 | All time-series signals |

| Wavelet Denoising | Multi-resolution Noise Removal | Wavelet: 'sym4', Level: 3-5 | Signals with non-stationary noise |

| Moving Average | Low-pass Filtering | Window: 1-5 sec of data | Slow kinetic measurements |

| Baseline Subtraction | Remove Systemic Offset | Polynomial Fit (Order 1-2) | All sensors |

Protocol: Feature Extraction & Engineering from Sensorgrams

Objective: To convert the processed time-series sensorgram into a quantitative feature vector that encodes binding kinetics, affinity, and concentration information.

Materials & Equipment:

- Processed sensorgram data from Protocol 3.1.

- Software for kinetic analysis (e.g., Scrubber, BioLogic, or custom Python scripts with libraries like LMFIT).

Procedure:

- Segmentation: Identify key regions in the sensorgram: baseline (B), association phase (A), and dissociation phase (D). Use the injection trigger timestamp for alignment.

- Feature Calculation: Extract the following feature categories for each sample injection:

- Amplitude Features: Maximum response (R_max), equilibrium response (R_eq).

- Kinetic Features: Initial association slope (dR/dt_assoc), dissociation rate constant (k_d) estimated by fitting to a single exponential decay.

- Integrated Features: Area under the curve (AUC) for the entire binding event or specific phases.

- Shape Descriptors: For label-free sensors, extract Fourier transform coefficients of the binding phase.

- Dimensionality Reduction (Optional): If the number of extracted features is large (>50), apply Principal Component Analysis (PCA) to reduce collinearity and create orthogonal principal components for AI model input.

- Output: A tabular dataset (e.g., .csv or .feather file) where each row is a sample and each column is a calculated feature or principal component.
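A minimal sketch of the amplitude, kinetic, and integrated features is given below. It assumes a noise-free, baseline-corrected sensorgram with known injection and dissociation times; the function names, the 10 s slope window, and the use of the last 20 association points as R_eq are illustrative choices, and the Fourier shape descriptors are omitted.

```python
import numpy as np

def trapezoid_area(t, y):
    """Area under y(t) by the trapezoidal rule."""
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(t)))

def extract_features(t, r, t_inject, t_dissoc, slope_window_s=10.0):
    """Return a small feature dictionary for one binding event."""
    t = np.asarray(t, dtype=float)
    r = np.asarray(r, dtype=float)
    assoc = (t >= t_inject) & (t < t_dissoc)
    dissoc = t >= t_dissoc
    early = assoc & (t < t_inject + slope_window_s)
    slope = np.polyfit(t[early], r[early], 1)[0]
    # Single-exponential decay: ln R = ln R0 - k_d * (t - t_dissoc)
    td, rd = t[dissoc], r[dissoc]
    positive = rd > 0
    k_d = -np.polyfit(td[positive] - t_dissoc, np.log(rd[positive]), 1)[0]
    return {
        "R_max": float(r.max()),
        "R_eq": float(r[assoc][-20:].mean()),  # assumes a plateau was reached
        "assoc_slope": float(slope),
        "k_d": float(k_d),
        "AUC_assoc": trapezoid_area(t[assoc], r[assoc]),
    }

# Synthetic demo (assumed): injection at 60 s, dissociation from 240 s, true k_d = 0.01 1/s
t = np.arange(0.0, 540.0, 0.1)
r0 = 100.0 * (1.0 - np.exp(-0.05 * 180.0))
r = np.where(t < 60.0, 0.0,
             np.where(t < 240.0, 100.0 * (1.0 - np.exp(-0.05 * (t - 60.0))),
                      r0 * np.exp(-0.01 * (t - 240.0))))
features = extract_features(t, r, t_inject=60.0, t_dissoc=240.0)
```

The log-linear fit is convenient but weights low-signal points heavily once noise is present; a nonlinear least-squares fit (for example with LMFIT) is usually the more robust choice on real data.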

Table 2: Key Extracted Features from a Typical Binding Sensorgram

| Feature Category | Specific Feature | Description | Biological Relevance |

|---|---|---|---|

| Amplitude | ΔR_max (RU or nm) | Maximum binding response | Proportional to analyte concentration |

| Kinetic | k_a (1/Ms) | Association rate constant | Binding affinity & on-rate |

| Kinetic | k_d (1/s) | Dissociation rate constant | Complex stability & off-rate |

| Derived | K_D (M) = k_d/k_a | Equilibrium dissociation constant | Affinity strength |

| Integral | AUC_assoc (a.u.) | Area during association | Total binding energy/information |

Title: Feature Extraction Process from Sensorgram

Protocol: Integration of Clinical Labels & Dataset Curation

Objective: To merge extracted sensor features with ground truth clinical/biological labels, creating the final AI-ready dataset.

Materials & Equipment:

- Extracted feature table from Protocol 3.2.

- Clinical metadata (e.g., patient ID, disease status, biomarker concentration from gold-standard assay like ELISA).

- Database or spreadsheet software (e.g., SQLite, Pandas DataFrame).

Procedure:

- Label Sourcing: For each biosensor sample, obtain the corresponding ground truth label. This could be:

- Categorical: Disease state (Healthy vs. Diseased), pathogen strain.

- Continuous: Concentration (from reference assay), clinical score.

- Ensure patient/sample IDs are anonymized and unique.

- Data Merging: Use a unique sample identifier to merge the feature table and the label table. Verify alignment integrity (no duplicated, dropped, or mismatched sample IDs).

- Quality Control & Imputation:

- Remove samples where the sensor signal failed (e.g., R_max < 3·σ_baseline).

- Check for missing features. Use median imputation for continuous features or create a "missing" indicator for categorical ones, sparingly.

- Train-Test-Split: Perform a stratified split (e.g., 70/15/15) on the dataset to create training, validation, and test sets. Stratification ensures each set has similar proportions of target labels. Crucially, split by patient ID to ensure all samples from the same patient are in only one set, preventing data leakage.

- Final Dataset Artifacts: Save three distinct files: train.csv, validation.csv, test.csv. Document the dataset version, split methodology, and feature descriptions in a README.md file.
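The quality-control filter and the patient-level split can be sketched with the standard library alone. This is a simplified illustration: it splits by patient ID but does not stratify by label, and the row layout, field names, and helper functions are assumptions rather than a prescribed schema.

```python
import random

def qc_filter(rows, sigma_baseline):
    """Drop samples whose R_max is below 3 * sigma_baseline."""
    return [row for row in rows if row["R_max"] >= 3 * sigma_baseline]

def split_by_patient(rows, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign whole patients to train/validation/test so no patient spans two sets."""
    patients = sorted({row["patient_id"] for row in rows})
    random.Random(seed).shuffle(patients)
    n_train = round(fractions[0] * len(patients))
    n_val = round(fractions[1] * len(patients))
    groups = {
        "train": set(patients[:n_train]),
        "validation": set(patients[n_train:n_train + n_val]),
        "test": set(patients[n_train + n_val:]),
    }
    return {name: [r for r in rows if r["patient_id"] in ids] for name, ids in groups.items()}

# Hypothetical feature rows: 20 patients, 3 samples each
rows = [{"patient_id": f"P{p:02d}", "sample": s, "R_max": 0.5 + 0.25 * s}
        for p in range(20) for s in range(3)]
kept = qc_filter(rows, sigma_baseline=0.2)  # removes the R_max = 0.5 samples
splits = split_by_patient(kept)
```

When label stratification is also required, a grouped and stratified splitter such as scikit-learn's `StratifiedGroupKFold` is a common choice; the sketch above only guarantees the no-leakage property.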

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for AI-Optical Biosensor Research

| Item | Function in the Pipeline | Example/Note |

|---|---|---|

| Functionalization Kit | Immobilizes biorecognition elements (BREs) on sensor surface. | Streptavidin/biotin system, amine-coupling chemistry (NHS/EDC). |

| Running Buffer | Provides stable pH and ionic strength for binding events. | 10mM HEPES, 150mM NaCl, pH 7.4, 0.005% surfactant P20 (for SPR). |

| Reference Analyte | Generates labeled data for model training and validation. | Recombinant protein at known concentrations (e.g., 0.1 pM – 100 nM). |

| Negative Control | Defines non-specific binding baseline for data labeling. | Sample matrix without target analyte (e.g., blank serum, isotype control). |

| Regeneration Solution | Removes bound analyte, regenerating the sensor surface. | 10mM Glycine-HCl, pH 2.0-3.0. Essential for generating multiple data points per chip. |

| Data Acquisition Software | Captures raw time-series signal from the detector. | LabVIEW, custom Python DAQ scripts, or proprietary sensor software with export functionality. |

| Computational Environment | Platform for data pre-processing, feature extraction, and AI modeling. | Python (SciPy, Pandas, Scikit-learn, PyTorch/TensorFlow) or R. |

Application Notes: AI-Integrated Optical Biosensors in POC Diagnostics

AI-Driven Noise Reduction

Optical biosensors in point-of-care (POC) settings are plagued by heterogeneous noise from environmental fluctuations, sample matrix effects, and instrument drift. Modern AI approaches, particularly Convolutional Neural Networks (CNNs) and Denoising Autoencoders (DAEs), are trained on paired clean/noisy spectral or image data to isolate the true biosignal. This is critical for low-concentration analyte detection in complex bodily fluids like whole blood or saliva.

Automated Feature Extraction

Traditional feature engineering for biosensor outputs (e.g., peak wavelength, intensity, full width at half maximum) is manual and subjective. AI models, including 1D-CNNs and Transformer-based architectures, automatically extract latent, high-dimensional features from raw temporal or spectral data. These features often correlate with subtle physical phenomena (e.g., plasmon coupling, fluorescence resonance energy transfer efficiency) that are non-linear predictors of analyte identity and binding kinetics.

Concentration Prediction & Quantification

Regression models (e.g., Gradient Boosting, Support Vector Regression, and shallow Neural Networks) map extracted features to quantitative analyte concentrations. This can reduce the need for standard calibration curves for every new batch or device, with the aim of one-time model training for near-universal calibration. Recent advances integrate noise reduction, feature extraction, and regression into single end-to-end deep learning pipelines.

Table 1: Performance Comparison of AI Models in Optical Biosensor Applications

| AI Task | Model Architecture | Typical Input Data | Reported Performance Metric | Typical Improvement vs. Traditional Method |

|---|---|---|---|---|

| Noise Reduction | Denoising Autoencoder (DAE) | Noisy Spectra (Raman/SERS) | SNR Improvement: 15-25 dB | 300-500% improvement in detection limit (lower LoD) |

| Feature Extraction | 1D Convolutional Neural Net | Time-series Reflectivity | Feature Dimensionality Reduction: 1000:50 | Enables detection of 2+ analytes in multiplexed assays |

| Concentration Prediction | Gradient Boosting Regressor | Extracted Feature Vectors | R² Score: 0.96-0.99; MAE: <5 pM | Reduces calibration time by >90% |

Experimental Protocols

Protocol: Training a Denoising Autoencoder for SERS Biosensor Data

Objective: To develop a DAE model that removes stochastic noise from Surface-Enhanced Raman Spectroscopy (SERS) data for enhanced detection of a target biomarker (e.g., cardiac troponin I).

Materials: See "Scientist's Toolkit" below.

Procedure:

- Data Acquisition: Collect 10,000 SERS spectra from a functionalized gold nanopillar substrate exposed to a range of troponin I concentrations (0-100 ng/mL) in synthetic serum.

- Noisy/Clean Pair Generation: For each experimentally collected spectrum (considered "clean"), computationally generate 10 noisy variants by adding Gaussian white noise, Poisson noise (shot noise), and simulated baseline drift.

- Model Architecture: Implement a symmetric autoencoder in PyTorch/TensorFlow. The encoder: three 1D convolutional layers (kernel sizes: 5,3,3) with ReLU and max-pooling. Bottleneck: dense layer. Decoder: three transposed convolutional layers.

- Training: Train the DAE using Mean Squared Error (MSE) loss between the decoder's output and the original "clean" spectrum. Use an Adam optimizer (lr=0.001) for 100 epochs.

- Validation: Apply the trained DAE to a held-out test set of entirely new, experimentally noisy spectra. Validate using SNR calculation and by comparing the limit of detection (LoD) from dose-response curves before and after denoising.
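The noisy/clean pair generation step can be sketched as follows. The noise magnitudes, the photon-count scale used for shot noise, and the quadratic drift model are all assumed values for illustration and would need to be matched to the real instrument; the function names are hypothetical.

```python
import numpy as np

def make_noisy_variants(clean, n_variants=10, gaussian_sigma=0.02,
                        photon_scale=500.0, drift_amplitude=0.05, seed=0):
    """Generate noisy copies of one spectrum: shot noise + Gaussian noise + baseline drift."""
    rng = np.random.default_rng(seed)
    clean = np.asarray(clean, dtype=float)
    x = np.linspace(0.0, 1.0, clean.size)
    variants = []
    for _ in range(n_variants):
        # Shot noise: treat the scaled intensity as an expected photon count
        shot = rng.poisson(np.clip(clean, 0.0, None) * photon_scale) / photon_scale
        gauss = rng.normal(0.0, gaussian_sigma, clean.size)
        # Slowly varying baseline modeled as a random low-order polynomial
        drift = drift_amplitude * (rng.uniform(-1, 1) * x + rng.uniform(-1, 1) * x ** 2)
        variants.append(shot + gauss + drift)
    return np.stack(variants)

def snr_db(clean, noisy):
    """Signal-to-noise ratio in dB, treating (noisy - clean) as the noise."""
    noise = noisy - clean
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum(noise ** 2))

# Synthetic two-peak spectrum standing in for a measured SERS spectrum
x = np.arange(1024)
clean = np.exp(-0.5 * ((x - 300) / 10.0) ** 2) + 0.6 * np.exp(-0.5 * ((x - 700) / 10.0) ** 2)
noisy = make_noisy_variants(clean)
snrs = np.array([snr_db(clean, v) for v in noisy])
```

Each row of `noisy` pairs with the same `clean` target during training, and `snr_db` applied before and after denoising gives the SNR improvement used in validation.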

Protocol: End-to-End Concentration Prediction using a 1D-CNN

Objective: To create a single model that ingests raw interferometric reflectance imaging sensor (IRIS) data and directly outputs predicted antigen concentration.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Dataset Preparation: Assemble a dataset of 5,000 IRIS time-series signals (pixel intensity vs. time) from assays for interleukin-6 (IL-6). Each signal is labeled with the ground-truth concentration measured via ELISA.

- Preprocessing: Normalize all time-series to a standard length (1000 timepoints) and normalize intensity values to a 0-1 range.

- Model Design: Construct a 1D-CNN with:

- Two initial 1D convolutional blocks for noise suppression.

- Three subsequent 1D convolutional blocks for hierarchical feature extraction.

- A global average pooling layer.

- Two final dense layers for regression, outputting a single concentration value.

- Training & Evaluation: Split data 70/15/15 (train/validation/test). Use a Huber loss function to mitigate outlier influence. Train until validation loss plateaus. Evaluate on the test set using R² coefficient and Mean Absolute Percentage Error (MAPE).
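The preprocessing step and the loss and evaluation metrics named above can be written out in plain NumPy, independent of the deep learning framework. The sketch below is illustrative; the function names, the linear-interpolation resampling, and the optional `lo`/`hi` scaling bounds are assumptions.

```python
import numpy as np

def resample_and_scale(t, y, n_points=1000, lo=None, hi=None):
    """Resample a trace to n_points by linear interpolation and scale it to the 0-1 range."""
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    grid = np.linspace(t[0], t[-1], n_points)
    resampled = np.interp(grid, t, y)
    lo = resampled.min() if lo is None else lo
    hi = resampled.max() if hi is None else hi
    if hi <= lo:
        return np.zeros(n_points)
    return np.clip((resampled - lo) / (hi - lo), 0.0, 1.0)

def huber_loss(y_true, y_pred, delta=1.0):
    """Mean Huber loss: quadratic for small errors, linear for large ones."""
    err = np.abs(np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float))
    quad = np.minimum(err, delta)
    return float(np.mean(0.5 * quad ** 2 + delta * (err - quad)))

def r2_score(y_true, y_pred):
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def mape(y_true, y_pred):
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(100.0 * np.mean(np.abs((y_true - y_pred) / y_true)))

trace = resample_and_scale(np.arange(300), np.arange(300) ** 2)
```

Scaling each trace by its own minimum and maximum discards absolute amplitude, which often carries concentration information; passing dataset-wide `lo` and `hi` values computed on the training set is one way to preserve it.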

Visualizations

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for AI-Optical Biosensor Development

| Item | Function & Relevance |

|---|---|

| Functionalized Nanoparticles | Gold/silver nanoparticles conjugated with antibodies/DNA aptamers. Serve as the sensing substrate for SERS/LSPR. Provide the raw optical signal for AI analysis. |

| Synthetic Biomolecular Matrices | Pre-formulated solutions mimicking the viscosity and interferent profile of blood, saliva, or urine. Essential for training AI models on realistic, noisy data. |

| Benchmark Protein/Analyte Panels | Pre-measured, highly accurate vials of target analytes (e.g., cytokines, cardiac markers). Provide the ground-truth labels for supervised AI model training. |

| Optical Calibration Standards | Stable materials with known optical properties (e.g., Raman shift standards, refractive index liquids). Used to pre-calibrate sensors, ensuring input data consistency for AI. |

| Modular Microfluidic Cells | Disposable or reusable flow cells that interface the biological sample with the optical sensor. Standardization here reduces non-biological noise variability. |

Within the ongoing research thesis on AI-integrated optical biosensors for point-of-care (POC) diagnostics, a pivotal challenge is the accurate interpretation of complex, multiplexed signals. This application note details protocols and methodologies for employing artificial intelligence (AI) to decode overlapping biomarker signatures from optical biosensor arrays, transforming raw signal data into clinically actionable diagnostic outputs.

Key Application Protocols

Protocol: Multiplexed Lateral Flow Assay (LFA) Imaging & Data Acquisition

Objective: To generate a standardized image dataset from a multiplexed optical LFA for AI model training.

Materials:

- Multiplexed LFA strip (e.g., 4-10 test lines, nitrocellulose membrane).

- Sample containing target biomarkers (e.g., cytokines, cardiac panel).

- Smartphone-based imaging module with controlled LED illumination (λ = 450nm, 520nm, 630nm).

- Calibration reference card.

Procedure:

- Apply 100 µL of sample to the LFA strip.

- Allow the assay to develop for 15 minutes at room temperature.

- Place the strip in the imaging module.

- Capture three images under the three different monochromatic LED illuminations.

- Save images in lossless format (e.g., .TIFF) with filename encoding assay ID, timestamp, and illumination wavelength.
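One way to encode the assay ID, timestamp, and illumination wavelength in the filename, and to recover them later during dataset assembly, is sketched below. The naming pattern and both helper functions are hypothetical conventions, not part of the protocol.

```python
from datetime import datetime, timezone

def lfa_image_filename(assay_id, wavelength_nm, captured_at):
    """Build a filename like '<assay>_<UTC timestamp>_<wavelength>nm.tiff'."""
    stamp = captured_at.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    return f"{assay_id}_{stamp}_{wavelength_nm}nm.tiff"

def parse_lfa_image_filename(name):
    """Recover (assay_id, capture time, wavelength in nm) from a filename."""
    stem = name.rsplit(".", 1)[0]
    assay_id, stamp, wavelength = stem.rsplit("_", 2)
    captured_at = datetime.strptime(stamp, "%Y%m%dT%H%M%SZ").replace(tzinfo=timezone.utc)
    return assay_id, captured_at, int(wavelength[:-2])

captured = datetime(2024, 5, 1, 12, 30, 0, tzinfo=timezone.utc)
names = [lfa_image_filename("LFA-0001", wl, captured) for wl in (450, 520, 630)]
```

Splitting from the right means assay IDs may themselves contain underscores without breaking the parser.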

Protocol: AI Model Training for Spectral Deconvolution

Objective: To train a convolutional neural network (CNN) to deconvolve spectrally overlapping signals from quantum dot (QD) labels.

Workflow:

- Data Curation: Create a ground-truth dataset with known concentrations of single biomarkers and pre-mixed combinations.

- Pre-processing: Extract region-of-interest (ROI) pixel intensities for each test line across all three illumination channels. Normalize intensities using reference control lines.

- Model Architecture: Implement a U-Net based CNN with input layer size [ROI_width, ROI_height, 3 channels].

- Training: Use 70% of data for training, 15% for validation, 15% for testing. Train for 100 epochs using Adam optimizer and mean squared error loss.

- Output: The model outputs a matrix of deconvolved, biomarker-specific signal intensities.
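To illustrate what deconvolution means here, the sketch below uses classical linear unmixing by least squares rather than the U-Net described in the workflow. It assumes each channel reading is a linear mixture of the label signals; the mixing matrix values are invented for the example and would in practice be measured from single-biomarker calibration samples.

```python
import numpy as np

def normalize_by_control(test_line_intensities, control_line_intensities):
    """Express each channel's test-line intensity relative to the control line."""
    return (np.asarray(test_line_intensities, dtype=float)
            / np.asarray(control_line_intensities, dtype=float))

def linear_unmix(measured, mixing_matrix):
    """Solve measured = mixing_matrix @ abundances by least squares, clipping negatives."""
    abundances, *_ = np.linalg.lstsq(mixing_matrix, measured, rcond=None)
    return np.clip(abundances, 0.0, None)

# Hypothetical mixing matrix: rows are the three illumination channels,
# columns are the relative responses of three QD labels in each channel
mixing = np.array([[0.9, 0.3, 0.1],
                   [0.4, 0.8, 0.3],
                   [0.1, 0.2, 0.7]])
true_signal = np.array([1.0, 0.5, 2.0])
measured = mixing @ true_signal
recovered = linear_unmix(measured, mixing)
```

A linear baseline of this kind is a useful reference: a trained CNN is mainly justified where it outperforms unmixing, for example when crosstalk is nonlinear or varies across the strip.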

Data Presentation

Table 1: Performance Metrics of AI-Deconvolution vs. Standard Readout for a Triplex Cardiac Panel (hs-cTnI, NT-proBNP, CRP)

| Metric | Standard LFA Reader (Raw) | AI-Deconvolution Model | Improvement |

|---|---|---|---|

| Limit of Detection (LOD) | 0.5 ng/mL | 0.1 ng/mL | 5x |

| Cross-Reactivity Error | 15-20% | < 3% | ~6x Reduction |

| Assay Time | 15 min | 15 min (+ 30 sec AI processing) | Negligible |

| Accuracy (AUC) in Validation Cohort | 0.82 | 0.96 | +0.14 |

Table 2: Essential Research Reagent Solutions for AI-Integrated Multiplexed Biosensing

| Item | Function in Protocol | Example Product/Specification |

|---|---|---|

| Multiplexed Optical LFA Strips | Provides the sensing platform with spatially or spectrally encoded test lines. | Luminescence-based or Quantum Dot (QD)-labeled strips. |

| Quantum Dot Conjugates (525nm, 585nm, 625nm) | Spectral multiplexing labels; different biomarkers conjugated to distinct QDs. | CdSe/ZnS core-shell, carboxylated surface. |

| Smartphone-based Multi-LED Imager | Consistent, standardized image acquisition for training and deployment. | Custom module with 450nm, 520nm, 630nm LEDs. |

| Reference Biomarker Panels | Creates ground-truth data for AI model training with known concentrations. | Recombinant protein mix, clinically validated. |

| AI Training Software Stack | Environment for developing and training deconvolution models. | Python, TensorFlow/PyTorch, OpenCV. |

Visualization of Workflows and Pathways

AI-Integrated Multiplexed Detection Workflow

Spectral Overlap and AI Deconvolution Logic

Application Note 1: AI-Enhanced Surface Plasmon Resonance for SARS-CoV-2 Variant Detection

Background & Principle

This application details the use of an AI-integrated, multiplexed Surface Plasmon Resonance (SPR) platform for the rapid, label-free detection and differentiation of SARS-CoV-2 variants of concern at the point-of-care. The system couples high-affinity antigen-antibody interactions on a functionalized gold sensor chip with a convolutional neural network (CNN) that analyzes real-time binding kinetics (association/dissociation rates) to classify variants.

Key Quantitative Data

Table 1: Performance Metrics of AI-SPR for SARS-CoV-2 Variants

| Variant Target | Limit of Detection (pM) | Time to Result (min) | AI Classification Accuracy (%) | Cross-Reactivity with Seasonal CoV (%) |

|---|---|---|---|---|

| Wild-type (WT) | 150 | 8.2 | 99.1 | <0.5 |

| Delta (B.1.617.2) | 180 | 8.5 | 98.7 | <0.5 |

| Omicron (BA.5) | 210 | 9.1 | 97.5 | 1.2 |

Protocol: Multiplexed SPR Chip Functionalization and Assay

- Sensor Chip Preparation: Use a commercially available carboxymethylated dextran-coated gold SPR chip (e.g., CM5 series).

- Surface Activation: Inject a 1:1 mixture of 0.4 M EDC (1-ethyl-3-(3-dimethylaminopropyl)carbodiimide) and 0.1 M NHS (N-hydroxysuccinimide) over the sensor surface at a flow rate of 10 µL/min for 7 minutes.

- Ligand Immobilization: Dilute recombinant SARS-CoV-2 Spike protein RBD (for pan-detection) and variant-specific monoclonal antibodies (for differentiation) in 10 mM sodium acetate buffer (pH 5.0) to 50 µg/mL. Inject each ligand sequentially over designated flow cells for 10 minutes to achieve a capture level of 8000-12000 Response Units (RU).

- Surface Blocking: Inject 1.0 M ethanolamine-HCl (pH 8.5) for 7 minutes to deactivate excess NHS esters.

- Sample Analysis: Dilute nasopharyngeal swab eluate in HBS-EP+ running buffer (10 mM HEPES, 150 mM NaCl, 3 mM EDTA, 0.005% v/v Surfactant P20, pH 7.4). Inject sample at 30 µL/min for 3 minutes (association phase), followed by buffer alone for 5 minutes (dissociation phase). Regenerate the surface with a 30-second pulse of 10 mM glycine-HCl (pH 2.0).

- AI-Enhanced Data Processing: The raw sensorgram data (RU vs. time) from all flow cells is streamed to a pre-trained CNN model (architecture: 3 convolutional layers, 2 dense layers). The model extracts kinetic features and outputs both a positive/negative result and a probability distribution for variant classification.
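For intuition about the kinetic curves the classifier sees, a 1:1 Langmuir binding model can generate synthetic sensorgrams with the same 3-minute association and 5-minute dissociation timing. This is a standard textbook model used here as a sanity-check tool, not the trained CNN; the rate constants, concentration, and R_max below are hypothetical.

```python
import numpy as np

def simulate_sensorgram(conc_m, k_a, k_d, r_max,
                        t_assoc_s=180.0, t_dissoc_s=300.0, dt=1.0):
    """Simulate a 1:1 Langmuir sensorgram (RU vs. time) for one analyte concentration."""
    k_obs = k_a * conc_m + k_d                 # observed association rate (1/s)
    r_eq = r_max * k_a * conc_m / k_obs        # equilibrium response at this concentration
    t_a = np.arange(0.0, t_assoc_s, dt)
    assoc = r_eq * (1.0 - np.exp(-k_obs * t_a))
    r_end = r_eq * (1.0 - np.exp(-k_obs * t_assoc_s))  # response when injection stops
    t_d = np.arange(0.0, t_dissoc_s, dt)
    dissoc = r_end * np.exp(-k_d * t_d)
    return np.concatenate([t_a, t_assoc_s + t_d]), np.concatenate([assoc, dissoc])

# Hypothetical parameters: 1 nM analyte, k_a = 1e5 1/(M s), k_d = 1e-3 1/s, R_max = 100 RU
t, r = simulate_sensorgram(conc_m=1e-9, k_a=1e5, k_d=1e-3, r_max=100.0)
```

Curves generated with different `k_a` and `k_d` pairs show how variants with distinct binding kinetics produce differently shaped traces, which is the information the classifier is expected to exploit.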

Research Reagent Solutions

| Item | Function |

|---|---|

| Carboxymethylated Dextran (CM5) SPR Chip | Gold sensor surface with a hydrogel matrix for high-density ligand immobilization. |

| EDC/NHS Crosslinker Kit | Activates carboxyl groups on the sensor surface for covalent amine coupling. |

| Recombinant Viral Antigens/Antibodies | High-purity ligands for specific capture of target analytes from clinical samples. |

| HBS-EP+ Running Buffer | Provides consistent ionic strength and pH, minimizes non-specific binding. |

| Glycine-HCl Regeneration Solution | Gently removes bound analyte without damaging the immobilized ligand layer. |

AI-SPR Workflow for Viral Variant Detection

Application Note 2: AI-Driven Photonic Ring Resonance for Cardiac Troponin I Quantification

Background & Principle

This note describes a protocol for ultra-sensitive detection of cardiac troponin I (cTnI) using a silicon photonic microring resonator biosensor integrated with a machine learning regression algorithm. The sensor measures wavelength shifts caused by cTnI binding to antibody-functionalized microrings. AI compensates for non-specific binding and environmental noise, enabling precise quantification in finger-prick blood volumes.

Key Quantitative Data

Table 2: Performance of AI-Photonic cTnI Assay vs. Clinical Analyzer

| Parameter | AI-Photonic Sensor | Central Lab Chemiluminescence |

|---|---|---|

| Dynamic Range | 0.5 - 10,000 pg/mL | 10 - 50,000 pg/mL |

| Limit of Detection (LoD) | 0.2 pg/mL | 5 pg/mL |

| Assay Time | 12 minutes | 45-60 minutes |

| Correlation Coefficient (R²) | 0.987 | N/A (Reference) |

| CV (%) at 5 pg/mL | 6.5% | 8.2% |

Protocol: Microring Functionalization and cTnI Detection

- Sensor Chip Cleaning: Sonicate the silicon nitride microring resonator chip in acetone, isopropanol, and deionized water (5 min each). Dry under N₂ stream.

- Surface Activation: Place chip in a UV-ozone cleaner for 15 minutes to generate hydroxyl groups. Immediately immerse in 2% (v/v) (3-aminopropyl)triethoxysilane (APTES) in anhydrous toluene for 1 hour. Rinse with toluene and ethanol, then cure at 110°C for 15 min.

- Antibody Immobilization: Incubate the aminated surface with a 1 mM solution of heterobifunctional linker NHS-PEG₄-Maleimide in PBS for 1 hour. Rinse. Spot 100 µL of 25 µg/mL anti-cTnI monoclonal antibody (thiolated) in PBS on individual microrings via a microfluidic manifold. Incubate overnight at 4°C.

- Blocking: Flow 1% BSA in PBS with 0.05% Tween-20 for 1 hour to passivate the surface.

- Measurement: Load 10 µL of diluted whole blood sample (1:10 in assay buffer) into the microfluidic chamber. Monitor resonant wavelength shift (Δλ) in real-time for 10 minutes.

- AI-Enhanced Quantification: A Random Forest regression model, trained on Δλ kinetic curves from known cTnI concentrations and control rings, processes the data. It accounts for baseline drift and matrix effects to output a final concentration in pg/mL.
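Before any regression model is applied, control rings are commonly used to remove drift shared by all rings. The sketch below shows that referencing step and the back-calculation for sample dilution; it is not the Random Forest model itself, the traces are simulated, and the dilution factor of 10 assumes that "1:10" means one part sample in ten parts total.

```python
import numpy as np

def referenced_shift(sensor_rings_pm, control_rings_pm):
    """Subtract the mean control-ring trace from each sensing-ring trace (rings x time)."""
    sensor = np.asarray(sensor_rings_pm, dtype=float)
    control = np.asarray(control_rings_pm, dtype=float).mean(axis=0)
    return sensor - control

def undiluted_concentration(measured_pg_ml, dilution_factor=10.0):
    """Convert the concentration measured in the diluted sample back to whole blood."""
    return measured_pg_ml * dilution_factor

# Simulated traces (assumed): common thermal drift plus a binding signal on sensing rings
t = np.arange(0.0, 600.0, 1.0)
drift = 0.01 * t                                # pm, shared by all rings
binding = 5.0 * (1.0 - np.exp(-t / 120.0))      # pm, sensing rings only
sensor = np.stack([binding + drift, binding + drift])
control = np.stack([drift, drift])
corrected = referenced_shift(sensor, control)
```

Feeding referenced traces such as `corrected` into the regression model leaves it with less nuisance variation to learn, although residual matrix effects still need to be represented in the training data.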

Research Reagent Solutions

| Item | Function |

|---|---|

| Silicon Nitride Microring Resonator Chip | High-Q optical resonator for label-free, multiplexed biomarker detection. |

| APTES (Aminosilane) | Forms a self-assembled monolayer to introduce amine groups on the sensor surface. |

| NHS-PEG₄-Maleimide Crosslinker | Spacer arm for oriented antibody immobilization, reducing steric hindrance. |

| Thiolated Anti-cTnI Antibody | Capture probe specific to cTnI; thiol group allows directed coupling to maleimide linker. |

| High-Sensitivity Wavelength Interrogation System | Precisely measures sub-picometer shifts in resonant wavelength. |

cTnI Detection via Photonic Resonance & AI

Application Note 3: AI-Powered Fluorescence-Based Lateral Flow for Early Cancer Biomarker Panel

Background & Principle

This protocol outlines the development of a multiplexed, quantum dot (QD)-based lateral flow assay (LFA) strip for the simultaneous detection of a three-protein panel (PSA, CA-15-3, CEA) relevant for cancer screening. A smartphone-based reader captures fluorescence signals, and a support vector machine (SVM) algorithm integrates the multiplexed data to provide a risk stratification score, improving specificity over single-analyte tests.

Key Quantitative Data

Table 3: Analytical Sensitivity of AI-LFA for Cancer Biomarkers

| Biomarker | Target Cancer | LoD (AI-LFA) | LoD (Standard LFA) | Linear Range | Multiplexing Cross-Talk |

|---|---|---|---|---|---|

| PSA | Prostate | 0.1 ng/mL | 2 ng/mL | 0.1 - 200 ng/mL | < 3% |

| CA-15-3 | Breast | 2.0 U/mL | 15 U/mL | 2 - 500 U/mL | < 5% |

| CEA | Colorectal | 0.3 ng/mL | 5 ng/mL | 0.3 - 100 ng/mL | < 4% |

Protocol: Multiplex QD-LFA Strip Assembly and AI Readout

- Conjugate Pad Preparation: Mix streptavidin-coated QDs emitting at 525 nm, 605 nm, and 705 nm with biotinylated detection antibodies for PSA, CA-15-3, and CEA, respectively. Incubate for 45 minutes. Dispense the mixture onto a glass fiber pad and dry overnight at 37°C.

- Test Line Patterning: Dispense capture antibodies for each biomarker (at distinct spatial locations) and a control line antibody (anti-species IgG) onto a nitrocellulose membrane using a robotic dispenser.

- Strip Assembly: Laminate the sample pad, conjugate pad, membrane, and absorbent pad on a backing card. Cut into 4 mm wide strips.

- Assay Procedure: Apply 80 µL of serum sample to the sample pad. Allow the sample to migrate for 15 minutes at room temperature.

- Image Acquisition: Place the developed strip in a portable, dark-box reader containing a 365 nm LED excitation source. Capture fluorescence images using a smartphone camera with a long-pass emission filter.

- AI-Integrated Analysis: A custom app segments the image and extracts fluorescence intensity values for each test line. An SVM model, trained on a clinical dataset, takes the three-analyte concentration profile as input and outputs a "Low," "Intermediate," or "High" risk score based on multi-marker patterns.
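The intensity-extraction part of the analysis step can be sketched as follows. The sketch assumes the strip image has already been reduced to a one-dimensional intensity profile along the flow direction and that each line's position is known. The window sizes, the local background estimate, and the normalisation to the control line are illustrative assumptions; the app's actual segmentation and the SVM classifier are not reproduced here.

```python
from statistics import mean


def line_signal(profile, center, half_width=3, bg_gap=2, bg_width=4):
    """Background-subtracted integrated intensity of one line in a 1-D strip profile."""
    lo, hi = center - half_width, center + half_width + 1
    bg_lo, bg_hi = lo - bg_gap - bg_width, hi + bg_gap + bg_width
    if bg_lo < 0 or bg_hi > len(profile):
        raise ValueError("line is too close to the edge of the profile")
    # Local background: membrane pixels on both sides of the line
    background = mean(profile[bg_lo:lo - bg_gap] + profile[hi + bg_gap:bg_hi])
    return sum(value - background for value in profile[lo:hi])


def normalised_panel(profile, test_centers, control_center):
    """Ratio of each test-line signal to the control-line signal."""
    control = line_signal(profile, control_center)
    if control <= 0:
        raise ValueError("control line not detected; strip is invalid")
    return {name: line_signal(profile, c) / control for name, c in test_centers.items()}


def synthetic_profile(length, background, lines):
    """Build a made-up profile: flat background plus 7-pixel-wide rectangular lines."""
    profile = [float(background)] * length
    for center, amplitude in lines:
        for i in range(center - 3, center + 4):
            profile[i] += amplitude
    return profile


# Illustrative positions and amplitudes only (not measured data)
profile = synthetic_profile(100, 10, [(20, 30.0), (40, 15.0), (60, 0.0), (80, 60.0)])
panel = normalised_panel(profile, {"PSA": 20, "CA-15-3": 40, "CEA": 60}, control_center=80)
```

Normalising to the control line is one common way to reduce strip-to-strip and illumination variability before the values are passed to a classifier.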

Research Reagent Solutions

| Item | Function |

|---|---|

| Streptavidin-Coated Quantum Dots | Highly fluorescent, multiplexable nanolabels with distinct emission wavelengths. |

| Biotinylated Detection Antibodies | Bind both the target analyte and the QD reporter via streptavidin-biotin interaction. |

| Nitrocellulose Membrane with Defined Pores | Capillary flow medium for precise patterning of capture lines. |

| Portable Smartphone Fluorescence Reader | Contains uniform excitation and emission filtering for consistent quantitative imaging. |

AI-LFA for Multi-Cancer Biomarker Risk Score

Navigating Challenges: Optimization Strategies for Robust POC Performance

AI-integrated optical biosensors represent a transformative frontier in point-of-care (POC) diagnostics. However, their deployment in real-world, non-laboratory settings is hampered by significant noise sources categorized as Environmental Interference (e.g., ambient light fluctuations, temperature/humidity variance, mechanical vibration) and Sample Matrix Interference (e.g., non-specific binding, autofluorescence from biological components, scattering from lipids or cells, pH/ionic strength effects). This document outlines application notes and protocols to characterize, mitigate, and computationally correct for these interferences, thereby enhancing the robustness and reliability of POC biosensor data.

Quantitative Characterization of Common Interferents

Table 1: Magnitude of Signal Interference from Common Sample Matrix Components in Serum

| Interferent | Typical Concentration Range in Serum | Reported Signal Deviation (vs. Buffer) | Primary Interference Mechanism |

|---|---|---|---|

| Human Serum Albumin (HSA) | 35-50 mg/mL | +15% to +45% (Background Fluorescence) | Non-specific adsorption, background fluorescence (~350/450 nm ex/em). |

| Immunoglobulin G (IgG) | 8-16 mg/mL | +10% to +30% (Non-Specific Binding) | Non-specific binding to sensor surfaces or capture elements. |

| Lipids (Triglycerides) | 0.5-2.5 mg/mL | Up to +60% (Light Scattering) | Mie scattering, increasing optical density and baseline drift. |

| Hemoglobin | >0.1 mg/mL (in hemolysis) | -20% to -50% (Signal Quenching) | Inner-filter effect, absorbing excitation/emission light. |

| Bilirubin | 0.2-1.2 mg/dL | -10% to -25% (Fluorescence Quenching) | Fluorescence resonance energy transfer (FRET) quenching. |

Table 2: Impact of Environmental Variables on Optical Biosensor Performance

| Environmental Parameter | Tested Range | Typical Signal CV Increase | Recommended Control Strategy |

|---|---|---|---|

| Ambient Light (Stray) | 0-1000 lux | 5-25% (Photodetector noise) | Physical shrouding, optical bandpass filters, synchronous detection. |

| Temperature | 20°C - 30°C | 1-3% per °C (Kinetic effects) | Integrated Peltier control, reference channel for thermal drift compensation. |

| Mechanical Vibration | 10-100 Hz | Up to 15% (Baseline instability) | Vibration-damping mounts, time-averaged signal acquisition. |
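The synchronous detection strategy listed for stray ambient light can be illustrated with a minimal digital lock-in sketch. It assumes the excitation LED is modulated at a known frequency, so the fluorescence signal carries that modulation while ambient light appears as a constant offset plus 100 Hz lamp flicker. All frequencies, amplitudes, and function names are illustrative assumptions rather than parameters of any specific reader.

```python
import math


def lock_in_amplitude(samples, sample_rate_hz, mod_freq_hz):
    """Recover the amplitude of the component at mod_freq_hz by I/Q demodulation."""
    in_phase = 0.0
    quadrature = 0.0
    for k, value in enumerate(samples):
        phase = 2.0 * math.pi * mod_freq_hz * k / sample_rate_hz
        in_phase += value * math.sin(phase)
        quadrature += value * math.cos(phase)
    n = len(samples)
    # Averaging over whole modulation periods rejects DC and off-frequency light
    return 2.0 * math.hypot(in_phase / n, quadrature / n)


def simulated_detector(n, sample_rate_hz, mod_freq_hz, signal_amp,
                       ambient_dc, flicker_amp, flicker_hz=100.0):
    """Made-up photodetector trace: modulated fluorescence plus ambient light."""
    trace = []
    for k in range(n):
        t = k / sample_rate_hz
        trace.append(
            ambient_dc
            + flicker_amp * math.sin(2.0 * math.pi * flicker_hz * t)
            + signal_amp * math.sin(2.0 * math.pi * mod_freq_hz * t)
        )
    return trace


# 0.1 s of data at 20 kHz: a weak 1 kHz signal buried under much stronger ambient light
trace = simulated_detector(2000, 20000.0, 1000.0, signal_amp=0.2,
                           ambient_dc=5.0, flicker_amp=1.0)
recovered = lock_in_amplitude(trace, 20000.0, 1000.0)
```

The acquisition window here spans a whole number of periods of both the modulation and the flicker, which is what makes the rejection essentially exact in this toy case; real hardware would typically add a low-pass filter after demodulation.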

Experimental Protocols for Interference Assessment

Protocol 3.1: Systematic Evaluation of Sample Matrix Effects

Objective: To quantify the interference of individual serum components on a fluorescence-based immunosensor's limit of detection (LOD).

Materials: Purified target analyte, PBS (1X, pH 7.4), purified interferents (HSA, IgG, lipids), fluorescence biosensor platform.

Procedure:

- Prepare a calibration curve of the target analyte in pristine PBS (6 concentrations, n=3).

- Prepare identical analyte calibration curves spiked into solutions containing a fixed, physiologically relevant concentration of a single interferent (e.g., 45 mg/mL HSA).

- Run all samples on the biosensor using a standardized assay protocol (e.g., 10 min incubation).

- Plot dose-response curves. Calculate LOD (3σ/slope method) for each matrix.

- Compute the % Interference = [(LOD in Interferent - LOD in PBS) / LOD in PBS] × 100.

- Repeat for complex matrices (e.g., 10% synthetic serum, 10% human serum).
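The LOD and % Interference calculations in this protocol can be sketched in a few lines. The sketch assumes σ is the sample standard deviation of replicate blank readings and that the slope comes from an ordinary least-squares fit of the calibration curve; the function names and all numerical values are illustrative, not measured data.

```python
from statistics import mean, stdev


def calibration_slope(concentrations, signals):
    """Ordinary least-squares slope of signal versus concentration."""
    c_mean, s_mean = mean(concentrations), mean(signals)
    sxx = sum((c - c_mean) ** 2 for c in concentrations)
    sxy = sum((c - c_mean) * (s - s_mean) for c, s in zip(concentrations, signals))
    return sxy / sxx


def lod_3sigma(blank_signals, concentrations, signals):
    """LOD = 3 * (standard deviation of the blank) / (calibration slope)."""
    return 3.0 * stdev(blank_signals) / calibration_slope(concentrations, signals)


def percent_interference(lod_interferent, lod_pbs):
    """% Interference = (LOD in interferent - LOD in PBS) / LOD in PBS * 100."""
    return (lod_interferent - lod_pbs) / lod_pbs * 100.0


# Six-point calibration curves (mean of n=3 replicates per point), made-up numbers
conc_ng_ml = [0.0, 1.0, 2.0, 5.0, 10.0, 20.0]
signal_pbs = [100.0 * c + 5.0 for c in conc_ng_ml]   # clean buffer
signal_hsa = [80.0 * c + 20.0 for c in conc_ng_ml]   # lower slope, higher background

lod_pbs = lod_3sigma([4.0, 5.0, 6.0], conc_ng_ml, signal_pbs)
lod_hsa = lod_3sigma([18.0, 20.0, 22.0], conc_ng_ml, signal_hsa)
interference = percent_interference(lod_hsa, lod_pbs)
```

In this toy case the interferent both raises blank variability and lowers the calibration slope, so the LOD rises from 0.03 to 0.075 ng/mL, a 150% interference.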

Protocol 3.2: Environmental Robustness Testing

Objective: To characterize sensor performance under variable ambient light and temperature.

Materials: Biosensor device, calibrated light meter, temperature chamber, stable fluorescence reference standard (e.g., fluorophore-coated slide).

Procedure:

- Ambient Light Test: Place the biosensor and reference slide in a controlled enclosure.

- Vary ambient white light intensity (0, 200, 500, 1000 lux) using a dimmable source. Measure the reference signal output at each level over 5 minutes.

- Calculate the coefficient of variation (CV) of the signal at each lux level.

- Temperature Drift Test: Place the biosensor in a temperature chamber. Stabilize at 22°C.

- Acquire a continuous baseline signal from a buffer-filled chamber for 30 minutes.

- Ramp chamber temperature linearly from 22°C to 30°C over 60 minutes, recording the signal continuously.

- Derive the signal drift per °C (ΔSignal/ΔT).
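The two summary statistics from this protocol, the coefficient of variation at each light level and the signal drift per °C, can be computed as sketched below. The sketch assumes the drift is estimated as the least-squares slope of signal against chamber temperature over the ramp; the readings are illustrative values, not measurements.

```python
from statistics import mean, stdev


def coefficient_of_variation(samples):
    """CV in percent: sample standard deviation divided by the mean."""
    return stdev(samples) / mean(samples) * 100.0


def drift_per_degree(temps_c, signals):
    """Least-squares slope of signal versus temperature (signal units per °C)."""
    t_mean, s_mean = mean(temps_c), mean(signals)
    stt = sum((t - t_mean) ** 2 for t in temps_c)
    sts = sum((t - t_mean) * (s - s_mean) for t, s in zip(temps_c, signals))
    return sts / stt


# Reference-slide readings at each ambient light level (made-up numbers)
readings_by_lux = {
    0: [99.0, 100.0, 101.0],
    1000: [98.0, 100.0, 102.0],
}
cv_by_lux = {lux: coefficient_of_variation(r) for lux, r in readings_by_lux.items()}

# Temperature ramp from 22 °C to 30 °C with a made-up linear drift
temps_c = [22.0 + i for i in range(9)]
baseline = [1000.0 + 2.5 * (t - 22.0) for t in temps_c]
drift = drift_per_degree(temps_c, baseline)
```

Fitting a slope over the whole ramp, rather than dividing the end-to-end change by 8 °C, makes the estimate less sensitive to noise in the first and last readings.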

Mitigation Strategies and AI-Integrated Corrections

Physical & Chemical Mitigation

- Optical Filters & Shrouds: Use of bandpass interference filters matched to the source to exclude ambient light.

- Surface Passivation: Multi-component blocking layers (e.g., BSA + casein + surfactants like Tween-20) to minimize non-specific binding.

- Sample Pre-Treatment: Integrated microfluidic filters for erythrocyte/particulate removal; dilution buffers with chelators and ionic strength adjusters.

AI-Driven Signal Processing Workflow

AI models are trained to discriminate true analyte signal from complex background interference patterns.

AI Correction Model for Signal Denoising

Dual-Referencing Logical Workflow

A robust experimental design incorporating internal and external controls for real-time correction.

Dual-Reference Correction Workflow
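One simple way to realise a dual-reference correction is sketched below. The source does not specify the arithmetic, so the scheme here is an assumption: the internal (on-chip) reference channel is subtracted from the sample channel to remove shared drift and background, and the result is rescaled by the ratio of the external standard's nominal value to its measured value to compensate for instrument gain changes. All names and numbers are hypothetical.

```python
def dual_reference_correct(sample, internal_ref, standard_measured, standard_nominal):
    """Subtract the internal reference channel, then rescale by the external standard."""
    if len(sample) != len(internal_ref):
        raise ValueError("sample and reference traces must have the same length")
    if standard_measured <= 0:
        raise ValueError("external standard reading must be positive")
    # Gain factor: how far the instrument response has moved from its nominal value
    gain = standard_nominal / standard_measured
    return [(s - r) * gain for s, r in zip(sample, internal_ref)]


# Made-up traces: the reference channel tracks background, and the instrument
# currently reads the external standard 20% low (800 instead of 1000).
corrected = dual_reference_correct(
    sample=[105.0, 112.0, 120.0],
    internal_ref=[5.0, 6.0, 7.0],
    standard_measured=800.0,
    standard_nominal=1000.0,
)
```

Subtraction suits additive interference such as thermal drift or non-specific binding, while the multiplicative rescaling suits changes in source intensity or detector sensitivity; a real workflow may need only one of the two, or a learned correction in place of either.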

The Scientist's Toolkit: Key Reagent Solutions

Table 3: Essential Reagents for Combatting Interference in Optical Biosensing

| Reagent / Material | Function & Rationale | Example Product/Chemical |

|---|---|---|

| Surface Blocking Cocktail | Reduces non-specific protein adsorption. A blend of proteins and surfactants saturates uncovered sites. | 1% BSA, 0.5% Casein, 0.1% Tween-20 in PBS. |

| Synthetic Serum Matrix | Provides a consistent, ethically uncomplicated complex background for controlled interference studies. | Synthetic Serum from companies like BioReclamationIVT or prepared per CLSI guidelines. |

| Fluorescent Reference Standards | Stable, non-bleaching fluorophores for monitoring and correcting for instrument optical drift. | Fluorescent microspheres (e.g., from Thermo Fisher), or sealed cuvettes with [Ru(bpy)₃]²⁺. |

| Microfluidic Filtration Membrane | Removes particulates (cells, debris) from whole blood or crude samples pre-analysis to reduce scattering. | Integrated polyester or polycarbonate membranes with 0.2-0.8 µm pore size. |

| pH & Ionic Strength Adjustment Buffer | Normalizes sample conditions to minimize variable assay kinetics due to patient sample differences. | HEPES buffered saline (pH 7.4) with 150 mM NaCl, 1 mM Mg²⁺. |

| Quencher / Scatterer Spikes | Used as positive controls for interference during assay development and validation. | India Ink (light scattering), hemoglobin lysate (absorbance), high-triglyceride serum. |

The development of robust AI models for optical biosensor-based point-of-care (POC) diagnostics is critically hampered by the scarcity of large, high-quality, and diverse clinical datasets. This Application Note details practical strategies and experimental protocols for generating and augmenting data to train AI algorithms effectively, enabling accurate disease detection and biomarker quantification even with limited initial patient samples.

Core Strategies for Data Expansion

Synthetic Data Generation via Physics-Informed Models

Synthetic data mirrors the output of optical biosensors (e.g., spectral shifts, intensity changes, plasmonic responses) by mathematically modeling the underlying biophysics of analyte binding.

Protocol: Generating Synthetic Spectral Shift Data for a Label-Free Biosensor

- Objective: To create a dataset of synthetic reflectance spectra for training an AI model to predict analyte concentration.

- Materials: Computational software (Python with NumPy, SciPy), optical parameters (refractive index of sensor surface, analyte).

- Methodology:

- Define Base Model: Use the transfer matrix method or a simplified Fresnel equation to simulate the reflectance spectrum of a bare biosensor chip.

- Introduce Perturbation: Model the formation of a biomolecular layer upon analyte binding as a change in the local refractive index (∆n) using the de Feijter relation: ∆n = (dn/dc) * C * M / d, where (dn/dc) is the refractive index increment, C is the molar surface coverage, M is the molecular weight (so that C * M is the adsorbed mass per unit area), and d is the thickness of the biomolecular layer.

- Parameter Variation: Systematically vary key parameters (analyte concentration, layer thickness, non-specific binding noise) within physiologically plausible ranges to generate thousands of unique spectral outputs.

- Add Noise: Introduce realistic noise profiles (Gaussian, drift, spike) derived from empirical characterization of the actual biosensor hardware.

Table 1: Parameter Ranges for Synthetic Spectral Data Generation

| Parameter | Simulated Range | Physical Basis |

|---|---|---|

| Analyte Concentration | 1 pM – 100 nM | Typical dynamic range for protein biomarkers |

| Biomolecular Layer Thickness | 1 – 10 nm | Corresponds to monolayer of antibodies/proteins |

| Refractive Index Increment (dn/dc) | 0.18 – 0.21 mL/g | Standard for proteins in aqueous buffer |

| Gaussian Noise (σ) | 0.1 – 0.5% of signal | Instrumental readout noise |

| Baseline Drift | ± 0.05 RU/sec | Temperature or flow-induced drift |
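The generation workflow can be sketched with the Python standard library alone. To stay short, the sketch swaps in two simplifications that should be read as assumptions: a Lorentzian reflectance dip stands in for a full transfer-matrix simulation, and a Langmuir-type saturation curve maps concentration to resonance shift in place of an explicit refractive-index calculation. The dissociation constant, maximum shift, wavelength grid, spike parameters, and the constant baseline offset used in place of time-dependent drift are all hypothetical. The concentration and Gaussian noise ranges follow Table 1.

```python
import random

WAVELENGTHS_NM = [600.0 + 0.5 * i for i in range(201)]  # assumed 600-700 nm grid


def resonance_shift_nm(conc_molar, kd_molar=1e-9, max_shift_nm=2.0):
    """Langmuir-type saturation: shift grows with concentration, then plateaus."""
    return max_shift_nm * conc_molar / (conc_molar + kd_molar)


def clean_spectrum(shift_nm, center_nm=650.0, fwhm_nm=5.0, depth=0.8):
    """Lorentzian reflectance dip (stand-in for a transfer-matrix simulation)."""
    half = fwhm_nm / 2.0
    return [
        1.0 - depth / (1.0 + ((w - center_nm - shift_nm) / half) ** 2)
        for w in WAVELENGTHS_NM
    ]


def add_noise(spectrum, rng, sigma_frac, spike_prob=0.01, spike_size=0.05):
    """Add a baseline offset, signal-proportional Gaussian noise, and rare spikes."""
    offset = rng.uniform(-0.01, 0.01)
    noisy = []
    for r in spectrum:
        value = r + offset + rng.gauss(0.0, sigma_frac * r)
        if rng.random() < spike_prob:
            value += rng.choice((-1.0, 1.0)) * spike_size
        noisy.append(value)
    return noisy


def generate_dataset(n_samples, seed=0):
    """Return labelled synthetic spectra; a fixed seed makes the set reproducible."""
    rng = random.Random(seed)
    dataset = []
    for _ in range(n_samples):
        conc = 10.0 ** rng.uniform(-12.0, -7.0)   # log-uniform, 1 pM to 100 nM
        sigma_frac = rng.uniform(0.001, 0.005)    # 0.1-0.5% of signal
        spectrum = add_noise(clean_spectrum(resonance_shift_nm(conc)), rng, sigma_frac)
        dataset.append({"conc_molar": conc, "reflectance": spectrum})
    return dataset


dataset = generate_dataset(1000, seed=42)
```

Sampling concentration on a logarithmic scale keeps the low-picomolar end of the range as well represented as the nanomolar end, which matters when the model is expected to perform near the detection limit.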

Transfer Learning from Large Public Domains

Transfer learning pre-trains AI models on large, publicly available datasets from related domains (e.g., general image recognition, spectroscopic databases) and then fine-tunes them on small, specific clinical biosensor datasets.

Protocol: Transfer Learning for a Plasmonic Image Classifier

- Objective: To adapt a pre-trained convolutional neural network (CNN) to classify disease states from limited surface plasmon resonance imaging (SPRi) data.

- Materials: Pre-trained CNN (e.g., ResNet, VGG), public image dataset (e.g., ImageNet), in-house SPRi dataset (small, labeled).

- Methodology:

- Feature Extraction: Remove the final classification layer of the pre-trained CNN. Use the remaining network as a fixed feature extractor for your SPRi images.

- Fine-Tuning: Replace the final layer with a new one matching your disease classification categories. Train this new head, and optionally later layers of the base network, using your limited SPRi data.