Cost-Effectiveness Analysis in Medical Imaging: A Comprehensive Guide to Markov Modeling for Diagnostic Pathways

This article provides a comprehensive guide for researchers and healthcare decision-makers on applying Markov models to evaluate the cost-effectiveness of diagnostic imaging pathways.

Abstract

This article provides a comprehensive guide for researchers and healthcare decision-makers on applying Markov models to evaluate the cost-effectiveness of diagnostic imaging pathways. We explore the foundational principles of Markov modeling in the context of diagnostic imaging, detail step-by-step methodological approaches for constructing and parameterizing models, address common troubleshooting and optimization challenges, and examine validation techniques and comparative analyses against other modeling frameworks. The article synthesizes current best practices, addresses methodological pitfalls, and highlights the role of these models in informing evidence-based resource allocation and clinical guideline development for imaging strategies.

Understanding Markov Models: The Foundation for Imaging Pathway Economics

Defining the Role of Markov Models in Health Economic Evaluations for Imaging

Within the broader thesis on cost-effectiveness analysis (CEA) of diagnostic and therapeutic imaging pathways, Markov models serve as a foundational computational technique. They are uniquely suited to model chronic, progressive diseases where patient management is heavily informed by serial imaging. The model's core function is to simulate a hypothetical cohort of patients moving through a set of mutually exclusive "health states" (e.g., Pre-Diagnosis, Localized Disease, Advanced Disease, Post-Treatment Surveillance, Death) over discrete time cycles. Transitions between states are governed by probabilities, which can be directly informed by imaging results (e.g., probability of progression based on MRI findings) and associated costs and quality-of-life weights. This allows for the comparative evaluation of different imaging strategies (e.g., MRI vs. CT for cancer staging) on long-term clinical and economic outcomes.

Application Notes: Key Use Cases in Imaging

| Application Area | Role of Markov Model | Imaging-Dependent Parameters |

|---|---|---|

| Cancer Staging & Surveillance | Compare lifetime costs and outcomes of initial staging with advanced imaging (e.g., PET/CT) vs. conventional imaging. | Transition probabilities from localized to metastatic state; test sensitivity/specificity informing treatment decisions. |

| Cardiovascular Risk Stratification | Evaluate cost-effectiveness of coronary CT angiography (CCTA) vs. stress testing in patients with chest pain. | Probability of revascularization based on imaging findings; reduction in MI risk post-imaging. |

| Neurodegenerative Disease Monitoring | Assess value of serial MRI/PET in monitoring disease progression and guiding therapy in Alzheimer's. | Rates of transition between mild, moderate, and severe cognitive impairment states. |

| Treatment Response Assessment | Model the impact of early response assessment imaging (e.g., interim PET in lymphoma) on therapy switching and outcomes. | Probability of treatment continuation or change based on imaging response criteria. |

Core Quantitative Data for Model Inputs

Table 1: Example Data Sources for a Markov Model Evaluating MRI in Multiple Sclerosis Monitoring

| Parameter Type | Example Value | Source | Note |

|---|---|---|---|

| Transition Probability: Stable to Progressive | 0.08 per year | Clinical trial with MRI endpoints (Freedman et al., 2023) | Informed by new T2 lesion appearance. |

| Cost: Brain MRI with Contrast | $1,250 (USD) | Medicare Physician Fee Schedule (2024) | Includes technical and professional components. |

| Utility (QoL) for Stable Disease | 0.85 | EQ-5D survey data from observational study | Scale: 0 (death) to 1 (full health). |

| Utility Decrement for Relapse | -0.15 (for 3 months) | Systematic review (Briggs et al., 2022) | Applied for the cycle in which relapse occurs. |

| Sensitivity of MRI for Detecting Progression | 0.92 | Meta-analysis of diagnostic accuracy (Kim et al., 2023) | Informs model branch for imaging-guided treatment change. |

Experimental Protocol: Building a Markov Model for an Imaging Pathway

Protocol Title: Development and Analysis of a Markov Model to Assess the Cost-Effectiveness of PET/CT vs. CT Alone in Lung Cancer Staging.

Objective: To determine the incremental cost-effectiveness ratio (ICER) of using PET/CT for initial staging of non-small cell lung cancer.

Methodology:

- Define Health States: Create a state-transition diagram (see Diagram 1).

- Define Model Cycle and Time Horizon: Set cycle length to 3 months. Set time horizon to 10 years (40 cycles), used here as an approximation of a lifetime horizon for this population.

- Populate Transition Probabilities:

- Extract probabilities from published literature (e.g., probability of occult metastasis missed by CT but detected by PET/CT).

- Derive mortality rates from cancer registries (disease-specific) and life tables (background).

- Assign Costs & Utilities:

- Costs: Direct medical costs (imaging procedure, treatment modalities [surgery, chemotherapy], follow-up, palliative care).

- Utilities: Assign quality-of-life weights (utilities) to each health state from published preference-based studies.

- Implement Imaging Strategy Arms:

- Arm A (CT): Probabilities of correct/incorrect staging based on CT sensitivity/specificity.

- Arm B (PET/CT): Probabilities based on PET/CT sensitivity/specificity.

- Run Simulation & Analysis:

- Simulate a cohort of 100,000 patients through the model for both strategies.

- Calculate total costs, quality-adjusted life-years (QALYs), and life-years (LYs) for each.

- Compute ICER: (Cost_B - Cost_A) / (QALY_B - QALY_A), where A is the CT arm and B is the PET/CT arm.

- Conduct Sensitivity Analyses:

- One-Way Sensitivity Analysis: Vary each key parameter (e.g., cost of PET/CT, sensitivity) over a plausible range.

- Probabilistic Sensitivity Analysis (PSA): Run the model 10,000 times, sampling all parameters simultaneously from defined probability distributions (e.g., beta for probabilities, gamma for costs). Present results on a cost-effectiveness acceptability curve (CEAC).
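The ICER and PSA steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not the protocol's actual model: `staging_outcomes` is a hypothetical toy stand-in for the full Markov model, and every numeric input (sensitivities, costs, QALY payoffs, prevalence) is invented for demonstration only.

```python
import random

def icer(cost_a, qaly_a, cost_b, qaly_b):
    """Incremental cost per QALY gained for strategy B relative to strategy A."""
    delta_qaly = qaly_b - qaly_a
    if delta_qaly == 0:
        raise ValueError("ICER is undefined when incremental QALYs are zero")
    return (cost_b - cost_a) / delta_qaly

def draw_beta(rng, mean, n):
    """Beta draw for a probability, given its mean and an effective sample size n."""
    return rng.betavariate(mean * n, (1.0 - mean) * n)

def draw_gamma(rng, mean, se):
    """Gamma draw for a cost, matched to a mean and standard error (method of moments)."""
    shape = (mean / se) ** 2
    scale = se ** 2 / mean
    return rng.gammavariate(shape, scale)

def staging_outcomes(sensitivity, test_cost, prevalence=0.30):
    """Hypothetical toy model: expected cost and QALYs per patient for one arm."""
    detected = prevalence * sensitivity          # metastases found at staging
    missed = prevalence * (1.0 - sensitivity)    # occult metastases not found
    cost = test_cost + missed * 10000.0          # assumed cost of futile surgery
    qalys = detected * 1.2 + missed * 0.9 + (1.0 - prevalence) * 5.0  # assumed payoffs
    return cost, qalys

def run_psa(n_draws=10_000, seed=1):
    """Sample all uncertain inputs together; return (delta cost, delta QALY) per draw."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        cost_a, qaly_a = staging_outcomes(draw_beta(rng, 0.75, 200),
                                          draw_gamma(rng, 500.0, 50.0))
        cost_b, qaly_b = staging_outcomes(draw_beta(rng, 0.90, 200),
                                          draw_gamma(rng, 1400.0, 140.0))
        draws.append((cost_b - cost_a, qaly_b - qaly_a))
    return draws

def ceac(draws, thresholds):
    """Share of draws in which strategy B has the higher net monetary benefit."""
    curve = []
    for wtp in thresholds:
        favourable = sum(1 for d_cost, d_qaly in draws if wtp * d_qaly - d_cost > 0)
        curve.append(favourable / len(draws))
    return curve

psa_draws = run_psa(n_draws=2000)
acceptability = ceac(psa_draws, [0, 50_000, 100_000, 150_000])
```

Plotting `acceptability` against the willingness-to-pay thresholds gives the CEAC. In a real analysis, the toy function would be replaced by a full cohort simulation run once per parameter draw.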

Visualized Workflow and Model Structure

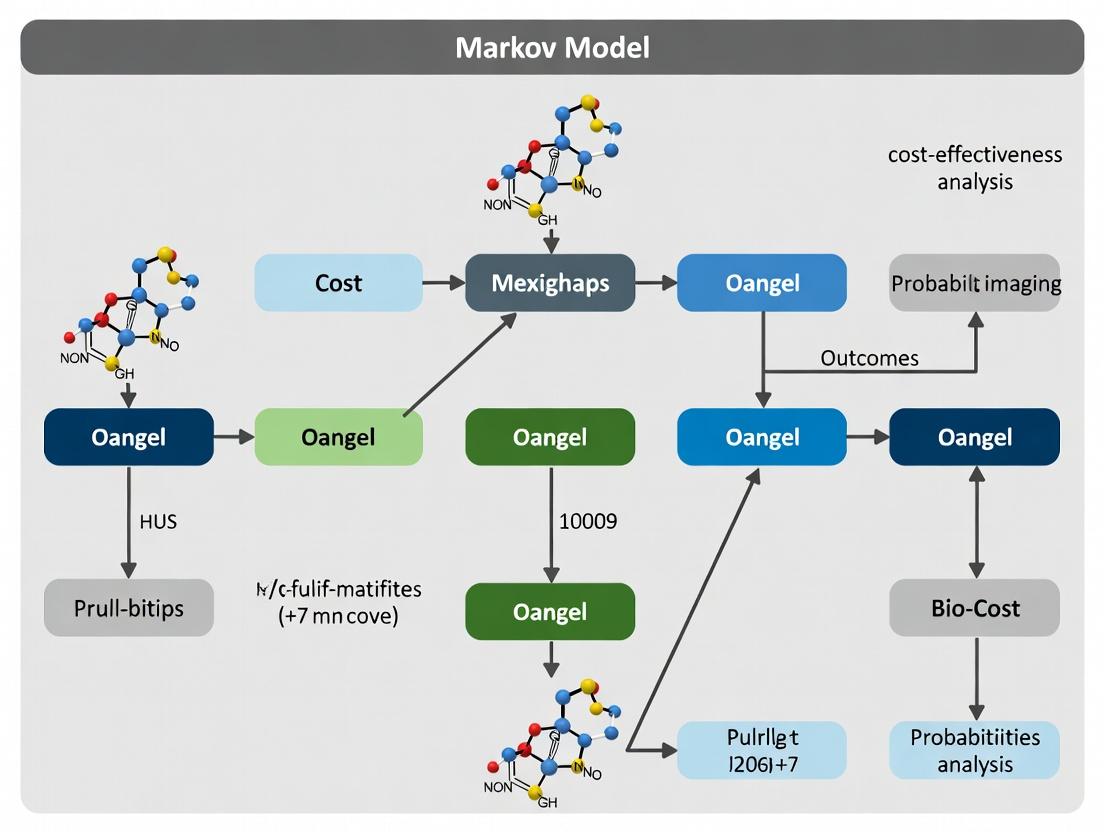

Diagram 1: Markov Model for Imaging-Based Staging

Diagram 2: Health Economic Modeling Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Markov Modeling for Imaging |

|---|---|

| Modeling Software (TreeAge Pro, R, SAS) | Primary platform for building, populating, running, and analyzing the Markov model. R is increasingly used for its transparency and PSA capabilities. |

| Systematic Literature Review Databases (PubMed, EMBASE, Cochrane Library) | Source for populating transition probabilities, test characteristics, utilities, and cost inputs with evidence. |

| Probabilistic Distributions Library (e.g., Beta, Gamma, Log-Normal) | Used in PSA to define uncertainty around input parameters (Beta for probabilities, Gamma for costs). |

| Cost Databases (Medicare Fee Schedules, NHS England Tariffs, HIRC data) | Provide standardized, geographically relevant cost inputs for imaging procedures and related healthcare services. |

| Quality of Life (QoL) Weight Registries (EQ-5D Value Sets, NHANES, Disease-Specific Studies) | Source for utility weights assigned to model health states, essential for QALY calculation. |

| Visualization Tools (Graphviz, Microsoft Visio, Lucidchart) | For creating clear state-transition diagrams and conceptual workflows for publications and presentations. |

In cost-effectiveness analysis (CEA) of diagnostic imaging pathways, Markov models provide a dynamic framework to simulate patient progression through defined health states over time. The accurate definition of health states, transition probabilities, cycle lengths, and outcome trace values is critical for modeling the long-term clinical and economic impact of imaging technologies (e.g., advanced MRI vs. CT for cancer staging). These models inform value-based decisions in drug development and healthcare policy by comparing the incremental cost per quality-adjusted life-year (QALY) gained between pathways.

Core Terminology & Quantitative Data

Table 1: Key Markov Modeling Terminology in Imaging Pathways

| Term | Definition in Imaging Context | Typical Value / Example | Source/Justification |

|---|---|---|---|

| Health State | A distinct clinical/imaging status defining patient management. | 1. Pre-imaging (Suspected Disease) 2. Post-Imaging: Localized 3. Post-Imaging: Metastasized 4. Post-Treatment: Remission 5. Death | Model states must be mutually exclusive and collectively exhaustive. |

| Transition Probability | Probability of moving from one health state to another per model cycle. | P(Localized -> Metastasized) = 0.15 per cycle (based on imaging-identified progression). | Derived from imaging trial literature or meta-analyses of progression rates. |

| Cycle Length | The fixed time period over which transitions are evaluated. | 1 month or 3 months common in chronic disease (e.g., cancer monitoring). | Must align with imaging follow-up intervals and clinical decision points. |

| Trace Value (Reward) | Outcome (cost, utility, survival) accumulated per cycle in a state. | Utility: Localized = 0.80, Metastasized = 0.50. Cost: Advanced MRI scan = $1,200, CT scan = $500. | Utilities from EQ-5D studies; costs from Medicare fee schedules. |

| Half-Cycle Correction | Adjustment for outcomes assuming transitions occur mid-cycle. | Applied as standard in cohort models for accuracy. | Best practice in health economic modeling. |

Table 2: Example Transition Probability Matrix (3-Month Cycle)

| From \ To | Localized | Metastasized | Remission | Death |

|---|---|---|---|---|

| Localized | 0.80 | 0.15 | 0.04 | 0.01 |

| Metastasized | 0.00 | 0.70 | 0.10 | 0.20 |

| Remission | 0.05 | 0.05 | 0.85 | 0.05 |

| Death | 0.00 | 0.00 | 0.00 | 1.00 |
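To show how the matrix in Table 2 drives a cohort simulation, the sketch below propagates a cohort through 40 three-month cycles and accumulates QALYs with and without half-cycle correction. It is an illustration only: the Localized and Metastasized utilities come from the terminology table, while the Remission utility (0.85) and the assumption that the whole cohort starts in Localized are hypothetical choices made for this example.

```python
STATES = ["Localized", "Metastasized", "Remission", "Death"]

# Per-cycle transition probabilities from Table 2 (rows: from, columns: to).
P = [
    [0.80, 0.15, 0.04, 0.01],
    [0.00, 0.70, 0.10, 0.20],
    [0.05, 0.05, 0.85, 0.05],
    [0.00, 0.00, 0.00, 1.00],
]

# Utilities per state; the Remission value is an assumption for illustration.
UTILITIES = [0.80, 0.50, 0.85, 0.00]

def step(distribution, matrix):
    """Advance the cohort one cycle: new[j] = sum_i old[i] * matrix[i][j]."""
    n = len(distribution)
    return [sum(distribution[i] * matrix[i][j] for i in range(n)) for j in range(n)]

def run_cohort(start, matrix, n_cycles):
    """Return the Markov trace: state occupancy at cycle 0, 1, ..., n_cycles."""
    trace = [list(start)]
    for _ in range(n_cycles):
        trace.append(step(trace[-1], matrix))
    return trace

def qalys(trace, utilities, cycle_years, half_cycle=True):
    """Accumulate QALYs per patient over the trace.

    With half_cycle=True, occupancy in each cycle is the average of the
    start-of-cycle and end-of-cycle values (transitions assumed mid-cycle).
    """
    total = 0.0
    for before, after in zip(trace, trace[1:]):
        for s, utility in enumerate(utilities):
            occupancy = (before[s] + after[s]) / 2.0 if half_cycle else before[s]
            total += occupancy * utility * cycle_years
    return total

trace = run_cohort([1.0, 0.0, 0.0, 0.0], P, n_cycles=40)   # 40 x 3 months = 10 years
qalys_corrected = qalys(trace, UTILITIES, cycle_years=0.25)
qalys_uncorrected = qalys(trace, UTILITIES, cycle_years=0.25, half_cycle=False)
```

Costs can be accumulated the same way by swapping the utility vector for a per-cycle cost vector. The uncorrected total counts everyone at their start-of-cycle state, which overstates QALYs in a cohort whose health declines over time.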

Experimental Protocols for Parameter Estimation

Protocol 1: Deriving Transition Probabilities from Imaging Trial Data

Objective: To estimate the probability of disease progression (e.g., from localized to metastasized) based on serial imaging reads.

- Cohort Definition: Recruit a cohort of patients with initially localized disease (confirmed by baseline imaging).

- Imaging Schedule: Perform follow-up scans using the defined imaging modality (e.g., whole-body MRI) at regular intervals (e.g., every 3 months) for 2 years.

- Blinded Central Read: All scans are read independently by two radiologists blinded to clinical data, using standardized criteria (e.g., RECIST 1.1 for oncology).

- Adjudication: Discordant reads are resolved by a third senior radiologist.

- Data Analysis: For each interval (cycle), calculate the proportion of patients whose imaging status changed from "Localized" to "Metastasized."

- Formula: P(Transition) = Number of patients with new metastases at follow-up / Number of patients at risk (alive with localized disease at start of cycle).

- Statistical Modeling: Use survival analysis (e.g., Kaplan-Meier estimates or a parametric survival model) to account for censoring and to estimate hazard rates, which can then be converted to cycle-specific probabilities.

Protocol 2: Eliciting Health State Utilities for Imaging-Detected States

Objective: To measure quality-of-life (QoL) weights (utilities) for health states defined by imaging findings.

- Vignette Development: Create detailed, patient-centric descriptions of health states (e.g., "Localized disease with mild symptoms, undergoing active monitoring with quarterly MRI scans").

- Population Sample: Recruit a representative sample from the general public (n≥100) via validated panels.

- Utility Elicitation: Administer the vignettes using a standard gamble (SG) or time trade-off (TTO) protocol via interview or digital platform.

- Analysis: Calculate mean utility scores for each vignette/health state. Conduct sensitivity analyses on subgroup responses.

Visualization of a Markov Model for Imaging Pathways

Diagram 1: Simplified Markov Model for Cancer Imaging

Diagram 2: Protocol for Transition Probability Estimation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Imaging-Based Markov Model Research

| Item / Solution | Function in Research | Example Product/ Source |

|---|---|---|

| DICOM Viewing & Analysis Software | Standardized measurement of lesions on serial scans for progression determination. | Horos, 3D Slicer, OsiriX MD. |

| Clinical Data Capture (EDC) System | Manage patient cohort data, imaging schedules, and linked reader outcomes. | REDCap, Medidata Rave. |

| Statistical Analysis Software | Perform survival analysis, calculate probabilities, and run Markov models. | R (heemod, mstate packages), TreeAge Pro, SAS. |

| Utility Elicitation Platform | Administer standard gamble/time trade-off surveys for health state valuation. | EQ-5D-5L Web Version, dedicated survey tools (Qualtrics) with TTO modules. |

| Markov Modeling Software | Build, run, and validate the cost-effectiveness model. | Microsoft Excel with VBA, R (hesim, dampack), TreeAge Pro. |

| Standardized Reporting Guidelines | Ensure model transparency and quality. | CHEERS 2022 Checklist for Health Economic Evaluations. |

When to Use a Markov Model vs. Other Cost-Effectiveness Analysis Frameworks

Cost-effectiveness analysis (CEA) in imaging pathways research requires selecting an appropriate analytical framework to model disease progression, costs, and outcomes. The choice depends on the clinical condition, intervention type, time horizon, and data availability.

Table 1: Decision Matrix for CEA Framework Selection

| Feature/Criterion | Markov Model | Decision Tree | Discrete-Event Simulation (DES) | Partitioned Survival Model (PSM) |

|---|---|---|---|---|

| Time Handling | Cyclic, discrete time periods (cycles) | Static, one-time point | Continuous, event-driven | Time-to-event from Kaplan-Meier curves |

| Best for Disease Process | Chronic, progressive conditions with recurring events | Acute, short-term decisions with clear endpoints | Complex systems with queues, resource constraints | Oncology trials with progression-free & overall survival data |

| Typical Time Horizon | Long-term (lifetime) | Short-term (<1 year) | Flexible, any horizon | Trial duration or extrapolated |

| State Transitions | Probabilistic, between finite health states | Not applicable | Individual patient attributes & event times | Transitions between health states based on survival curves |

| Computational Complexity | Moderate | Low | High | Low-Moderate |

| Data Requirements | Transition probabilities, utilities, costs | Probabilities, costs, utilities for pathways | Detailed resource use, time distributions | Survival curves, state costs/utilities |

| Ideal Imaging Use Case | Screening for abdominal aortic aneurysm over a lifetime | Choosing between MRI or CT for acute stroke | Modeling patient flow in a busy imaging department | Comparing novel PET tracer vs. standard imaging in lymphoma |

Core Protocols for Implementing a Markov Model in Imaging Pathways

Protocol 2.1: Defining Model Structure and Health States

Objective: To establish the finite health states that represent the clinical pathway of the disease being managed with imaging.

- Conduct a systematic literature review to define the natural history of the disease.

- Convene a clinical expert panel (minimum 3 specialists) to validate and refine health states via a modified Delphi process.

- Define states that are mutually exclusive and collectively exhaustive (e.g., Well, Disease Detected by Imaging, Post-Treatment, Disease Recurrence, Death).

- Create a state transition diagram. Allowed transitions must be clinically plausible (e.g., no direct transition from Well to Death unless the model includes an all-cause mortality risk).

Protocol 2.2: Populating Transition Probabilities from Imaging Data

Objective: To derive cycle-specific probabilities for moving between health states, incorporating the sensitivity, specificity, and follow-up intervals of the imaging pathway.

- Data Extraction: For each relevant clinical study, extract sensitivity (Sn), specificity (Sp), and disease incidence rates. Use meta-analysis if multiple studies exist.

- Adjust for Cycle Length: Convert annual probabilities (p) to cycle probabilities (P) using the formula: P = 1 - exp(-rt), where r = -ln(1-p) is the annual rate and t is the cycle length in years.

- Integrate Test Performance: Calculate the probability of moving from Well to Disease Detected as: Incidence * Sn + (1-Incidence) * (1-Sp). This accounts for true positives and false positives leading to the "detected" state.

- Parameterization: Populate a transition probability matrix for each strategy (e.g., MRI-based pathway vs. CT-based pathway).
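The cycle-length adjustment and the test-performance step above can be written as two small functions. This is a sketch with illustrative function names; the input values in the usage lines are hypothetical and chosen only to show the arithmetic.

```python
import math

def annual_to_cycle_probability(p_annual, cycle_years):
    """Convert an annual probability to a per-cycle probability via a constant rate.

    r = -ln(1 - p) is the annual rate; P = 1 - exp(-r * t) for cycle length t.
    """
    if not 0.0 <= p_annual < 1.0:
        raise ValueError("annual probability must be in [0, 1)")
    rate = -math.log(1.0 - p_annual)
    return 1.0 - math.exp(-rate * cycle_years)

def detection_probability(incidence, sensitivity, specificity):
    """Probability of entering 'Disease Detected': true positives plus false positives."""
    return incidence * sensitivity + (1.0 - incidence) * (1.0 - specificity)

# Hypothetical inputs: 10% annual progression, 3-month cycles.
p_quarterly = annual_to_cycle_probability(0.10, 0.25)

# Hypothetical inputs: 2% incidence, Sn = 0.92, Sp = 0.95.
p_detected = detection_probability(0.02, 0.92, 0.95)
```

For the 10% example, the quarterly probability is slightly above 0.025, so simply dividing the annual probability by four would understate the per-cycle risk.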

Table 2: Example Annual Transition Probability Inputs for an AAA Screening Model

| From State | To State | Probability (Imaging Pathway A: Ultrasound) | Probability (Imaging Pathway B: CT Angio) | Source (Study, Year) |

|---|---|---|---|---|

| Well | AAA Detected (Small) | 0.0021 | 0.0023 | Systematic Review, 2023 |

| Well | Death (Other Causes) | 0.015 | 0.015 | Life Tables, 2024 |

| AAA Detected (Small) | AAA Progressed | 0.10 | 0.10 | RESCAN, 2022 |

| AAA Detected (Small) | Death (Other Causes) | 0.025 | 0.025 | Life Tables (Age-Adjusted), 2024 |

| Post-Repair | Death (Other Causes) | 0.022 | 0.022 | Life Tables (Age-Adjusted), 2024 |

| Post-Repair | Re-intervention | 0.02 | 0.02 | EVAR-1, 2021 |

Protocol 2.3: Costing and Utility Assessment for Imaging States

Objective: To assign accurate resource costs and health state utility values (the weights used to calculate quality-adjusted life-years, QALYs) to each Markov state.

- Micro-Costing for Imaging Pathways: Itemize all resources for a given imaging state (e.g., "Disease Detected by MRI").

- Technician/radiologist time (minutes)

- Equipment use (amortized cost per scan)

- Contrast media or radiopharmaceuticals

- Facility overhead

- Utility Elicitation: Use time-trade-off (TTO) or standard gamble (SG) surveys with patients or clinicians to assign utility weights (0-1, where 1=perfect health) to chronic health states. For temporary states (e.g., "Recovering from Biopsy"), assign short-term disutilities.

- Discounting: Apply an annual discount rate to future costs and QALYs as specified by the applicable guideline (e.g., 3% is common in U.S. analyses; the NICE reference case uses 3.5%).
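As a brief illustration of the discounting step, the sketch below converts a stream of per-cycle costs or QALYs into a present value. It assumes each cycle's value is discounted from the start of that cycle, which is one common convention among several; the function names are illustrative.

```python
def discount_factor(annual_rate, years):
    """Present-value weight for an outcome occurring `years` from now."""
    return 1.0 / (1.0 + annual_rate) ** years

def present_value(per_cycle_values, annual_rate, cycle_years):
    """Sum per-cycle costs or QALYs after discounting.

    Cycle t (0-based) is discounted at time t * cycle_years, so the first
    cycle is undiscounted. This timing convention is an assumption.
    """
    return sum(
        value * discount_factor(annual_rate, t * cycle_years)
        for t, value in enumerate(per_cycle_values)
    )

# Hypothetical example: $1,000 per quarterly cycle for 2 years at 3% per year.
pv_costs = present_value([1000.0] * 8, annual_rate=0.03, cycle_years=0.25)
```

The same function applies to QALYs; costs and health outcomes are usually discounted at the same rate unless the guideline in use says otherwise.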

Comparative Experimental Protocol: Markov vs. Decision Tree

Title: Head-to-Head Analysis of Short-Term Diagnostic Pathways for Pulmonary Embolism.

Objective: To compare the results of a Markov model and a decision tree when evaluating the cost-effectiveness of CT Pulmonary Angiography (CTPA) vs. V/Q SPECT over a 3-month horizon.

Protocol:

- Decision Tree Arm:

- Structure: Create a tree with chance nodes for test results (Positive/Negative) and terminal nodes for outcomes (PE Treated, PE Missed, No PE, False Alarm).

- Populate: Use probabilities from the PIOPED III trial. Assign costs and utilities only to terminal nodes.

- Analyze: Roll back the tree to calculate expected cost and effectiveness for each strategy.

- Markov Model Arm:

- Structure: Define states: Suspected PE, Post-CTPA, Post-V/Q, On Anticoagulation, Major Bleed, Post-Bleed, Dead.

- Populate: Use same clinical probabilities. Define 1-week cycles. Transitions allow for events like bleeding within the 3-month period.

- Simulate: Run a cohort simulation of 100,000 patients for 13 cycles.

- Comparison Metrics: Record total cost, total QALYs, Incremental Cost-Effectiveness Ratio (ICER), and computational time for each framework.
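The roll-back step in the decision tree arm can be sketched as a short recursive function. The tree layout below follows the four terminal outcomes named in the protocol, with costs and QALYs attached only to terminal nodes. All probabilities and payoffs are hypothetical placeholders, not values from any trial.

```python
def rollback(node):
    """Return (expected cost, expected QALYs) for a terminal or chance node."""
    if "branches" not in node:                      # terminal node
        return node["cost"], node["qaly"]
    probabilities = [p for p, _ in node["branches"]]
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("branch probabilities must sum to 1")
    cost = qaly = 0.0
    for p, child in node["branches"]:               # probability-weighted average
        child_cost, child_qaly = rollback(child)
        cost += p * child_cost
        qaly += p * child_qaly
    return cost, qaly

def build_tree(prevalence, sensitivity, specificity, payoffs):
    """Chance node on disease status, then a chance node on the test result."""
    pe = {"branches": [(sensitivity, payoffs["PE Treated"]),
                       (1.0 - sensitivity, payoffs["PE Missed"])]}
    no_pe = {"branches": [(specificity, payoffs["No PE"]),
                          (1.0 - specificity, payoffs["False Alarm"])]}
    return {"branches": [(prevalence, pe), (1.0 - prevalence, no_pe)]}

# Hypothetical 3-month payoffs per terminal outcome (cost in USD, QALYs).
PAYOFFS = {
    "PE Treated": {"cost": 5000.0, "qaly": 0.20},
    "PE Missed": {"cost": 12000.0, "qaly": 0.15},
    "No PE": {"cost": 800.0, "qaly": 0.24},
    "False Alarm": {"cost": 4000.0, "qaly": 0.23},
}

tree = build_tree(prevalence=0.20, sensitivity=0.90, specificity=0.95, payoffs=PAYOFFS)
expected_cost, expected_qaly = rollback(tree)
```

Building one tree per strategy (with that strategy's sensitivity, specificity, and test cost folded into the payoffs) and rolling each back gives the inputs for the ICER.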

The Scientist's Toolkit: Key Reagents for CEA Modeling

Table 3: Essential Software and Data Sources for Imaging Pathway CEA

| Tool/Reagent | Provider/Example | Primary Function in CEA |

|---|---|---|

| Modeling Software | TreeAge Pro, R (hesim, dampack), Excel with VBA | Provides the computational environment to build, populate, and run Markov and other models. |

| Probabilistic Sensitivity Analysis (PSA) Tool | Built into TreeAge, R (BCEA package) | Automates Monte Carlo simulation to assess parameter uncertainty and generate cost-effectiveness acceptability curves. |

| Utility Weights Database | EQ-5D, HUI, SF-6D from clinical trials | Provides pre-measured health state utility values for QALY calculation. |

| Costing Compendium | CMS Physician Fee Schedule, NHS Reference Costs | Provides standardized unit costs for imaging procedures, physician time, and hospital stays. |

| Clinical Input Data | PubMed, Cochrane Library, NICE Evidence Search | Sources for meta-analyses on disease incidence, test accuracy, and treatment efficacy. |

| Visualization Library | R (ggplot2, DiagrammeR), Python (matplotlib) | Creates publication-quality diagrams of model structures and results. |

This document provides application notes and protocols for constructing a Markov model to analyze the cost-effectiveness of diagnostic imaging pathways. The content supports a broader thesis on economic evaluations in medical imaging research. The model integrates three core components: imaging test accuracy parameters, natural history of disease progression, and long-term health and economic outcomes.

Core Quantitative Data

Table 1: Generic Parameters for an Imaging Pathway Markov Model

| Component | Parameter | Symbol | Typical Range / Value | Source / Measurement Method |

|---|---|---|---|---|

| Test Accuracy | Sensitivity | Se | 0.70 - 0.95 | Meta-analysis of validation studies |

| | Specificity | Sp | 0.80 - 0.99 | Meta-analysis of validation studies |

| | Positive Predictive Value | PPV | Calculated (Se, Sp, prevalence) | PPV = (Se * Prev) / [Se * Prev + (1-Sp) * (1-Prev)] |

| | Negative Predictive Value | NPV | Calculated (Se, Sp, prevalence) | NPV = [Sp * (1-Prev)] / [(1-Se) * Prev + Sp * (1-Prev)] |

| Disease Progression | Annual Transition: Healthy → Early Disease | P_HE | 0.01 - 0.10 | Cohort studies, registries |

| | Annual Transition: Early → Advanced Disease | P_EA | 0.05 - 0.30 | Longitudinal imaging/natural history studies |

| | Annual Mortality (Advanced Disease) | Mort_A | 0.10 - 0.50 | Survival analysis (Kaplan-Meier) |

| | Annual Mortality (Other Causes) | Mort_OC | Age-dependent | Life tables |

| Outcomes & Costs | Utility: Healthy State | U_H | 1.0 (reference) | EQ-5D survey in reference population |

| | Utility: Early Disease (treated) | U_E | 0.75 - 0.90 | Patient-reported outcomes (PRO) studies |

| | Utility: Advanced Disease | U_A | 0.50 - 0.70 | Patient-reported outcomes (PRO) studies |

| | Cost: Diagnostic Test | C_Test | Variable ($200 - $2,000) | Hospital billing data, Medicare rates |

| | Cost: Early Disease Treatment (annual) | C_TxE | Variable | Healthcare claims database analysis |

| | Cost: Advanced Disease Care (annual) | C_CareA | Variable | Healthcare claims database analysis |
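The predictive values in the table are derived rather than measured, so it can help to see them computed. The short sketch below applies the two formulas from the table; the function name and the example inputs are illustrative.

```python
def predictive_values(se, sp, prev):
    """Return (PPV, NPV) from sensitivity, specificity, and prevalence."""
    true_pos = se * prev
    false_pos = (1.0 - sp) * (1.0 - prev)
    true_neg = sp * (1.0 - prev)
    false_neg = (1.0 - se) * prev
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv

# Hypothetical inputs: Se = 0.90, Sp = 0.90 at 10% and 50% prevalence.
ppv_low, npv_low = predictive_values(0.90, 0.90, 0.10)
ppv_high, npv_high = predictive_values(0.90, 0.90, 0.50)
```

With the same test accuracy, PPV rises and NPV falls as prevalence increases, which is why the pre-test probability of the modeled population has to be stated explicitly.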

Experimental Protocols for Parameter Estimation

Protocol 3.1: Meta-Analysis for Imaging Test Accuracy

Objective: To pool sensitivity and specificity estimates for a target imaging modality (e.g., MRI for prostate cancer detection) from multiple diagnostic accuracy studies.

- Literature Search: Execute a systematic search in PubMed, EMBASE, and Cochrane Library using PRISMA-DTA guidelines. Search terms: [imaging modality] AND [disease] AND (sensitivity OR specificity).

- Study Selection: Two independent reviewers screen titles/abstracts, then full texts. Inclusion: original studies reporting TP, FP, FN, TN against a reference standard.

- Data Extraction: Use a standardized form to extract: sample size, patient characteristics, technical parameters of imaging, and contingency table data.

- Statistical Synthesis: Fit a bivariate random-effects model (e.g., using the midas command in Stata or the mada package in R) to jointly pool sensitivity and specificity, accounting for threshold effects.

- Reporting: Present summary estimates with 95% confidence and prediction regions.

Protocol 3.2: Estimating Disease Progression Rates from Registry Data

Objective: To estimate annual transition probabilities between health states (e.g., localized to metastatic cancer) using longitudinal observational data.

- Data Source: Obtain data from a disease-specific registry (e.g., SEER for cancer) with follow-up on disease stage at diagnosis and subsequent events.

- Cohort Definition: Identify patients diagnosed in the initial health state of interest (e.g., localized disease) with no prior history of advanced disease.

- Time-to-Event Analysis: Define the event as progression to the next health state. Censor patients at death, loss to follow-up, or end of study.

- Modeling: Fit a parametric survival model (e.g., exponential, Weibull) to the time-to-progression data. The rate parameter (λ) of an exponential model is a constant annual hazard, which converts to an annual transition probability as p = 1 - exp(-λ) for a Markov cycle length of one year. For small λ, p is approximately equal to λ.

- Validation: Compare model-derived probabilities with empirical Kaplan-Meier estimates at key time points (e.g., 1, 3, 5 years).
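For the exponential case, the time-to-event step has a closed-form estimate, which the sketch below illustrates: the maximum-likelihood rate under right-censoring is the number of observed progressions divided by total follow-up time. The follow-up data are invented for illustration, and a real analysis would normally use a survival package and compare alternative distributions.

```python
import math

def exponential_rate(follow_up_years, progressed):
    """MLE of a constant hazard with right-censoring: events / total person-time.

    follow_up_years[i] is the time to progression or censoring for patient i;
    progressed[i] is 1 if progression was observed and 0 if censored.
    """
    total_time = sum(follow_up_years)
    if total_time <= 0:
        raise ValueError("total follow-up time must be positive")
    return sum(progressed) / total_time

def rate_to_probability(rate, cycle_years=1.0):
    """Convert a constant hazard to a per-cycle transition probability."""
    return 1.0 - math.exp(-rate * cycle_years)

# Hypothetical registry extract: four patients, two observed progressions.
times = [1.0, 2.0, 3.0, 4.0]
events = [1, 0, 1, 0]

hazard = exponential_rate(times, events)          # progressions per person-year
p_annual = rate_to_probability(hazard)            # one-year Markov cycle
p_quarterly = rate_to_probability(hazard, 0.25)   # three-month Markov cycle
```

Censored patients contribute follow-up time to the denominator but no event to the numerator, which is how censoring is accounted for in this estimate.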

Protocol 3.3: Assigning Health State Utilities

Objective: To assign quality-of-life weights (utilities) for model health states using primary or secondary data.

- Health State Description: Develop clear, concise vignettes describing each Markov state (e.g., "Early Disease on Treatment" including symptoms and side effects).

- Valuation Technique:

- Primary Elicitation: Recruit a representative sample from the general public (n≥100). Use a standardized method like Time Trade-Off (TTO) or Standard Gamble (SG) to value each vignette.

- Secondary Sourcing: Identify published studies that report EQ-5D-5L scores for patient populations matching the health state descriptions. Calculate the mean utility score.

- Analysis: For primary studies, calculate mean and standard deviation of utilities for each vignette. For secondary analysis, pool means using meta-analysis if multiple sources exist.

Model Structure & Workflow Visualization

Diagram 1: Basic 3-State Markov Model Structure

Diagram 2: Imaging Pathway Decision Tree Integrated with Markov Model

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Developing an Imaging Pathway Markov Model

| Item | Function in Modeling | Example/Note |

|---|---|---|

| Decision Analysis Software | Provides the computational environment to build, run, and analyze the Markov model. | TreeAge Pro, R (heemod, dampack), Microsoft Excel with VBA. |

| Statistical Software | Used for meta-analysis of test accuracy, survival analysis for progression rates, and utility estimation. | Stata, SAS, R (metafor, survival, flexsurv packages). |

| Systematic Review Database Access | Source for identification of primary studies for parameter estimation. | PubMed/Medline, EMBASE, Cochrane Library, Web of Science. |

| Clinical & Cost Datasets | Provide real-world data for estimating transition probabilities, costs, and outcomes. | Disease registries (e.g., SEER), hospital billing databases, national claims data (e.g., Medicare), clinical trial data. |

| Utility Valuation Instruments | Standardized tools for measuring health-related quality of life for utility estimation. | EQ-5D-5L survey, Time Trade-Off (TTO) interview guide, Standard Gamble (SG) interview guide. |

| Model Validation Framework | A structured checklist to assess model credibility and face validity. | ISPOR-SMDM Modeling Good Research Practices guidelines, CHEERS 2022 checklist for reporting. |

Building Your Model: A Step-by-Step Guide to Markov Modeling for Imaging Strategies

Clinical Scenario Definition

A precise clinical scenario is the cornerstone of a meaningful cost-effectiveness analysis. It defines the patient population, diagnostic challenge, and clinical decisions that the imaging pathways aim to inform.

Core Elements:

- Patient Population: Demographics (age, sex), pre-test probability of disease, comorbidities, and presenting symptoms.

- Diagnostic Challenge: The specific clinical question (e.g., staging, detection, characterization, treatment response).

- Clinical Decision Point: The actionable decision informed by the imaging result (e.g., biopsy, surgery, medical therapy, no further action).

- Perspective: The viewpoint of the analysis (e.g., healthcare payer, societal, hospital).

Example Scenario for a Markov Model:

- Population: Patients ≥50 years with newly diagnosed, biopsy-proven non-small cell lung cancer (NSCLC).

- Challenge: Accurate initial staging to distinguish resectable (Stage I-IIIA) from unresectable (Stage IIIB-IV) disease.

- Decision Point: To proceed with curative-intent surgical resection or to initiate systemic therapy/chemoradiation.

- Perspective: U.S. Medicare Payer.

Competing Imaging Pathways

Pathways are sequences of imaging tests (and potentially other procedures) used to resolve the diagnostic challenge. They must be realistic, reflect current clinical guidelines, and represent viable alternatives.

Pathway Specification:

- Pathway A (Standard): [18F]FDG-PET/CT + Contrast-Enhanced CT (CECT) of chest/abdomen.

- Pathway B (Advanced): Whole-body [18F]FDG-PET/MRI.

- Pathway C (Sequential): CECT chest/abdomen, followed by selective PET/CT for equivocal cases.

Pathway Outcomes: Each pathway leads to a classification of disease stage (Resectable vs. Unresectable), which determines subsequent treatment and costs.

Data Synthesis for Pathway Performance

Diagnostic performance parameters (sensitivity, specificity) for each pathway are derived from meta-analyses and comparative studies. Key data for the NSCLC staging example, based on current literature, are summarized below.

Table 1: Diagnostic Performance of Imaging Pathways for NSCLC Staging (M-Stage)

| Imaging Pathway | Sensitivity (95% CI) | Specificity (95% CI) | Source / Key Study Design |

|---|---|---|---|

| A: PET/CT + CECT | 0.87 (0.82–0.91) | 0.92 (0.89–0.95) | Meta-analysis, He et al., 2022 |

| B: PET/MRI | 0.91 (0.85–0.95) | 0.95 (0.92–0.97) | Prospective comparative trial, Kim et al., 2023 |

| C: Sequential CECT→PET/CT | 0.83 (0.78–0.87)* | 0.96 (0.94–0.98)* | Modeling based on cascade testing |

Note: CI = Confidence Interval. *Performance for CECT→PET/CT is population-dependent, based on the proportion of equivocal CECT results triggering a PET/CT.

Table 2: Estimated Procedural Costs & Durations (U.S. Medicare)

| Procedure | Technical Component | Professional Component | Total Allowable | Median Time |

|---|---|---|---|---|

| CECT (Chest/Abdomen) | $185 | $45 | $230 | 20 min |

| [18F]FDG-PET/CT | $1,150 | $210 | $1,360 | 45 min |

| [18F]FDG-PET/MRI | $2,100 | $310 | $2,410 | 75 min |
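To connect Table 2 to the three pathways, the sketch below computes the expected imaging cost per patient for each one. It assumes that procedure costs are simply additive and that, in the sequential pathway, PET/CT is performed only for the fraction of patients with an equivocal CECT. That fraction is not given in the text, so the 30% used here is purely hypothetical.

```python
# Total allowable amounts from Table 2 (USD).
COST = {"CECT": 230.0, "PET/CT": 1360.0, "PET/MRI": 2410.0}

def pathway_imaging_costs(equivocal_fraction):
    """Expected imaging cost per patient for pathways A, B, and C.

    equivocal_fraction: assumed share of CECT results that trigger PET/CT
    in the sequential pathway (hypothetical input, not from the source).
    """
    if not 0.0 <= equivocal_fraction <= 1.0:
        raise ValueError("equivocal_fraction must be between 0 and 1")
    return {
        "A": COST["PET/CT"] + COST["CECT"],                       # both tests for everyone
        "B": COST["PET/MRI"],                                     # single hybrid exam
        "C": COST["CECT"] + equivocal_fraction * COST["PET/CT"],  # selective PET/CT
    }

costs = pathway_imaging_costs(equivocal_fraction=0.30)
```

These are up-front imaging costs only. In the full model they enter as a one-time cost at the start of each strategy arm, while downstream treatment costs follow from the staging classification.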

Experimental Protocols for Key Cited Studies

Protocol 1: Prospective Comparative Trial of PET/CT vs. PET/MRI (e.g., Kim et al., 2023)

- Patient Recruitment: Enroll patients with newly diagnosed, biopsy-proven NSCLC planned for staging.

- Imaging Acquisition:

- Patients undergo both [18F]FDG PET/CT and PET/MRI within a 14-day window.

- PET/CT Protocol: Fasting ≥6 hrs, blood glucose <150 mg/dL, inject 3.7 MBq/kg [18F]FDG, uptake period 60±10 min. Acquisition from skull base to mid-thigh. CT performed with intravenous contrast.

- PET/MRI Protocol: Same radiopharmaceutical dose and uptake period. MRI sequences include T2 HASTE, DWI (b-values 50, 800), and volumetric T1 GRE pre/post-contrast.

- Image Analysis: Two blinded expert readers stage each exam independently using TNM 8th edition. Discordance resolved by consensus.

- Reference Standard: Pathologic confirmation from biopsy/surgery or clinical/imaging follow-up of ≥12 months.

- Statistical Analysis: Calculate per-patient sensitivity, specificity, and accuracy for M-stage. Compare using McNemar’s test.
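Because both modalities are read in the same patients, the comparison in the final step is a paired one, and McNemar's test uses only the discordant pairs. The sketch below implements the exact (binomial) two-sided version using the standard library; the counts in the usage lines are hypothetical.

```python
from math import comb

def mcnemar_exact(b, c):
    """Exact two-sided McNemar p-value from discordant pair counts.

    b: patients classified correctly by modality 1 only
    c: patients classified correctly by modality 2 only
    Under the null hypothesis, b follows Binomial(b + c, 0.5).
    """
    n = b + c
    if n == 0:
        return 1.0                      # no discordant pairs, no evidence of difference
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2.0 * tail)

# Hypothetical counts among patients with confirmed metastases:
# 9 detected by PET/MRI only, 1 detected by PET/CT only.
p_value = mcnemar_exact(b=1, c=9)
```

Concordant pairs (both modalities right, or both wrong) do not enter the calculation, so a study with few discordant reads has little power to show a difference even when it enrolls many patients.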

Protocol 2: Meta-Analysis of PET/CT Performance (e.g., He et al., 2022)

- Search Strategy: Systematic search of PubMed, EMBASE, Cochrane Library (Jan 2015–Dec 2021). Keywords: "non-small cell lung cancer," "PET/CT," "staging," "sensitivity," "specificity."

- Study Selection: Include prospective/retrospective studies with ≥30 patients, using histopathology or follow-up as reference standard. Exclude reviews, case reports.

- Data Extraction: Two reviewers independently extract 2x2 contingency table data, study characteristics, quality scores (QUADAS-2).

- Statistical Synthesis: Fit a bivariate random-effects model to pool sensitivity and specificity, accounting for threshold effect and between-study heterogeneity. Generate hierarchical summary ROC curves.

Visualizations

Diagram 1: Competing Imaging Pathways for NSCLC Staging

Diagram 2: Markov Model State Transition Structure

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Imaging Pathway Research |

|---|---|

| [18F]Fluorodeoxyglucose ([18F]FDG) | Radiopharmaceutical for PET imaging. Serves as a glucose analog to highlight metabolically active tumor cells. |

| Iodinated / Gadolinium-Based Contrast Media | Enhances vascular and tissue contrast for CT and MRI, respectively, improving anatomical delineation and lesion detection. |

| QUADAS-2 (Quality Assessment Tool) | Validated checklist for systematic reviews to assess risk of bias and applicability of diagnostic accuracy studies. |

| Statistical Software (R with mada package) | Open-source environment for performing bivariate meta-analysis of diagnostic test accuracy. |

| Markov Modeling Software (TreeAge Pro, R heemod) | Specialized software for building, running, and analyzing state-transition (Markov) cost-effectiveness models. |

| DICOM Viewer & Analysis Suite (e.g., 3D Slicer) | Open-source platform for viewing, annotating, and quantitatively analyzing medical imaging data from clinical trials. |

1. Application Notes

Structuring the state-transition diagram is the foundational step in constructing a Markov model for cost-effectiveness analysis (CEA). In the context of imaging pathways research for diseases like cancer or neurodegenerative conditions, this model simulates the progression of a patient cohort through distinct, mutually exclusive health states over discrete time cycles (e.g., 1-month or 1-year cycles). The choice of states and allowed transitions must accurately reflect the natural history of the disease and the impact of diagnostic and therapeutic interventions. A key consideration in imaging research is how different imaging strategies (e.g., MRI vs. PET-CT) influence state classification (e.g., correct staging, early detection of recurrence) and subsequent management decisions, thereby altering transition probabilities and costs.

2. Core Protocol for Diagram Construction

Protocol 2.1: Defining Health States

- Objective: To define a set of mutually exclusive and collectively exhaustive health states relevant to the disease and imaging pathway.

- Procedure: a. Conduct a systematic literature review of the disease's natural history and standard care pathways. b. In consultation with clinical experts, list all possible health states a patient can occupy (e.g., Well, Localized Disease, Metastatic Disease, Post-Treatment Remission, Progressive Disease, Death). c. For imaging CEA, explicitly include states where imaging findings directly alter management (e.g., Diagnosed with Local Recurrence). d. Ensure states are defined such that a patient can be in only one state per model cycle. e. The "Death" state is always included and is typically absorbing (no exits).

Protocol 2.2: Defining Allowable Transitions

- Objective: To map all possible movements between health states from one cycle to the next.

- Procedure: a. For each health state, determine all states to which a patient can transition in the next cycle. b. Transitions are governed by probabilities, derived from clinical trials, registries, or meta-analyses. c. In imaging models, define separate transition probability sets for each diagnostic strategy (e.g., probabilities of detecting recurrence earlier with Strategy A vs. B). d. Transitions from most states to "Death" (all-cause or disease-specific) must be considered. e. Document clinical rationale for each allowed transition; disallowed transitions are not drawn.

Protocol 2.3: Populating Transition Probabilities

- Objective: To assign quantitative probabilities to each defined transition.

- Procedure: a. Identify primary data sources (e.g., Kaplan-Meier curves from relevant clinical studies). b. Use statistical techniques (e.g., curve digitization, parametric survival analysis) to extract or calculate constant or time-dependent (e.g., Weibull) transition probabilities per model cycle. c. For imaging-specific transitions (e.g., probability of moving from Remission to Recurrence Detected), use data on test sensitivity, specificity, and disease incidence. d. All probabilities from a given state must sum to 1.0 per cycle. e. Organize probabilities in a matrix format for clarity and programming.

3. Data Presentation: Transition Probability Matrix Template

Table 1: Template Transition Probability Matrix for a Simplified Oncology Model with Two Imaging Strategies.

| From State → To State | Localized Disease | Metastatic Disease | Death |

|---|---|---|---|

| Localized Disease | 1 - (p_prog + p_death_ld) | p_prog (Imaging Strategy-Dependent) | p_death_ld |

| Metastatic Disease | 0 | 1 - p_death_md | p_death_md |

| Death | 0 | 0 | 1.0 |

Note: p_prog (probability of progression) may differ based on the imaging pathway's detection sensitivity. p_death_ld and p_death_md are state-specific mortality probabilities.
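
The template matrix can be sketched in code as follows. This is a minimal illustration in plain Python, not tied to any modeling package; the function names and the probability values passed for `p_prog`, `p_death_ld`, and `p_death_md` are hypothetical placeholders, not sourced estimates.

```python
STATES = ["Localized", "Metastatic", "Death"]

def build_matrix(p_prog, p_death_ld, p_death_md):
    """Return the 3x3 template matrix (rows = from-state, columns = to-state)."""
    matrix = [
        [1.0 - (p_prog + p_death_ld), p_prog, p_death_ld],
        [0.0, 1.0 - p_death_md, p_death_md],
        [0.0, 0.0, 1.0],  # Death is absorbing
    ]
    for name, row in zip(STATES, matrix):
        if any(p < 0.0 or p > 1.0 for p in row):
            raise ValueError(f"probability outside [0, 1] in row {name}")
        if abs(sum(row) - 1.0) > 1e-9:
            raise ValueError(f"row {name} does not sum to 1")
    return matrix

def run_trace(matrix, start, n_cycles):
    """Cohort trace: one state distribution per cycle, starting with cycle 0."""
    trace = [list(start)]
    for _ in range(n_cycles):
        prev = trace[-1]
        nxt = [sum(prev[i] * matrix[i][j] for i in range(len(prev)))
               for j in range(len(prev))]
        trace.append(nxt)
    return trace

# Hypothetical per-cycle probabilities for two imaging strategies (A and B).
matrix_a = build_matrix(p_prog=0.10, p_death_ld=0.02, p_death_md=0.25)
matrix_b = build_matrix(p_prog=0.08, p_death_ld=0.02, p_death_md=0.25)
trace_a = run_trace(matrix_a, start=[1.0, 0.0, 0.0], n_cycles=10)
trace_b = run_trace(matrix_b, start=[1.0, 0.0, 0.0], n_cycles=10)
```

Keeping one matrix per strategy makes the strategy-dependent parameter (`p_prog` here) the only difference between the two traces, which simplifies later incremental comparisons.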

4. Visualization: Health State Transition Diagram

Diagram Title: Health State Transition Model for Imaging CEA

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Building a State-Transition Model.

| Item | Function in Model Development |

|---|---|

| Systematic Review Protocol | Framework for identifying disease natural history data, clinical guidelines, and key evidence on imaging test performance and treatment efficacy. |

| Clinical Expert Panel | Provides validation of health state definitions, transition structures, and clinical plausibility of assumptions. |

| Survival Analysis Software (e.g., R, Stata) | Used to fit parametric survival models (Weibull, Exponential, Gompertz) to published Kaplan-Meier curves to extract transition probabilities. |

| Curve Digitization Tool (e.g., WebPlotDigitizer) | Converts published survival curves from image format to numerical data for probability analysis. |

| Probabilistic Sensitivity Analysis (PSA) Framework | Library of statistical distributions (Beta for probabilities, Gamma for costs) to define parameter uncertainty for Monte Carlo simulation. |

| Markov Modeling Software/Platform (e.g., R, TreeAge, Excel) | Environment to program the state-transition structure, run cohort simulations, and calculate costs and outcomes. |

| Model Validation Checklist | Structured list (face, internal, cross, external validity) to ensure the model's structure and behavior align with clinical reality and previous research. |

This protocol details the critical third step in constructing a Markov model for cost-effectiveness analysis (CEA) of diagnostic imaging pathways. Within the broader thesis framework, this step translates the conceptual model structure into a quantitative, operational model by populating it with rigorously sourced data on costs, health state utilities, and clinical probabilities. The accuracy and credibility of the model's output—typically incremental cost-effectiveness ratios (ICERs)—are wholly dependent on the quality and appropriateness of these inputs.

Data Sourcing Protocols

Protocol for Systematic Literature Review (SLR) to Source Probabilities

Objective: To identify, extract, and synthesize transition probabilities (e.g., test accuracy, disease progression rates) from published literature.

Materials:

- Electronic bibliographic databases (PubMed, Embase, Cochrane Library).

- Reference management software (e.g., EndNote, Zotero).

- Pre-defined data extraction forms (digital or physical).

Methodology:

- Search Strategy Development: Define PICOTS (Population, Intervention, Comparator, Outcomes, Timing, Setting) criteria specific to the imaging pathway. Develop Boolean search strings using MeSH terms and keywords.

- Dual Screening: Two independent reviewers screen titles/abstracts against inclusion/exclusion criteria. Conflicts are resolved by a third reviewer.

- Full-Text Review: Retrieve and assess full-text articles of selected abstracts.

- Data Extraction: Extract point estimates (probabilities, rates) and measures of uncertainty (confidence intervals, standard errors). Record study design, sample size, and population characteristics.

- Data Transformation: Convert reported statistics (e.g., hazard rates, odds ratios) into transition probabilities that match the Markov cycle length using accepted formulas (e.g., \( p = 1 - e^{-rt} \), where \( r \) is a constant event rate and \( t \) is the cycle length expressed in the same time unit as the rate).

- Parameter Synthesis: If multiple sources exist, perform meta-analysis to derive a pooled estimate. If not, select the most applicable source based on population similarity and study quality.
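
The data transformation step can be illustrated with a short sketch. It assumes a constant event rate over the interval, which is the assumption behind the rate-to-probability formula; the helper names and the example value (a 5-year cumulative probability of 0.40) are hypothetical.

```python
import math

def rate_to_prob(rate, t=1.0):
    """Probability of at least one event within time t under a constant rate."""
    return 1.0 - math.exp(-rate * t)

def prob_to_rate(prob, t=1.0):
    """Constant rate implied by a probability observed over time t."""
    return -math.log(1.0 - prob) / t

def rescale_prob(prob, t_from, t_to):
    """Re-express a probability over t_from as a probability over t_to."""
    return rate_to_prob(prob_to_rate(prob, t_from), t_to)

# Hypothetical example: a 5-year cumulative probability of 0.40.
annual = rescale_prob(0.40, t_from=5.0, t_to=1.0)
monthly = rescale_prob(annual, t_from=1.0, t_to=1.0 / 12.0)
```

Dividing 0.40 by 5 would give 0.08 per year, which understates the annual probability obtained through the rate conversion; this is why probabilities are converted to rates before rescaling.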

Protocol for Deriving Cost Estimates

Objective: To attach accurate, geographically relevant direct medical costs to each model state and transition.

Materials:

- National fee schedules (e.g., Medicare Physician Fee Schedule, Diagnosis-Related Group (DRG) databases).

- Hospital accounting data or published cost studies.

- Drug and device price lists (e.g., Red Book, hospital procurement costs).

Methodology:

- Cost Identification: List all resource utilization associated with each health state (e.g., routine monitoring) and transition (e.g., performing an MRI, treating a complication).

- Cost Categorization: Separate costs into direct medical (e.g., procedure, hospitalization, medication) and, if within scope, direct non-medical (e.g., transportation).

- Unit Cost Assignment: Assign a unit cost to each resource item. Prioritize nationally representative published lists for generalizability. For hospital-based perspectives, micro-costing exercises may be required.

- Cost Year Adjustment: Inflate or deflate all costs to a common reference year using appropriate health sector indices (e.g., Consumer Price Index for medical care).

- Currency Standardization: If using international sources, convert to the target currency using purchasing power parities (PPPs) for health, not just exchange rates.
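
The cost year adjustment and currency standardization steps reduce to two ratios. The sketch below is illustrative only: the function names, the index values, and the PPP factor are hypothetical and would be replaced by published index and PPP series.

```python
def adjust_to_reference_year(cost, index_cost_year, index_reference_year):
    """Scale a cost by the ratio of price-index values (reference / original year)."""
    return cost * index_reference_year / index_cost_year

def convert_with_ppp(cost_local, ppp_local_per_target):
    """Convert using a PPP expressed as local currency units per one target unit."""
    return cost_local / ppp_local_per_target

# Hypothetical inputs: a cost of 1,000 reported when the index stood at 480.0,
# expressed in a reference year in which the index stands at 540.0.
cost_reference_year = adjust_to_reference_year(1000.0, 480.0, 540.0)

# Hypothetical PPP of 0.70 local currency units per target currency unit.
cost_target_currency = convert_with_ppp(cost_reference_year, 0.70)
```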

Protocol for Eliciting Health State Utility Values

Objective: To obtain preference-based weights (utilities) for each Markov health state, typically on a 0 (death) to 1 (perfect health) scale.

Materials:

- Published studies reporting utilities derived from generic preference-based instruments (EQ-5D, SF-6D, HUI).

- Primary data collection tools (if conducting de novo elicitation).

- Valuation algorithms (e.g., value sets for EQ-5D from countries like the UK or US).

Methodology:

- Literature First Approach: Conduct a targeted SLR for utility studies in the relevant disease and treatment context.

- Population Matching: Ensure the population in the utility source aligns with the model's target population (e.g., disease severity, age).

- Instrument Selection: Prefer utilities derived from instruments validated for the condition and linked to a recognized societal value set.

- Mapping (if necessary): If only disease-specific quality of life (QoL) data (e.g., EORTC QLQ-C30) are available, use validated mapping algorithms to predict utility values.

- Handling Uncertainty: Extract measures of variance (SD, SE, range) for probabilistic sensitivity analysis.

Data Synthesis and Tables

Table 1: Sourced Transition Probabilities for Suspected Liver Cancer Imaging Pathway

| Parameter Description | Base Case Value | Range for PSA (Distribution) | Source (Citation) | Notes/Assumptions |

|---|---|---|---|---|

| Annual incidence of HCC in cirrhosis | 0.08 | 0.04-0.12 (Beta) | Singal et al., 2022 | Annual incidence in surveillance cohort |

| Sensitivity of US for HCC | 0.84 | 0.78-0.89 (Beta) | Tzartzeva et al., 2018 | For lesions >2cm |

| Specificity of US for HCC | 0.91 | 0.88-0.94 (Beta) | Tzartzeva et al., 2018 | |

| Sensitivity of MRI (LI-RADS) | 0.92 | 0.87-0.96 (Beta) | Chernyak et al., 2021 | Using hepatobiliary contrast |

| Specificity of MRI (LI-RADS) | 0.88 | 0.82-0.92 (Beta) | Chernyak et al., 2021 | |

| Probability of curative treatment | 0.65 | 0.55-0.75 (Beta) | Registry Data, 2023 | Conditional on early stage diagnosis |
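
To show how the accuracy parameters in Table 1 feed a model, the sketch below splits a cohort into true and false positives and negatives. It treats the 0.08 base-case value as the pre-test probability of HCC among those imaged and evaluates each test on its own rather than in sequence; both are simplifying assumptions, and the function name is hypothetical.

```python
def classify_cohort(pretest_prob, sensitivity, specificity):
    """Split a cohort into TP/FN/TN/FP shares and derive predictive values."""
    tp = pretest_prob * sensitivity
    fn = pretest_prob * (1.0 - sensitivity)
    tn = (1.0 - pretest_prob) * specificity
    fp = (1.0 - pretest_prob) * (1.0 - specificity)
    return {
        "TP": tp, "FN": fn, "TN": tn, "FP": fp,
        "PPV": tp / (tp + fp),  # probability of disease given a positive test
        "NPV": tn / (tn + fn),  # probability of no disease given a negative test
    }

# Base-case values from Table 1.
ultrasound = classify_cohort(0.08, sensitivity=0.84, specificity=0.91)
mri = classify_cohort(0.08, sensitivity=0.92, specificity=0.88)
```

In a Markov model, the four shares typically determine which starting state or treatment branch each part of the cohort enters, with false positives incurring work-up costs and false negatives incurring delayed-diagnosis consequences.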

Table 2: Estimated Costs (2024 USD) for Pathway Components

| Cost Item | Base Case Value | Range for PSA (Distribution) | Source | Perspective & Notes |

|---|---|---|---|---|

| Abdominal Ultrasound | $290 | ±20% (Gamma) | Medicare Fee Schedule CPT 76705 | Professional + Technical |

| Multi-phasic Liver MRI | $1,250 | ±20% (Gamma) | Medicare Fee Schedule CPT 74183 | Includes contrast |

| Ultrasound-guided Biopsy | $1,100 | ±25% (Gamma) | Hospital Cost Report | Includes pathology |

| Early Stage HCC Treatment (Ablation) | $25,000 | ±30% (Gamma) | DRG-based Estimate | Inpatient procedure |

| Advanced Stage HCC Treatment (Systemic) | $12,000/month | ±30% (Gamma) | Average Sales Price (Drug) | First-line therapy |

| Yearly Follow-up (Stable Disease) | $4,000 | ±20% (Gamma) | Published CEA, Adjusted | Imaging + Consult |

Table 3: Health State Utility Weights

| Health State | Base Case Utility | Range for PSA (Distribution) | Source (Instrument/Value Set) | Description |

|---|---|---|---|---|

| No HCC (Cirrhosis) | 0.80 | 0.72-0.88 (Beta) | Younossi et al., 2019 (SF-6D/US) | Compensated cirrhosis |

| Post-curative treatment | 0.75 | 0.65-0.85 (Beta) | Parikh et al., 2020 (EQ-5D-5L/UK) | Year 1 after resection |

| On palliative therapy | 0.65 | 0.55-0.75 (Beta) | Llovet et al., 2018 (Mapping from EORTC) | Receiving systemic treatment |

| Terminal/End-of-Life Care | 0.50 | 0.40-0.60 (Beta) | Expert Elicitation Panel | Last 6 months of life |
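
The "Range for PSA (Distribution)" columns can be turned into sampling distributions by the method of moments. The sketch below is one possible approach, assuming each reported range behaves like an approximate 95% interval; that assumption, the helper names, and the seed are not from the source tables.

```python
import random

def se_from_range(low, high):
    """Assumption: treat the reported range as an approximate 95% interval."""
    return (high - low) / (2 * 1.96)

def beta_params(mean, se):
    """Method-of-moments Beta(alpha, beta) for probabilities and utilities."""
    common = mean * (1.0 - mean) / se ** 2 - 1.0
    if common <= 0:
        raise ValueError("standard error too large for a Beta distribution")
    return mean * common, (1.0 - mean) * common

def gamma_params(mean, se):
    """Method-of-moments Gamma(shape, scale) for costs."""
    return (mean / se) ** 2, se ** 2 / mean

rng = random.Random(2024)  # fixed seed so the draws are reproducible

# Utility for "No HCC (Cirrhosis)": base case 0.80, range 0.72-0.88.
alpha, beta = beta_params(0.80, se_from_range(0.72, 0.88))
utility_draws = [rng.betavariate(alpha, beta) for _ in range(5000)]

# Liver MRI cost: base case 1,250 with a range of plus or minus 20%.
shape, scale = gamma_params(1250.0, se_from_range(1000.0, 1500.0))
cost_draws = [rng.gammavariate(shape, scale) for _ in range(5000)]
```

Each PSA iteration would take one draw per parameter, rerun the model, and store the resulting costs and QALYs.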

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Parameter Sourcing |

|---|---|

| PRISMA Checklist & Flow Diagram | Ensures transparency and reproducibility in systematic literature review conduct and reporting. |

| Cochrane Risk of Bias Tool (ROB 2, ROBINS-I) | Assesses the methodological quality of randomized trials and observational studies, informing source weighting. |

| GDP Deflator / Medical CPI Calculator | Standardizes costs from different years to a common reference year for accurate comparison. |

| Probabilistic Sensitivity Analysis (PSA) Software | (e.g., R heemod, TreeAge, SAS) Facilitates running the model thousands of times using parameter distributions to assess uncertainty. |

| Utility Mapping Algorithms | Published statistical models (e.g., regression equations) that map from disease-specific QoL scores to generic utility values. |

| Valuation Tariffs | Country-specific value sets (e.g., EQ-5D-5L Crosswalk Index Value Calculator) to convert descriptive system responses into a single utility index. |

Model Parameter Integration Workflow

Title: Workflow for Sourcing and Incorporating Model Parameters

Parameter Uncertainty and Distributions Logic

Title: Logic for Assigning Parameter Distributions in PSA

Within a Markov model for cost-effectiveness analysis (CEA) of diagnostic imaging pathways, Step 4 involves three interdependent structural decisions that fundamentally shape the model's validity and output. The time horizon defines the period over which costs and health outcomes are accrued. The cycle length determines the frequency at which patients can transition between health states. The analytical perspective (e.g., healthcare sector, societal) dictates which costs and outcomes are relevant. These choices must align with the clinical natural history of the condition being studied and the decision problem.

Core Concepts and Current Guidelines

Time Horizon

The time horizon must be sufficient to capture all relevant differences in costs and outcomes between the compared imaging pathways. For chronic conditions or cancers, a lifetime horizon is often recommended. A shorter horizon may be appropriate for acute, self-limiting conditions.

Current Guidance (ISPOR, NICE Guidelines):

- ISPOR Good Practices Task Force (2022) recommends aligning the time horizon with the study perspective and the disease course. A lifetime horizon is standard for chronic diseases.

- NICE Reference Case (2024) mandates a lifetime horizon for most CEAs, unless a shorter horizon can be justified as capturing all important differences.

- In imaging, the horizon must cover downstream consequences of diagnostic accuracy (e.g., delayed diagnosis, unnecessary treatment).

Cycle Length

The cycle length is the model's time step. It should be short enough to accurately approximate the timing of clinical events (e.g., disease progression, recurrence) and to allow no more than one transition per cycle.

Current Practice (Modeling Literature):

- Common cycle lengths range from 1 week to 1 year.

- For rapidly changing post-imaging states (e.g., post-procedural complications, short-term recovery), a shorter initial cycle (e.g., 1 month) may be used before switching to a longer cycle.

- Key Consideration: The Half-Cycle Correction must be applied to both cost and outcome accruals to avoid systematic bias.
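
The effect of the half-cycle correction can be seen in a small sketch. It assumes a two-state (alive/dead) cohort with a hypothetical constant death probability of 0.10 per annual cycle and implements the correction as the average of state membership at the start and end of each cycle.

```python
def life_years(alive_trace, cycle_years, half_cycle=True):
    """Sum time alive from the proportions alive at each cycle boundary."""
    if half_cycle:
        # Average membership at the start and end of each cycle.
        per_cycle = [(alive_trace[i] + alive_trace[i + 1]) / 2.0
                     for i in range(len(alive_trace) - 1)]
    else:
        # Start-of-cycle membership only; overestimates when the cohort shrinks.
        per_cycle = alive_trace[:-1]
    return sum(per_cycle) * cycle_years

P_DEATH = 0.10  # hypothetical per-cycle death probability
alive = [(1.0 - P_DEATH) ** k for k in range(21)]  # 20 annual cycles

uncorrected = life_years(alive, cycle_years=1.0, half_cycle=False)
corrected = life_years(alive, cycle_years=1.0, half_cycle=True)
```

The gap between the two totals equals half the difference between the first and last trace entries times the cycle length, so the bias shrinks as cycles get shorter.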

Analytical Perspective

The perspective determines whose costs and benefits count. This choice is ethical and policy-driven, dictating cost inclusion.

Standard Perspectives:

- Healthcare Sector/Payer: Includes direct medical costs only. Most common for US models.

- Societal: Includes all direct medical costs, patient time, transportation, productivity losses. Recommended by the US Panel on Cost-Effectiveness in Health and Medicine (2016) as one of two reference case perspectives, alongside the healthcare sector perspective.

Table 1: Decision Criteria for Time Horizon and Cycle Length in Imaging Pathway Models

| Parameter | Typical Range | Key Determinants | Common Choice in Imaging CEA | Impact on Model |

|---|---|---|---|---|

| Time Horizon | Short-term (<1 yr) to Lifetime | Disease natural history, intervention effects duration, policy question. | Lifetime for cancer; 1-5 years for non-life-threatening chronic disease. | Drives outcome (QALY) differences; too short a horizon biases against preventive strategies. |

| Cycle Length | 1 week to 1 year | Frequency of clinical events, data availability on transition probabilities, computational burden. | 1 month for acute phase/post-procedure; 3-12 months for long-term follow-up. | Affects accuracy of state transition approximation; influences need for half-cycle correction. |

Table 2: Comparison of Analytical Perspectives

| Perspective | Costs Included | Outcomes Included | Recommended By | Use Case in Imaging |

|---|---|---|---|---|

| Healthcare Payer | Direct medical costs only (imaging, drugs, hospitalization, professional fees). | Health outcomes (QALYs, LYs) accrued to patient. | NICE, many US payers. | Standard submission to health insurance or national payer. |

| Societal | All direct medical costs + patient time, travel, informal care, productivity losses/morbidity. | Health outcomes (QALYs, LYs) accrued to patient. | US Panel on CEA (2016), WHO. | Broad policy assessment, public health planning. |

Experimental Protocols

Protocol 1: Determining an Appropriate Time Horizon

Objective: To justify the selection of the model's time horizon based on the clinical context of the imaging pathway.

Methodology:

- Conduct Systematic Scoping Review: Review clinical guidelines and longitudinal cohort studies to map the natural history of the target disease from pre-diagnosis to final outcome (cure, chronic management, death).

- Identify Critical Events: Pinpoint key clinical events influenced by imaging (e.g., time to correct diagnosis, time to treatment initiation, recurrence monitoring windows).

- Model-Based Survival Extrapolation: If clinical data are censored, use statistical models (e.g., parametric survival analysis with Weibull, Gompertz distributions) to extrapolate long-term survival curves for each relevant health state.

- Decision Rule: Set the time horizon equal to the time point at which either:

- The survival curves for all comparator strategies converge, or

- Fewer than 1% of the simulated cohort remains alive (for lifetime models).

- Sensitivity Analysis: Plan to vary the time horizon in scenario analyses (e.g., 10 years vs. lifetime) to test its impact on the incremental cost-effectiveness ratio (ICER).
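
The second decision rule (fewer than 1% of the cohort alive) can be checked with a few lines of code. The sketch assumes a constant per-cycle death probability, which is a simplification; a real model would read survival from the extrapolated curves. The function name and the 0.10 value are hypothetical.

```python
def cycles_until_fraction_alive(p_death_per_cycle, threshold=0.01, max_cycles=10_000):
    """Return the first cycle at which the surviving fraction drops below threshold."""
    alive = 1.0
    for cycle in range(1, max_cycles + 1):
        alive *= 1.0 - p_death_per_cycle
        if alive < threshold:
            return cycle
    raise ValueError("threshold not reached within max_cycles")

# Hypothetical constant annual death probability of 0.10.
horizon_cycles = cycles_until_fraction_alive(0.10)
```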

Protocol 2: Calibrating Cycle Length via Cohort Simulation Checks

Objective: To select a cycle length that minimizes discretization error without unnecessary computational complexity.

Methodology:

- Define Candidate Cycle Lengths: Based on clinical event timing, propose 2-3 candidate cycle lengths (e.g., 1 month, 3 months, 1 year).

- Build Parallel Model Shells: Create simplified versions of the Markov model implementing each candidate cycle length.

- Input Test Transition Probabilities: Use a set of known, constant monthly transition probabilities for a test disease progression.

- Run Cohort Simulation: Run each model for a fixed period (e.g., 10 years) without half-cycle correction.

- Calculate Discretization Error: Compare the model-predicted proportion of patients in each health state at the end of the period against the expected proportion from a continuous-time microsimulation or analytical solution.

- Selection Criteria: Choose the longest cycle length where the absolute error in state membership is <1% for all states. Apply half-cycle correction to the final model.
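
The cycle-length check can be sketched for a three-state test model (Healthy, Sick, Dead) that has a closed-form continuous-time solution. The rates are hypothetical, and the sketch starts from constant rates rather than monthly probabilities so that each candidate cycle length can be parameterized consistently.

```python
import math

RATE_HS = 0.10  # hypothetical Healthy -> Sick rate per year
RATE_SD = 0.30  # hypothetical Sick -> Dead rate per year

def continuous_solution(t):
    """Exact state occupancy at time t for the continuous-time process."""
    healthy = math.exp(-RATE_HS * t)
    sick = RATE_HS / (RATE_SD - RATE_HS) * (
        math.exp(-RATE_HS * t) - math.exp(-RATE_SD * t))
    return [healthy, sick, 1.0 - healthy - sick]

def discrete_solution(cycle_years, horizon_years):
    """Markov cohort model allowing at most one transition per cycle."""
    p_hs = 1.0 - math.exp(-RATE_HS * cycle_years)
    p_sd = 1.0 - math.exp(-RATE_SD * cycle_years)
    healthy, sick, dead = 1.0, 0.0, 0.0
    for _ in range(round(horizon_years / cycle_years)):
        healthy, sick, dead = (healthy * (1.0 - p_hs),
                               sick * (1.0 - p_sd) + healthy * p_hs,
                               dead + sick * p_sd)
    return [healthy, sick, dead]

def max_abs_error(cycle_years, horizon_years=10.0):
    """Largest absolute difference in state membership at the horizon."""
    exact = continuous_solution(horizon_years)
    approx = discrete_solution(cycle_years, horizon_years)
    return max(abs(a - e) for a, e in zip(approx, exact))

candidates = [1.0 / 12.0, 0.25, 1.0]  # 1 month, 3 months, 1 year
errors = {c: max_abs_error(c) for c in candidates}
acceptable = [c for c in candidates if errors[c] < 0.01]
selected = max(acceptable) if acceptable else min(candidates)
```

The error arises because the discrete model cannot move a patient from Healthy through Sick to Dead within a single cycle, so longer cycles overstate the Sick share.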

Protocol 3: Operationalizing the Societal Perspective

Objective: To comprehensively identify, measure, and value non-medical costs for inclusion in a societal perspective CEA of an imaging pathway.

Methodology:

- Stakeholder Mapping: Identify all parties bearing costs: patient, family/caregivers, employers, healthcare system.

- Micro-costing Study Design:

- Patient Time: Use time-and-motion study or patient diary to record hours spent on imaging appointment (travel, waiting, procedure, recovery). Value using the human capital approach (average wage rate + fringe benefits) or friction cost method.

- Transportation Costs: Collect data on round-trip distance, mode of transport. Apply national standard mileage rates or public transit fares.

- Productivity Losses: For employed patients, use the Work Productivity and Activity Impairment (WPAI) questionnaire specific to the disease, linked to wage data.

- Informal Care: Measure caregiver hours using instruments like the Resource Utilization in Dementia (RUD) Lite questionnaire. Value using the opportunity cost method (caregiver's forgone wage).

- Incorporation into Model: These costs are typically applied as one-time or recurring "add-ons" to the relevant health states in the Markov model (e.g., a "Diagnostic Testing" state incurs travel and time costs).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Markov Model Structural Design

| Item / Resource | Function in Step 4 | Example / Provider |

|---|---|---|

| R (heemod package) / TreeAge Pro | Software to build, run, and test Markov models with different cycle lengths and time horizons. Facilitates probabilistic sensitivity analysis. | heemod R package (open-source); TreeAge Pro (commercial). |

| ISPOR CHEERS 2022 Checklist | Reporting guideline ensuring transparent documentation of time horizon, perspective, and cycle length justification. | International Society for Pharmacoeconomics and Outcomes Research. |

| Human Capital Cost Parameters | National average wage data with fringe benefits, used to value patient and caregiver time. | US Bureau of Labor Statistics (BLS) reports. |

| Standardized Cost Databases | Sources for direct medical costs (e.g., imaging procedure costs, drug costs). | Medicare Physician Fee Schedule, Healthcare Cost and Utilization Project (HCUP). |

| Survival Analysis Software | Tools for parametric extrapolation of time-to-event data to inform lifetime horizons. | R (flexsurv, survival packages); SAS (PROC LIFEREG). |

Visualizations

Title: Interdependence of Key Structural Choices in Markov Modeling

Title: Cost & Outcome Inputs by Analytical Perspective

Application Notes

This protocol details the final analytical step within a Markov model-based cost-effectiveness analysis (CEA) for imaging pathways. The primary outcomes are Quality-Adjusted Life Years (QALYs) and the Incremental Cost-Effectiveness Ratio (ICER), which inform decision-making on the value of a new imaging strategy compared to the standard of care. QALYs combine the quantity and quality of life lived in specific health states from the Markov model. The ICER quantifies the additional cost per additional QALY gained, providing a standardized metric for economic evaluation against willingness-to-pay thresholds.

Experimental Protocols

Protocol 1: Calculation of Total Expected Costs and QALYs

This protocol aggregates the outputs from the Markov cohort simulation to produce summary results for each compared imaging pathway.

- Input: For each strategy (e.g., Standard Imaging vs. Advanced Imaging), obtain the Markov trace results from Step 4. This includes the cohort distribution across health states per model cycle.

- Cost Aggregation: For each strategy, sum the discounted costs accumulated over all model cycles and across all health states. Use the formula:

Total Cost = Σ (Number of patients in state * Cost of state) per cycle, summed over all cycles, with appropriate discounting (e.g., 3% annually).

- QALY Calculation: For each strategy, calculate the total QALYs by summing the product of the cohort's time in each health state and the state's utility weight (preference score), adjusted for cycle length. Use the formula:

Total QALYs = Σ (Number of patients in state * Utility weight of state * Cycle length) per cycle, summed over all cycles, with appropriate discounting.

- Output: A table of total discounted costs and total discounted QALYs for each imaging strategy under analysis.
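
The two aggregation formulas can be sketched as a single pass over the Markov trace. The example is illustrative: the trace, per-cycle state costs, and utilities are hypothetical, the trace holds cohort proportions rather than patient counts, and no half-cycle correction is applied.

```python
def discounted_totals(trace, state_costs, state_utilities,
                      cycle_years=1.0, annual_rate=0.03):
    """Return (total discounted cost, total discounted QALYs) per cohort member."""
    total_cost = 0.0
    total_qalys = 0.0
    for cycle, distribution in enumerate(trace):
        # Discount factor for the time elapsed at the start of this cycle.
        discount = 1.0 / (1.0 + annual_rate) ** (cycle * cycle_years)
        cost = sum(share * c for share, c in zip(distribution, state_costs))
        qalys = sum(share * u for share, u in zip(distribution, state_utilities))
        total_cost += cost * discount
        total_qalys += qalys * cycle_years * discount
    return total_cost, total_qalys

# Hypothetical three-cycle trace over the states (Stable, Progressed, Dead).
example_trace = [[1.00, 0.00, 0.00],
                 [0.80, 0.15, 0.05],
                 [0.64, 0.25, 0.11]]
example_costs = [1000.0, 5000.0, 0.0]  # cost per cycle spent in each state
example_utilities = [0.80, 0.50, 0.0]  # utility weight of each state

total_cost, total_qalys = discounted_totals(
    example_trace, example_costs, example_utilities)
```

Running the function once per strategy yields the rows of the output table described above.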

Protocol 2: Calculation of the Incremental Cost-Effectiveness Ratio (ICER)

This protocol determines the comparative value of one strategy over another.

- Input: Total discounted Costs (C) and QALYs (E) for each strategy from Protocol 1.

- Strategy Ordering: Order strategies from least to most expensive based on total cost.

- Dominance Check:

- Simple Dominance: If Strategy B has higher costs and lower QALYs than Strategy A, Strategy B is dominated and excluded.

- Extended Dominance: If a strategy's ICER (relative to the next less costly non-dominated strategy) is higher than the ICER of the next more effective strategy, it is extendedly dominated and excluded.

- ICER Calculation: For each remaining non-dominated strategy, calculate the ICER relative to the next less costly non-dominated strategy using the formula:

ICER = (C_New - C_Standard) / (E_New - E_Standard)

This represents the additional cost required to gain one additional QALY by adopting the new imaging pathway.

- Interpretation: Compare the calculated ICER to a pre-defined cost-effectiveness threshold (e.g., $50,000 - $150,000 per QALY, jurisdiction-dependent) to determine if the new strategy is considered cost-effective.

Data Presentation

Table 1: Summary of Cost-Effectiveness Results for Hypothetical Imaging Pathways

| Imaging Strategy | Total Discounted Cost (USD) | Total Discounted QALYs | Incremental Cost (USD) | Incremental QALYs | ICER (USD/QALY) | Status vs. Threshold ($100k/QALY) |

|---|---|---|---|---|---|---|

| Standard CT (A) | $42,500 | 8.20 | - | - | - | Reference |

| Advanced PET/CT (B) | $48,750 | 8.55 | $6,250 | 0.35 | $17,857 | Cost-Effective |

| Experimental MRI (C) | $59,000 | 8.60 | $10,250* | 0.05* | $205,000 | Not Cost-Effective |

Note: *Incremental values for Strategy C are calculated vs. Strategy B (the next non-dominated option). Strategy C is not dominated, but its ICER vs. B ($205,000/QALY) exceeds the $100,000/QALY threshold, so B is the optimal strategy at that threshold.
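
The ordering, dominance checks, and ICER calculation can be sketched as follows, using the totals from Table 1. The function names are hypothetical, and the code is a minimal illustration of the frontier logic, not a replacement for dedicated CEA software.

```python
def efficiency_frontier(strategies):
    """strategies: list of (name, cost, qalys). Returns (name, cost, qalys, icer)."""
    ordered = sorted(strategies, key=lambda s: (s[1], -s[2]))
    # Simple dominance: drop strategies no more effective than a cheaper one.
    kept = []
    for s in ordered:
        if kept and s[2] <= kept[-1][2]:
            continue
        kept.append(s)
    # Extended dominance: drop a strategy whose ICER exceeds that of the
    # next more effective strategy, then re-check the remaining set.
    changed = True
    while changed:
        changed = False
        for i in range(1, len(kept) - 1):
            icer_here = (kept[i][1] - kept[i - 1][1]) / (kept[i][2] - kept[i - 1][2])
            icer_next = (kept[i + 1][1] - kept[i][1]) / (kept[i + 1][2] - kept[i][2])
            if icer_here > icer_next:
                del kept[i]
                changed = True
                break
    results = [(kept[0][0], kept[0][1], kept[0][2], None)]  # reference strategy
    for prev, cur in zip(kept, kept[1:]):
        icer = (cur[1] - prev[1]) / (cur[2] - prev[2])
        results.append((cur[0], cur[1], cur[2], icer))
    return results

def optimal_at_threshold(frontier, threshold):
    """Most effective frontier strategy whose ICER does not exceed the threshold."""
    best = frontier[0][0]
    for name, _, _, icer in frontier[1:]:
        if icer <= threshold:
            best = name
    return best

table_1 = [("Standard CT (A)", 42500.0, 8.20),
           ("Advanced PET/CT (B)", 48750.0, 8.55),
           ("Experimental MRI (C)", 59000.0, 8.60)]
frontier = efficiency_frontier(table_1)
choice = optimal_at_threshold(frontier, threshold=100000.0)
```

With the Table 1 inputs, all three strategies remain on the frontier, and the threshold comparison rather than a dominance rule is what excludes Strategy C.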

The Scientist's Toolkit: Research Reagent Solutions

| Item / Tool | Function in CEA of Imaging Pathways |

|---|---|

| Markov Modeling Software (e.g., TreeAge Pro, R heemod, Microsoft Excel with VBA) | Platform for constructing, populating, and running the multi-state Markov model to simulate patient pathways. |

| Utility Weight Catalog (e.g., EQ-5D, SF-6D population norms, disease-specific value sets) | Source of preference-based health state utility scores (0-1 scale) essential for calculating QALYs. |

| Costing Database (e.g., Medicare Physician Fee Schedule, Hospital Cost Reports, published literature) | Source of unit costs for imaging procedures, treatments, and health state management. |

| Discounting Calculator | Tool to apply annual discount rates (e.g., 3%) to future costs and QALYs to reflect present value. |

| Probabilistic Sensitivity Analysis (PSA) Tool | Software module to run Monte Carlo simulations, varying all input parameters simultaneously to characterize uncertainty and generate cost-effectiveness acceptability curves. |

Visualizations

Title: QALY and ICER Calculation Workflow

Title: ICER Calculation & Decision Logic

Navigating Challenges: Common Pitfalls and Advanced Optimization Techniques in Markov Modeling

Application Notes

In cost-effectiveness analyses (CEA) of chronic, progressive diseases (e.g., Alzheimer's disease, liver fibrosis, many cancers) using Markov models, the standard Markovian assumption of memorylessness is a critical limitation. The Markov property states that the probability of transitioning to a future health state depends solely on the current state, not on the history of how the patient arrived there. For progressive diseases, where the duration in a state or the accumulation of past damage often dictates future progression risk, this assumption is frequently violated. This necessitates specific modeling strategies to maintain analytical validity.

Table 1: Impact of Memoryless Assumption on Progressive Disease Modeling

| Disease Example | Standard Markov State | Key Historical Factor Ignored | Consequence of Ignoring History |

|---|---|---|---|

| Alzheimer's Disease | Mild Cognitive Impairment (MCI) | Time spent in MCI, specific cognitive test score trajectory | Under/overestimation of progression to dementia, biased cost and utility estimates. |

| Liver Fibrosis (NASH) | Fibrosis Stage F2 | Rate of fibrosis increase, prior biomarker levels (e.g., ELF score) | Inaccurate prediction of time to cirrhosis (F4), misallocation of monitoring resources. |

| Oncology (PFS/OS) | Progression-Free Survival (PFS) | Time since treatment initiation, depth of initial response | Flawed estimation of subsequent overall survival (OS) and post-progression treatment costs. |

Protocols for Advanced Markov Modeling in Progressive Diseases

Protocol 1: Implementing Tunnel States to Capture Time-Dependency

Objective: To model the increased risk of progression associated with longer dwell times in a given health state.

Methodology:

- Deconstruct the State: Divide a monolithic health state (e.g., "Moderate Disease") into a series of identical, sequential sub-states ("Tunnel States").

- Define Transition Probabilities: Allow transitions only from one tunnel state to the next, or to a "worse" health state. The probability of progressing to a worse health state typically increases with each successive tunnel state.

- Assign Costs & Utilities: These can remain constant or vary across tunnel states (e.g., decreasing utility with longer disease duration).

- Analysis: Run the modified Markov model. The patient cohort's progression is now a function of both the state and the time spent within that state's tunnel, introducing memory.
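
A minimal sketch of the tunnel-state approach is shown below. It assumes three annual tunnel sub-states for a "Moderate" state, with the last sub-state holding everyone who has been in the state for three or more cycles; all probabilities and names are hypothetical.

```python
# Hypothetical per-cycle probabilities; progression risk rises with dwell time.
P_PROGRESS_BY_TUNNEL = [0.05, 0.10, 0.20]
P_DEATH_MODERATE = 0.02
P_DEATH_SEVERE = 0.15

def step(tunnels, severe, dead):
    """Advance the cohort by one cycle through the tunnel structure."""
    new_tunnels = [0.0] * len(tunnels)
    new_severe = severe * (1.0 - P_DEATH_SEVERE)
    new_dead = dead + severe * P_DEATH_SEVERE
    last = len(tunnels) - 1
    for k, share in enumerate(tunnels):
        stay = 1.0 - P_PROGRESS_BY_TUNNEL[k] - P_DEATH_MODERATE
        # Survivors who do not progress move to the next tunnel sub-state;
        # the final sub-state loops back onto itself.
        new_tunnels[min(k + 1, last)] += share * stay
        new_severe += share * P_PROGRESS_BY_TUNNEL[k]
        new_dead += share * P_DEATH_MODERATE
    return new_tunnels, new_severe, new_dead

tunnels, severe, dead = [1.0, 0.0, 0.0], 0.0, 0.0
history = []
for _ in range(10):
    tunnels, severe, dead = step(tunnels, severe, dead)
    history.append((list(tunnels), severe, dead))
```

Each tunnel sub-state is still memoryless on its own; the dependence on dwell time comes from which sub-state a patient occupies.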

Diagram 1: Tunnel States for a Progressive Disease Stage
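
The tunnel-state protocol above can be sketched as a small cohort trace. This is a minimal illustration under stated assumptions, not a reference implementation: the three-state structure (Moderate, Severe, Dead), the function name `run_tunnel_cohort`, and all probabilities are hypothetical, and death is allowed only from the Severe state to keep the example short.

```python
def run_tunnel_cohort(progress_probs, death_prob, n_cycles):
    """Cohort trace for Moderate (split into tunnel states) -> Severe -> Dead.

    progress_probs[i] is the per-cycle probability of moving from tunnel
    state i to Severe. Patients who do not progress move to the next tunnel
    state; the last tunnel state loops on itself.
    """
    n_tunnels = len(progress_probs)
    moderate = [1.0] + [0.0] * (n_tunnels - 1)  # whole cohort starts in tunnel 0
    severe, dead = 0.0, 0.0
    trace = []
    for _ in range(n_cycles):
        new_moderate = [0.0] * n_tunnels
        new_severe = severe * (1.0 - death_prob)
        new_dead = dead + severe * death_prob
        for i, share in enumerate(moderate):
            next_tunnel = min(i + 1, n_tunnels - 1)
            new_moderate[next_tunnel] += share * (1.0 - progress_probs[i])
            new_severe += share * progress_probs[i]
        moderate, severe, dead = new_moderate, new_severe, new_dead
        trace.append((sum(moderate), severe, dead))
    return trace


# Hypothetical inputs: progression risk rises with time spent in Moderate.
trace = run_tunnel_cohort([0.05, 0.10, 0.20, 0.30], death_prob=0.15, n_cycles=10)
for cycle, (mod, sev, dead) in enumerate(trace, start=1):
    print(f"cycle {cycle:2d}: moderate={mod:.3f} severe={sev:.3f} dead={dead:.3f}")
```

Each tunnel state is still memoryless on its own; the time dependence comes entirely from where in the tunnel a patient sits, which is why the number of tunnel states grows with the length of history that needs to be tracked.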

Protocol 2: Developing a Semi-Markov (Coxian) Model Structure

Objective: To directly incorporate time-to-event data and history-dependent transition rates.

Methodology:

- Model Structure: Use a Coxian phase-type model. The disease pathway is represented as a series of transient states (phases of the disease), with possible transitions to an absorbing state (e.g., Death or Severe Disability).

- Parameterization: Fit phase-type distributions (e.g., Erlang, hyperexponential) to observed time-to-progression or survival data using maximum likelihood estimation.

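- State Transition: Each latent phase is itself memoryless, but the total time spent across the phases of an observed state follows a phase-type distribution. The hazard of leaving that state can therefore vary with time in state, which relaxes the memoryless property at the level of the observed health state.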

- Integration with CEA: Attach cost and utility weights to each phase. Run a state-transition simulation based on the fitted time-dependent hazards.

Diagram 2: Coxian Semi-Markov Model Structure
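
To illustrate why a chain of phases produces a time-varying hazard, the sketch below computes the per-cycle hazard of leaving a state that is represented by hidden Coxian phases. It is a discrete-time analogue chosen for readability (phase-type distributions are usually specified in continuous time), it does not perform the maximum likelihood fitting step, and the function name `coxian_hazard` and all probabilities are hypothetical.

```python
def coxian_hazard(advance, exit_probs, n_cycles):
    """Per-cycle hazard of leaving a state modelled as hidden Coxian phases.

    advance[i]    : probability of moving from phase i to phase i + 1 in a cycle
    exit_probs[i] : probability of leaving the state entirely from phase i
    The hazard at each cycle is P(leave this cycle | still in the state).
    """
    k = len(exit_probs)
    if len(advance) != k - 1:
        raise ValueError("advance needs one entry per phase except the last")
    occupancy = [1.0] + [0.0] * (k - 1)  # everyone enters in the first phase
    hazards = []
    for _ in range(n_cycles):
        remaining = sum(occupancy)
        leaving = sum(occ * e for occ, e in zip(occupancy, exit_probs))
        hazards.append(leaving / remaining)
        updated = [0.0] * k
        for i, occ in enumerate(occupancy):
            move = advance[i] if i < k - 1 else 0.0
            updated[i] += occ * (1.0 - exit_probs[i] - move)
            if i < k - 1:
                updated[i + 1] += occ * move
        occupancy = updated
    return hazards


# Hypothetical three-phase state: later phases carry a higher exit risk.
hazards = coxian_hazard(advance=[0.3, 0.3], exit_probs=[0.02, 0.10, 0.30], n_cycles=15)
print([round(h, 3) for h in hazards])
```

Although every phase has constant probabilities, the observed hazard rises over time because the surviving cohort gradually shifts into the later, higher-risk phases.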

Protocol 3: Microsimulation (Individual State-Transition) Modeling

Objective: To track a full set of time-varying patient attributes (e.g., biomarker scores, cumulative drug dose) for each simulated individual over their lifetime.

Methodology:

- Define Patient Profiles: Create a large cohort (e.g., n=100,000) of simulated patients with baseline characteristics drawn from relevant distributions.

- Program History-Dependent Rules: For each cycle, calculate transition probabilities for an individual as a function of their entire history (e.g., P(Progression) = f(baseline risk, current biomarker, time in state, prior treatments)).

- Run Stochastic Simulation: Use random number generators to determine outcomes for each patient in each cycle, updating their history vector accordingly.

- Aggregate Results: Sum costs and QALYs across all simulated patients to generate cohort-level results for CEA.

Diagram 3: Microsimulation Modeling Workflow
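
The workflow above can be sketched with the Python standard library alone. Everything specific here is an assumption made for illustration: the three states, the biomarker drift, the risk function, the costs, utilities, and the 3% discount rate are hypothetical and are not taken from any study.

```python
import random


def simulate_patient(rng, max_cycles=40):
    """Simulate one patient; returns (discounted cost, discounted QALYs)."""
    biomarker = rng.gauss(1.0, 0.2)  # hypothetical baseline biomarker level
    state, time_in_state = "stable", 0
    cost = qalys = 0.0
    for cycle in range(max_cycles):
        if state == "dead":
            break
        discount = 1.0 / (1.03 ** cycle)
        if state == "stable":
            # History-dependent risk: rises with biomarker level and dwell time.
            p_progress = min(0.9, 0.03 * max(biomarker, 0.0) * (1 + 0.15 * time_in_state))
            cost += 1000 * discount
            qalys += 0.85 * discount
            if rng.random() < p_progress:
                state, time_in_state = "progressed", 0
            else:
                time_in_state += 1
                biomarker += rng.gauss(0.05, 0.02)  # biomarker drifts upward
        else:  # progressed
            cost += 8000 * discount
            qalys += 0.55 * discount
            if rng.random() < 0.20:
                state = "dead"
            else:
                time_in_state += 1
    return cost, qalys


def run_microsimulation(n_patients=10_000, seed=1):
    """Aggregate individual results into mean cost and mean QALYs per patient."""
    rng = random.Random(seed)
    results = [simulate_patient(rng) for _ in range(n_patients)]
    mean_cost = sum(c for c, _ in results) / n_patients
    mean_qalys = sum(q for _, q in results) / n_patients
    return mean_cost, mean_qalys


mean_cost, mean_qalys = run_microsimulation()
print(f"mean cost = {mean_cost:.0f}, mean QALYs = {mean_qalys:.2f}")
```

A fixed seed makes the run reproducible; in practice the number of simulated patients would be increased until the Monte Carlo error in the mean outcomes is small relative to the differences between strategies.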

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Progressive Disease Markov Modeling Research

| Item / Solution | Function in Model Development & Validation |
|---|---|
| R (with hesim, flexsurv, mstate packages) | Open-source statistical platform for building advanced Markov, semi-Markov, and microsimulation models, and fitting survival distributions. |
| TreeAge Pro Healthcare | Specialized commercial software with built-in support for tunnel states, time-dependent transitions, and microsimulation, streamlining CEA. |
| Patient-Level Clinical Trial Data | Source for estimating history-dependent parameters, such as time-to-event curves and longitudinal biomarker trajectories. |
| Excel with VBA | Prototyping environment for discrete-event microsimulation models; allows full customization of patient history tracking logic. |
| Kaplan-Meier Estimator Outputs | Non-parametric survival curves used to validate and calibrate the transition probabilities within the Markov model. |
| Advanced Continuous Biomarkers (e.g., Plasma p-tau217, ELF Test) | Quantitative measures that can be modeled as continuous variables within microsimulation to inform progression risk, adding "memory". |

Within cost-effectiveness analyses (CEAs) of diagnostic imaging pathways using Markov models, complexity arises from numerous health states, transition probabilities, and resource utilization parameters. Excessive complexity can obscure insights, increase computational burden, and introduce parameter uncertainty. This document provides application notes and protocols for strategically simplifying such models while preserving their scientific validity and decision relevance.

Application Notes

Note 1: State Aggregation Protocol

Rationale: Reducing the number of health states by aggregating clinically similar states with comparable costs and utilities. Validity Check: Aggregated states must not mask important clinical or economic outcomes. The incremental cost-effectiveness ratio (ICER) sensitivity to aggregation should be tested.

Note 2: Transition Probability Simplification

Rationale: Using constant, time-homogeneous probabilities for stable disease phases instead of complex, time-varying functions. Validity Check: Apply to phases where empirical evidence shows minimal change in hazard rates. Conduct a threshold analysis on the simplification assumption.

Note 3: Tunnel State Elimination

Rationale: Replacing detailed "tunnel states" (tracking time-in-state) with adjusted transition probabilities or memoryless structures where possible. Validity Check: Compare model outcomes (e.g., lifetime costs, QALYs) with and without tunnel states over a range of plausible inputs.

Note 4: Cycle Length Optimization

Rationale: Using the longest justifiable cycle length (e.g., 1 year vs. 1 month) to reduce computational steps. Validity Check: Ensure cycle length does not misrepresent the timing of critical clinical events (e.g., progression, adverse events).
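
When the cycle length changes, transition probabilities must be rescaled rather than simply divided or multiplied. A minimal sketch, assuming a constant underlying event rate over the interval and a single transition out of the state (models with several competing transitions need the conversion done on the full rate matrix); the function name `rescale_probability` and the 20% annual probability are hypothetical.

```python
def rescale_probability(p, from_length, to_length):
    """Convert a transition probability between cycle lengths.

    Assumes a constant rate, so survival over the new cycle is the old
    survival raised to the ratio of cycle lengths (same time unit for both).
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    return 1.0 - (1.0 - p) ** (to_length / from_length)


annual = 0.20  # hypothetical annual progression probability
monthly = rescale_probability(annual, from_length=12, to_length=1)
print(f"monthly probability = {monthly:.5f} (naive annual/12 = {annual / 12:.5f})")
```

Compounding the monthly value over 12 cycles recovers the annual probability, which the naive division does not.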

Experimental Protocols

Protocol 1: Stepwise Simplification and Validation

Objective: To implement and validate a sequence of complexity-reducing maneuvers in a Markov model for imaging pathway CEA.

Materials: Base-case complex model, probabilistic sensitivity analysis (PSA) dataset, statistical software (R, TreeAge, SAS).

Procedure:

- Baseline Output Generation: Run the complex model to establish reference outputs (ICER, mean costs, mean effectiveness).

- Apply Simplification: Implement one simplification strategy (e.g., state aggregation).

- Deterministic Comparison: Compare simplified vs. complex model outputs across a range of key input parameters.

- Probabilistic Comparison: Run PSA (10,000 iterations) on both models. Calculate the correlation of results and the percentage of iterations where the ICER conclusion (e.g., cost-effective vs. not) differs.

- Acceptance Criterion: If the conclusion difference is <2% of PSA iterations and the mean ICER difference is <5%, the simplification is accepted.

- Iterate: Proceed to the next simplification step using the accepted simplified model as the new baseline.
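
The acceptance criterion in this procedure can be written as a small check. This is a sketch under assumptions: both models are run on the same PSA parameter draws so that iterations are paired, the cost-effectiveness conclusion is taken as the sign of the incremental net monetary benefit at a chosen willingness-to-pay threshold, and the function name `simplification_accepted` and the example numbers are hypothetical.

```python
def simplification_accepted(inc_nmb_complex, inc_nmb_simple,
                            icer_complex, icer_simple,
                            max_discordance=0.02, max_icer_diff=0.05):
    """Apply the <2% discordance and <5% mean ICER difference rule.

    inc_nmb_*: per-iteration incremental NMB (new pathway vs. comparator)
               from paired PSA runs of the complex and simplified models.
    icer_*   : mean ICER of each model.
    Returns (accepted, discordance, relative ICER difference).
    """
    if len(inc_nmb_complex) != len(inc_nmb_simple):
        raise ValueError("PSA runs must be paired, iteration by iteration")
    n = len(inc_nmb_complex)
    # An iteration is discordant when the two models disagree on whether
    # the new pathway is cost-effective (positive incremental NMB).
    discordant = sum((a > 0) != (b > 0)
                     for a, b in zip(inc_nmb_complex, inc_nmb_simple))
    discordance = discordant / n
    icer_diff = abs(icer_simple - icer_complex) / abs(icer_complex)
    accepted = discordance < max_discordance and icer_diff < max_icer_diff
    return accepted, discordance, icer_diff


# Hypothetical paired results from 100 PSA iterations.
complex_nmb = [100.0] * 98 + [-50.0] * 2
simple_nmb = [90.0] * 97 + [-10.0] + [-40.0] * 2
print(simplification_accepted(complex_nmb, simple_nmb, 25_000.0, 25_800.0))
```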

Protocol 2: Calibrating Simplified Transition Probabilities

Objective: To derive constant transition probabilities for a simplified model that accurately reflect observed disease natural history.

Materials: Published survival curves (e.g., Kaplan-Meier), calibration software (e.g., R's heemod or BUGS).

Procedure:

- Identify Time-Varying Data: Obtain overall survival or progression-free survival data from relevant clinical trials.

- Define Complex Model: Create a model with time-varying hazards (e.g., Weibull) and calibrate it to the data.

- Define Simplified Model: Create a model with a reduced number of states and constant transition probabilities.

- Calibration Target: Use the complex model's predicted survival proportion at years 1, 3, and 5 as targets.

- Optimization: Use a goodness-of-fit statistic (e.g., sum of squared errors) to find the constant probabilities that best fit the targets.

- Validation: Visually compare the survival curve generated by the simplified model against the original data.
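
The optimization step can be sketched as a grid search over a single constant annual probability of death in a two-state (alive/dead) model, minimizing the sum of squared errors against survival targets. The two-state structure, the function names, and the grid resolution are assumptions made for illustration; a simplified model with more states has more free parameters and will generally fit the targets more closely than this one-parameter version.

```python
def survival(p, years):
    """Survival at a given year under a constant annual death probability p."""
    return (1.0 - p) ** years


def sse(p, targets):
    """Sum of squared errors between model survival and calibration targets."""
    return sum((survival(p, year) - target) ** 2 for year, target in targets.items())


def calibrate(targets, steps=10_000):
    """Grid search for the constant probability that minimizes the SSE."""
    candidates = (i / steps for i in range(1, steps))
    return min(candidates, key=lambda p: sse(p, targets))


# Calibration targets: survival proportions at years 1, 3, and 5.
targets = {1: 0.85, 3: 0.50, 5: 0.20}
p_hat = calibrate(targets)
print(f"calibrated annual probability = {p_hat:.4f}, SSE = {sse(p_hat, targets):.5f}")
for year, target in targets.items():
    print(f"year {year}: target {target:.2f}, fitted {survival(p_hat, year):.3f}")
```

A grid search is used here only because it is transparent; a numerical optimizer or Bayesian calibration would be the more usual choice when several probabilities are estimated jointly.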

Table 1: Impact of Simplification Strategies on Model Performance

| Simplification Strategy | States Reduced (%) | Runtime Saved (%) | Mean ICER Difference (%) | PSA Conclusion Discordance (%) |
|---|---|---|---|---|
| State Aggregation | 40% | 35% | 1.8% | 0.7% |
| Constant Probabilities | 0% | 60% | 3.2% | 1.5% |
| Tunnel State Removal | 60% | 75% | 4.1% | 2.1% |
| Cycle Length Increase | 0% | 90% | 2.5% | 1.2% |

Note: Hypothetical data from a simulated case study on lung cancer imaging pathways.

Table 2: Calibration Results for Simplified Transition Probabilities

| Time Point (Year) | Target Survival (Complex Model) | Simplified Model Survival | Absolute Error |
|---|---|---|---|
| 1 | 0.85 | 0.84 | 0.01 |
| 3 | 0.50 | 0.48 | 0.02 |
| 5 | 0.20 | 0.21 | 0.01 |

Visualizations

Simplification and Validation Workflow

State Aggregation in Markov Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Model Simplification Research

| Item | Function/Benefit |
|---|---|
| TreeAge Pro Healthcare | Software for building, simplifying, and validating Markov models with integrated PSA. |
| R Statistical Language | Open-source platform for custom model development, calibration, and advanced analysis. |
| R heemod & dampack Packages | Specific packages for implementing, comparing, and analyzing health economic models. |
| Probabilistic Sensitivity Analysis (PSA) Dataset | A correlated set of input parameters (means, distributions) reflecting joint uncertainty. |
| Clinical Trial Survival Data | Kaplan-Meier curves or published hazard ratios for calibrating transition probabilities. |
| Goodness-of-Fit Metrics | Statistics (e.g., SSE, MAE) to quantify the fit of a simplified model to calibration targets. |
| Visualization Software (Graphviz) | For creating clear diagrams of model structures and workflows to communicate changes. |

Application Notes and Protocols

Within a Markov model for cost-effectiveness analysis (CEA) of imaging pathways in medical research, uncertainty is pervasive. This protocol details systematic approaches to characterize and quantify this uncertainty, ensuring robust decision-making for researchers and health technology assessors.

I. Data and Parameter Uncertainty Analysis

Table 1: Key Sources of Uncertainty in Markov Imaging Models

| Source Category | Example in Imaging Pathways | Typical Handling Method |
|---|---|---|
| Parameter Uncertainty | Transition probabilities from diagnostic accuracy (sensitivity/specificity), cost of imaging modalities, utility weights for health states. | Probabilistic Sensitivity Analysis (PSA). |
| Structural Uncertainty | Choice of model type (cohort vs. individual), cycle length, inclusion of "scanxiety" health state, tunnel states for progressive disease. | Scenario Analysis. |
| Heterogeneity | Variation in patient demographics (age, risk factors) impacting test performance or disease progression. | Subgroup Analysis. |

Table 2: Summary of Recommended Quantitative Analysis Techniques

| Technique | Primary Use | Output Metric | Key Implementation Detail |
|---|---|---|---|
| One-Way Deterministic SA | Identify influential parameters. | Tornado Diagram. | Vary each parameter ±20% or within its plausible range while holding the others constant. |
| Probabilistic SA (PSA) | Quantify overall decision uncertainty. | Cost-Effectiveness Acceptability Curve (CEAC), confidence ellipse on the cost-effectiveness plane. | Assign distributions (e.g., Beta for probabilities, Gamma for costs) and run 10,000 Monte Carlo simulations. |
| Scenario Analysis | Test structural assumptions or extreme cases. | Incremental Cost-Effectiveness Ratio (ICER) comparison. | Compare base case to clinically plausible alternatives (e.g., different imaging sequences). |
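
A one-way deterministic sensitivity analysis of the kind summarized in the table can be sketched as follows. The toy decision model, its parameter names, the base-case values, and the willingness-to-pay threshold are all hypothetical; a real analysis would call the full Markov model in place of `incremental_nmb`.

```python
def incremental_nmb(params, wtp=30_000):
    """Toy decision model: incremental NMB of a new imaging pathway (hypothetical)."""
    extra_detected = params["sensitivity_gain"] * params["prevalence"]
    inc_qalys = extra_detected * params["qaly_gain_per_detection"]
    inc_cost = params["extra_scan_cost"]
    return wtp * inc_qalys - inc_cost


def one_way_sa(model, base, rel_change=0.20):
    """Vary each parameter by +/- rel_change, holding the others at base case.

    Returns rows of (parameter, output at low value, output at high value,
    width), sorted widest first, which is the order used in a tornado diagram.
    """
    rows = []
    for name, value in base.items():
        low = model({**base, name: value * (1 - rel_change)})
        high = model({**base, name: value * (1 + rel_change)})
        rows.append((name, low, high, abs(high - low)))
    return sorted(rows, key=lambda row: row[3], reverse=True)


base_case = {
    "sensitivity_gain": 0.10,        # hypothetical gain in sensitivity
    "prevalence": 0.05,              # hypothetical disease prevalence
    "qaly_gain_per_detection": 2.0,  # hypothetical QALYs per extra case found
    "extra_scan_cost": 200.0,        # hypothetical added cost per patient
}
for name, low, high, width in one_way_sa(incremental_nmb, base_case):
    print(f"{name:26s} low={low:8.1f} high={high:8.1f} width={width:7.1f}")
```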

II. Experimental Protocols

Protocol 1: Probabilistic Sensitivity Analysis (PSA) for an Imaging Pathway CEA

- Objective: To propagate uncertainty from all input parameters into the model's output (Net Monetary Benefit - NMB) and generate a CEAC.

- Materials (The Scientist's Toolkit):

- Model Software: R (with heemod, dampack), TreeAge Pro, Microsoft Excel with VBA.

- Distributional Assumptions:

- Beta Distribution: For transition probabilities and test accuracy parameters (bounded between 0 and 1). Function: Ensures sampled values are valid probabilities.

- Gamma Distribution: For cost parameters (positive, right-skewed). Function: Appropriately models non-negative cost data.

- Log-Normal Distribution: For relative risk parameters and some utility weights. Function: Handles ratios and multiplicative effects.

- Statistical Software: R or Python for post-simulation analysis and plotting.

- Methodology:

- Step 1 – Parameter Definition: For each uncertain parameter in Table 1, define a probability distribution based on its mean and standard error (e.g., from meta-analysis or primary data).

- Step 2 – Monte Carlo Simulation: For i = 1 to n (where n ≥ 10,000): a. Randomly sample one value from the defined distribution for each parameter. b. Run the Markov model with this set of sampled values. c. Record the resulting costs, effects, and NMB for each strategy (ICERs are best computed from the mean costs and effects rather than averaged across iterations).

- Step 3 – Output Analysis: Plot the CEAC, showing the probability each imaging pathway is cost-effective across a range of willingness-to-pay thresholds. Calculate the cost-effectiveness acceptability frontier.

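- Step 4 – Value of Information: Use the PSA results to estimate the Expected Value of Perfect Information (EVPI), which gives an upper bound on the value of further research overall. A parameter-level extension, the Expected Value of Partial Perfect Information (EVPPI), identifies the parameters where further research is most valuable.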
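
Steps 1 to 4 can be sketched end to end with the Python standard library. This is an illustration under stated assumptions rather than a definitive implementation: the decision model is a toy two-strategy comparison, the means and standard errors are hypothetical, distributions are parameterized by the method of moments, and the comparator's NMB is fixed at zero so that only incremental quantities are tracked.

```python
import random


def beta_params(mean, se):
    """Method-of-moments Beta(alpha, beta) from a mean and standard error."""
    common = mean * (1 - mean) / se ** 2 - 1
    return mean * common, (1 - mean) * common


def gamma_params(mean, se):
    """Method-of-moments Gamma(shape, scale) from a mean and standard error."""
    return (mean / se) ** 2, se ** 2 / mean


def run_psa(n_iter=10_000, seed=42):
    """Return per-iteration (incremental cost, incremental QALYs) draws."""
    rng = random.Random(seed)
    a, b = beta_params(0.10, 0.02)           # hypothetical sensitivity gain
    shape, scale = gamma_params(200.0, 40.0)  # hypothetical extra scan cost
    draws = []
    for _ in range(n_iter):
        sens_gain = rng.betavariate(a, b)
        extra_cost = rng.gammavariate(shape, scale)
        inc_qalys = sens_gain * 0.05 * 2.0    # fixed prevalence and QALY gain
        draws.append((extra_cost, inc_qalys))
    return draws


def ceac(draws, thresholds):
    """Probability the new pathway is cost-effective at each WTP threshold."""
    return {wtp: sum(wtp * q - c > 0 for c, q in draws) / len(draws)
            for wtp in thresholds}


def evpi(draws, wtp):
    """Per-patient EVPI for a two-strategy decision (comparator NMB = 0)."""
    inc_nmb = [wtp * q - c for c, q in draws]
    with_perfect_info = sum(max(0.0, x) for x in inc_nmb) / len(inc_nmb)
    with_current_info = max(0.0, sum(inc_nmb) / len(inc_nmb))
    return with_perfect_info - with_current_info


draws = run_psa()
for wtp, prob in ceac(draws, [10_000, 20_000, 30_000, 50_000]).items():
    print(f"WTP {wtp:6d}: P(cost-effective) = {prob:.3f}")
print(f"EVPI at WTP 20,000 = {evpi(draws, 20_000):.2f} per patient")
```

EVPI is largest near the threshold where the decision is most uncertain and shrinks as the CEAC approaches 0 or 1.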

Protocol 2: Scenario Analysis for Structural Uncertainty