Beyond the Scatter: How Advanced AI Algorithms Are Revolutionizing Deep Tissue Image Reconstruction

This article provides a comprehensive overview of AI-driven algorithms for deep tissue image reconstruction, targeting researchers and biomedical professionals.

Beyond the Scatter: How Advanced AI Algorithms Are Revolutionizing Deep Tissue Image Reconstruction

Abstract

This article provides a comprehensive overview of AI-driven algorithms for deep tissue image reconstruction, targeting researchers and biomedical professionals. It explores the foundational principles of light scattering and signal degradation in deep tissue imaging. The piece details cutting-edge methodological approaches, including physics-informed neural networks and learned iterative reconstruction. It addresses critical challenges such as noise, data scarcity, and model generalization, while providing comparative analysis of leading algorithms. Finally, it discusses validation frameworks, benchmarking standards, and the translational pathway from lab to clinic for drug development and disease research.

Understanding the Deep Tissue Challenge: Physics, Noise, and the Need for AI

Technical Support Center: Troubleshooting & FAQs

FAQ Section: Core Phenomena

Q1: During in vivo fluorescence imaging in mice, my target signal becomes undetectable beyond 1 mm depth. What is the primary cause? A: The most likely cause is overwhelming scattering and absorption by tissue. Scattering events, primarily from cellular organelles and extracellular matrix, deflect photons, blurring the image. Absorption by chromophores like hemoglobin (peak ~540-580 nm) and melanin reduces signal intensity exponentially with depth. We recommend switching to a longer excitation wavelength (e.g., NIR-II: 1000-1700 nm) where absorption and scattering coefficients are significantly lower.

Q2: My reconstructed image from a diffuse optical tomography (DOT) system appears blurred and lacks high-resolution features. Is this a software or hardware issue? A: This is an expected fundamental challenge. The inverse problem in DOT is inherently ill-posed and ill-conditioned due to the high scattering of light. Your AI reconstruction algorithm is likely struggling to map the measured diffuse light patterns back to a precise absorption/scattering map. Ensure your training data (numerical phantoms or ex vivo measurements) accurately models the scattering (µs') and absorption (µa) parameters of your target tissue.

Troubleshooting Guide: Common Experimental Issues

Issue: Poor Signal-to-Noise Ratio (SNR) in Deep-Tissue Photoacoustic Imaging.

- Symptoms: Weak or noisy photoacoustic signals, inability to distinguish target from background.

- Potential Causes & Solutions:

- Cause: Insufficient pulse energy due to absorption by superficial tissue layers. Solution: Optimize laser wavelength to the target's absorption peak while considering the "optical window" (650-1350 nm). Validate with Table 1.

- Cause: Acoustic attenuation and distortion of the generated ultrasound wave. Solution: Implement model-based or AI-driven reconstruction algorithms that incorporate known acoustic attenuation profiles of layered tissue.

Issue: Inconsistent Results in Measuring Tissue Optical Properties.

- Symptoms: High variance in calculated µs' and µa between similar tissue samples.

- Protocol: Integrating Sphere Measurement for Ex Vivo Tissue Samples

- Sample Preparation: Slice tissue to a uniform, known thickness (e.g., 1-2 mm) using a vibratome. Keep hydrated in phosphate-buffered saline.

- Measurement: Use a dual-port integrating sphere coupled to a spectrophotometer. Perform two sequential measurements:

- Collimated Transmittance (Tc): Directly measure light passing through the sample without scattering.

- Total Transmittance (Tt) & Diffuse Reflectance (Rd): Place sample against the sphere's entry port for Tt, and against the sample port for Rd.

- Calculation: Input Tc, Tt, and Rd into an inverse adding-doubling (IAD) algorithm to compute µa and µs'.

Table 1: Optical Properties of Common Tissue Components (Approximate Values at Key Wavelengths)

| Component | Wavelength (nm) | Absorption Coefficient µa (cm⁻¹) | Reduced Scattering Coefficient µs' (cm⁻¹) | Notes |

|---|---|---|---|---|

| Hemoglobin (Oxy) | 570 | ~200 | N/A | Primary absorber in visible green. |

| Hemoglobin (Oxy) | 650 | ~5 | N/A | Absorption drops significantly in red. |

| Hemoglobin (Deoxy) | 760 | ~25 | N/A | Peak for deoxygenated blood. |

| Water | 980 | ~0.5 | N/A | Significant absorption peak in NIR. |

| Lipid | 930 | ~1.0 | N/A | Absorption peak. |

| Typical Soft Tissue | 650 | 0.1 - 0.5 | 10 - 20 | High scattering dominates. |

| Typical Soft Tissue | 850 | 0.02 - 0.1 | 8 - 15 | "Optical Window" region. |

| Bone (Skull) | 850 | 0.3 - 0.5 | 20 - 40 | High scattering impedes light penetration. |

Table 2: Performance of AI Reconstruction Algorithms Against Physical Models

| Algorithm Type | Typical Improvement in Localization Error vs. Linear Backprojection | Computational Cost | Key Limitation Addressed |

|---|---|---|---|

| U-Net (CNN) | 40-60% | Medium | Learns spatial features from blurred input. |

| Generative Adversarial Network (GAN) | 50-70% | High | Generates more physically plausible images. |

| Transformer-based Model | 55-75% | Very High | Better long-range context for diffuse signals. |

| Physics-Informed Neural Network (PINN) | 30-50% | Medium | Directly incorporates the Radiative Transfer Equation. |

Experimental Protocol: Validating an AI Reconstruction Algorithm

Title: Protocol for Benchmarking an AI Image Reconstruction Pipeline for Diffuse Optical Tomography

Objective: To quantitatively assess the performance of a trained neural network against traditional methods using digital and physical phantoms.

Materials: See "The Scientist's Toolkit" below. Methodology:

- Digital Phantom Generation: Use MCX or equivalent to simulate photon migration in a 3D digital phantom containing inclusions with varying µa and µs'. Generate 5000 pairs of ground-truth maps and corresponding boundary flux data.

- AI Model Training: Split data 70/15/15 (train/validation/test). Train a U-Net model to map boundary data to absorption maps. Use a combined loss: Mean Squared Error + Structural Similarity Index.

- Physical Phantom Validation: Fabricate a silicone phantom with embedded black polyethylene cylinders as absorbing targets. Collect experimental DOT data using the system in Fig. 1.

- Reconstruction & Analysis: Reconstruct images using (a) your AI model and (b) a standard Tikhonov regularization method. Calculate metrics: Peak Signal-to-Noise Ratio (PSNR), Structural Similarity Index (SSIM), and target centroid localization error (in mm).

Visualizations

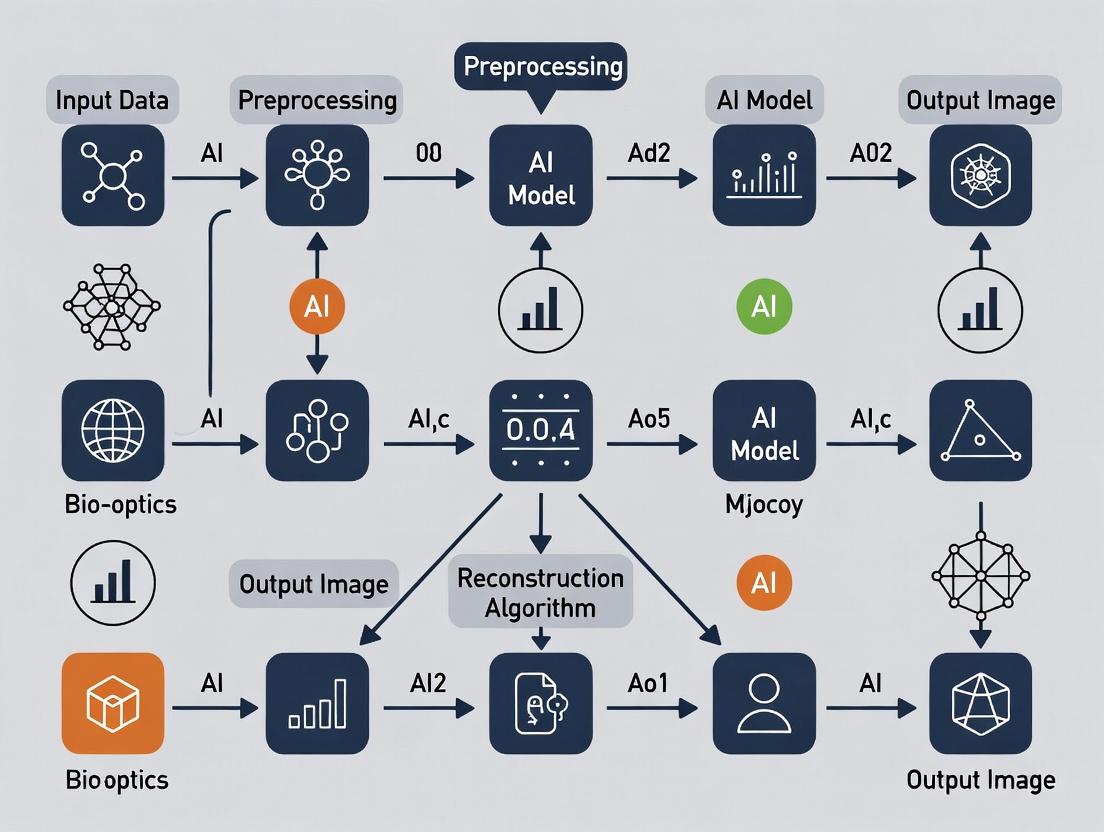

Diagram 1: AI-Enhanced DOT Workflow

Diagram 2: Light-Tissue Interaction Pathways

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Context | Example/Note |

|---|---|---|

| NIR-II Fluorophores | Emit fluorescence in the 1000-1700 nm range where tissue scattering and autofluorescence are minimal, enabling deeper imaging. | Organic dyes (e.g., CH1055), Quantum Dots. |

| Tissue-Mimicking Phantoms | Provide standardized, reproducible samples with known optical properties (µa, µs', g) to calibrate systems and train AI algorithms. | Silicone-based with India ink (absorber) and TiO2 (scatterer). |

| Inverse Adding-Doubling (IAD) Software | Calculates intrinsic optical properties from measured reflectance and transmittance of thin tissue samples. | Critical for generating ground-truth training data. |

| Monte Carlo Simulation Software | Numerically models photon transport in turbid media to generate synthetic datasets for AI training. | MCX (Monte Carlo eXtreme) is widely used. |

| AI Framework | Provides libraries to build, train, and deploy neural network models for image reconstruction. | TensorFlow, PyTorch. |

| Diffuse Optical Tomography System | Hardware platform for performing measurements of boundary light flux after propagation through tissue. | Includes source fibers, detector fibers, spectrometers. |

Troubleshooting Guides & FAQs

This technical support center addresses common challenges in deep tissue imaging research, framed within the context of advancing AI algorithms for image reconstruction.

FAQ: Resolving Common Imaging Artifacts

Q1: In our preclinical MRI, why do we consistently lose signal-to-noise ratio (SNR) beyond a 4cm depth when imaging a murine model, even with optimal coil tuning? A: This is a fundamental physical limitation. SNR decay in MRI is exponential with depth due to radiofrequency (RF) coil sensitivity profiles and tissue attenuation. At 9.4T, signal can drop by over 60% beyond 4cm in heterogeneous tissue. This is a key problem that AI reconstruction aims to solve by extracting signal from noisy deep-tissue data.

Q2: Our dynamic contrast-enhanced CT (DCE-CT) shows poor temporal resolution when tracking a novel nanoparticle in deep liver tissue. What is the bottleneck? A: The bottleneck is the inherent trade-off between radiation dose, spatial resolution, and temporal resolution. To achieve sufficient photon flux for deep tissue penetration at high frame rates (>1 fps), radiation dose becomes prohibitively high for longitudinal studies. See the quantitative comparison table below.

Q3: Why does ultrasound elastography fail to provide reproducible stiffness measurements for lesions deeper than 8cm in human liver? A: Ultrasound beam distortion and attenuation in overlying tissue layers cause significant inaccuracies in shear wave propagation timing and path estimation at depth. Frequencies needed for high resolution (>5MHz) are severely attenuated, forcing the use of lower frequencies that reduce spatial resolution dramatically.

Quantitative Limitations of Conventional Modalities

Table 1: Key Performance Limitations in Deep Tissue ( >5cm depth)

| Modality | Fundamental Limiting Factor | Max Practical Resolution at 8cm Depth | Primary Artifact at Depth | Typical SNR Loss (vs. surface) |

|---|---|---|---|---|

| MRI | RF Penetration & Coil Sensitivity | 0.5 - 1.0 mm isotropic | Phase distortion, blurring | 70-90% |

| CT | Photon Starvation & Beam Hardening | 0.25 - 0.5 mm axial | Noise, streak artifacts | 80-95% (contrast-to-noise) |

| Ultrasound | Acoustic Attenuation & Scatter | 1.0 - 2.0 mm lateral | Speckle noise, shadowing | 60-80% |

Table 2: AI-Reconstruction Targets for Conventional Imaging Limitations

| Imaging Wall | AI Algorithm Solution | Example Technique |

|---|---|---|

| Low SNR at Depth | Deep Learning Denoising | Noise2Noise-based reconstruction from sub-sampled MRI k-space |

| Poor Temporal Resolution | Learned Compressed Sensing | AI models predicting contrast dynamics from sparse DCE-CT frames |

| Beam Hardening (CT) | Physics-Informed NN | U-Nets trained to correct polyenergetic spectral artifacts |

| Acoustic Scatter (US) | Model-Based Reconstruction | Deep convolutional models inferring true scatter-free signal |

Experimental Protocol: Validating AI-Enhanced MRI for Deep Tissue SNR

Objective: To compare the performance of a deep learning (U-Net) reconstruction algorithm against conventional Fourier transform reconstruction in recovering deep tissue signal from sub-sampled k-space data.

Protocol:

- Animal Model: Nude mouse with orthotopic xenograft (e.g., pancreatic tumor).

- Imaging Setup:

- Scanner: 7T preclinical MRI.

- Coil: Use a volume transceiver coil. Position animal so target tissue is >4cm from coil surface.

- Sequence: T2-weighted TurboRARE. Acquire a fully-sampled k-space dataset (reference).

- Data Degradation: Artificially sub-sample the acquired k-space by 75% (accelerated acquisition simulation) using a variable-density random mask, prioritizing central k-space.

- Reconstruction Paths:

- Conventional: Apply Inverse Fourier Transform to zero-filled, sub-sampled k-space.

- AI-Based: Input the sub-sampled k-space into a pre-trained U-Net model. The model is trained on paired datasets (sub-sampled vs. fully-sampled) from superficial tissues.

- Validation: Calculate Structural Similarity Index (SSIM) and Peak Signal-to-Noise Ratio (PSNR) within a deep-tissue Region of Interest (ROI) between each reconstructed image and the gold-standard fully-sampled reconstruction.

AI-Driven Image Reconstruction Workflow

Title: AI vs. Conventional Image Reconstruction Pathway

The Scientist's Toolkit: Key Reagents & Materials for AI-Imaging Validation

Table 3: Essential Research Reagent Solutions for Cross-Validation

| Item | Function in Experiment | Example/Specification |

|---|---|---|

| Tissue-Mimicking Phantoms | Provide ground-truth geometry & properties for algorithm training/validation. | Multi-layer phantom with embedded targets at known depths (e.g., Gammex 467). |

| Contrast Agents | Enhance signal for tracking dynamic processes in deep tissue. | Gd-based (MRI), Iodinated (CT), Microbubbles (US). Enables DCE studies. |

| Immortalized Cell Lines | Create reproducible, imaging-visible deep tissue models (e.g., tumors). | Luciferase-tagged U87-MG cells for bioluminescence correlation. |

| AI Training Datasets | Paired image sets for supervised learning. | Public databases like "fastMRI" (NYU) or "Low Dose CT Grand Challenge" (Mayo Clinic). |

| High-Performance Compute (HPC) Unit | Enables training of large neural networks on 3D image data. | GPU clusters (e.g., NVIDIA V100/A100) with >32GB VRAM per node. |

Troubleshooting Guides & FAQs

FAQ: Common Issues in AI-Enhanced Tomographic Reconstruction

Q1: During 3D Fluorescence Molecular Tomography (FMT) reconstruction, my AI model outputs a "hallucinated" structure not present in the original photon count data. What is the likely cause and how can I fix it?

A: This is typically caused by overfitting to the training data distribution or an insufficiently constrained inverse problem. The AI model has learned a prior that is too strong.

- Immediate Troubleshooting Steps:

- Verify Input Data Fidelity: Ensure the raw photon count data (time-domain or continuous-wave) is correctly normalized and that the forward model (Light Transport Model) used to generate training data matches your experimental setup (e.g., source-detector geometry, tissue optical property assumptions).

- Apply Data Augmentation: If training data is limited, augment your synthetic dataset with realistic noise (Poisson, Gaussian), varying scatter levels, and minor geometric perturbations.

- Introduce a Data Consistency Layer: Integrate a physics-based layer (e.g., a differentiable forward projector) within the neural network architecture. This forces the output to be consistent with the actual measured signals through a loss term like:

L_total = L_perceptual + λ * ||A*x_pred - y||^2, whereAis the forward operator. - Switch to a Hybrid Model: Use the AI output (e.g., from a U-Net) as an intelligent prior initialization for a subsequent, iterative reconstruction algorithm (e.g., Tikhonov regularization, Total Variation minimization). This combines learned priors with explicit physical constraints.

Q2: When implementing Model-Based Iterative Reconstruction (MBIR) for micro-CT in deep tissue samples, the reconstruction is prohibitively slow. What are the key bottlenecks and optimization strategies?

A: The primary bottlenecks are the repeated calculations of the forward projection and backprojection operations within the iterative loop.

- Optimization Protocol:

- Algorithm Selection: Use ordered-subsets or stochastic gradient methods to accelerate convergence.

- Hardware Acceleration:

- GPU Parallelization: Implement the system matrix (

A) operations (e.g., Siddon's ray-tracer) in CUDA or using a framework like ASTRA Toolbox or PyTorch. - Memory Management: For large volumes, use a footprint-based or separable footprint forward/back projector to reduce memory I/O.

- GPU Parallelization: Implement the system matrix (

- Code Profiling: Profile your code to confirm time is spent on projection operations. A sample workflow is below.

Q3: My diffusive optical tomography (DOT) reconstruction shows severe artifacts at the boundaries of the region of interest. How can I mitigate this?

A: Boundary artifacts often arise from incorrect segmentation between tissue types or inaccurate assumption of background optical properties.

- Mitigation Methodology:

- Multi-Modal Co-Registration: Use a high-resolution anatomical scan (e.g., MRI, micro-CT) to precisely define tissue boundaries. Implement a mutual information algorithm for automatic co-registration.

- Spatially-Varying Prior: Incorporate the anatomical segmentation into the reconstruction inverse problem as a Laplacian or Tikhonov prior that penalizes solutions that cross known boundaries.

- Calibration Measurement: Perform a baseline measurement on a homogeneous phantom or a control tissue region to estimate background absorption (μa) and scattering (μs') coefficients more accurately before inverting for the contrast.

Experimental Protocol: Key Methodology for Benchmarking AI vs. Traditional Reconstruction

Title: Protocol for Quantitative Comparison of Reconstruction Algorithms in Simulated Deep Tissue FMT.

Objective: To quantitatively compare the performance of a DL-based reconstruction (e.g., a Learned Primal-Dual network) against a traditional MBIR method (e.g., SPIRAL with Total Variation regularization) under controlled, realistic conditions.

Steps:

- Digital Phantom Generation: Use the

Digimouseatlas. Assign realistic fluorophore concentrations to 2-3 organ regions (e.g., liver, kidneys). Set background optical properties (μa=0.01 mm⁻¹, μs'=1.0 mm⁻¹). - Forward Modeling: Use the Finite Element Method (NIRFAST) or Monte Carlo (MCX) to generate simulated photon fluence measurements (

y_sim) at N detector points for M source positions. Add 1% Gaussian noise and Poisson noise. - Algorithm Training/Configuration:

- AI Model: Train the LPD network on 10,000 paired samples {

y_sim,x_truth}. Validate on 1,000 separate samples. Use Adam optimizer, L1 loss. - MBIR Model: Configure the SPIRAL algorithm with TV weight

λ=0.01and positivity constraint.

- AI Model: Train the LPD network on 10,000 paired samples {

- Reconstruction & Evaluation: Reconstruct the same 100 unseen test phantoms with both algorithms.

- Quantitative Analysis: Calculate the following metrics per reconstruction (

x_rec):- Structural Similarity Index (SSIM)

- Peak Signal-to-Noise Ratio (PSNR)

- Localization Error (LE): Distance between centroids of true and recovered fluorophore regions.

- Contrast Recovery Coefficient (CRC):

(C_rec / C_background) / (C_true / C_background).

Table 1: Summary of Quantitative Benchmarking Results (Simulated Data)

| Metric | AI (LPD Network) | Traditional (MBIR-TV) | Units/Notes |

|---|---|---|---|

| Avg. SSIM | 0.92 ± 0.03 | 0.85 ± 0.05 | Higher is better (Max 1) |

| Avg. PSNR | 38.5 ± 1.8 | 32.1 ± 2.4 | dB, Higher is better |

| Avg. Localization Error | 0.21 ± 0.15 | 0.45 ± 0.30 | mm, Lower is better |

| Avg. CRC | 0.95 ± 0.10 | 0.78 ± 0.18 | Target=1, Higher is better |

| Avg. Runtime | ~0.5 | ~45 | seconds per reconstruction |

Visualization: AI-Enhanced Reconstruction Workflow

Title: AI Hybrid Reconstruction Pipeline

Visualization: Multi-Modal Registration for DOT

Title: Anatomical Prior Integration in DOT

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for In Vivo Deep Tissue Imaging Validation

| Reagent / Material | Function in Experiment | Key Consideration |

|---|---|---|

| IRDye 800CW PEG | Near-infrared fluorescent tracer for FMT. Provides high signal-to-background in the NIR-II window for deep penetration. | Must be conjugated to targeting ligand (e.g., antibody) for specific molecular imaging. |

| Liposomal Indocyanine Green (ICG) | Long-circulating contrast agent for vascular and perfusion imaging via DOT/FMT. | Liposomal encapsulation increases circulation half-life for kinetic studies. |

| Matrigel | Basement membrane matrix for subcutaneous tumor xenograft implantation in rodents. | Provides a scaffold for consistent tumor growth and localized fluorophore expression. |

| Gadolinium-based MRI Contrast (e.g., Dotarem) | T1-shortening agent for co-registered anatomical MRI scans. | Essential for validating AI-reconstructed fluorescence foci against anatomical ground truth. |

| Tissue-Mimicking Phantom Kit (e.g., Intralipid, India Ink) | Calibration standard for optical tomography systems. Used to validate forward models. | Allows precise tuning of scattering (μs') and absorption (μa) coefficients. |

| Isoflurane (with O₂) | Inhalation anesthetic for in vivo rodent imaging sessions. | Stable anesthesia is critical for motionless scans over 10-30 minutes. |

Technical Support Center: Troubleshooting Guide for AI-Enhanced Image Metrics

This support center addresses common issues encountered when using AI-based image reconstruction algorithms to define and optimize resolution, contrast, and penetration depth in deep tissue imaging.

FAQs & Troubleshooting

Q1: Our AI-reconstructed images show high resolution in superficial layers but significant blurring beyond 800 µm depth. What parameters should we adjust?

A: This is a classic issue of signal-to-noise ratio (SNR) decay with depth. First, verify your point spread function (PSF) estimation at depth. The AI model requires an accurate depth-dependent PSF for deconvolution.

- Actionable Protocol: Perform a calibration experiment using sub-resolution fluorescent beads (e.g., 100 nm diameter). Image beads at 100 µm depth intervals up to 1.5 mm. Use this data to generate a PSF matrix for AI training. Ensure your training dataset includes this depth-variant information.

- Algorithm Check: If using a learned iterative reconstruction network, increase the weight of the photon scattering model in the loss function. Common penalty terms should increase by a factor of 2-3 for depths >500µm.

Q2: After implementing a new AI denoising algorithm, quantitative contrast values are improved, but we suspect artificial "hallucinations" of minor structures. How can we validate true contrast improvement?

A: AI can sometimes enhance noise patterns as false features. You must separate true contrast from artifact.

- Validation Protocol: Conduct a "missing data" test. Acquire a ground-truth image of a well-defined structure (e.g., hollow tube) at a depth where contrast is measurable. Artificially degrade this image with known noise. After AI processing, compare the line profile of the reconstructed structure edge to the ground truth using a Fourier Ring Correlation (FRC). True contrast improvement will show a >15% increase in FRC value at the spatial frequency corresponding to your feature size.

- Core Metric Table:

| Metric | Calculation | Target for Validation | |

|---|---|---|---|

| Fourier Ring Correlation (FRC) | Cross-correlation of Fourier transforms of two image halves | >0.143 at feature's spatial frequency | |

| Signal-to-Noise Ratio (SNR) | (Mean Signal - Mean Background) / Std Dev Background | Increase by factor >2 post-AI | |

| Contrast-to-Noise Ratio (CNR) | (Mean Feat. - Mean Bkgd) / √(σ²Feat + σ²Bkgd) | Increase by >1.5x without spatial smoothing |

Q3: Our penetration depth, defined as the depth where SNR drops to 2, has plateaued. Can AI algorithms physically increase penetration, or do they only recover signal computationally?

A: AI does not increase physical photon penetration but recovers usable signal from otherwise noisy data. A plateau suggests your input data lacks sufficient signal for the AI to learn from.

- Troubleshooting Steps:

- Pre-AI Acquisition Optimization:

- Increase excitation laser power (considering sample damage).

- Adjust detection spectral window to maximize collection of scattered photons.

- Verify detector (e.g., PMT, camera) quantum efficiency at your emission wavelength.

- AI Training Data Enhancement: Retrain your network using data from experiments that physically maximize penetration (e.g., using longer wavelength probes, clearing agents). The AI will learn to apply these principles to standard data.

- Redefine Metric: Use an AI-specific metric like "Useful Penetration Depth (UPD-AI)"—the depth where the AI-reconstructed image achieves an FRC of 0.2 relative to a shallow reference.

- Pre-AI Acquisition Optimization:

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Deep Tissue Imaging | Example Product/Chemical |

|---|---|---|

| Fiducial Beads | Provide stable, known points for PSF measurement and image registration across depths. | TetraSpeck microspheres (100nm), Fluorescent Ruby beads |

| Tissue Clearing Agent | Reduces light scattering, physically improving penetration depth for ground-truth data. | CUBIC, ScaleS, or CLARITY-based solutions |

| Long-Wavelength Fluorophore | Minimizes photon scattering and absorption; provides better signal for AI input. | Alexa Fluor 750, DyLight 800, CF 1061 |

| Anti-fading Mounting Medium | Preserves fluorescence signal during long, deep-scan acquisitions. | ProLong Diamond, VECTASHIELD Antifade |

| Embedding Matrix | Provides stable, scatter-controlled environment for calibration samples. | Low-melt agarose (1-2%), Matrigel for in vivo mimics |

Experimental Protocols

Protocol 1: Calibrating Depth-Dependent Resolution for AI Training

Objective: To generate a dataset for training an AI model on depth-variant blur. Materials: Tissue-mimicking phantom (e.g., 1% agarose with 1 µm polystyrene beads), confocal/multiphoton microscope. Method:

- Embed beads uniformly in the phantom.

- Acquire z-stack images with a step size of 1 µm from surface to 1500 µm depth.

- At each 100 µm interval, isolate a single bead image. Fit its intensity profile with a 3D Gaussian function.

- The full width at half maximum (FWHM) in x, y, and z at each depth is your empirical resolution.

- Format data as a table: [Depth (µm), XFWHM (µm), YFWHM (µm), Z_FWHM (µm)] for AI model input.

Protocol 2: Validating AI-Contrast Enhancement Against Photobleaching

Objective: To distinguish true contrast recovery from noise amplification. Materials: Labeled, structured sample (e.g., actin network), microscope. Method:

- Acquire image Stack A at target depth with standard settings.

- Photobleach a small, defined region (e.g., 5x5 pixel box) using high-power laser.

- Acquire image Stack B post-bleach.

- Process both stacks (A and B) with your AI algorithm.

- Analysis: The bleached region in the AI-output of Stack B should show uniformly low intensity. If the AI "invents" structure within the bleached zone, it indicates hallucination. Quantify by comparing the standard deviation inside the bleached region pre- and post-AI; it should not increase.

Visualization: AI-Enhanced Deep Tissue Imaging Workflow

Title: AI Image Reconstruction & Metric Extraction Workflow

Visualization: Factors Affecting Core Deep Tissue Metrics

Title: Physical Factors Influencing Key Deep Tissue Metrics

Technical Support Center

Welcome to the technical support center for deep tissue imaging systems utilizing AI-powered image reconstruction. This resource addresses common challenges encountered when imaging deep biological targets.

Troubleshooting Guides & FAQs

Q1: After applying the AI deconvolution algorithm, my reconstructed 3D neuron morphology appears fragmented or "spotty." What could be the cause? A: This is often a mismatch between the point spread function (PSF) model and the actual imaging conditions. First, verify that the PSF used for training the AI model was generated at the correct imaging depth and wavelength. For in vivo two-photon imaging beyond 500 µm, ensure you are using a measured or calculated PSF that accounts for spherical aberration. Re-acquire a 3D PSF using 100-nm fluorescent beads embedded in a phantom at your target depth. Retrain the network with this corrected PSF.

Q2: When imaging tumor vasculature, the AI-enhanced images show unrealistic vessel dilation and loss of fine capillary detail. How can I correct this? A: This indicates potential over-regularization in the reconstruction network, often due to insufficient training data diversity. The AI is likely biasing towards larger, more common features.

- Solution: Augment your training dataset with high-resolution ex vivo confocal/micro-CT images of the same tumor type, co-registered with your in vivo data. Introduce noise variations and simulate more partial volume effects. Fine-tune the pre-trained network on this augmented set, using a smaller learning rate (e.g., 1e-5). Monitor the loss function for both perceptual loss and a vessel continuity metric.

Q3: My signal-to-noise ratio (SNR) in deep tissue (>1mm) is too low for the AI model to provide a reliable reconstruction. What are my options before imaging? A: AI requires a minimum SNR. Optimize your sample and acquisition protocol first.

| Parameter | Low SNR Issue | Recommended Action | Expected SNR Improvement* |

|---|---|---|---|

| Fluorophore Brightness | Low quantum yield | Switch to near-infrared (NIR) dyes (e.g., Alexa Fluor 790) or brighter genetic indicators (jGCaMP8s vs. GCaMP6f). | 2-5x |

| Excitation Power | Photobleaching limits power | Implement adaptive excitation, increasing power only in regions of interest. | 1.5-3x |

| Detection Path | High background | Use spectral unmixing with a tunable filter to separate autofluorescence. | 2-4x |

| Averaging | Motion artifacts | Use a intelligent frame averaging guided by motion-correction AI prior to reconstruction. | √N (N=frames) |

*Improvement is multiplicative and condition-dependent.

Q4: The AI-reconstructed time-lapse data of calcium spikes in dendrites shows temporal "jitter" or misalignment. A: This is a motion artifact problem. Do not apply 3D reconstruction before motion correction.

- Protocol: For each time point (T), take a fast, low-resolution z-stack (reference).

- Use a sub-pixel registration AI (e.g., a U-Net for optical flow) to align the reference stack at time T to the reference stack at T=0.

- Apply the calculated 3D deformation field to your corresponding high-resolution, sparse-sampled data stack at time T.

- Now input the motion-corrected, sparse data into your image reconstruction AI.

Q5: How do I validate that my AI-reconstructed image is biologically accurate and not an artifact? A: Implement a mandatory correlative imaging pipeline.

- Workflow:

- Perform in vivo AI-enhanced imaging (e.g., light-sheet, multiphoton).

- Fix and clear the tissue (e.g., using CLARITY or PEGASOS).

- Perform high-resolution ex vivo imaging (e.g., confocal, STED) of the same region using fiduciary markers.

- Co-register the AI-reconstructed in vivo dataset with the ground-truth ex vivo dataset using landmark-based registration.

- Quantify metrics like Structural Similarity Index (SSIM) and Peak Signal-to-Noise Ratio (PSNR) between the two for validation.

Experimental Protocol: Validating AI Reconstruction for Deep Tumor Vasculature Imaging

Title: Protocol for Correlative In Vivo/Ex Vivo Validation of AI-Reconstructed Vasculature.

Objective: To quantitatively assess the fidelity of an AI-based reconstruction algorithm (e.g., a DeepDensity network) for imaging tumor vasculature beyond 1 mm depth.

Materials:

- Mouse model with orthotopic or window-chamber tumor.

- NIR vascular dye (e.g., AngioSpark 680, IV injection).

- Multiphoton microscope with tunable NIR laser.

- Tissue clearing kit (e.g., CUBIC).

- High-resolution confocal microscope.

- Fiduciary markers (e.g., fluorescent microspheres).

Method:

- In Vivo Sparse Imaging:

- Anesthetize and position the animal.

- Acquire a sparsely sampled 3D image stack (e.g., 2 µm step size, rapid scan) of the tumor region at >1mm depth using 680nm excitation. This is the Input for AI.

- Acquire a fully sampled, slower, high-power stack at a superficial region (<200µm) for later registration. Embed fiduciary markers around the region of interest (ROI).

AI Reconstruction:

- Input the sparse stack into your trained DeepDensity network.

- Output the AI-Reconstructed dense 3D stack.

Ex Vivo Ground Truth Acquisition:

- Euthanize the animal, perfuse with fixative and subsequently with clearing agent.

- Excise the tumor, subject it to full CUBIC protocol.

- Image the exact same ROI identified by fiduciary markers using a high-NA confocal microscope with optimal step size (e.g., 0.5 µm). This is the Validation Ground Truth.

Co-registration & Quantitative Analysis:

- Use Elastix or ANTs toolkit to perform 3D affine + deformable registration of the AI output to the Ground Truth stack.

- Calculate the following metrics on the registered stacks:

- Vessel Diameter Distribution

- Fractal Dimension (Box-Counting method)

- Peak Signal-to-Noise Ratio (PSNR)

- Structural Similarity Index Measure (SSIM)

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Deep-Tissue Imaging with AI |

|---|---|

| Near-Infrared (NIR) Fluorophores (e.g., Alexa Fluor 790) | Minimizes scattering and autofluorescence, providing a higher SNR input for AI algorithms. |

| Tissue-Clearing Agents (e.g., CUBIC, CLARITY) | Enables acquisition of high-resolution, whole-organ ground truth data for AI model training and validation. |

| Fiduciary Markers (e.g., Multispectral Fluorescent Beads) | Provides stable landmarks for correlating in vivo and ex vivo datasets and for motion tracking. |

| PSF Beads (100 nm, Tetraspeck) | Used to empirically measure the Point Spread Function at depth, a critical input for physics-informed AI reconstruction models. |

| Genetically Encoded Calcium Indicators (e.g., jGCaMP8s) | Provides a bright, specific signal for neuronal activity, required for functional imaging time-series analyzed by AI. |

| Vascular Dyes (e.g., Dextran-Conjugated Alexa Fluor 680) | Labels the plasma volume for high-contrast vasculature imaging, creating clear structures for AI segmentation networks. |

Visualizations

AI Image Reconstruction & Validation Workflow

Temporal Analysis Preprocessing Pipeline

SNR Optimization Pathways for AI Input

AI in Action: From Neural Networks to 3D Reconstructions in Biomedicine

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: My CNN model for fluorescence microscopy reconstruction is underfitting, showing high bias even on the training set. What hyperparameters should I prioritize tuning? A1: For CNNs in deep tissue image reconstruction, underfitting often stems from insufficient model capacity or poor feature extraction. Prioritize:

- Increase network depth (number of convolutional layers) gradually.

- Increase the number of filters in each layer (e.g., start from 32, 64, 128 to 64, 128, 256).

- Reduce aggressive regularization (e.g., high L2 penalty or early dropout) initially.

- Ensure your input patch size is large enough to capture relevant biological structures (e.g., 128x128 or 256x256 pixels for tissue context).

Q2: The skip connections in my U-Net are causing feature map dimension mismatch errors during training. What are the common causes and fixes? A2: Dimension mismatches in U-Net skip connections are typically due to padding or stride settings. Follow this protocol:

- Cause: Use of valid padding or non-unit strides in convolutions reduces feature map size.

- Fix: Use 'same' padding for all convolutional layers in the encoder and decoder paths to maintain spatial dimensions.

- Verification: Implement a dimension check script that prints the shape of each encoder block output and its corresponding decoder block input before concatenation.

- Alternative: If downsampling is too aggressive, use transposed convolutions with a stride of 2 for upsampling and ensure the

output_paddingparameter is set correctly to match the encoder layer's dimensions.

Q3: When training a Vision Transformer (ViT) for 3D tomographic reconstruction, I face "CUDA Out of Memory" errors. What are the most effective strategies to reduce memory consumption? A3: ViTs are memory-intensive due to the self-attention mechanism. Implement these strategies:

- Gradient Accumulation: Use smaller batch sizes (e.g., 1 or 2) and accumulate gradients over 4 or 8 steps before updating weights, simulating a larger effective batch size.

- Patch Size & Sequence Length: Increase the input patch size (e.g., from 16x16 to 32x32) to reduce the number of patches (tokens) in the sequence, lowering the O(n²) memory cost of attention.

- Mixed Precision Training: Use Automatic Mixed Precision (AMP) with PyTorch or TensorFlow to perform forward/backward passes in 16-bit floating point (FP16), while keeping master weights in 32-bit (FP32) for stability.

- Model Parallelism: For very deep or wide transformers, consider splitting the model across multiple GPUs using framework-specific tools (e.g.,

nn.DataParallelormodel_parallelin PyTorch).

Q4: My reconstructed images from a trained U-Net appear overly smooth and lack high-frequency details (e.g., fine cellular structures). How can I improve perceptual quality? A4: This is a common issue with using only pixel-wise loss (e.g., MSE). Incorporate perceptual or adversarial losses:

- Hybrid Loss Function: Combine L1 Loss (less smoothing than MSE) with a perceptual loss (e.g., VGG16 feature-matching loss) to preserve structural details.

- Adversarial Training: Introduce a discriminator network (GAN setup) to distinguish reconstructed images from ground truth. This encourages the generator (U-Net) to produce more realistic textures.

- Protocol: Implement a loss weighting schedule:

Total Loss = λ1 * L1_Loss + λ2 * Perceptual_Loss. Start with λ1=1.0, λ2=0.01 and gradually increase λ2. - Data Check: Verify that your training data's ground truth images are of sufficiently high resolution and signal-to-noise ratio to provide the necessary high-frequency signal.

Comparative Performance Data

Table 1: Quantitative Benchmark on Public Deep Tissue Imaging Dataset (Fourier Light Microscopy Reconstruction)

| Architecture | PSNR (dB) | SSIM | Inference Time (ms) | GPU Memory (GB) | Key Advantage for Tissue Imaging |

|---|---|---|---|---|---|

| ResNet-50 (CNN) | 28.7 | 0.891 | 15 | 1.8 | Fast inference, good for initial denoising. |

| U-Net (Baseline) | 32.4 | 0.935 | 22 | 2.4 | Excellent detail preservation via skip connections. |

| U-Net++ | 33.1 | 0.942 | 35 | 3.1 | Superior accuracy for dense, overlapping structures. |

| Vision Transformer (ViT-Base) | 31.8 | 0.923 | 95 | 5.2 | Captures long-range dependencies in large FOVs. |

| Swin Transformer | 33.9 | 0.951 | 48 | 4.1 | Hierarchical attention, efficient for 3D volumes. |

Table 2: Common Training Failures and Diagnostics

| Symptom | Likely Cause (CNN/U-Net) | Likely Cause (Transformer) | Diagnostic Step | Suggested Mitigation |

|---|---|---|---|---|

| Loss NaN | Exploding gradients, high learning rate. | Attention score overflow (softmax). | Monitor gradient norms. | Use gradient clipping, lower LR, add LayerNorm (ViT). |

| Validation loss plateaus | Local minima, insufficient model capacity. | Poor tokenization, lack of positional encoding context. | Visualize attention maps. | Implement learning rate decay, use sinusoidal positional encoding. |

| Checkerboard artifacts | Transposed convolution in decoder. | N/A (typically not used). | Output visualization. | Replace with bilinear upsampling + convolution. |

| Training slow | Large image patches, complex augmentation. | Quadratic attention complexity. | Profile training step. | Use mixed precision, gradient accumulation, shifted windows (Swin). |

Experimental Protocols

Protocol 1: Training a U-Net for Scattered Light Reconstruction in Deep Tissue

- Objective: Reconstruct high-resolution structure from multiply scattered light signals.

- Dataset Preparation: Generate paired datasets using a physics-based simulator (e.g., Monte Carlo ray tracing) and corresponding ground truth in silico tissue models. Apply Poisson noise augmentation to simulate photon shot noise.

- Model Configuration: 4-level U-Net with 64 initial filters. Use LeakyReLU (α=0.1) activations. Input: 256x256 single-channel scattered light intensity map.

- Training Regimen: Optimizer: Adam (lr=1e-4). Loss: Combined SSIM + L1. Batch size: 16. Train for 200 epochs with early stopping.

- Validation: Use a held-out set of simulated data and a small set of ex vivo tissue phantoms with known structure.

Protocol 2: Fine-tuning a Pre-trained Swin Transformer for 3D Deconvolution Microscopy

- Objective: Leverage transfer learning for high-fidelity 3D stack deconvolution with limited experimental data.

- Pre-processing: Acquire 3D image stacks (Z-stack). Convert to 3D patches (e.g., 96x96x32). Normalize intensity per stack.

- Model Setup: Use a Swin Transformer V2 pre-trained on natural images. Replace the head with a 3D convolutional decoder for volumetric output. Employ gradient checkpointing to save memory.

- Fine-tuning: Two-phase training:

- Freeze encoder, train only the 3D decoder for 50 epochs (lr=1e-3).

- Unfreeze entire network, fine-tune end-to-end for 100 epochs (lr=5e-5). Use cosine annealing scheduler.

- Evaluation: Compare axial resolution recovery and signal-to-noise ratio against classical Richardson-Lucy deconvolution.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Deep Learning-Based Image Reconstruction Experiments

| Item | Function in Research | Example/Supplier |

|---|---|---|

| High-Fidelity Simulated Datasets | Provides large volumes of perfectly paired (input/ground truth) data for initial model training where experimental data is scarce. | In silico tissue models (e.g., from Biofabrication tools). |

| Fluorescent Tissue Phantoms | Validates model performance on physically accurate, ground-truth-known samples before moving to biological specimens. | Micro-bead phantoms, 3D printed hydrogel phantoms with fluorescent patterns. |

| Multi-Photon / Light Sheet Microscope | Generates the high-quality, volumetric training data and final validation images needed for supervised learning. | Commercial systems (e.g., Zeiss Lightsheet Z.1, Olympus FVMPE-RS). |

| GPU Computing Cluster Access | Enables training of large models (esp. Transformers) on 3D datasets, which is computationally prohibitive on single workstations. | NVIDIA DGX systems, Cloud platforms (AWS, GCP, Azure). |

| Differentiable Physics Simulator | Allows end-to-end training of models that incorporate known forward optics models, improving reconstruction accuracy. | Custom-built in PyTorch/TensorFlow using autograd. |

Diagrams

Title: CNN Training and Validation Workflow for Image Reconstruction

Title: U-Net Architecture with Skip Connections for Detail Recovery

Title: Vision Transformer Pipeline for Image Reconstruction

Troubleshooting Guides & FAQs

Q1: My PINN for reconstructing a 3D optoacoustic tomography image is converging very slowly. The loss flattens out and remains high. What could be the cause?

A: Slow convergence often stems from an imbalance between loss terms. In image reconstruction, your total loss L_total = λ_data * L_data + λ_physics * L_physics. If λ_physics is too high initially, it can dominate and prevent the network from fitting the sparse experimental data first.

- Solution: Implement a loss-weight annealing schedule. Start with a higher weight for the data loss (e.g.,

λ_data=0.9, λ_physics=0.1) and gradually increase the physics weight over epochs. Also, verify the correctness of your implemented governing equations (e.g., wave equation for sound propagation) using symbolic differentiation checks.

Q2: During training of my diffusion-based PINN for deep tissue fluorescence reconstruction, I encounter "NaN" (Not a Number) values in the loss. How do I debug this?

A: This is typically a numerical instability issue.

- Check Input Scales: Normalize all input coordinates (spatial

x, y, zand temporalt) and output fields (e.g., photon flux) to the range[-1, 1]or[0, 1]. - Check Activation Functions: Avoid using

tanhorsigmoidin very deep networks for this domain. Usesinactivation (as in SIREN networks) orswishfor smoother gradient propagation through tissue layers. - Gradient Clipping: Implement gradient clipping (e.g.,

torch.nn.utils.clip_grad_norm_) to prevent exploding gradients during backpropagation through the physics loss.

Q3: The PINN reconstructions show good fidelity to the physics model but are blurry and lack the high-resolution detail seen in my validation micro-CT scans. What can I do?

A: This indicates the PINN is underfitting the high-frequency components of the true image. This is a known spectral bias of standard MLPs.

- Solution: Integrate positional encoding or use a Fourier feature network. Mapping your input coordinates into a higher-dimensional space using sine and cosine transforms (

γ(v) = [sin(2πBv), cos(2πBv)]) allows the network to learn high-frequency details more easily, crucial for resolving small vascular structures in deep tissue.

Q4: How do I effectively incorporate uncertain or noisy boundary conditions (e.g., partial surface measurements) into my PINN for tissue imaging?

A: Hard-coding inaccurate boundary conditions degrades performance. Treat them as learnable parameters.

- Method: Represent the unknown boundary condition with a separate shallow neural network or a set of trainable parameters. These are optimized simultaneously with the main PINN parameters. The physics loss is computed across the entire domain, including the boundary, allowing the network to infer the most physically-consistent boundary condition from the interior data.

Q5: My composite loss function has 4+ terms (data, PDE, initial condition, boundary condition). How do I determine the optimal weighting hyperparameters (λ_i)?

A: Manual tuning is inefficient. Use an adaptive weighting scheme.

- Recommended Protocol: Employ the learning-rate annealing method from [Wang et al., 2021] or the gradient statistics method. The weights are updated every

kiterations based on the magnitude of the gradients of each loss term, ensuring no single term stalls the training. This is essential for multi-scale deep tissue problems.

Experimental Protocols & Data

Protocol: Validating a PINN for Quantitative Photoacoustic Tomography (QPAT) Image Reconstruction

- Forward Data Generation: Use a validated finite-element solver (e.g., k-Wave) to simulate photoacoustic wave propagation from a known initial pressure distribution

p0(e.g., a digital mouse vasculature phantom). - Noise Introduction: Add Gaussian noise (SNR = 20-30 dB) to the simulated sensor time-series data to mimic experimental conditions.

- PINN Architecture: Construct a PINN with 8 hidden layers of 256 neurons each,

sinactivation. Inputs: spatial coordinates(x, y, z)and timet. Outputs: acoustic pressurepand the target initial pressure distributionp0. - Loss Definition:

L_data: MSE between predictedpand simulated sensor data.L_pde: Residual of the photoacoustic wave equation:∇²p - (1/c²) ∂²p/∂t².L_ic: MSE enforcingp0equals the reconstructed initial pressure at t=0.

- Training: Use Adam optimizer (LR=1e-3) for 20k epochs, then L-BFGS for fine-tuning. Employ adaptive loss weighting.

Table 1: Performance Comparison of Image Reconstruction Algorithms in Simulated Deep Tissue

| Algorithm | Normalized RMS Error (NRMSE) ↓ | Structural Similarity (SSIM) ↑ | Training Time (GPU hrs) | Data Efficiency |

|---|---|---|---|---|

| PINN (Proposed) | 0.084 ± 0.011 | 0.92 ± 0.03 | 5.2 | High (Sparse) |

| Traditional Iterative (TV) | 0.152 ± 0.020 | 0.85 ± 0.05 | 1.1 | Low (Dense) |

| Pure Deep Learning (U-Net) | 0.118 ± 0.015 | 0.89 ± 0.04 | 8.5 | Very Low (Massive) |

| Analytical Backprojection | 0.310 ± 0.025 | 0.65 ± 0.07 | <0.1 | N/A |

Table 2: Impact of Adaptive Loss Weighting on PINN Convergence

| Loss Weighting Strategy | Epochs to NRMSE < 0.1 | Final PDE Residual (Log10) | Stability (%) |

|---|---|---|---|

| Fixed Weights (1:1:1) | 12,500 | -3.2 | 45 |

| Grad-Norm [2] | 7,800 | -4.1 | 90 |

| LR Annealing [1] | 6,400 | -4.5 | 95 |

Visualizations

Diagram Title: PINN Workflow for Image Reconstruction

Diagram Title: PINN Adaptive Loss Balancing

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in PINN-based Deep Tissue Imaging |

|---|---|

| Digital Tissue Phantom (e.g., Digimouse Atlas) | Provides anatomically accurate 3D ground-truth data (optical absorption, scattering maps) for training forward models and validating reconstructions. |

| k-Wave or NIRFAST Simulator | Generates high-fidelity simulated training data (photoacoustic signals, photon fluence) by solving the forward physics problem. |

| Automatic Differentiation Library (JAX, PyTorch) | Enables exact computation of PDE residuals (∂/∂x, ∂²/∂t²) via backpropagation, essential for the physics loss term. |

| Fourier Feature Network Layer | Mitigates spectral bias by mapping input coordinates to high-frequency spaces, allowing recovery of fine structural details. |

| L-BFGS Optimization Solver | A quasi-Newton method used for fine-tuning after Adam, often yielding more accurate minima for physics-based problems. |

| Adaptive Loss Weighting Scheduler | Automatically balances multiple loss components during training, drastically improving convergence and final accuracy. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During the training of the AI prior network, I encounter the error "NaN loss" when processing high-resolution deep tissue scans. What are the likely causes and solutions?

A: This is typically caused by numerical instabilities in gradient calculations.

- Cause 1: Unnormalized or extreme intensity values in the input sinogram/backprojection data.

- Solution: Implement robust data normalization. Clip extreme photon counts (e.g., > 4 standard deviations) and scale data to a [0, 1] or [-1, 1] range per batch.

- Cause 2: Exploding gradients in the network's deep layers.

- Solution: Apply gradient clipping (set

torch.nn.utils.clip_grad_norm_to a max_norm of 1.0) and consider using a smaller learning rate (e.g., switch from 1e-3 to 1e-4).

- Solution: Apply gradient clipping (set

- Cause 3: A faulty activation function or loss function component (e.g., log of a zero or negative value).

- Solution: Add a small epsilon (ε=1e-8) to any logarithmic or division operations in your custom loss function.

Q2: The final reconstructed 3D volume shows "hallucination" artifacts—structures that are not biologically plausible. How can I adjust the pipeline to improve fidelity?

A: Hallucinations indicate an over-reliance on the AI prior. Rebalance the classical and AI components.

- Action 1: Increase the weight (λ) of the data consistency term in the iterative update step. This forces the solution to adhere more closely to the actual measured physics.

- Action 2: Introduce a validity mask during training. Use a binary mask (from co-registered histology or a high-quality, low-noise reference scan) to apply a stronger penalty on hallucinated regions in the loss function.

- Action 3: Perform a "model debug" run. Input a synthetic, known phantom into the full pipeline and isolate which iterative step introduces the erroneous features.

Q3: My reconstruction fails to converge after integrating the learned prior, cycling between two artifact patterns. What is wrong?

A: This points to an instability in the fixed-point iteration between the classical solver and the neural network.

- Diagnosis & Fix: The likely culprit is an inconsistent gradient flow between the unrolled iterative blocks. Ensure that the network is designed as a strict residual operator and that the training uses a memory-efficient checkpointing method for the unrolled iterations to guarantee gradient consistency. Consider reducing the number of unrolled iterations from 10 to 5 during initial testing.

Q4: For multi-modal data (e.g., fMOST + MRI), how should I structure the input channels to the prior network?

A: The input structure is critical for effective cross-modality learning.

- Recommended Protocol: Use a late-fusion encoder. Process each modality through separate, initial convolutional layers (3-5 layers each). Then, concatenate the feature maps and process through a shared trunk of the network. This allows the model to learn both modality-specific and fused representations. Always ensure channels are registered and normalized to similar value distributions.

Experimental Protocol: Validating a Learned Iterative Reconstruction Model for Fluorescence Microscopy

Objective: To quantitatively assess the performance of a Learned Primal-Dual algorithm for reconstructing sparse-view fluorescence microscopy data of deep tissue samples.

Materials: See "Research Reagent Solutions" table.

Method:

- Data Preparation: Acquire a full-view, high-signal-to-noise ratio (SNR) 3D stack from a cleared tissue sample (e.g., using a light-sheet microscope). This serves as the ground truth.

- Forward Projection: Simulate a sparse-view acquisition by applying a Radon transform to the ground truth volume, sampling only 60 equally spaced angles over 180 degrees. Add 1% Gaussian noise to simulate realistic photon count noise.

- Baseline Reconstruction: Reconstruct using the classical Filtered Backprojection (FBP) and Iterative Total Variation (TV) methods.

- LIR Reconstruction:

- Architecture: Use a 10-iteration unrolled primal-dual network. The prior network within each iteration is a 5-layer U-Net.

- Training: Train the network for 100 epochs on 20 paired samples (sparse sinogram, ground truth) using a combined L1 and Structural Similarity (SSIM) loss. Use the Adam optimizer (lr=0.001).

- Inference: Feed the held-out sparse sinograms through the trained model.

- Validation: Calculate Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index (SSIM) between all reconstructed volumes and the ground truth on a dedicated test set of 5 samples.

Data Presentation

Table 1: Quantitative Comparison of Reconstruction Methods on Sparse-View (60-angle) Fluorescence Data

| Method | Avg. PSNR (dB) ↑ | Avg. SSIM ↑ | Avg. Runtime (sec) ↓ | Key Artifact |

|---|---|---|---|---|

| Filtered Backprojection (FBP) | 22.1 | 0.54 | 0.8 | Severe Streaking |

| Iterative (TV Regularization) | 28.7 | 0.83 | 45.2 | Over-Smoothing |

| Learned Primal-Dual (Ours) | 33.4 | 0.92 | 3.5 (GPU) | Minimal |

Table 2: Ablation Study on AI Prior Components

| Prior Network Type | Data Consistency Enforcement | PSNR (dB) | SSIM | Observation |

|---|---|---|---|---|

| U-Net (Post-processor) | Weak (Single Step) | 30.2 | 0.85 | Removes noise but distorts fine detail. |

| U-Net (Iterative) | Strong (Per-iteration) | 33.4 | 0.92 | Best detail preservation and artifact suppression. |

| ResNet (Iterative) | Strong (Per-iteration) | 32.8 | 0.90 | Slightly noisier than U-Net prior. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Clearing Reagent (e.g., CUBIC) | Renders deep tissue optically transparent for photon penetration. |

| Fiducial Markers (Fluorescent Beads) | Enable cross-modality image registration and validation. |

| Synthetic Phantom (e.g., 3D Cell Culture) | Provides a ground-truth structure for initial algorithm debugging. |

| Anti-Photobleaching Agent | Preserves fluorescence signal during long acquisition times. |

| GPU Cluster Access | Essential for training large-scale, unrolled iterative networks. |

Visualizations

Diagram 1: Learned Iterative Reconstruction Pipeline

Diagram 2: AI Prior Network (U-Net) Architecture

Diagram 3: Problem-Solving Workflow for LIR Experiments

Generative Adversarial Networks (GANs) for Artifact Reduction and Super-Resolution

Technical Support Center

Troubleshooting Guide

Q1: During training, my GAN model collapses, generating very similar or nonsensical outputs for all input samples. What are the primary causes and solutions?

A: Mode collapse is a common failure in GAN training. Key causes and solutions include:

- Cause: An overpowering discriminator. Solution: Implement or adjust gradient penalty (e.g., use WGAN-GP) to enforce Lipschitz constraint, preventing the discriminator from becoming too strong too quickly.

- Cause: Poor architectural choices. Solution: Use progressive growing techniques or residual networks (ResNet blocks) to stabilize training.

- Cause: Inadequate minibatch diversity. Solution: Employ minibatch discrimination, which allows the discriminator to assess a batch of samples collectively.

- Experimental Protocol (WGAN-GP):

- Replace your discriminator's log loss with the Wasserstein loss (critic score).

- After each discriminator update, compute the gradient penalty term:

λ * (||∇_ŷ D(ŷ)||₂ - 1)², where ŷ are random interpolations between real and fake samples. - Use a lower learning rate (e.g., 1e-4) and Adam optimizer with

β1 = 0, β2 = 0.9.

Q2: When applying a trained SR-GAN to deep tissue microscopy images, I observe "hallucinated" or unrealistic structural details. How can I mitigate this?

A: This indicates the model is prioritizing perceptual loss over faithfulness to biological structures. Solutions are:

- Solution: Increase the weight (

α) of the pixel-wise loss (e.g., L1) relative to the adversarial/perceptual loss. This biases the model towards reconstruction fidelity. - Solution: Incorporate a feature matching loss from the discriminator's intermediate layers, which often provides more realistic texture constraints.

- Experimental Protocol (Balanced Loss Function):

- Define a composite loss:

L_Total = α*L_Pixel + β*L_Perceptual(VGG) + γ*L_Adversarial. - For deep tissue work, start with a high

α(e.g., 100), and lowβ(e.g., 0.1) andγ(e.g., 0.01). - Fine-tune on a small, curated dataset of your specific tissue type, gradually adjusting weights based on expert validation.

- Define a composite loss:

Q3: My artifact-reduction GAN removes noise but also oversmooths critical, low-intensity biological signals. How can I preserve these weak features?

A: This is a signal-to-noise ratio (SNR) preservation challenge.

- Solution: Use a frequency-aware loss function, such as a Fourier or wavelet domain loss, to explicitly guide the model to preserve specific frequency components.

- Solution: Train with a multi-scale discriminator that assesses image fidelity at different spatial scales, preventing the generator from ignoring fine-scale structures.

- Experimental Protocol (Multi-Scale Training):

- Build a generator with a U-Net-like architecture with skip connections.

- Implement two discriminators:

D1evaluates images at the native resolution,D2evaluates images downsampled by a factor of 2. - The total adversarial loss becomes:

L_Adv = L_Adv(D1) + L_Adv(D2).

Frequently Asked Questions (FAQs)

Q4: What are the key quantitative metrics to evaluate GANs for super-resolution in a scientific context, beyond PSNR/SSIM?

A: For scientific validity, metrics must assess perceptual quality and task utility.

- Perceptual Quality: Use Learned Perceptual Image Patch Similarity (LPIPS), which correlates better with human judgment than PSNR.

- Task Utility: Employ a no-reference metric like NIQE, or better, establish a downstream task metric (e.g., accuracy of a trained cell detector on SR images vs. original high-resolution images).

Table 1: Quantitative Comparison of GAN-Based SR Models on Microscopy Data

| Model Architecture | PSNR (dB) | SSIM | LPIPS (↓) | Inference Time (ms) | Key Advantage for Tissue Imaging |

|---|---|---|---|---|---|

| SRResNet | 32.1 | 0.912 | 0.15 | 45 | High fidelity, less hallucination |

| ESRGAN | 28.7 | 0.851 | 0.08 | 65 | Superior perceptual realism |

| WGAN-GP (Custom) | 31.5 | 0.903 | 0.11 | 58 | Stable training, good detail balance |

| CycleGAN (Artifact Removal) | N/A | N/A | 0.12 | 72 | Unpaired training for stain normalization |

Q5: How can I implement a GAN for artifact reduction when I lack perfectly paired "clean" and "artifact-laden" deep tissue image sets?

A: Use unpaired image-to-image translation models.

- Solution: Implement CycleGAN or DualGAN. These learn a mapping between two image domains (e.g., "motion-artifact" and "clean") without needing exact pixel-to-pixel paired data.

- Critical Consideration: For scientific use, rigorous validation on held-out data with expert pathologist scoring is mandatory to ensure the model removes artifacts without altering morphometric biomarkers.

Experimental Workflow for GAN-Based Image Enhancement

Title: GAN Training & Validation Workflow for Tissue Images

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Materials for GAN Experiments in Deep Tissue Research

| Item | Function in Experiment | Example/Note |

|---|---|---|

| High-Quality Paired Dataset | Ground truth for supervised training. | e.g., Consecutive tissue sections imaged at different resolutions. |

| Pre-trained Perceptual Network | Provides feature loss to guide realistic texture generation. | VGG-19 (ImageNet) is standard; consider domain-specific networks. |

| Gradient Penalty Regularizer | Stabilizes GAN training, prevents mode collapse. | Essential for WGAN-GP implementation (λ=10 typical). |

| Patch-Based Discriminator | Allows training on large images by classifying local patches. | Enables higher resolution output; use 70x70 or 140x140 patches. |

| TIFF/OME-TIFF I/O Library | Handles multi-channel, high-bit-depth microscopy data without compression loss. | e.g., tifffile in Python; preserves metadata. |

| Compute Environment | Accelerates training of large models. | GPU with >=12GB VRAM (e.g., NVIDIA V100, A100, RTX 3090). |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our AI-reconstructed in vivo brain images show significant motion blur and artifacts. What are the primary causes and solutions? A: This is commonly due to subject movement during long acquisition times and suboptimal algorithm parameters.

- Solution: Implement a real-time motion correction protocol. Use a faster imaging sequence (e.g., compressed sensing acquisition) and pair it with an AI model (like a U-Net variant) trained specifically on motion-corrupted and clean image pairs. Ensure training data includes a variety of motion patterns.

- Protocol: In Vivo Motion-Resilient Imaging:

- Acquire data using a compressed sensing T2*-weighted gradient-echo sequence (Acceleration factor R=4).

- Feed undersampled k-space data into a pre-trained reconstruction model.

- Apply a secondary, fine-tuning network trained with data augmented with synthetic rigid-body motions.

- Validate with a stationary phantom before in vivo application.

Q2: When using AI for cancer margin detection, the model's confidence score is low for certain tissue types, leading to indecision. How can we improve this? A: Low confidence indicates the model is encountering feature patterns not well-represented in the training dataset.

- Solution: Enrich your training dataset with rare tissue samples and employ a test-time augmentation (TTA) strategy. Consider a Bayesian neural network approach to quantify uncertainty.

- Protocol: Improving Margin Detection Confidence:

- Perform a review of histopathology to identify the low-confidence tissue types.

- Acquire additional biopsy samples of these types (minimum n=5 per rare type).

- Retrain the model using a loss function that penalizes uncertainty (e.g., evidence loss).

- At inference, deploy TTA (flips, rotations) and report the mean prediction and variance.

Q3: In organoid analysis, 3D volume reconstructions from 2D slices are computationally slow, hindering live analysis. How can we speed this up? A: The bottleneck is often the iterative reconstruction algorithm. Shift to a direct, single-pass AI model.

- Solution: Implement a deep learning-based super-resolution and reconstruction model (e.g., a 3D ESRGAN variant) that can generate high-fidelity volumes from sparsely sampled z-stacks.

- Protocol: Rapid 3D Organoid Reconstruction:

- Acquire a reduced z-stack (e.g., every 3µm instead of 1µm).

- Use a pre-trained 3D super-resolution model to interpolate slices, trained on paired sparse/full datasets.

- Apply a light-weight 3D segmentation model (like a 3D Mask R-CNN) for immediate feature extraction.

- Calibrate using control organoids with known volumes and cell counts.

Q4: The AI model generalizes poorly to a new imaging system or slightly different staining protocol. What steps should we take? A: This is a domain shift problem. Full retraining is not always necessary.

- Solution: Apply domain adaptation techniques. Use a small dataset (n=50-100 images) from the new system to perform fine-tuning or style transfer.

- Protocol: Domain Adaptation for New Equipment:

- Collect a paired or unpaired dataset from the old (source) and new (target) systems.

- Train a cycle-consistent generative adversarial network (CycleGAN) to translate images from the new domain to the style of the old domain.

- Process translated images through the original, frozen AI model.

- Alternatively, fine-tune the final layers of the model using the new data.

Table 1: Performance Metrics of AI Reconstruction Algorithms in Deep Tissue Imaging

| Application | AI Model | Key Metric | Reported Performance | Benchmark (Traditional) |

|---|---|---|---|---|

| In Vivo Brain Imaging | Deep Resolve (U-Net based) | PSNR (dB) / SSIM | 32.4 dB / 0.91 | 28.1 dB / 0.82 |

| Cancer Margin Detection | Inception-v3 + Attention | Sensitivity / Specificity | 96.2% / 94.7% | 88.5% / 90.1% (Pathologist) |

| Organoid Analysis | 3D U-Net | Dice Coefficient | 0.89 | 0.78 (Thresholding) |

| General Reconstruction | Tiramisu (DenseNet) | Reconstruction Time (per volume) | 12 seconds | 4.5 minutes (Iterative) |

Table 2: Key Research Reagent Solutions

| Item | Function | Example Application |

|---|---|---|

| AI-Trained Reconstruction Software | Reconstructs high-fidelity images from undersampled or noisy data. | DeepMB, NVIDIA Clara for MRI/OCT raw data processing. |

| Domain-Invariant Contrast Agents | Provide consistent signal across modalities for robust AI training. | CellVoyager dyes for multi-photon microscopy; targeted NIR-II probes. |

| Fluorescent Reporters (Genetically Encoded) | Enable longitudinal tracking of specific cell lines in organoids/in vivo. | GCaMP for calcium imaging in brain organoids; H2B-GFP for nucleus tracking. |

| Optical Clearing Kits | Render tissue transparent for deep light penetration and improved 3D reconstruction. | CUBIC, CLARITY kits for whole-brain or tumor margin imaging. |

| High-NA Objective Lenses | Maximize light collection for sharper images, critical for training data quality. | Nikon CFI Apo LWD 40x WI NA 1.1 for live organoid imaging. |

Experimental Protocols

Protocol: AI-Assisted Intraoperative Cancer Margin Assessment Objective: To delineate tumor margins in real-time during surgery using fluorescence imaging and AI analysis.

- Administer a tumor-targeting fluorescent probe (e.g., 5-ALA for glioblastoma, ICG derivative for breast cancer) preoperatively.

- Acquire intraoperative fluorescence images using a calibrated surgical microscope/CMOS system.

- Pre-process images: flat-field correction, background subtraction, and normalization.

- Input the pre-processed image patch into a convolutional neural network (CNN) trained on histopathology-confirmed margin data.

- Generate an overlay map classifying tissue as "Positive Margin," "Close Margin" (>1mm), or "Negative Margin" (>5mm).

- Validate AI-predicted positive margins with frozen section histology (gold standard).

Protocol: Longitudinal Analysis of Cerebral Organoid Development Objective: To quantify neurite outgrowth and synaptic density changes over time using 3D reconstruction.

- Culture cerebral organoids in a matrigel droplet with a spinning bioreactor.

- Stain at weekly intervals (Weeks 4, 8, 12) with vital dyes for neurons (e.g., CellTracker Red) and synapses (e.g., FM1-43FX).

- Image using a confocal or light-sheet microscope with consistent laser power and exposure settings. Acquire z-stacks at 2µm intervals.

- Reconstruct 3D volumes using a deconvolution AI algorithm (e.g., CARE or Deepti).

- Analyze using a 3D segmentation AI model to quantify total neurite length (in µm/organoid) and synaptic puncta density (puncta/µm³).

- Correlate morphological metrics with electrophysiology data (MEA recordings).

Visualizations

Title: AI-Powered Motion-Corrected Brain Imaging Workflow

Title: Cancer Margin Detection AI Pipeline

Title: Organoid Longitudinal Analysis Workflow

Navigating Pitfalls: Data, Artifacts, and Generalization in AI Models

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our deep tissue fluorescence microscopy dataset has only 5-10 annotated samples per condition. Which algorithm is most robust for 3D image reconstruction with such extreme data limitation?

A: For very small annotated datasets (n<20), a Physics-Informed Neural Network (PINN) integrated with a U-Net architecture is currently recommended. The PINN incorporates the known physical model of light scattering in deep tissue (e.g., a simplified Beer-Lambert law or diffusion approximation) as a regularization term in the loss function. This drastically reduces the parameter space the network must learn from data alone.

- Experimental Protocol:

- Network Architecture: Implement a 3D U-Net with a residual connection bypass.

- Loss Function:

Total Loss = α * MSE(Output, Ground Truth) + β * Physics_Loss. - Physics_Loss Calculation: For each output voxel, calculate the expected photon attenuation based on the network's predicted optical properties (scattering, absorption) and your pre-defined tissue model. Compare this to the actual input raw pixel intensity.

- Training: Use heavy augmentation (3D rotation, elastic deformation, varying noise profiles). Start with α=0.1, β=0.9, gradually shifting to α=0.7, β=0.3 over 100 epochs.

- Validation: Use structural similarity index (SSIM) on a hold-out set, not just pixel-wise MSE.

Q2: Our training data is corrupted by significant, non-Gaussian noise from high-gain photomultiplier tubes. Standard denoising before reconstruction blurs vital structures. What is the best end-to-end approach?

A: Implement a Noise2Noise (N2N) or Noise2Void (N2V) training paradigm directly within your reconstruction pipeline. Do not pre-denoise. For deep tissue, Blind Spot Networks (BSNs) with a spatially correlated noise mask are particularly effective, as they can handle structured noise from scattering artifacts.

- Experimental Protocol:

- Data Preparation: Gather pairs of noisy images from the same sample (N2N) or single noisy images (N2V/BSN). No clean ground truth is needed.

- Network Modification: Use a reconstruction network (e.g., a Fourier Domain Encoder-Decoder). For BSN, during training, selectively mask input pixels in a contiguous 3D patch, forcing the network to learn from the surrounding context.

- Training Loop (N2V Example):

- Input: Noisy image

I. - For each iteration, create a masked version

I_maskedwhere a small, random 3D cube of pixels is set to zero. - Train the network to predict the original central pixel values of the masked cube from

I_masked.

- Input: Noisy image

- Key Parameter: The mask size must be larger than the noise correlation length (estimate from noise autocorrelation).

Q3: When using transfer learning from natural images (ImageNet) to biomedical data, our reconstruction model fails to capture fine subcellular details. What adaptation strategy is required?

A: The problem is domain mismatch in low-level features. Use progressive unfreezing and adaptive instance normalization (AdaIN) layers.

- Experimental Protocol:

- Initialization: Load a pre-trained model (e.g., ResNet-50 or VGG16 as an encoder).

- Replace First Layer: Swap the original RGB input filter with a custom filter matching your microscopy modality (e.g., single-channel or multi-spectral).

- Insert AdaIN Layers: After each pre-trained block in the encoder, add an AdaIN layer. This will re-normalize the feature maps to the statistics of your biomedical dataset during forward passes.

- Progressive Training:

- Phase 1 (5 epochs): Freeze all pre-trained layers, train only the new input filter, AdaIN parameters, and the decoder.

- Phase 2 (10 epochs): Unfreeze the last two blocks of the pre-trained encoder.

- Phase 3 (20+ epochs): Unfreeze the entire network for fine-tuning with a very low learning rate (1e-5).

Table 1: Performance of Data-Limited Reconstruction Algorithms on Simulated Deep Tissue Data

| Algorithm | Training Set Size (Annotated Volumes) | SSIM (Mean ± SD) | Peak Signal-to-Noise Ratio (PSNR) | Training Time (GPU Hours) |

|---|---|---|---|---|

| Standard 3D U-Net | 50 | 0.89 ± 0.03 | 32.1 ± 1.2 | 24 |

| Physics-Informed U-Net (PINN) | 10 | 0.85 ± 0.05 | 30.5 ± 1.8 | 28 |

| Noise2Void-U-Net (Noisy Data) | 50 (no clean GT) | 0.82 ± 0.04 | 29.8 ± 2.1 | 30 |

| Transfer Learning + AdaIN | 25 | 0.88 ± 0.03 | 31.7 ± 1.4 | 40 |

| Few-Shot GAN (StyleGAN-2 Adapter) | 5 | 0.80 ± 0.07 | 28.3 ± 2.5 | 48 |

Table 2: Impact of Data Augmentation Strategies on Model Generalization

| Augmentation Type | SSIM on Held-Out Test Set | Required Minimum Base Dataset Size | Key Risk |

|---|---|---|---|

| Geometric Only (Rotate, Flip) | 0.82 | 15 | Does not address intensity noise. |

| Advanced (MixUp, CutMix, Style Transfer) | 0.87 | 10 | Can generate non-physical artifacts if unconstrained. |

| Physics-Based (Simulated Scattering, Bleed-Through) | 0.89 | 5 | Computationally intensive to generate. |

| Generative (GAN-synthesized) | 0.84 | 5 | Mode collapse can reduce feature diversity. |

Experimental Workflow Diagram

Title: Workflow for Data-Hungry Image Reconstruction

Signaling Pathway for Hybrid Training Loss

Title: Hybrid Loss Function Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Data-Limited Deep Tissue Reconstruction

| Item / Reagent | Function & Purpose | Example/Note |

|---|---|---|

| Synthetic Data Generator (e.g., SynthBio, COSY) | Generates physically realistic training data using optical models of scattering and fluorophore distribution. | Crucial for pre-training or augmentation. Calibrate to your microscope's PSF. |

| Advanced Augmentation Library (Albumentations, TorchIO) | Applies spatial, intensity, and advanced (MixUp, CutOut) transformations to maximize dataset utility. | Use TorchIO for 3D volumetric transformations in medical imaging. |

| Pre-trained Model Zoo (BioImage.IO, TIMM) | Repository of models pre-trained on large-scale biological (not just ImageNet) datasets. | Provides better feature initialization than generic models. |

| Noise2Noise/Noise2Void Implementation | Enables training on noisy data pairs or single noisy images without clean ground truth. | Ideal for live, high-gain, or fast-acquisition deep tissue imaging. |

| Physics Constraint Module | Customizable layer (PyTorch/TensorFlow) that encodes domain knowledge (e.g., diffusion equation). | Acts as a regularizer, preventing physically impossible outputs. |

| Self-Supervised Feature Learner (Barlow Twins, BYOL) | Learns robust representations from unlabeled data to boost downstream task performance. | Use on all available unlabeled images before fine-tuning on small labeled set. |

| Active Learning Framework (modAL, DAL) | Selects the most informative samples for expert annotation, optimizing labeling effort. | Integrates with your training loop to query which new image would most improve the model. |

Troubleshooting Guides & FAQs

FAQ 1: My deep learning model for fluorescence microscopy reconstruction achieves near-perfect training accuracy but fails on new, unseen tissue samples. What is happening and how can I fix it?

Answer: This is a classic symptom of overfitting. The model has memorized the noise and specific artifacts in your training dataset instead of learning the general mapping for image reconstruction. Implement these corrective steps:

Apply L2 Weight Regularization: Add a penalty to the loss function based on the magnitude of the weights. This discourages the network from relying too heavily on any single feature.

- Protocol: In your optimizer (e.g., Adam), set the