Beyond the Microscope: How AI-Driven Image Analysis is Revolutionizing Optical Data Processing in Biomedical Research

Abstract

This article provides a comprehensive overview of artificial intelligence (AI)-powered image analysis for processing optical data in biomedical research. We explore the foundational principles of deep learning and computer vision, detailing core methodologies like convolutional neural networks (CNNs) and their application in high-content screening, digital pathology, and live-cell imaging. We address common challenges in model training, data quality, and deployment, offering practical troubleshooting guidance. The article also examines validation strategies and benchmark comparisons with traditional methods, highlighting superior performance in feature detection and quantification. Aimed at researchers, scientists, and drug development professionals, this guide synthesizes current innovations and future trajectories for AI-driven optical analysis in accelerating scientific discovery and therapeutic development.

Decoding the Vision: AI Fundamentals for Optical Data Processing in Biomedicine

The Evolution from Manual Analysis to Intelligent Automation

This document, framed within a thesis on AI-driven image analysis for optical data processing, details the application and protocols enabling the shift from manual microscopy to fully automated, intelligent systems in biomedical research. This evolution is critical for high-content screening (HCS) in drug discovery and quantitative cellular analysis.

Key Application Notes

2.1. Application Note: Automated High-Content Screening for Drug Toxicity

- Objective: To rapidly and quantitatively assess compound-induced hepatotoxicity using AI-driven analysis of hepatic spheroid images.

- Background: Manual assessment of cell death markers (e.g., nuclear condensation, membrane integrity) is subjective and low-throughput. Intelligent automation enables unbiased, multi-parameter analysis across thousands of conditions.

- Outcome: An end-to-end pipeline from automated confocal imaging to AI-based classification of toxicity phenotypes, generating dose-response curves for multiple cellular health parameters simultaneously.

2.2. Application Note: AI-Assisted Pathological Scoring in Tissue Histology

- Objective: To standardize the scoring of immunohistochemistry (IHC) samples for cancer biomarker (e.g., PD-L1) expression.

- Background: Inter-observer variability in manual pathological scoring remains a major reproducibility challenge. Convolutional Neural Networks (CNNs) can be trained to identify and quantify stained regions with high consistency.

- Outcome: A validated algorithm that provides Tumor Proportion Scores (TPS) and Immune Cell Scores, reducing scoring time from minutes per slide to seconds and providing continuous, rather than categorical, data output.
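As a minimal sketch of the final scoring step, the Tumor Proportion Score can be computed as the percentage of viable tumor cells that stain positive. The function name and the cell counts below are hypothetical; in practice the counts would come from the trained CNN's per-cell classification.

```python
def tumor_proportion_score(positive_tumor_cells: int, total_tumor_cells: int) -> float:
    """Return TPS: the percentage of viable tumor cells that stain positive."""
    if total_tumor_cells <= 0:
        raise ValueError("total_tumor_cells must be positive")
    if not 0 <= positive_tumor_cells <= total_tumor_cells:
        raise ValueError("positive_tumor_cells must lie between 0 and total_tumor_cells")
    return 100.0 * positive_tumor_cells / total_tumor_cells


# Hypothetical per-slide counts from an upstream cell classifier.
tps = tumor_proportion_score(positive_tumor_cells=180, total_tumor_cells=1200)
print(f"TPS = {tps:.1f}%")
```

Because the score is a continuous percentage, any categorical cut-off can be applied afterwards without re-scoring the slide.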

Quantitative Evolution: Manual vs. Automated Analysis

Table 1: Performance Comparison of Analysis Paradigms

| Metric | Manual Analysis | Automated Basic Analysis | Intelligent Automation (AI-Driven) |

|---|---|---|---|

| Throughput | 10-100 images/day | 1,000-10,000 images/day | 100,000+ images/day |

| Analysis Time per Image | 2-5 minutes | 10-30 seconds | <1 second |

| Measurable Parameters | 3-5 (limited by analyst) | 10-20 (predefined) | 50+ (including emergent features) |

| Inter-observer Variability | High (15-40% CV) | Low (<5% CV for simple features) | Very Low (<2% CV for complex features) |

| Object Detection Accuracy (F1-score) | ~0.75 (subjective) | ~0.85 (on ideal images) | >0.95 (robust to noise) |

| Primary Limitation | Subjective, fatiguing | Inflexible to new morphologies | Requires large, annotated training sets |
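The variability row in Table 1 is expressed as a coefficient of variation (CV). The sketch below shows how such a figure could be derived from repeated measurements of the same image; the count values are invented purely for illustration and are not data from this article.

```python
import statistics


def coefficient_of_variation(values):
    """Return the CV in percent: sample standard deviation divided by the mean."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100.0 * statistics.stdev(values) / mean


# Hypothetical cell counts for one image, scored five times.
analyst_counts = [100, 120, 80, 110, 90]    # five different human analysts
pipeline_counts = [100, 101, 99, 100, 100]  # five runs of an automated pipeline

cv_manual = coefficient_of_variation(analyst_counts)
cv_auto = coefficient_of_variation(pipeline_counts)
print(f"manual CV = {cv_manual:.1f}%, automated CV = {cv_auto:.1f}%")
```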

Experimental Protocols

4.1. Protocol: Training a CNN for Nuclei Segmentation and Phenotypic Classification

- Objective: To develop a model for segmenting nuclei in live-cell images and classifying them into phenotypic states (e.g., interphase, mitotic, apoptotic).

- Materials: See "Scientist's Toolkit" below.

- Procedure:

- Sample Preparation: Seed U2OS cells in a 96-well imaging plate. Treat with a compound library (e.g., kinase inhibitors) and control agents (nocodazole for mitotic arrest, staurosporine for apoptosis). Incubate for 24h.

- Staining: Stain nuclei with Hoechst 33342 (1 µg/mL) and viability dye (e.g., propidium iodide, 0.5 µM). Incubate for 30 minutes.

- Image Acquisition: Using a high-content confocal imager (e.g., Yokogawa CV8000), acquire 9 fields per well at 20x magnification (Channels: Hoechst, PI).

- Ground Truth Annotation: Manually label ~500 images using a tool like ilastik or Labkit. Draw precise contours around nuclei and assign class labels.

- Model Training: Use a U-Net architecture in a framework like PyTorch or TensorFlow. Split data (70% train, 15% validation, 15% test). Train for 100 epochs using a combined loss (Dice loss + Cross-entropy).

- Validation: Apply model to test set. Calculate metrics: Dice coefficient for segmentation, precision/recall for classification. Deploy model for inference on new screens.
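The combined loss named in the training step can be sketched in plain Python. This is a simplified, binary (foreground vs. background) illustration operating on flat lists of per-pixel probabilities; the protocol's multi-class case would use a categorical cross-entropy instead, and the equal weighting (`dice_weight=0.5`) is an assumption rather than a value given above. In a real pipeline the same arithmetic would be expressed with framework tensors.

```python
import math


def soft_dice_loss(probs, targets, eps=1e-6):
    """1 - Dice coefficient, computed on predicted probabilities."""
    intersection = sum(p * t for p, t in zip(probs, targets))
    denominator = sum(probs) + sum(targets)
    return 1.0 - (2.0 * intersection + eps) / (denominator + eps)


def binary_cross_entropy(probs, targets, eps=1e-7):
    """Mean per-pixel binary cross-entropy, with clipping for stability."""
    total = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)
        total -= t * math.log(p) + (1 - t) * math.log(1.0 - p)
    return total / len(probs)


def combined_loss(probs, targets, dice_weight=0.5):
    """Weighted sum of soft Dice loss and cross-entropy (weight is an assumption)."""
    dice = soft_dice_loss(probs, targets)
    bce = binary_cross_entropy(probs, targets)
    return dice_weight * dice + (1.0 - dice_weight) * bce


targets = [1, 1, 0, 0]              # ground-truth mask, flattened
good_pred = [0.9, 0.8, 0.1, 0.2]    # mostly correct prediction
bad_pred = [0.2, 0.1, 0.9, 0.8]     # mostly wrong prediction

loss_good = combined_loss(good_pred, targets)
loss_bad = combined_loss(bad_pred, targets)
print(f"good: {loss_good:.3f}, bad: {loss_bad:.3f}")
```

Pairing the two terms is a common design choice: the Dice term is less sensitive to the foreground/background imbalance typical of nuclei images, while cross-entropy gives smooth per-pixel gradients.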

4.2. Protocol: Implementing an End-to-End Automated Workflow for Spheroid Analysis

- Objective: To fully automate the culture, treatment, imaging, and analysis of 3D tumor spheroids for drug efficacy screening.

- Procedure:

- Automated Spheroid Formation: Use a liquid handling robot to dispense HCT-116 cells into ultra-low attachment 384-well plates. Centrifuge (500 x g, 10 min) to encourage aggregation.

- Automated Compound Dispensing: On day 3, use a digital dispenser (e.g., Tecan D300e) to transfer nanoliter volumes of drug compounds into each well in a dose-response matrix.

- Automated Imaging: At assay endpoint (day 6), transfer plate to an automated incubator-imager (e.g., Incucyte S3 or ImageXpress Micro Confocal). Acquire z-stacks (4 slices, 20 µm step) using brightfield and fluorescence (Calcein AM for viability, EthD-1 for death).

- Intelligent Analysis: Images are auto-directed to a cloud analysis server.

- Step A: A pre-trained CNN performs 3D spheroid core segmentation from brightfield z-stacks.

- Step B: Fluorescence intensities are quantified within the segmented volume.

- Step C: A regression model predicts spheroid health scores and IC50 values for each compound.

- Data Delivery: Results (dose-response curves, heatmaps) are pushed to a LIMS (Laboratory Information Management System).
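To make the IC50 output of Step C concrete, the sketch below estimates IC50 by log-linear interpolation between the two doses that bracket 50% viability. This is a deliberately simple stand-in, not the regression model described above (a four-parameter logistic fit would be the more usual choice), and the dose and viability values are hypothetical.

```python
import math


def ic50_by_interpolation(concentrations, viabilities):
    """Estimate IC50 from an ascending dose series.

    `viabilities` are fractions of the vehicle control (1.0 = no effect).
    Returns the concentration at which viability crosses 0.5, interpolated
    on a log10 concentration axis, or None if 0.5 is never crossed.
    """
    points = list(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 0.5 >= v_hi and v_lo != v_hi:
            fraction = (v_lo - 0.5) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + fraction * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical spheroid viability (Calcein AM signal relative to control).
doses_um = [0.01, 0.1, 1.0, 10.0, 100.0]
viability = [0.98, 0.90, 0.70, 0.30, 0.05]

ic50 = ic50_by_interpolation(doses_um, viability)
print(f"Estimated IC50 = {ic50:.2f} uM")
```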

Diagrams

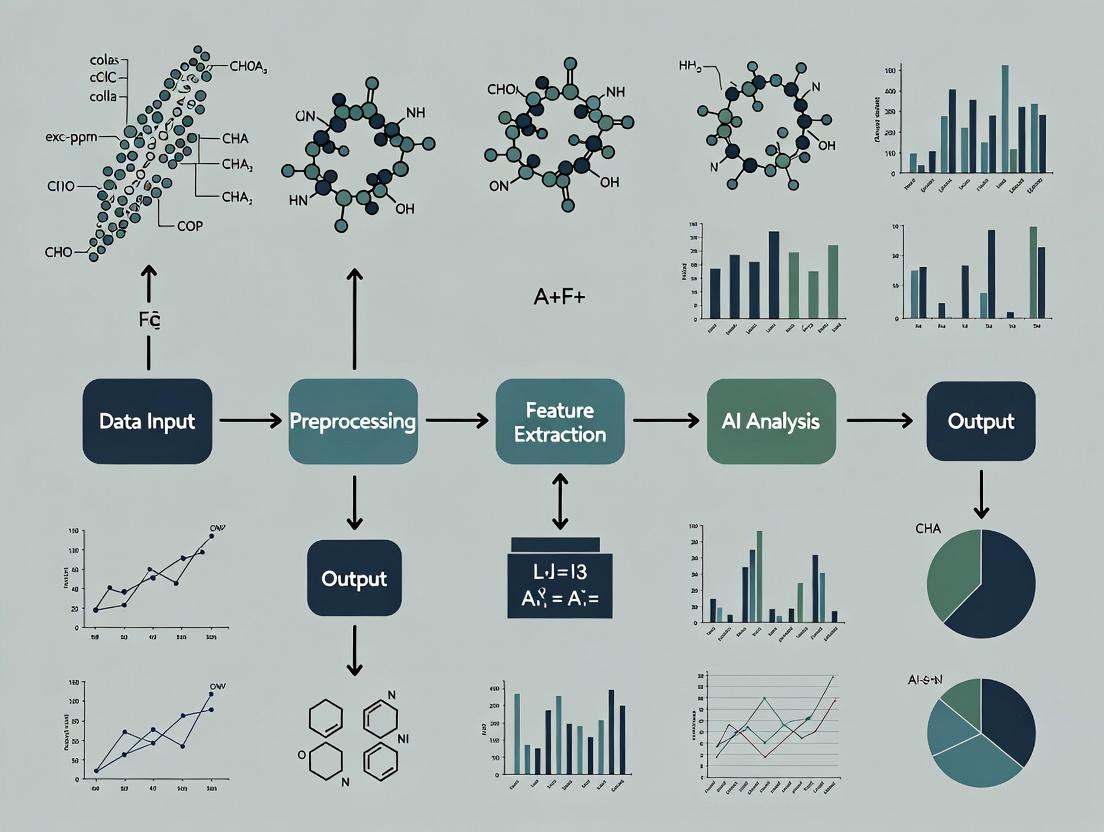

Title: Evolution of Image Analysis Workflow

Title: AI-Driven Image Analysis Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for AI-Driven Image Analysis Experiments

| Item | Function & Rationale |

|---|---|

| Live-Cell Nuclear Dyes (e.g., Hoechst 33342, SiR-DNA) | Enable non-toxic, long-term tracking of nuclei for time-lapse analysis, providing the primary segmentation target for AI models. |

| Viability/Apoptosis Kits (e.g., Annexin V, Caspase-3/7 substrates) | Provide multiplexed fluorescence readouts for cell health, used as ground truth for training AI classifiers to recognize death phenotypes. |

| Multiplex Fluorescence Antibody Panels | Allow simultaneous detection of multiple phospho-proteins or biomarkers in fixed cells, creating rich, high-dimensional image data for AI-based pathway analysis. |

| 3D Culture Matrices (e.g., Basement Membrane Extract) | Support the formation of physiologically relevant organoids/spheroids, whose complex morphology requires advanced 3D AI segmentation models. |

| High-Content Imaging Plates (e.g., µClear black-walled plates) | Optimized for automated microscopy, providing minimal background fluorescence and optical clarity for consistent, high-quality image acquisition. |

| Open-Source Annotation Tools (e.g., QuPath, CellProfiler Annotator) | Critical for generating accurate labeled datasets (ground truth) to train and validate supervised AI models without vendor lock-in. |

| Pre-trained AI Models (e.g., in DeepCell, ZeroCostDL4Mic) | Accelerate workflow development by providing a starting point for segmentation or classification, which can be fine-tuned with user-specific data. |

Application Notes for AI-Driven Optical Data Processing

In the context of optical data processing for research—spanning high-content cellular imaging, spectroscopy analysis, and particulate characterization—Convolutional Neural Networks (CNNs), Generative Adversarial Networks (GANs), and Transfer Learning form a foundational toolkit. Their application accelerates the extraction of quantitative features from complex image data, enables the synthesis of realistic training datasets where experimental data is scarce, and facilitates the adaptation of powerful pre-trained models to niche scientific domains with limited labeled examples.

Core Quantitative Performance Metrics (Summarized from Recent Literature)

Table 1: Comparative Performance of AI Architectures on Benchmark Image Analysis Tasks (2023-2024)

| AI Model Type | Primary Task | Key Metric | Reported Performance | Typical Dataset Size Required |

|---|---|---|---|---|

| Deep CNN (e.g., ResNet-50) | Image Classification (e.g., Cell Phenotyping) | Top-1 Accuracy | 92-98% (on curated bio-image sets) | 10,000 - 100,000 labeled images |

| U-Net (Encoder-Decoder CNN) | Image Segmentation (e.g., Nucleus Detection) | Dice Similarity Coefficient | 0.94 - 0.99 | 500 - 5,000 labeled images |

| Conditional GAN (e.g., pix2pix) | Image-to-Image Translation (e.g., Denoising) | Structural Similarity Index (SSIM) | 0.85 - 0.96 | 1,000 - 10,000 image pairs |

| StyleGAN2/3 | High-Fidelity Image Synthesis | Fréchet Inception Distance (FID) ↓ | 5-15 (lower is better) | 50,000+ images for training |

| Transfer Learning (Fine-tuning) | Adaptation to New Image Modality | % Improvement over Baseline | 15-40% accuracy gain | 100 - 1,000 target-domain images |

Experimental Protocols

Protocol 2.1: Implementing a CNN for High-Content Cell Image Classification

Objective: To automate the classification of cellular phenotypes from fluorescence microscopy images.

Materials: Labeled dataset of cell images (e.g., untreated vs. drug-treated), Python with PyTorch/TensorFlow, GPU workstation.

Procedure:

- Data Preprocessing: Scale all images to a uniform size (e.g., 224x224). Apply augmentation (random rotation, flip, intensity variation). Split data into training (70%), validation (15%), and test (15%) sets.

- Model Selection & Initialization: Select a pre-trained CNN architecture (e.g., ResNet-34). Replace the final fully connected layer to output classes matching your phenotype count (e.g., 2). Initialize new layer weights randomly, others with pre-trained weights.

- Training: Use cross-entropy loss and Adam optimizer. Freeze early convolutional layers for the first 5 epochs, then unfreeze all layers. Train for 50 epochs with batch size 32. Monitor validation loss for early stopping.

- Evaluation: On the held-out test set, calculate accuracy, precision, recall, and F1-score. Generate a confusion matrix.

- Interpretation: Apply Gradient-weighted Class Activation Mapping (Grad-CAM) to visualize image regions most influential to the model's decision.
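The evaluation step can be illustrated without any framework. The sketch below derives a 2x2 confusion matrix and the four listed metrics from label lists; the labels are hypothetical (1 = drug-treated phenotype, 0 = untreated), and in practice a package such as scikit-learn would normally be used instead.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Return confusion matrix [[TN, FP], [FN, TP]] plus summary metrics."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {
        "confusion": [[tn, fp], [fn, tp]],
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical held-out test labels and model predictions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]

metrics = binary_metrics(y_true, y_pred)
print(metrics)
```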

Protocol 2.2: Utilizing a GAN for Synthetic Data Augmentation in Particle Analysis

Objective: To generate synthetic optical microscopy images of particles/cells to augment a small training dataset.

Materials: Small corpus of real particle images (min. ~500), Python with PyTorch/TensorFlow and GAN libraries (e.g., StyleGAN2-ADA), high-VRAM GPU.

Procedure:

- Data Curation: Collect and center-crop all raw images to focus on the particle. A background with minimal variation is ideal. Resize to a standard resolution (e.g., 256x256).

- Model Configuration: Implement a GAN with adaptive discriminator augmentation (ADA) to prevent overfitting on small data. Configure the loss function (e.g., non-saturating logistic loss with R1 regularization).

- Training: Train the generator (G) and discriminator (D) adversarially. For 1000 images, expect training for ~24-48 GPU hours. Monitor FID score on a fixed validation set of real images.

- Synthesis & Validation: After FID plateaus, use G to generate synthetic images. Have a domain expert perform a blinded review to assess realism. Use synthetic data to augment the real training set for a downstream CNN task and measure performance lift.
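The non-saturating logistic loss mentioned in the configuration step can be written out on raw discriminator logits, as sketched below. This is only the loss arithmetic: the R1 penalty needs gradients with respect to the real images and is therefore omitted, and the logit values are hypothetical.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def discriminator_loss(real_logits, fake_logits):
    """Logistic loss: push real logits up and fake logits down."""
    real_term = sum(softplus(-r) for r in real_logits) / len(real_logits)
    fake_term = sum(softplus(f) for f in fake_logits) / len(fake_logits)
    return real_term + fake_term


def generator_loss(fake_logits):
    """Non-saturating form: the generator maximizes log D(G(z))."""
    return sum(softplus(-f) for f in fake_logits) / len(fake_logits)


# Hypothetical discriminator outputs early in training, when fakes are easy to spot.
real_logits = [3.0, 2.5]
fake_logits = [-3.0, -2.0]

d_loss = discriminator_loss(real_logits, fake_logits)
g_loss = generator_loss(fake_logits)
print(f"D loss = {d_loss:.3f}, G loss = {g_loss:.3f}")
```

The non-saturating form keeps the generator's gradient large precisely when the discriminator rejects its samples confidently, which is the regime in which the original minimax loss stalls.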

Protocol 2.3: Transfer Learning in Drug Response Imaging

Objective: To adapt a general-purpose image CNN to predict drug response from specialized time-lapse phase-contrast imaging.

Materials: Pre-trained ImageNet model (e.g., EfficientNet-B2), small labeled dataset of phase-contrast images showing treatment response, GPU resource.

Procedure:

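- Feature Extraction Analysis: Pass your image data through the pre-trained model (without final layer). Use t-SNE to visualize feature embeddings. This indicates whether the pre-trained features already separate your classes; treat the plot as a qualitative check, since t-SNE layouts depend on perplexity and random seed.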

- Progressive Fine-tuning: Remove the original classifier head. Add a new head: Global Average Pooling, Dropout (0.5), Dense layer (one output unit per class). First, train only the new head for 10 epochs. Then, unfreeze and jointly fine-tune the entire model with a very low learning rate (1e-5) for another 20 epochs.

- Domain-Specific Validation: Evaluate on a temporally separated test set (different experiment date). Use metrics relevant to the clinical/bio question (e.g., AUC-ROC for response prediction).
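For the AUC-ROC metric named in the validation step, a minimal sketch is given below. It uses the rank interpretation of AUC (the probability that a randomly chosen responder receives a higher score than a randomly chosen non-responder); the labels and scores are hypothetical, and a library routine would normally replace this quadratic-time loop.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative sample")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical predictions on a test set from a different experiment date.
labels = [1, 1, 1, 0, 0, 0]               # 1 = responder, 0 = non-responder
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]   # model's predicted response probability

auc = roc_auc(labels, scores)
print(f"AUC-ROC = {auc:.3f}")
```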

Visualizations

CNN Workflow for Image Analysis

Adversarial Training in GANs

Transfer Learning Process Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational "Reagents" for AI-Driven Image Analysis

| Item / Solution | Function in Experiment | Example/Note |

|---|---|---|

| Pre-trained Model Weights | Provides a high-quality initialization of feature extractors, drastically reducing data needs and training time. | Models from PyTorch Torchvision, TensorFlow Hub (e.g., ResNet, EfficientNet, VGG). |

| Data Augmentation Library | Artificially expands training dataset diversity by applying realistic transformations, improving model generalization. | Albumentations, Torchvision.transforms (for rotations, flips, noise, contrast shifts). |

| Adaptive Discriminator Augmentation (ADA) | A critical "reagent" for GANs on small data; applies augmentations during training to prevent discriminator overfitting. | Implementation of StyleGAN2-ADA; essential for synthetic data generation in research. |

| Gradient Calculation Framework | Automates backpropagation, enabling the training of deep networks by computing gradients of loss w.r.t. all parameters. | Autograd in PyTorch, GradientTape in TensorFlow. The core "enzyme" of deep learning. |

| Loss Function | Quantifies the discrepancy between model predictions and ground truth, guiding the optimization process. | Cross-Entropy (classification), Dice Loss (segmentation), Wasserstein Loss (GAN training). |

| Optimizer | The algorithm that updates model weights based on calculated gradients to minimize the loss function. | Adam or AdamW are standard; configurable learning rate and momentum. |

| Performance Metrics Package | Provides standardized, reproducible evaluation of model performance beyond basic accuracy. | Scikit-learn (for F1, AUC-ROC), TorchMetrics (for Dice, IoU, PSNR). |

This Application Note provides a practical guide for implementing AI-driven image analysis in optical data processing, specifically within biomedical and pharmaceutical research. It details the protocols and experimental frameworks that merge advanced optical systems with machine learning algorithms to transform raw pixel data into quantitative biological insights, supporting a thesis on scalable, automated image analysis.

Foundational Concepts & Data

Modern AI models, particularly Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), are trained on large datasets of optical images to learn hierarchical feature representations. The performance of these models is benchmarked on standard datasets.

Table 1: Benchmark Performance of AI Models on Key Optical Datasets

| Dataset | Primary Use | Top Model (2023-24) | Reported Accuracy | Key Metric |

|---|---|---|---|---|

| ImageNet-1K | General Object Recognition | ConvNeXt-V2 (H) | 88.9% | Top-1 Accuracy |

| COCO | Object Detection & Segmentation | DINOv2 (ViT-g) | 62.5 AP | Box AP |

| LIVECell | Live-Cell Segmentation | Cellpose 2.0 | 0.85 mAP | Average Precision |

| RxRx1 | High-Content Cell Phenotyping | Self-Supervised ViT | 0.94 AUC | ROC-AUC |

Detailed Experimental Protocols

Protocol 3.1: AI-Assisted High-Content Analysis (HCA) for Drug Screening

Objective: To automate the quantification of cell viability and morphological changes in response to compound libraries.

Materials: See Scientist's Toolkit.

Workflow:

- Sample Preparation:

- Seed HeLa or U2OS cells in 384-well microplates at 2,000 cells/well.

- Incubate for 24h (37°C, 5% CO₂).

- Treat with compound library using a liquid handler (n=4 technical replicates).

- Incubate for 48h.

- Stain with Hoechst 33342 (nuclei), Phalloidin-Alexa Fluor 488 (actin), and SYTOX Red (dead cells).

- Optical Imaging:

- Acquire 16-bit images using a high-content spinning-disk confocal system (e.g., Yokogawa CV8000) with a 20x objective (NA 0.75).

- Capture 9 fields-of-view per well to ensure statistical robustness.

- Use appropriate filter sets for the DAPI (Hoechst), FITC (Alexa Fluor 488), and Cy5 (SYTOX Red, a far-red dye) channels.

- AI-Based Image Analysis:

- Preprocessing: Apply flat-field correction and subtract background per channel. Stitch fields of view per well.

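- Nuclei Segmentation: Input Hoechst channel to a U-Net model pre-trained on fluorescent nuclear-stain images to generate binary masks. (LIVECell contains label-free phase-contrast images, so a model trained on it alone is a poor match for this channel.)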

- Feature Extraction: For each segmented cell, extract 500+ morphological, intensity, and texture features (e.g., area, eccentricity, Haralick features) from all channels.

- Phenotypic Classification: Input feature vector into a Random Forest or a CNN classifier trained on control vs. treated phenotypes.

- Hit Identification: Wells exhibiting a statistically significant (p<0.01, ANOVA) shift in population morphology vs. DMSO controls are flagged.
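The flat-field correction in the preprocessing step can be sketched as follows, assuming the common formulation corrected = (raw - dark) / (flat - dark) x mean(flat - dark). The reference images here are tiny hypothetical 2x2 arrays stored as nested lists; a real pipeline would apply the same arithmetic per channel on full-size arrays.

```python
def flat_field_correct(raw, flat, dark):
    """Divide out uneven illumination using flat-field and dark reference images."""
    # Per-pixel illumination gain after removing the camera offset.
    gain = [[f - d for f, d in zip(f_row, d_row)] for f_row, d_row in zip(flat, dark)]
    n_pixels = sum(len(row) for row in gain)
    mean_gain = sum(sum(row) for row in gain) / n_pixels

    corrected = []
    for raw_row, dark_row, gain_row in zip(raw, dark, gain):
        corrected.append([
            (r - d) / g * mean_gain if g > 0 else 0.0
            for r, d, g in zip(raw_row, dark_row, gain_row)
        ])
    return corrected


# Hypothetical references: the right-hand column receives half the illumination.
dark = [[10, 10], [10, 10]]
flat = [[110, 60], [110, 60]]
# A uniformly bright sample imaged under that uneven illumination.
raw = [[210, 110], [210, 110]]

corrected = flat_field_correct(raw, flat, dark)
print(corrected)
```

After correction the uniform sample reads the same value in every pixel, which is the property that makes intensity features comparable across fields of view.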

Protocol 3.2: Super-Resolution Reconstruction via Deep Learning

Objective: To generate super-resolution images from diffraction-limited inputs using a Generative Adversarial Network (GAN).

Workflow:

- Data Pair Acquisition:

- Image a fixed biological sample (e.g., microtubules) using both a standard confocal microscope (xy-resolution: ~250 nm) and a STORM/PALM super-resolution system (xy-resolution: ~20 nm).

- Precisely align the image pairs using image registration (e.g., landmark-based alignment, or intensity-based phase correlation for translational offsets).

- Model Training:

- Train an SRGAN model where the low-resolution confocal image is the input and the corresponding STORM image is the target.

- Use a loss function combining Mean Squared Error (MSE) and a perceptual (VGG) loss.

- Train for 50,000 iterations using the Adam optimizer (lr=1e-4).

- Validation & Application:

- Validate on a held-out test set using the Structural Similarity Index (SSIM); target SSIM > 0.85.

- Apply the trained model to new confocal-only data to infer sub-diffraction structural details.
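To show what the SSIM validation target measures, the sketch below computes a single-window ("global") SSIM over whole images. This is a simplification: the standard metric averages SSIM over local sliding windows, so values from this function are not directly comparable to library output. The 2x2 images are hypothetical.

```python
def global_ssim(img_a, img_b, data_range=255.0):
    """Single-window SSIM computed over all pixels of two same-sized images."""
    a = [v for row in img_a for v in row]
    b = [v for row in img_b for v in row]
    n = len(a)

    mu_a = sum(a) / n
    mu_b = sum(b) / n
    var_a = sum((v - mu_a) ** 2 for v in a) / n
    var_b = sum((v - mu_b) ** 2 for v in b) / n
    cov = sum((x - mu_a) * (y - mu_b) for x, y in zip(a, b)) / n

    # Stabilizing constants from the original SSIM definition.
    c1 = (0.01 * data_range) ** 2
    c2 = (0.03 * data_range) ** 2

    numerator = (2 * mu_a * mu_b + c1) * (2 * cov + c2)
    denominator = (mu_a ** 2 + mu_b ** 2 + c1) * (var_a + var_b + c2)
    return numerator / denominator


reference = [[0, 50], [100, 200]]                        # stand-in for a STORM target
inverted = [[255 - v for v in row] for row in reference]  # a deliberately bad reconstruction

ssim_same = global_ssim(reference, reference)
ssim_bad = global_ssim(reference, inverted)
print(f"identical: {ssim_same:.3f}, inverted: {ssim_bad:.3f}")
```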

Visualization: Pathways and Workflows

Diagram 1: AI-Driven Image Analysis Workflow

Diagram 2: CNN Architecture for Phenotype Classification

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function/Benefit | Example Product/Catalog |

|---|---|---|

| High-Content Imaging Plates | Optically clear, black-walled plates for minimal crosstalk and high SNR. | Corning #4514 (384-well) |

| Live-Cell Fluorescent Dyes | Vital stains for multiplexed, dynamic tracking of cellular structures. | Thermo Fisher H21492 (Hoechst), I34057 (Phalloidin) |

| Automated Liquid Handler | Ensures precise, reproducible compound dosing for screening assays. | Beckman Coulter Biomek i7 |

| Cell Painting Assay Kit | Standardized dye cocktail for profiling morphological phenotypes. | Revvity #D10014 |

| Pre-trained AI Models | Accelerates deployment by providing baseline segmentation/classification. | Cellpose 2.0, StarDist |

| GPU Computing Resource | Enables rapid training and inference of deep learning models. | NVIDIA RTX A6000 (48GB VRAM) |

| Image Analysis Software SDK | Allows custom pipeline development and integration of AI models. | Python (PyTorch, TensorFlow), Napari |

Application Notes

AI-Driven Integration of Multi-Modal Optical Data

The convergence of AI with optical imaging modalities is revolutionizing biomedical research and drug development. By processing and correlating diverse data types, AI models can extract complex, high-dimensional phenotypic signatures, accelerating the path from discovery to clinical application.

Table 1: Core Characteristics of Key Optical Data Types in AI Pipelines

| Data Type | Primary Scale | Key AI Analysis Tasks | Typical Data Volume per Sample | Common File Formats |

|---|---|---|---|---|

| Microscopy | Subcellular to Cellular | Segmentation, Object Tracking, Super-Resolution, Denoising | 100 MB – 10 GB | .TIFF, .ND2, .CZI, .LSM |

| Histopathology | Tissue to Organ | Whole Slide Image (WSI) Classification, Tumor Detection, Prognostic Scoring | 1 GB – 20 GB | .SVS, .MRXS, .TIFF |

| High-Content Screening (HCS) | Cellular | Multiparametric Feature Extraction, Phenotypic Profiling, Hit Identification | 10 MB – 5 GB per well | .TIFF, .H5, Assay-specific |

| In Vivo Imaging | Whole Organism | Biomarker Quantification, 3D Reconstruction, Longitudinal Tracking | 50 MB – 50 GB per timepoint | DICOM (.dcm), NIfTI (.nii), .RAW |

Table 2: AI Model Performance Benchmarks on Representative Public Datasets

| Dataset (Modality) | AI Task | Top Model Architecture | Reported Metric (Score) | Key Challenge Addressed |

|---|---|---|---|---|

| Camelyon16 (Histo) | Metastasis Detection | CNN (ResNet-50) | AUC (0.994) | Large WSI analysis |

| BBBC021 (HCS) | Phenotype Classification | U-Net + Feature Analysis | F1-Score (0.92) | Multiparametric cell profiling |

| Cell Tracking Challenge (Micro) | Segmentation & Tracking | StarDist + TrackMate | SEG Score (0.85) | Dynamic subcellular events |

| TCIA (In Vivo, MRI) | Tumor Segmentation | 3D U-Net | Dice Coefficient (0.89) | 3D volumetric analysis |

Key Applications in Drug Development

- Target Identification & Validation: HCS and microscopy, analyzed by AI, enable genome-wide phenotypic screening, linking genetic perturbations to cellular morphology.

- Lead Optimization & Toxicology: AI analysis of histopathology and HCS data predicts compound efficacy and off-target toxicities from organoid and tissue models.

- Preclinical In Vivo Studies: AI automates the quantification of tumor burden, metabolic activity, and biomarker expression from longitudinal in vivo imaging, reducing bias.

- Biomarker Discovery: AI models correlate in vitro HCS profiles with in vivo imaging and histopathological outcomes to identify novel digital biomarkers.

Experimental Protocols

Protocol 1: AI-Enabled High-Content Screening for Phenotypic Drug Discovery

Objective: To identify compounds inducing a target cellular phenotype using high-content imaging and an AI-based analysis pipeline.

Materials:

- Cell line: U2OS osteosarcoma cells expressing a fluorescent fusion protein of interest.

- Instrument: Confocal or widefield high-content microscope with environmental control.

- Reagents: Compound library (e.g., 1,280-compound LOPAC), assay-specific dyes (Hoechst 33342, CellMask), cell culture media.

- Software: CellProfiler, DeepCell (or equivalent), custom Python scripts with PyTorch/TensorFlow.

Procedure:

- Plate Cells & Treat: Seed U2OS cells in 384-well optical plates at 2,000 cells/well. After 24h, treat with compounds from the library (1 µM final concentration), including DMSO vehicle and known phenotypic control compounds. Incubate for 48h.

- Stain & Fix: Stain live cells with Hoechst 33342 (nuclei) and CellMask Deep Red (cytosol). Fix cells with 4% PFA for 15 minutes.

- Image Acquisition: Using a 40x objective, acquire 9 fields per well in the DAPI (nuclei), FITC (target protein), and Cy5 (cytosol) channels. Maintain consistent exposure across plates.

- AI-Based Image Analysis:

- Preprocessing: Flat-field correct and stitch images per well.

- Segmentation: Input the DAPI channel into a pre-trained U-Net model to generate nuclear masks. Use these to seed a second model (e.g., Cellpose) for whole-cell segmentation using the cytosol channel.

- Feature Extraction: For each segmented cell, extract ~1,000 morphological, intensity, and texture features from all channels (using CellProfiler or deep feature embeddings).

- Phenotype Classification: Train a Random Forest or shallow neural network classifier on features from control wells to recognize the desired phenotype. Apply the classifier to all compound-treated wells.

- Hit Identification: Rank compounds by the Z-score of the percentage of cells predicted to exhibit the target phenotype, computed relative to the DMSO control wells. Select hits with Z > 3 and a dose-response confirmation.
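The hit-ranking step can be sketched with the standard library alone. The compound names and percentages below are hypothetical; each value is the percentage of cells in a well that the classifier assigned to the target phenotype, and the Z-score is taken against the mean and sample standard deviation of the DMSO wells.

```python
import statistics


def hit_z_scores(compound_pct, dmso_pct):
    """Z-score each compound's phenotype percentage against DMSO controls."""
    mu = statistics.mean(dmso_pct)
    sigma = statistics.stdev(dmso_pct)
    if sigma == 0:
        raise ValueError("DMSO controls show no variation; Z-score is undefined")
    return {name: (pct - mu) / sigma for name, pct in compound_pct.items()}


# Hypothetical percentages of phenotype-positive cells per well.
dmso_pct = [4.0, 5.0, 6.0, 5.0, 5.0, 4.0, 6.0, 5.0]
compound_pct = {"cmpd_A": 5.5, "cmpd_B": 32.0, "cmpd_C": 9.0}

z = hit_z_scores(compound_pct, dmso_pct)

# Keep compounds above the Z > 3 threshold, strongest first.
hits = sorted((name for name, score in z.items() if score > 3.0),
              key=lambda name: z[name], reverse=True)
print(hits)
```

Compounds passing this threshold would still need the dose-response confirmation the protocol calls for before being treated as hits.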

Protocol 2: Correlative In Vivo–Ex Vivo Histopathology Analysis via AI Registration

Objective: To spatially align in vivo imaging data with high-resolution histopathology for ground-truth validation of imaging biomarkers.

Materials:

- Animal Model: Mouse xenograft model.

- Imaging Systems: In vivo micro-CT or MRI system, whole slide scanner.

- Reagents: Perfusion fixation setup (PBS, 10% formalin), paraffin, H&E staining reagents.

- Software: 3D Slicer, QuPath, Elastix (or ANTs) registration toolbox.

Procedure:

- In Vivo Imaging: Anesthetize the mouse and acquire a 3D volumetric scan (e.g., micro-CT) with high spatial resolution. Administer a contrast agent if necessary.

- Tissue Processing: Euthanize the animal and perfuse with formalin. Resect the tumor/tissue of interest and place in formalin for 24h fixation. Process, embed in paraffin, and section at 5 µm thickness. Perform H&E staining.

- Digital Histopathology: Scan the entire H&E slide using a 20x objective on a whole slide scanner.

- AI-Driven Registration Workflow:

- Histology Preprocessing: Use a tissue-detection model in QuPath (e.g., a trained pixel classifier or a pre-trained CNN) to identify and mask out non-tissue regions on the WSI.

- 3D Reconstruction from In Vivo Data: Segment the organ/tumor from the in vivo scan using a 3D U-Net in 3D Slicer. Generate a 2D maximum intensity projection (MIP) plane that best approximates the histology sectioning plane.

- Multi-Modal Registration: Employ a multi-stage deformable registration algorithm (e.g., Elastix). Use mutual information as the similarity metric to align the in vivo MIP image (moving image) to the H&E WSI (fixed image). The AI-generated tissue mask constrains the registration to relevant areas.

- Validation & Analysis: Manually annotate key histological structures (e.g., necrotic cores, invasive fronts) on the WSI. Overlay these annotations onto the registered in vivo image to validate the accuracy of in vivo-derived radiomic features.
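Mutual information, the similarity metric named in the registration step, can be computed from a joint intensity histogram as sketched below. The bin count, the 0-255 intensity range, and the toy images are assumptions for illustration; registration toolkits such as Elastix use their own, more refined estimators.

```python
import math
from collections import Counter


def mutual_information(img_a, img_b, bins=8, max_value=256):
    """Mutual information (in nats) between two same-sized grayscale images."""
    a = [v for row in img_a for v in row]
    b = [v for row in img_b for v in row]
    width = max_value / bins

    # Quantize intensities into histogram bins.
    qa = [min(int(v // width), bins - 1) for v in a]
    qb = [min(int(v // width), bins - 1) for v in b]
    n = len(qa)

    joint = Counter(zip(qa, qb))
    marginal_a = Counter(qa)
    marginal_b = Counter(qb)

    mi = 0.0
    for (i, j), count in joint.items():
        p_ij = count / n
        mi += p_ij * math.log(p_ij / ((marginal_a[i] / n) * (marginal_b[j] / n)))
    return mi


# Toy example: the "moving" image has inverted contrast, as different modalities often do.
fixed = [[0, 0, 200, 200], [0, 0, 200, 200]]
moving_aligned = [[220, 220, 30, 30], [220, 220, 30, 30]]
moving_shifted = [[220, 30, 220, 30], [220, 30, 220, 30]]

mi_aligned = mutual_information(fixed, moving_aligned)
mi_shifted = mutual_information(fixed, moving_shifted)
print(f"aligned: {mi_aligned:.3f}, misaligned: {mi_shifted:.3f}")
```

The aligned pair scores high even though bright and dark are swapped, which is why mutual information suits multi-modal registration better than direct intensity differences.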

Diagrams

Title: AI-Powered High-Content Screening Analysis Workflow

Title: Correlative In Vivo to Histology AI Registration Pipeline

The Scientist's Toolkit

Table 3: Essential Research Reagents & Tools for AI-Driven Optical Analysis

| Item | Function in AI Workflow | Example Product/Model |

|---|---|---|

| Live-Cell Fluorescent Dyes | Generate specific, quantifiable signals for AI segmentation and tracking. | CellTracker Green CMFDA, Hoechst 33342, MitoTracker Deep Red |

| Antibodies for Multiplex Imaging | Enable high-plex biomarker detection for complex phenotype classification. | Opal Polymer IHC/IF kits, Akoya CODEX reagents |

| AI-Ready Cell Lines | Express consistent fluorescent markers (e.g., H2B-GFP) for training models. | FUCCI cell lines, Thermo Fisher CellLight reagents |

| 3D Tissue Culture Matrices | Provide physiologically relevant contexts for HCS and AI model training. | Corning Matrigel, Cultrex BME 2 |

| Multi-Modal Contrast Agents | Enhance in vivo imaging signals for robust AI segmentation. | Luminescence probes (IVIS), Gd-based MRI agents, Micro-CT iodinated agents |

| Open-Source AI Platforms | Provide pre-trained models and pipelines for image analysis. | CellProfiler, Ilastik, DeepCell, ZeroCostDL4Mic |

| High-Performance Computing Storage | Manage massive datasets (WSI, 3D volumes) for efficient AI training. | NVMe SSDs, Scalable NAS (e.g., Synology) |

The Critical Role of Annotated Datasets in Biomedical AI

The efficacy of AI models in biomedical image analysis is fundamentally constrained by the quality, scale, and biological fidelity of their training data. Within optical data processing research—encompassing modalities like whole-slide imaging (WSI), live-cell microscopy, and multiplexed immunofluorescence—annotated datasets serve as the critical substrate for teaching models to discern biologically relevant patterns from complex, high-dimensional data. This document outlines application notes and protocols for the creation and utilization of annotated datasets, a cornerstone for advancing thesis research in predictive phenotyping and therapeutic response analysis.

Quantitative Landscape: Current Datasets and Performance Metrics

Table 1: Representative Publicly Available Annotated Biomedical Image Datasets

| Dataset Name | Modality | Primary Annotation Type | Approx. Volume | Key Application | Common Model Performance (F1-Score)* |

|---|---|---|---|---|---|

| The Cancer Genome Atlas (TCGA) | Whole-Slide Images (WSI) | Tumor region, histological subtype | >30,000 slides | Cancer diagnosis, stratification | 0.87 - 0.92 |

| Human Protein Atlas (HPA) Image Data | Immunofluorescence Microscopy | Protein subcellular localization | ~13 million cells | Spatial proteomics, cell state classification | 0.89 - 0.95 |

| Image Data Resource (IDR) | High-Content Screening (HCS) | Phenotypic profiles, siRNA/compound treatment | ~100+ studies | Drug discovery, phenotype mapping | 0.78 - 0.85 |

| LIVECell | Phase-Contrast Microscopy | Instance segmentation (cell boundaries) | ~1.6M cells | Live-cell tracking, proliferation assays | 0.83 - 0.88 |

| MitoEM | Electron Microscopy | Instance segmentation (mitochondria) | 2 volumes of ~1,000 x 4,096 x 4,096 voxels | Ultrastructural analysis, connectomics | 0.91 - 0.94 |

*Performance range reflects top-cited models (e.g., ResNet, U-Net variants) on respective test sets as of recent literature.

Experimental Protocols for Dataset Creation and Validation

Protocol 3.1: Multi-Expert Annotation for Histopathology WSIs

- Objective: Generate high-fidelity annotations for tumor microenvironment segmentation.

- Materials: Digital slide scanner, WSI management platform (e.g., QuPath, HALO), expert pathologist panel (≥3).

- Procedure:

- Slide Curation: Select WSIs from biobanked tissues representing disease spectrum and controls.

- Pre-annotation: Use a pre-trained model to generate initial segmentation masks (e.g., tumor vs. stroma) to expedite review.

- Blinded Multi-Expert Review: Each pathologist independently reviews and corrects pre-annotations using a standardized digital tool.

- Consensus Generation: Annotations are aggregated. Pixels labeled identically by ≥2 experts are accepted. Discrepant regions are discussed in a consensus meeting.

- Ground Truth Synthesis: Final consensus annotation is synthesized as the ground truth mask.

- Quality Control (QC): A fourth, senior pathologist reviews a random 10% of consensus masks.
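The consensus rule in this protocol (accept a pixel label when at least two experts agree, otherwise send it to discussion) can be sketched as below. The label codes and the three 2x2 expert masks are hypothetical. With exactly three experts and a threshold of two, at most one label can qualify per pixel; larger panels would need an explicit tie-breaking rule.

```python
from collections import Counter


def consensus_mask(expert_masks, min_agree=2):
    """Merge per-expert label masks into a consensus mask.

    Returns (consensus, disputed): consensus holds the agreed label or None,
    and disputed lists the (row, col) pixels that need a consensus meeting.
    """
    n_rows = len(expert_masks[0])
    n_cols = len(expert_masks[0][0])
    consensus = [[None] * n_cols for _ in range(n_rows)]
    disputed = []

    for r in range(n_rows):
        for c in range(n_cols):
            votes = Counter(mask[r][c] for mask in expert_masks)
            label, count = votes.most_common(1)[0]
            if count >= min_agree:
                consensus[r][c] = label
            else:
                disputed.append((r, c))
    return consensus, disputed


# Hypothetical label codes: 0 = background, 1 = tumor, 2 = stroma.
expert_a = [[1, 1], [0, 2]]
expert_b = [[1, 2], [0, 0]]
expert_c = [[1, 0], [0, 1]]

consensus, disputed = consensus_mask([expert_a, expert_b, expert_c])
print(consensus, disputed)
```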

Protocol 3.2: Temporal Annotation for Live-Cell Imaging Data

- Objective: Create track annotations for single-cell behavior analysis (division, death, motility).

- Materials: High-content live-cell imager, CO₂ incubator, cell culture reagents, tracking software (e.g., TrackMate, CellProfiler).

- Procedure:

- Experimental Setup: Seed cells in a 96-well imaging plate. Treat with compounds/controls.

- Image Acquisition: Program imager for multi-position, time-lapse acquisition (e.g., every 30 mins for 72h).

- Automated Pre-tracking: Apply segmentation (e.g., Cellpose) to each frame to detect cell instances.

- Linking & Manual Correction: Use nearest-neighbor algorithms to generate tracklets. Manually correct linking errors, division events, and cell deaths using the software's correction interface.

- Event Labeling: Annotate frames for key events: mitosis, apoptosis (blebbing), and morphological changes.

- Metadata Association: Link each track to experimental metadata (well ID, treatment, concentration).
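The nearest-neighbor linking step above can be illustrated with a small greedy linker between two consecutive frames. This is a sketch under stated assumptions: detections are given as centroid coordinates in pixels, `max_dist` is a hypothetical gating distance, and production trackers such as TrackMate use more elaborate cost functions.

```python
import numpy as np

def link_nearest_neighbor(prev_xy, next_xy, max_dist=20.0):
    """Greedy nearest-neighbor linking between two consecutive frames.

    Returns sorted (index_in_prev, index_in_next) pairs. Each detection is
    used at most once, and pairs farther apart than `max_dist` are never linked.
    """
    prev_xy = np.asarray(prev_xy, dtype=float)
    next_xy = np.asarray(next_xy, dtype=float)
    if len(prev_xy) == 0 or len(next_xy) == 0:
        return []
    # Pairwise Euclidean distances, shape (n_prev, n_next)
    dist = np.linalg.norm(prev_xy[:, None, :] - next_xy[None, :, :], axis=2)
    candidates = sorted(
        (dist[i, j], i, j)
        for i in range(dist.shape[0])
        for j in range(dist.shape[1])
        if dist[i, j] <= max_dist
    )
    used_prev, used_next, links = set(), set(), []
    for _, i, j in candidates:           # closest pairs are claimed first
        if i not in used_prev and j not in used_next:
            links.append((i, j))
            used_prev.add(i)
            used_next.add(j)
    return sorted(links)

frame_t = [(10, 10), (50, 50)]
frame_t1 = [(52, 49), (11, 12), (200, 200)]
links = link_nearest_neighbor(frame_t, frame_t1)
unlinked_next = sorted(set(range(len(frame_t1))) - {j for _, j in links})
```

Detections left in `unlinked_next` are candidates for new tracks, such as cells entering the field or daughters after a division, and are the cases most likely to need manual correction.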

Visualizing Workflows and Dependencies

(Title: AI Development Pipeline for Biomedical Imaging)

(Title: Annotation Quality Dictates Model Performance)

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Advanced Biomedical Image Annotation

| Item / Reagent | Function in Annotation & AI Workflow |

|---|---|

| Digital Pathology Platform (e.g., QuPath, HALO) | Open-source/commercial software for visualizing, annotating, and quantitatively analyzing WSIs. Enables ROI marking, cell segmentation, and biomarker scoring. |

| High-Content Analysis Software (e.g., CellProfiler, Harmony) | Automates feature extraction from millions of cells in HCS images. Critical for generating phenotypic profiles used as annotations for ML models. |

| Generalist AI Models (e.g., Cellpose, Segment Anything Model - SAM) | Pre-trained models for zero-shot or promptable segmentation of cells/nuclei. Used for rapid pre-annotation to accelerate expert review cycles. |

| Annotation Collaboration Tool (e.g., CVAT, Labelbox) | Cloud-based platform to manage annotation projects, distribute tasks among experts, perform QC, and maintain version control for datasets. |

| Data Versioning System (e.g., DVC, Delta Lake) | Tracks changes to datasets, models, and code together. Ensures reproducibility and lineage tracking in AI research pipelines. |

| Standardized DICOM / OME-TIFF Formats | Interoperable file formats that preserve rich metadata (instrument settings, stains) alongside pixel data, crucial for model input consistency. |

From Pixels to Insights: Methodologies and Real-World Applications

Within the broader thesis on AI-driven image analysis for optical data processing in biomedical research, this document outlines the integrated pipeline from raw image capture to AI model inference. This workflow is critical for applications in high-content screening, phenotypic drug discovery, and quantitative cell biology, where reproducibility and data integrity are paramount.

Image Acquisition Protocols

High-Content Screening (HCS) Image Capture

Objective: To acquire consistent, high-fidelity multichannel cellular images.

Protocol:

- Plate Preparation: Seed cells in a 96-well or 384-well optical-bottom microplate. Apply compounds or controls following an established randomization layout to minimize plate-edge effects.

- Microscope Setup: Use an automated epifluorescence or confocal high-content microscope. Perform daily calibration using fluorescent calibration slides (e.g., TetraSpeck beads) to align channels and check intensity uniformity.

- Acquisition Parameters:

- Set exposure times for each channel (DAPI, FITC, TRITC, Cy5) using negative and positive control wells to avoid saturation.

- Define a z-stack range (e.g., 5 slices at 2µm intervals) for 3D cell models.

- Set autofocus using a dedicated laser-based or software-based method per imaging site.

- Acquire a minimum of 9 non-overlapping fields per well at 20x magnification to ensure statistical robustness.

- Metadata Logging: Automatically save all acquisition parameters (exposure, objective, binning, timestamp) within the image file header (e.g., OME-TIFF format).

Live-Cell Imaging for Kinetic Assays

Objective: To capture temporal dynamics of cellular processes.

Protocol:

- Environmental Control: Maintain the microscope stage-top incubator at 37°C, 5% CO₂, and high humidity for >1 hour prior to imaging for stability.

- Viability Control: Include a cell-health indicator dye (e.g., Cytoplasm-Selective Membrane-Permeant Dye) in control wells.

- Timelapse Setup: Define total experiment duration (e.g., 72h) and interval (e.g., 30 minutes). Use phase-contrast and a single fluorescent channel to minimize phototoxicity.

- Focus Maintenance: Employ hardware autofocus or software-based focus drift compensation at each interval.

Image Preprocessing & Quality Control

Raw images require standardization before analysis.

Preprocessing Workflow Protocol

- Flat-Field Correction: Apply to correct for uneven illumination.

- Input: Raw image I_raw, flat-field image F (from a uniform fluorophore), dark-field image D.

- Calculation: I_corrected = (I_raw - D) / (F - D)

- Perform per channel, per imaging session.

- Background Subtraction: Use a rolling-ball or top-hat filter (radius = 50 pixels) to remove diffuse background signal.

- Channel Alignment (Registration): If channels are misaligned due to filter wheel shift, compute cross-correlation using calibration bead images and apply affine transformation.

- Stitching & Tiling: For large fields, stitch adjacent image tiles using feature-matching algorithms (e.g., SIFT).

- Z-Stack Projection: For 3D acquisitions, perform a maximum intensity projection to create a 2D composite for segmentation, or retain the stack for 3D analysis.
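Two of the preprocessing steps above, flat-field correction and maximum intensity projection, reduce to a few array operations. The sketch below follows the formula I_corrected = (I_raw - D) / (F - D); the function names and the small `eps` guard against division by zero are assumptions added for illustration.

```python
import numpy as np

def flat_field_correct(raw, flat, dark, eps=1e-6):
    """Apply I_corrected = (I_raw - D) / (F - D) to one channel."""
    raw, flat, dark = (np.asarray(a, dtype=np.float64) for a in (raw, flat, dark))
    gain = flat - dark
    gain = np.where(gain > eps, gain, eps)   # avoid dividing by zero in dead pixels
    return (raw - dark) / gain

def max_intensity_projection(stack):
    """Collapse a (z, y, x) stack to 2D by taking the per-pixel maximum over z."""
    return np.asarray(stack).max(axis=0)

# Synthetic check: a uniform sample imaged under uneven illumination
dark = np.full((2, 2), 10.0)
flat = np.array([[110.0, 60.0], [90.0, 110.0]])
raw = dark + 0.5 * (flat - dark)             # true signal is 0.5 everywhere
corrected = flat_field_correct(raw, flat, dark)

z_stack = np.array([[[1, 5]], [[3, 2]]])     # two z-slices of a 1x2 image
mip = max_intensity_projection(z_stack)
```

The corrected image is expressed as a fraction of the flat-field signal; some pipelines multiply by the mean of (F - D) afterwards to restore the original intensity scale.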

Automated Quality Control (QC) Protocol

Implement a QC step to flag failed acquisitions.

- Extract metrics: Focus scores (using gradient-based methods), intensity distribution, signal-to-noise ratio (SNR), and contamination artifacts.

- Flag images where:

- Focus score < 0.5 (normalized 0-1).

- Total intensity deviates >3 standard deviations from plate median.

- SNR < 5.

- Exclude flagged images from downstream training or trigger re-acquisition.
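The flagging rules above can be expressed as a small filter over per-image metrics. This is a minimal sketch: it assumes the focus score, total intensity, and SNR have already been computed upstream, and the dictionary keys, the use of the plate-wide population standard deviation, and the function name are hypothetical.

```python
import statistics

def flag_failed_images(metrics, min_focus=0.5, max_sigma=3.0, min_snr=5.0):
    """Return {image_id: [reasons]} for images that fail any QC rule.

    metrics: list of dicts with keys 'image_id', 'focus' (normalized 0-1),
    'total_intensity', and 'snr', all from the same plate.
    """
    intensities = [m["total_intensity"] for m in metrics]
    plate_median = statistics.median(intensities)
    plate_sd = statistics.pstdev(intensities)
    flagged = {}
    for m in metrics:
        reasons = []
        if m["focus"] < min_focus:
            reasons.append("low_focus")
        if plate_sd > 0 and abs(m["total_intensity"] - plate_median) > max_sigma * plate_sd:
            reasons.append("intensity_outlier")
        if m["snr"] < min_snr:
            reasons.append("low_snr")
        if reasons:
            flagged[m["image_id"]] = reasons
    return flagged

# Twenty synthetic images, three of which break one rule each
metrics = [
    {"image_id": f"img{i:02d}", "focus": 0.8, "total_intensity": 1000.0, "snr": 12.0}
    for i in range(20)
]
metrics[3]["focus"] = 0.3
metrics[7]["total_intensity"] = 5000.0
metrics[11]["snr"] = 2.0
flagged = flag_failed_images(metrics)
```

Because the outlier itself inflates the plate standard deviation, a robust spread estimate such as the median absolute deviation may be preferable on small plates.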

AI Model Pipeline for Image Analysis

Model Training Protocol for Cell Segmentation

Objective: Train a U-Net model to segment nuclei and cytoplasm.

- Data Preparation:

- Use 500 preprocessed images with corresponding manually-annotated ground truth masks.

- Split data: 70% training, 15% validation, 15% test.

- Apply on-the-fly augmentation: random rotations (±15°), horizontal/vertical flips, minor intensity variations (±10%).

- Model Architecture: Use a standard U-Net with an EfficientNet-B3 encoder, pre-trained on ImageNet.

- Training:

- Loss Function: Combined Dice loss and Binary Cross-Entropy.

- Optimizer: AdamW with a learning rate of 1e-4.

- Batch size: 8, trained for 100 epochs with early stopping.

- Hardware: Single NVIDIA A100 GPU.

- Validation: Monitor validation Dice coefficient. Deploy model only if Dice > 0.92 on the held-out test set.
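The combined Dice and binary cross-entropy objective, and the Dice coefficient used as the deployment gate, can be written out in NumPy to make the arithmetic explicit. This is an illustrative sketch only: training would use a deep learning framework's differentiable equivalents, and the equal 0.5/0.5 weighting is an assumption, since the protocol does not state the weights.

```python
import numpy as np

def dice_coefficient(pred, target, eps=1e-7):
    """Dice = 2|A ∩ B| / (|A| + |B|) for binary masks or probabilities."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    intersection = (pred * target).sum()
    return (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)

def binary_cross_entropy(prob, target, eps=1e-7):
    """Mean binary cross-entropy between predicted probabilities and labels."""
    prob = np.clip(np.asarray(prob, dtype=np.float64), eps, 1.0 - eps)
    target = np.asarray(target, dtype=np.float64)
    return float(-(target * np.log(prob) + (1.0 - target) * np.log(1.0 - prob)).mean())

def combined_loss(prob, target, w_dice=0.5, w_bce=0.5):
    """Weighted sum of Dice loss (1 - Dice) and BCE; weights are assumed."""
    return w_dice * (1.0 - dice_coefficient(prob, target)) + w_bce * binary_cross_entropy(prob, target)

target = np.array([[1, 1], [0, 0]])
partial = np.array([[1, 0], [0, 0]])      # predicted mask misses one foreground pixel
dice_partial = dice_coefficient(partial, target)
loss_perfect = combined_loss(target, target)
passes_gate = dice_partial > 0.92         # deployment threshold from the protocol
```

In this toy case the partial mask scores a Dice of about 0.67, well below the 0.92 gate.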

Inference & Feature Extraction Protocol

- Batch Inference: Apply the trained model to new preprocessed images in batches using a dedicated inference server.

- Feature Extraction: For each segmented cell, extract ~1,000 morphological, intensity-based, and texture features (e.g., area, eccentricity, mean intensity, Haralick features).

- Data Export: Save features as a .csv file linked to the original image metadata and segmentation masks.
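A reduced version of the per-cell feature extraction and CSV export might look like the sketch below. It computes only a handful of features (area, centroid, mean intensity) rather than the roughly 1,000 described above; the column names and the `image_id` value are hypothetical, and libraries such as scikit-image provide far richer region measurements.

```python
import csv
import io
import numpy as np

def extract_features(label_mask, intensity, image_id):
    """One row of basic features per labeled cell (label 0 is background)."""
    rows = []
    for cell_id in np.unique(label_mask):
        if cell_id == 0:
            continue
        ys, xs = np.nonzero(label_mask == cell_id)
        rows.append({
            "image_id": image_id,          # links the row back to image metadata
            "cell_id": int(cell_id),
            "area_px": int(ys.size),
            "centroid_y": float(ys.mean()),
            "centroid_x": float(xs.mean()),
            "mean_intensity": float(intensity[ys, xs].mean()),
        })
    return rows

def write_csv(rows, handle):
    """Write feature rows to any file-like object as CSV with a header."""
    writer = csv.DictWriter(handle, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)

labels = np.array([[1, 1, 0], [0, 2, 2], [0, 2, 2]])
image = np.arange(9, dtype=float).reshape(3, 3)
rows = extract_features(labels, image, image_id="plateA_B02_f1")
buffer = io.StringIO()
write_csv(rows, buffer)
csv_text = buffer.getvalue()
```

Keeping `image_id` and `cell_id` in every row is what allows the table to be joined back to acquisition metadata and to the segmentation mask.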

Performance Data & Benchmarks

The following tables summarize quantitative results from implementing the above workflow in a pilot drug screening study.

Table 1: Preprocessing Impact on AI Model Performance

| Metric | Raw Images | After Preprocessing | Improvement |

|---|---|---|---|

| Segmentation Dice Coefficient | 0.78 ± 0.12 | 0.94 ± 0.03 | +20.5% |

| Feature Standard Deviation (across plates) | 45.2% | 12.7% | -71.9% |

| Intra-class Correlation (ICC) | 0.65 | 0.91 | +40.0% |

Table 2: Computational Requirements for AI Pipeline (per 1000 images)

| Pipeline Stage | Hardware | Avg. Processing Time | Key Software Library |

|---|---|---|---|

| Preprocessing & QC | CPU (32 cores) | 25 min | scikit-image, OpenCV |

| U-Net Training | 1x A100 GPU | 4.5 hours | PyTorch, TIMM |

| Batch Inference | 1x V100 GPU | 8 min | ONNX Runtime |

| Feature Extraction | CPU (16 cores) | 12 min | scikit-image, pandas |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Driven Image Analysis Workflows

| Item | Function | Example Product/Catalog # |

|---|---|---|

| Optical-Bottom Microplates | Provide superior image clarity and minimal background for high-resolution microscopy. | Corning 96-well Black/Clear Bottom Plate (#3904) |

| Multi-Fluorescent Calibration Beads | Daily calibration for channel alignment, pixel size, and intensity normalization. | Thermo Fisher TetraSpeck Microspheres (0.5µm, #T7280) |

| Cell Health Indicator Dye | Live-cell imaging viability control to monitor cytotoxicity during kinetic assays. | Cytoplasm-Selective Membrane-Permeant Dye, CellMask Green (C37608) |

| Antibody Conjugates (Bright, Photostable) | For multiplexed target labeling; critical for generating high-SNR training data. | Alexa Fluor 488, 568, 647 secondaries (Thermo Fisher) |

| Mounting Media (Antifade) | Preserve fluorescence signal for fixed-cell imaging; reduces photobleaching. | ProLong Diamond Antifade Mountant (P36961) |

| Automated Liquid Handler | Ensure reproducible cell seeding and compound addition to minimize plate-to-plate variation. | Integra ViaFlo Assist |

| Data Storage Solution | Manage large-scale image datasets (often >10TB per campaign). | Network-Attached Storage (NAS) with RAID 6 configuration |

Visualized Workflows & Pathways

Diagram 1: End-to-End AI Image Analysis Pipeline

Diagram 2: U-Net Model Architecture for Segmentation

Within the broader thesis on AI-driven image analysis for optical data processing, this document details the critical application of advanced imaging and machine learning to two transformative approaches in modern drug discovery: Phenotypic Screening and Organoid Analysis. These methodologies generate complex, high-content optical data, which, when processed by AI, can reveal subtle, biologically relevant phenotypes and accelerate the identification of novel therapeutics.

Phenotypic Screening: AI-Enhanced Workflow

Application Notes

Phenotypic screening assesses compounds based on their ability to modulate observable cellular characteristics (phenotypes) without requiring prior knowledge of a specific molecular target. AI-driven image analysis is pivotal for extracting quantitative, multi-parametric data from these assays, moving beyond single-parameter readouts to holistic profiling.

Current Trends (2023-2024):

- Integration of Multi-Omics Data: AI models are increasingly trained on correlative datasets combining high-content imaging with transcriptomic or proteomic profiles from the same samples.

- Explainable AI (XAI): There is a strong push to develop models that not only classify phenotypes but also highlight the subcellular features (e.g., texture, morphology) driving the classification, enhancing researcher trust and biological insight.

- Platforms: The Cell Painting assay has become a de facto standard, employing six fluorescent dyes imaged in five channels to label eight cellular components and generating thousands of features per cell.

Quantitative Impact of AI on Phenotypic Screening:

Table 1: Performance Metrics of AI-Driven vs. Traditional Phenotypic Analysis

| Metric | Traditional (Manual/Simple Analysis) | AI-Driven (Deep Learning) | Source/Context |

|---|---|---|---|

| Features Extracted per Cell | 10-50 | 1,000 - 5,000+ | Cell Painting assay with CNN feature extraction |

| Hit Confirmation Rate | 10-25% | 30-50% | Improved triage reduces false positives |

| Time for Image Analysis (per 96-well plate) | 4-6 hours | 15-30 minutes | Automated pipeline with GPU acceleration |

| Phenotypic Class Accuracy | 75-85% | 92-98% | Classification of known mechanistic classes |

Detailed Protocol: AI-Driven Phenotypic Screening with Cell Painting

Title: High-Content Phenotypic Screening and Profiling of Compound Libraries Using Cell Painting and Convolutional Neural Networks (CNNs).

Objective: To identify and characterize novel therapeutic compounds by inducing and quantifying morphological changes in cultured cells, using an AI pipeline for image segmentation, feature extraction, and mechanistic prediction.

Materials (Research Reagent Solutions):

- U2OS Cells: A robust, adherent cell line with clear morphology.

- Cell Painting Reagent Kit: Includes dyes for nuclei (Hoechst 33342), endoplasmic reticulum (Concanavalin A, Alexa Fluor 488 conjugate), nucleoli and cytoplasmic RNA (SYTO 14), actin (Phalloidin, Alexa Fluor 568 conjugate), Golgi apparatus and plasma membrane (Wheat Germ Agglutinin, Alexa Fluor 555 conjugate), and mitochondria (MitoTracker Deep Red).

- Compound Library: 10,000-member diversity library in 384-well format.

- Assay Medium: Phenol-red free medium for imaging.

- Automated Liquid Handler: For consistent compound and reagent dispensing.

- High-Content Imaging System: Confocal or widefield microscope with ≥5 fluorescence channels, 20x/40x objective, and automated stage.

- AI/ML Software: Open-source (CellProfiler, DeepCell, ilastik) or commercial (Harmony, IN Carta, HCS Studio) with CNN capabilities.

Procedure:

- Cell Seeding & Treatment: Seed U2OS cells at 2,000 cells/well in a 384-well collagen-coated plate. Incubate for 24 hrs. Pin-transfer compounds from library for a final concentration of 10 µM. Include DMSO (vehicle) and reference compound controls (e.g., mTOR inhibitor, DNA damage agent). Incubate for 48 hrs.

- Staining: Fix cells with 4% PFA for 20 min. Permeabilize with 0.1% Triton X-100. Stain with the Cell Painting cocktail as per manufacturer's protocol. Seal plates.

- Image Acquisition: Acquire 9 fields per well using a 40x air objective across all 5-6 fluorescence channels. Use consistent exposure times determined from control wells.

- AI-Driven Image Analysis Pipeline:

- a. Preprocessing & Segmentation: Use a pre-trained U-Net CNN within CellProfiler or DeepCell to identify nuclei (Hoechst channel) and whole-cell boundaries (actin/ER channels).

- b. Feature Extraction: For each segmented cell, extract ~1,500 morphological, intensity, and texture features (size, shape, granularity, correlation between channels) using standard image analysis libraries.

- c. Data Normalization & Compression: Apply robust Z-scoring per plate using DMSO controls. Use dimensionality reduction (UMAP/t-SNE) on the feature matrix to visualize compound-induced phenotypic clustering.

- d. Phenotypic Profiling & Hit Identification: Train a Random Forest or support vector machine (SVM) classifier on features from reference compounds. Apply model to score similarity of test compounds to known mechanisms. Calculate a Mahalanobis distance from the DMSO cloud to identify significant outliers as hits.

- Validation: Prioritize hits from novel clusters. Confirm activity in dose-response Cell Painting and orthogonal functional assays.
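The normalization and hit-calling steps of the analysis pipeline above (robust Z-scoring against DMSO wells, then Mahalanobis distance from the DMSO cloud) can be sketched on a well-level feature matrix. The data here are synthetic, and the 1.4826 MAD scaling constant, the small ridge term added to the covariance, and the function names are assumptions made for this illustration.

```python
import numpy as np

def robust_zscore(features, is_dmso, eps=1e-9):
    """Center and scale each feature by the median and scaled MAD of DMSO wells."""
    ctrl = features[is_dmso]
    med = np.median(ctrl, axis=0)
    mad = np.median(np.abs(ctrl - med), axis=0) * 1.4826   # ~SD for normal data
    return (features - med) / (mad + eps)

def mahalanobis_from_controls(z, is_dmso, ridge=1e-6):
    """Distance of every well from the DMSO cloud, using the DMSO covariance."""
    ctrl = z[is_dmso]
    mu = ctrl.mean(axis=0)
    cov = np.cov(ctrl, rowvar=False) + ridge * np.eye(z.shape[1])
    inv_cov = np.linalg.inv(cov)
    diff = z - mu
    return np.sqrt(np.einsum("ij,jk,ik->i", diff, inv_cov, diff))

# Synthetic plate: 50 DMSO wells plus one strongly perturbed compound well
rng = np.random.default_rng(0)
dmso = rng.normal(loc=100.0, scale=5.0, size=(50, 3))
compound = np.array([[150.0, 150.0, 150.0]])
features = np.vstack([dmso, compound])
is_dmso = np.array([True] * 50 + [False])

z = robust_zscore(features, is_dmso)
distance = mahalanobis_from_controls(z, is_dmso)
is_hit = distance[-1] > distance[:-1].max()
```

With ~1,500 real features the covariance matrix is typically ill-conditioned, so the distance is usually computed after feature selection or dimensionality reduction rather than on the full matrix.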

Signaling Pathways in Phenotypic Screening

Phenotypic changes often result from the perturbation of key signaling hubs. AI can map compound-induced morphology to these pathways.

Diagram Title: Key Pathways Modulating Cell Painting Phenotypes

Organoid Analysis: AI for Complex 3D Models

Application Notes

Organoids are self-organizing 3D tissue cultures that recapitulate key aspects of in vivo organ structure and function. They present a more physiologically relevant but analytically challenging model. AI-driven 3D image analysis is essential for quantifying complex phenotypes in these structures.

Current Trends (2023-2024):

- Live-Cell & Time-Lapse Analysis: AI models are being developed to segment and track individual cells within living organoids over days, enabling studies of clonal dynamics and heterogeneous drug responses.

- Multi-organoid Analysis: Models must handle high variability between individual organoids, focusing on population-level distributions rather than single-structure readouts.

- Fusion with Spatial Transcriptomics: AI correlates 3D morphological features from imaging with localized gene expression maps, creating powerful multimodal datasets for target discovery.

Quantitative Advantages of AI in Organoid Analysis:

Table 2: Capabilities of AI in 3D Organoid Image Analysis

| Analysis Challenge | Conventional Method | AI/Deep Learning Solution | Performance Gain |

|---|---|---|---|

| 3D Segmentation | Thresholding + Watershed (2D) | 3D U-Net / StarDist-3D | Dice Coefficient: 0.6 → 0.9+ |

| Cell Type Classification | Manual based on marker location | 3D CNN on multiplexed data | Accuracy: ~70% → >90% |

| Drug Response Quantification | Organoid diameter/volume | Multiparametric feature analysis (lumen size, cell death, budding) | Z'-factor: 0.2 → 0.5+ |

| Phenotypic Heterogeneity | Categorical scoring | Deep embedding + clustering identifies novel subtypes | Identifies 3-5x more subpopulations |
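The Z'-factor cited in the table is a standard assay-quality statistic computed from positive and negative control wells as Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. A minimal sketch with made-up control readouts follows; the values are illustrative only and do not come from the study.

```python
import statistics

def z_prime(positive, negative):
    """Z'-factor from control readouts; values above ~0.5 indicate a robust assay."""
    mu_p, mu_n = statistics.mean(positive), statistics.mean(negative)
    sd_p, sd_n = statistics.stdev(positive), statistics.stdev(negative)
    return 1.0 - 3.0 * (sd_p + sd_n) / abs(mu_p - mu_n)

# Hypothetical readouts (e.g., a viability feature) for control organoids
vehicle_wells = [100, 102, 98, 101, 99]
killed_wells = [10, 12, 8, 11, 9]
zp = z_prime(vehicle_wells, killed_wells)
```

The statistic rewards both a wide separation between controls and low well-to-well variability, which is why a multiparametric readout can improve it over diameter alone.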

Detailed Protocol: Multiparametric Drug Response Analysis in Colorectal Cancer Organoids

Title: Quantifying Therapeutic Response in Patient-Derived Colorectal Cancer Organoids Using 3D Confocal Imaging and AI-Based Segmentation.

Objective: To assess the efficacy and mechanism of action of novel oncology candidates by measuring multiple phenotypic endpoints in 3D tumor organoids treated with compounds.

Materials (Research Reagent Solutions):

- Matrigel or BME2: Basement membrane extract for 3D organoid embedding.

- Advanced DMEM/F-12: Organoid culture medium, supplemented with niche factors (Wnt3a, R-spondin, Noggin, EGF).

- Patient-Derived Colorectal Cancer (CRC) Organoids: From biobank or fresh biopsy.

- Live-Cell Fluorescent Probes: CellTracker Green (viability), Hoechst 33342 (nuclei), Incucyte Cytotox Red (dead cells), Phalloidin (F-actin, for endpoint).

- Test Compounds: Including standard chemotherapeutics (5-FU, Irinotecan) and novel agents.

- 96-Well Round-Bottom Ultra-Low Attachment Plates: For consistent organoid formation.

- Confocal Spinning Disk Microscope: With environmental chamber for live imaging.

- 3D Image Analysis Software: Featuring AI models (e.g., Arivis Vision4D, Imaris with CNN module, or custom Python using Napari).

Procedure:

- Organoid Preparation & Treatment: Dissociate CRC organoids to single cells. Mix 500 cells with 20 µL BME2 and plate as domes in 96-well plate. Overlay with culture medium. After 4 days, treat with compounds across a 10-point dose-response curve. Include viability (CellTracker) and death (Cytotox Red) dyes.

- Image Acquisition (Live/Endpoint):

- Live Imaging: Image 4 organoids/well every 24h for 72h using a 20x water objective, acquiring Z-stacks (50 µm depth, 5 µm steps) for nuclei, viability, and death channels.

- Endpoint Imaging: At 72h, fix, permeabilize, and stain with Phalloidin and Hoechst. Acquire high-resolution Z-stacks.

- AI-Based 3D Image Analysis:

- a. Preprocessing: Apply 3D deconvolution and background subtraction.

- b. Segmentation: Employ a pre-trained 3D U-Net to segment whole organoids (using CellTracker/F-actin channel) and individual nuclei (Hoechst channel) within the volume.

- c. Feature Extraction: Calculate per-organoid and per-cell features:

- Volumetric: Organoid volume, sphericity, lumen size.

- Viability: Ratio of CellTracker+ area to total area.

- Cell Death: Number and location of Cytotox Red+ objects.

- Cellular: Nuclei count, density, and proximity to organoid edge.

- Structural: F-actin intensity distribution and texture.

- Dose-Response & Phenotypic Profiling: Fit volumetric and viability data to a 4-parameter logistic model to calculate IC50. Use extracted feature matrices to train a classifier (e.g., SVM) to distinguish between mechanisms (e.g., cytostatic vs. cytotoxic).

- Correlation with Genomics: Compare organoid drug response profiles (IC50, phenotypic features) with patient tumor genomic data (e.g., KRAS, TP53 status).
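The 4-parameter logistic (4PL) fit used for IC50 estimation can be sketched without a nonlinear optimizer by noting that, for a fixed IC50 and Hill slope, the model is linear in its top and bottom plateaus. The grid-search approach below is a swapped-in simplification for illustration; in practice a nonlinear least-squares routine would normally be used, and the dose range and parameter grids are assumptions.

```python
import numpy as np

def four_pl(dose, bottom, top, ic50, hill):
    """4PL curve: response falls from `top` to `bottom` as dose increases."""
    dose = np.asarray(dose, dtype=float)
    return bottom + (top - bottom) / (1.0 + (dose / ic50) ** hill)

def fit_four_pl(dose, response, ic50_grid, hill_grid):
    """Grid search over (ic50, hill); solve top/bottom by linear least squares."""
    dose = np.asarray(dose, dtype=float)
    response = np.asarray(response, dtype=float)
    best = None
    for ic50 in ic50_grid:
        for hill in hill_grid:
            g = 1.0 / (1.0 + (dose / ic50) ** hill)
            # response = bottom * (1 - g) + top * g, linear in bottom and top
            design = np.column_stack([1.0 - g, g])
            coef, *_ = np.linalg.lstsq(design, response, rcond=None)
            sse = float(((design @ coef - response) ** 2).sum())
            if best is None or sse < best[0]:
                best = (sse, coef[0], coef[1], ic50, hill)
    _, bottom, top, ic50, hill = best
    return {"bottom": float(bottom), "top": float(top),
            "ic50": float(ic50), "hill": float(hill)}

# Noise-free synthetic 10-point dose-response (doses in µM)
doses = np.logspace(-3, 1.5, 10)
viability = four_pl(doses, bottom=5.0, top=100.0, ic50=1.0, hill=1.5)
fit = fit_four_pl(doses, viability,
                  ic50_grid=np.logspace(-3, 2, 101),
                  hill_grid=np.arange(0.5, 3.01, 0.25))
```

The resolution of the IC50 estimate is limited by the grid spacing, so this form is best treated as a starting point or a sanity check on an optimizer-based fit.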

Organoid Analysis Workflow

The integration of AI is critical at every step of the organoid screening pipeline.

Diagram Title: AI-Integrated Organoid Drug Screening Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Phenotypic & Organoid Screening

| Item Name | Category | Primary Function in Experiments |

|---|---|---|

| Cell Painting Kit | Fluorescent Dyes | Multiplexed staining of 6-8 cellular compartments for holistic phenotypic profiling. |

| Matrigel / BME2 | Extracellular Matrix | Provides a 3D scaffold for organoid growth, mimicking the basement membrane. |

| Incucyte Cytotox Red | Live-Cell Probe | Real-time, non-disruptive quantification of dead cells in both 2D and 3D cultures. |

| Hoechst 33342 | Nuclear Stain | Labels DNA in fixed and live cells, used for segmentation and cell counting. |

| Recombinant Human Growth Factors (Wnt3a, R-spondin, Noggin) | Culture Supplement | Essential for maintaining stemness and driving growth of intestinal-derived organoids. |

| CellTracker Green CMFDA | Live-Cell Probe | Long-term cytoplasmic labeling of viable cells, used for tracking and viability assessment. |

| Paraformaldehyde (4%) | Fixative | Rapidly preserves cellular architecture and fluorescence for endpoint imaging. |

| Triton X-100 | Detergent | Permeabilizes cell membranes to allow entry of antibody and dye molecules. |

Within the broader thesis of AI-driven image analysis for optical data processing, digital pathology represents a paradigm shift. The conversion of glass slides into high-resolution Whole Slide Images (WSIs) creates a vast, complex optical dataset. AI, particularly deep learning, is engineered to process this data, transforming subjective histopathological assessment into quantitative, reproducible biomarker extraction. This directly accelerates drug development by providing robust, data-rich endpoints for clinical trials.

Core Applications in Research & Drug Development

- AI-Enhanced Quantitative Biomarker Analysis: Moves beyond traditional manual H-score or simple positive cell counting. AI models can segment individual cells, classify cell types (tumor, lymphocyte, stroma), and quantify biomarker expression (e.g., PD-L1, HER2, Ki67) with subcellular precision, correlating intensity and localization.

- Prognostic and Predictive Biomarker Discovery: By analyzing spatial relationships (e.g., tumor-immune cell interactions) and morphological patterns across large retrospective cohorts, AI can identify novel digital biomarkers predictive of treatment response or disease outcome.

- Tumor Microenvironment (TME) Deconvolution: AI models can dissect the complex TME by simultaneously identifying and quantifying multiple cell populations and their spatial organization, crucial for immuno-oncology research.

- Pathology Workflow Prioritization: AI-powered triage can flag suspicious regions or high-priority cases (e.g., high tumor burden), streamlining pathologist workflow in time-sensitive scenarios.

Table 1: Comparative Performance of AI vs. Manual Biomarker Quantification

| Metric | Manual Pathologist Assessment | AI-Driven Analysis | Implication for Research |

|---|---|---|---|

| Throughput | 5-10 minutes per WSI (focused region) | < 1 minute per WSI (full slide) | Enables large-scale cohort analysis. |

| Reproducibility (Inter-observer) | Moderate (Cohen's κ ~0.6-0.8) | High (Consistent algorithm) | Reduces variability in clinical trial endpoint scoring. |

| Spatial Feature Analysis | Limited to broad assessments | Precise (cell-level spatial statistics) | Unlocks novel TME-based biomarker discovery. |

| Multiplex Biomarker Integration | Challenging for >3 markers | Scalable to hyperplex imaging (10+ markers) | Enables systems biology approaches in tissue. |

Detailed Experimental Protocols

Protocol: AI-Assisted PD-L1 Combined Positive Score (CPS) Quantification in Gastric Carcinoma

Objective: To reproducibly quantify PD-L1 expression in tumor and immune cells from a CD3/CD8/PD-L1 multiplex immunohistochemistry (mIHC) WSI.

Materials & Reagents: See Scientist's Toolkit below.

Software: Python 3.9+, PyTorch/TensorFlow, OpenSlide, QuPath or equivalent digital pathology analysis platform.

Workflow:

Slide Digitization & Preprocessing:

- Scan stained slide at 40x magnification (0.25 µm/pixel resolution).

- Export WSI in a pyramidal file format (e.g., .svs, .tiff).

- Apply shading correction and normalize stain intensities across the dataset using a method like Macenko normalization.

AI Model Inference for Cell Segmentation & Classification:

- Tile Extraction: Divide the WSI into 512x512 pixel tiles at 20x equivalent magnification (0.5 µm/pixel).

- Cell Segmentation: Process each tile through a pre-trained deep learning model (e.g., HoVer-Net or a U-Net variant) to generate nuclear segmentation masks.

- Cell Phenotyping: For each segmented cell, extract morphological and intensity features from the multiplex channels. Input these features into a classifier (e.g., Random Forest or CNN) trained to label cells as: CD3+ T-cell, CD8+ Cytotoxic T-cell, PD-L1+ Tumor Cell, PD-L1+ Immune Cell, or Other Stromal Cell.

- Post-processing: Merge tile-level results into a slide-level cell database with spatial coordinates and phenotype labels.
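The tile extraction and post-processing steps above depend on a consistent mapping between tile-local and slide-level coordinates. The sketch below generates tile origins for a slide of arbitrary size and converts a tile-local cell centroid to slide coordinates in micrometers. The function names are hypothetical, the slide dimensions are made up, and reading the pixels themselves (for example with OpenSlide) is deliberately left out.

```python
def tile_origins(width, height, tile=512, stride=512):
    """Top-left (x, y) pixel coordinates of tiles covering a width x height level.

    A final tile flush with the right and bottom edges is added when needed,
    so edge regions are covered at the cost of some overlap.
    """
    xs = list(range(0, max(width - tile, 0) + 1, stride))
    ys = list(range(0, max(height - tile, 0) + 1, stride))
    if xs[-1] + tile < width:
        xs.append(width - tile)
    if ys[-1] + tile < height:
        ys.append(height - tile)
    return [(x, y) for y in ys for x in xs]

def to_slide_um(tile_origin, local_xy, microns_per_pixel=0.5):
    """Convert a tile-local pixel centroid to slide-level micrometers."""
    x0, y0 = tile_origin
    lx, ly = local_xy
    return ((x0 + lx) * microns_per_pixel, (y0 + ly) * microns_per_pixel)

origins = tile_origins(width=1200, height=1024)
centroid_um = to_slide_um(origins[-1], local_xy=(100, 20))
```

Overlapping edge tiles can detect the same cell twice, so merged results usually need de-duplication by centroid proximity before counting.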

Quantitative Biomarker Scoring:

- Calculate the AI-CPS as per standard definition: (Number of PD-L1+ Tumor Cells + PD-L1+ Immune Cells) / (Total Number of Viable Tumor Cells) * 100

- The denominator is derived from a concurrent H&E analysis or an additional tumor segmentation model.

- Generate spatial maps and histograms of PD-L1 expression intensity.
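The CPS formula above is simple arithmetic over the slide-level cell counts. A minimal sketch follows; the counts are invented for illustration, and the cap at 100 reflects the conventional definition of CPS, which the protocol does not state explicitly.

```python
def combined_positive_score(pdl1_tumor_cells, pdl1_immune_cells, viable_tumor_cells):
    """CPS = (PD-L1+ tumor cells + PD-L1+ immune cells) / viable tumor cells * 100."""
    if viable_tumor_cells <= 0:
        raise ValueError("CPS is undefined without viable tumor cells")
    score = 100.0 * (pdl1_tumor_cells + pdl1_immune_cells) / viable_tumor_cells
    return min(score, 100.0)   # CPS is conventionally capped at 100

cps = combined_positive_score(pdl1_tumor_cells=120, pdl1_immune_cells=80,
                              viable_tumor_cells=1000)
cps_capped = combined_positive_score(500, 700, 1000)
```

Because PD-L1+ immune cells appear in the numerator but not the denominator, the raw ratio can exceed 100 in immune-rich tissue, which is why the cap matters.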

Validation:

- Compare AI-CPS against manual CPS from 2-3 certified pathologists on a representative subset (e.g., 50-100 WSIs).

- Calculate intra-class correlation coefficient (ICC) and Pearson correlation.
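The two agreement statistics named above answer different questions: Pearson correlation measures linear association, while the ICC also penalizes systematic offsets between raters. The sketch below uses the ICC(2,1) form (two-way random effects, absolute agreement, single rater), which is an assumption since the protocol does not specify a variant; the scores are invented.

```python
import numpy as np

def pearson_r(x, y):
    """Pearson correlation coefficient between two score vectors."""
    return float(np.corrcoef(x, y)[0, 1])

def icc_2_1(scores):
    """ICC(2,1) for an (n_slides, k_raters) matrix of scores."""
    x = np.asarray(scores, dtype=float)
    n, k = x.shape
    grand = x.mean()
    ss_rows = k * ((x.mean(axis=1) - grand) ** 2).sum()    # between slides
    ss_cols = n * ((x.mean(axis=0) - grand) ** 2).sum()    # between raters
    ss_err = ((x - grand) ** 2).sum() - ss_rows - ss_cols  # residual
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return float((msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n))

# Columns: manual CPS, AI-CPS. The AI reads 5 points high on every slide.
manual = np.array([5.0, 10.0, 20.0, 40.0])
ai = manual + 5.0
r = pearson_r(manual, ai)
icc_offset = icc_2_1(np.column_stack([manual, ai]))
icc_identical = icc_2_1(np.column_stack([manual, manual]))
```

In this example the correlation is perfect while the ICC drops to about 0.95, showing how a constant bias that Pearson ignores is still visible in the agreement statistic.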

AI-PD-L1 CPS Quantification Workflow

Protocol: Discovery of Spatial Biomarkers via Graph Neural Networks (GNNs)

Objective: To model cell-cell interaction networks within the TME and identify graph-derived features predictive of patient survival.

Workflow:

- Input Data Generation: Follow the AI-assisted PD-L1 CPS protocol above (Protocol 2.1) through its post-processing step to obtain a spatial cell database with phenotype labels.

- Graph Construction:

- For a defined region (e.g., tumor core), model each cell as a node.

- Assign node features: phenotype, morphology, biomarker intensity.

- Create edges between nodes if the Euclidean distance between cell centroids is <30 µm (approximating interaction distance).

- Graph Neural Network Analysis:

- Train a GNN model (e.g., Graph Convolutional Network) to learn representations of the local tissue microenvironment.

- Use a readout function to create a fixed-size feature vector (graph embedding) for each patient's WSI.

- Survival Correlation:

- Use Cox Proportional-Hazards regression to correlate graph-derived features with patient overall survival.

- Identify specific topological patterns (e.g., clusters of immune cells proximal to tumor) associated with outcome.
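The graph construction step above (one node per cell, an edge wherever centroids lie within 30 µm) can be sketched with a dense distance matrix. This is an illustration for small regions only: the coordinates and phenotype labels are invented, the function name is hypothetical, and a spatial index such as a k-d tree would be needed for whole-slide cell counts.

```python
import numpy as np

def build_cell_graph(centroids_um, max_dist_um=30.0):
    """Undirected edge list (i, j), i < j, for cells closer than `max_dist_um`."""
    pts = np.asarray(centroids_um, dtype=float)
    dist = np.linalg.norm(pts[:, None, :] - pts[None, :, :], axis=2)
    i, j = np.nonzero(np.triu(dist < max_dist_um, k=1))   # upper triangle: no self-loops
    return list(zip(i.tolist(), j.tolist()))

centroids = [(0, 0), (10, 0), (25, 0), (100, 100)]        # micrometers
phenotypes = ["tumor", "cd8", "tumor", "stroma"]          # example node feature
edges = build_cell_graph(centroids)

# Simple graph-derived feature: number of tumor-to-CD8 contacts
tumor_cd8_edges = sum(
    1 for a, b in edges if {phenotypes[a], phenotypes[b]} == {"tumor", "cd8"}
)
```

The resulting edge list and node features are the inputs a GNN library would consume; hand-crafted counts such as `tumor_cd8_edges` are a useful baseline to compare against learned graph embeddings.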

Spatial Biomarker Discovery via GNN

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Materials for AI-Enhanced Digital Pathology Workflows

| Item | Function & Relevance to AI Analysis |

|---|---|

| Multiplex IHC/IF Kits (e.g., Akoya PhenoCycler, formerly CODEX, and PhenoImager) | Enable simultaneous detection of 4-60+ biomarkers on a single tissue section. Provides the rich, multi-channel optical data required for AI-based TME deconvolution. |

| Automated Slide Stainers | Ensure consistent, reproducible staining crucial for training robust AI models and minimizing technical batch effects. |

| Whole Slide Scanners (40x-60x, with fluorescence capability) | Generate the high-resolution, high-fidelity optical datasets (WSIs) that are the primary input for AI analysis. |

| Tissue Microarrays (TMAs) | Contain 10s-100s of patient samples on one slide. Ideal for efficient, large-scale model validation and biomarker discovery across a cohort. |

| Open-Source Pathology Software (QuPath, HistomicsTK) | Provide community-vetted tools for WSI visualization, manual annotation (ground truth creation), and integration with AI models. |

| Cloud Computing Platform/GPU Cluster | Essential for training and deploying computationally intensive deep learning models on large WSI datasets (often terabytes in size). |

Within the broader thesis on AI-driven image analysis for optical data processing, this application note addresses a critical challenge: extracting quantitative, dynamic phenotypes from live-cell imaging. Traditional manual tracking is low-throughput and subjective. This document details how deep learning-based tools automate the analysis of cellular motion, morphology, and signaling dynamics over time, transforming time-lapse data into actionable biological insights for fundamental research and drug development.

Core AI Methodologies & Quantitative Comparison

Modern approaches combine convolutional neural networks (CNNs) for feature extraction with recurrent neural networks (RNNs) or graph neural networks (GNNs) for temporal modeling.

Table 1: Comparison of AI Models for Cellular Dynamics Tracking

| Model Architecture | Primary Use Case | Key Strength | Typical Accuracy (F1-Score) | Inference Speed (FPS) |

|---|---|---|---|---|

| U-Net + LSTM | Segmentation & Lineage Tracking | Excellent spatial and temporal context | 0.91-0.95 | 12-15 |

| Mask R-CNN + TrackR-CNN | Multi-object Tracking | Robust instance segmentation & association | 0.88-0.93 | 8-12 |

| StarDist + Bayesian Tracking | Dense Cell Populations | Superior for touching/overlapping cells | 0.89-0.94 | 10-18 |

| Graph Neural Networks (GNNs) | Collective Migration Analysis | Models cell-cell interactions explicitly | 0.85-0.90* | 5-10 |

| Transformer-based (CellDETR) | End-to-End Detection & Tracking | Eliminates complex post-processing pipelines | 0.90-0.92 | 7-11 |

*Accuracy highly dependent on graph construction quality.

Application Protocols

Protocol: AI-Assisted Tracking of Neurite Outgrowth Dynamics

Aim: To quantify neurite length, branching, and dynamics in primary neuronal cultures.

Materials: See "Scientist's Toolkit" (Section 5.0).

Workflow:

- Image Acquisition: Acquire phase-contrast or fluorescence (e.g., MAP2 staining) time-lapse images every 30 minutes for 72 hours using a controlled environment chamber (37°C, 5% CO₂).

- Preprocessing:

- Apply flat-field correction for illumination inhomogeneity.

- Use Fiji/ImageJ for mild background subtraction (rolling ball radius=50px).

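- Normalize pixel intensities to a 0-1 floating-point scale; convert 16-bit images to 8-bit only if the downstream model requires 8-bit input, since the conversion discards dynamic range.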

- AI Model Inference:

- Load the preprocessed image stack into a tracking platform (e.g., CellProfiler with DeepCell plugin, or custom Python script).

- Apply a pre-trained neural network (e.g., DeepLabCut for neurite skeletonization or a custom U-Net) to segment neurites from cell bodies in each frame.

- Use a skeletonization algorithm to convert segmentation masks to 1-pixel-wide skeletons.

- Tracking & Quantification:

- The AI model links skeletonized neurites across frames using a probabilistic matching algorithm based on overlap and proximity.

- Extract quantitative data: total neurite length per neuron, number of branches, tip velocity, and branchpoint dynamics.

- Output: Data tables for each tracked neuron and kymographs for selected neurites.
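The intensity normalization in the preprocessing step of this workflow can be sketched as a percentile-based rescaling to the 0-1 range. The 1st and 99th percentile cut-offs are an assumption chosen to limit the influence of hot pixels; a plain min-max rescale is the simpler alternative.

```python
import numpy as np

def normalize_01(image, p_low=1.0, p_high=99.0):
    """Rescale an image to [0, 1] between two intensity percentiles."""
    img = np.asarray(image, dtype=np.float32)
    lo, hi = np.percentile(img, [p_low, p_high])
    if hi <= lo:                       # flat image: nothing to stretch
        return np.zeros_like(img)
    scaled = np.clip((img - lo) / (hi - lo), 0.0, 1.0)
    return scaled.astype(np.float32)

# Synthetic 16-bit frame spanning the full dynamic range
frame_16bit = np.arange(0, 65536, 257, dtype=np.uint16).reshape(16, 16)
frame_norm = normalize_01(frame_16bit)
```

Applying the same percentile settings to every frame of a time-lapse keeps the segmentation model's input distribution stable as overall brightness drifts.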

Protocol: Analysis of Immune Cell Migration in 3D Spheroids

Aim: To characterize T-cell infiltration kinetics and motility parameters in tumor spheroids.

Workflow:

- Sample Prep & Imaging: Co-culture fluorescently labeled CAR-T cells with GFP-expressing tumor spheroids in collagen matrix. Acquire confocal z-stacks every 2 minutes for 12 hours.

- Preprocessing: Perform 3D deconvolution. Create maximum intensity projections (MIPs) for each time point for 2D tracking, or process full 3D stack.

- 3D Cell Tracking:

- Input the 4D (x,y,z,t) data into a 3D-capable tracker (e.g., TrackMate in Fiji using the DoG detector or a StarDist 3D model).

- Set appropriate estimated cell diameter (e.g., 10µm) and threshold.

- Apply a simple LAP (Linear Assignment Problem) tracker to link detections across time, allowing gaps of 2 frames.

- Motility Analysis:

- Calculate standard metrics: mean migration speed, persistence, meandering index, and confinement ratio.

- Analyze infiltration depth over time.

- Use vector maps to visualize collective migration patterns.
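
The motility metrics listed above can be computed directly from exported track coordinates. The sketch below assumes each track is a list of (x, y, z) positions in µm sampled at a fixed interval; the function name and output keys are hypothetical. Conventions differ between tools, but the ratio of net displacement to path length computed here is one common definition behind both "meandering index" and "confinement ratio".

```python
import math

def motility_metrics(track, dt_minutes):
    """Summarize one track given as a list of (x, y, z) positions in µm."""
    if len(track) < 2:
        raise ValueError("a track needs at least two positions")
    # Distance covered between consecutive time points.
    steps = [math.dist(a, b) for a, b in zip(track, track[1:])]
    path_length = sum(steps)
    net_displacement = math.dist(track[0], track[-1])
    duration = dt_minutes * len(steps)
    return {
        "mean_speed_um_per_min": path_length / duration,
        "path_length_um": path_length,
        "net_displacement_um": net_displacement,
        # 1.0 is a perfectly straight path; values near 0 indicate
        # confined or meandering motion.
        "straightness": net_displacement / path_length if path_length > 0 else 0.0,
    }

# Two toy tracks imaged every 2 minutes: one straight, one returning to its origin.
straight = motility_metrics([(0, 0, 0), (3, 4, 0), (6, 8, 0)], dt_minutes=2)
round_trip = motility_metrics([(0, 0, 0), (3, 4, 0), (0, 0, 0)], dt_minutes=2)
```

Both toy tracks have the same mean speed, but only the straight one has a straightness of 1.0, which is why speed alone does not distinguish directed infiltration from local scanning.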

Visualizing Workflows and Pathways

Title: AI-Driven Cellular Dynamics Analysis Workflow

Title: AI Quantifies Signaling Dynamics Driving Phenotypes

The Scientist's Toolkit

Table 2: Essential Research Reagents & Materials for AI-Driven Dynamics Studies

| Item | Function/Description | Example Product/Catalog |
|---|---|---|
| Live-Cell Imaging Dyes | Non-toxic labels for nuclei, cytoplasm, or organelles for long-term tracking. | SiR-DNA (Cytoskeleton, Inc.), CellTracker dyes (Thermo Fisher). |
| FRET/BRET Biosensors | Genetically encoded reporters for real-time signaling activity (e.g., ERK, cAMP, Ca²⁺). | EKAR-EV (Addgene #18679), AKAR variants. |
| Phenotypic Dyes | Report viability, apoptosis, or mitochondrial health concurrently with tracking. | Annexin V probes, MitoTracker, Incucyte Cytotox Dyes. |
| Matrices for 3D Culture | Provide physiologically relevant microenvironment for migration studies. | Corning Matrigel, Cultrex BME, Collagen I (rat tail). |
| Environmental Control Chamber | Maintains temperature, CO₂, and humidity for multi-day live imaging. | Tokai Hit STX stage-top incubator, Okolab cage incubators. |
| AI-Ready Public Datasets | For training or benchmarking models (pre-annotated time-lapse data). | Cell Tracking Challenge datasets, Allen Cell Explorer. |
| Open-Source Analysis Suites | Integrate AI models with microscopy data processing pipelines. | CellProfiler 4.0, Napari with tracking plugins, DeepLabCut. |

This Application Note details protocols for integrating spatial transcriptomics and multiplexed imaging within an AI-driven image analysis pipeline, a core theme of our broader thesis on optical data processing. These techniques enable the mapping of gene expression and protein activity directly within tissue architecture, providing unprecedented insights into cellular networks in health and disease for drug development.

Key Application Notes & Quantitative Data

Table 1: Comparison of Leading Spatial Transcriptomics Platforms

| Platform | Technology Basis | Spatial Resolution | Targets Detected per Spot/Cell (transcripts, genes, or proteins) | Throughput per Experiment | Key Distinguishing Feature |
|---|---|---|---|---|---|
| 10x Genomics Visium | Barcoded oligo-dT arrays on slides | 55 µm (current) | ~5,000 | 5,000 - 10,000 spots | Whole Transcriptome, H&E guided |
| NanoString GeoMx DSP | Digital Spatial Profiler (oligo barcodes + UV cleavage) | ROI-defined (1-10 µm) | 18,000+ (WTA) | 1 - 660+ ROIs | Protein & RNA, user-defined ROI |
| Vizgen MERSCOPE | MERFISH (multiplexed FISH imaging) | Subcellular (~100 nm) | 500 - 10,000 genes | ~1,000,000 cells | High-plex RNA, single-cell resolution |
| 10x Genomics Xenium | In situ sequencing (FISH-based) | Subcellular (~140 nm) | 300 - 1,000 genes | 100,000s of cells | In situ imaging, high detection efficiency |
| Akoya CODEX/Phenocycler | Multiplexed antibody imaging (cyclic staining) | Single-cell (~0.65 µm) | 40 - 100+ proteins | 1,000,000s of cells | High-plex protein, whole-slide imaging |

Table 2: AI Model Performance on Multiplexed Image Analysis Tasks

| AI Task | Model Architecture | Primary Metric | Typical Reported Performance (in Primary Metric) | Key Challenge Addressed |
|---|---|---|---|---|
| Cell Segmentation | U-Net, Mask R-CNN, Cellpose | Dice Coefficient | 0.85 - 0.95 | Overlapping cells, heterogeneous morphology |
| Cell Phenotyping | Random Forest, CNN, Vision Transformer (ViT) | Classification Accuracy | >90% | High-dimensional marker space, rare cell populations |
| Spatial Interaction Analysis | Graph Neural Networks (GNNs) | AUC for Interaction Prediction | 0.75 - 0.90 | Modeling complex, non-random cell neighborhood patterns |
| Feature Extraction for Prediction | Autoencoders, Deep Learning | Concordance Index (Survival) | 0.68 - 0.75 | Linking tissue phenotypes to clinical outcomes |
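
For reference, the Dice coefficient reported for segmentation in the table is twice the overlap between predicted and ground-truth masks divided by the sum of their areas. A minimal NumPy sketch follows; the function name and toy masks are illustrative assumptions, and returning 1.0 for two empty masks is a convention rather than a standard.

```python
import numpy as np

def dice_coefficient(pred_mask, true_mask):
    """Dice = 2 * |A and B| / (|A| + |B|) for two binary masks."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    total = pred.sum() + true.sum()
    if total == 0:
        return 1.0  # both masks empty: treated here as perfect agreement
    return 2.0 * np.logical_and(pred, true).sum() / total

# Toy example: a 4x4 ground-truth square and a prediction shifted down by one row.
true_mask = np.zeros((8, 8), dtype=bool)
true_mask[2:6, 2:6] = True
pred_mask = np.zeros((8, 8), dtype=bool)
pred_mask[3:7, 2:6] = True

score = dice_coefficient(pred_mask, true_mask)  # 2 * 12 / (16 + 16) = 0.75
```

A one-pixel shift on a small object already lowers Dice to 0.75, so scores for small nuclei and large tissue regions are not directly comparable.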

Experimental Protocols

Protocol 1: Integrated Analysis of GeoMx DSP and Phenocycler Data with AI Segmentation

Objective: To correlate ROI-level spatial transcriptomic profiles (GeoMx DSP) with high-plex protein expression (Phenocycler) in formalin-fixed, paraffin-embedded (FFPE) tumor sections.

Materials & Workflow:

- Tissue Preparation: Section FFPE tissue at 4-5 µm. Perform standard deparaffinization and antigen retrieval.

- Phenocycler Multiplexed Staining:

- Stain tissue with a pre-validated, panel-specific cocktail of antibodies conjugated to unique oligonucleotide barcodes (e.g., 40-plex).

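- Image slides using a compatible fluorescence scanner (e.g., PhenoImager). In each cycle, hybridize fluorescent reporter oligonucleotides to a subset of barcodes, image, and strip the reporters; repeat until every marker in the panel has been imaged.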

- AI-Powered Cell Segmentation & Phenotyping:

- Register and composite cycle images using Akoya’s inForm or Apeer (Zeiss) software.

- AI Protocol: Apply a pre-trained U-Net model (TensorFlow/PyTorch) on DAPI images for nuclear segmentation. Use a secondary watershed or StarDist model for whole-cell segmentation based on membrane markers.

- Extract single-cell expression vectors for all protein markers.

- Use a clustering algorithm (e.g., PhenoGraph, Leiden) on normalized expression data to define cell phenotypes.

- GeoMx DSP ROI Selection & Profiling:

- Based on Phenocycler-derived cell phenotyping maps, select Regions of Interest (ROIs) (e.g., tumor-immune interface, specific stromal regions) in the GeoMx DSP software.

- Follow standard GeoMx protocol: UV-cleavage of oligo tags in selected ROIs, collection into microplates, and preparation for nCounter or NGS library construction.

- Data Integration:

- Spatially align Phenocycler and GeoMx DSP images.

- Use the cell phenotype map as a spatial filter to calculate the proportion of each cell type within each GeoMx ROI.

- Perform multivariate regression (e.g., linear mixed models) to associate ROI-level transcriptomic signatures with underlying cellular composition derived from multiplexed imaging.
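
The "spatial filter" step in Data Integration amounts to counting phenotyped cells that fall inside each ROI. The sketch below assumes both datasets are already registered to a common coordinate system and, for simplicity, treats ROIs as circles; real GeoMx ROIs can be arbitrary shapes. All names and coordinates are hypothetical.

```python
from collections import Counter

def roi_composition(cells, rois):
    """Return the fraction of each phenotype inside each circular ROI.

    cells: list of (x, y, phenotype) tuples from the multiplexed imaging data.
    rois:  dict mapping ROI name to (center_x, center_y, radius).
    """
    result = {}
    for name, (cx, cy, radius) in rois.items():
        counts = Counter(
            phenotype
            for x, y, phenotype in cells
            if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2
        )
        total = sum(counts.values())
        result[name] = {p: n / total for p, n in counts.items()} if total else {}
    return result

# Toy data (assumed): six phenotyped cells and two ROIs, coordinates in µm.
cells = [
    (10, 10, "tumor"), (12, 11, "tumor"), (11, 14, "T cell"),
    (13, 9, "tumor"), (100, 100, "stroma"), (102, 98, "T cell"),
]
rois = {"interface": (11, 11, 10), "stroma": (101, 99, 10)}

composition = roi_composition(cells, rois)
```

The resulting per-ROI proportions are the covariates that would enter the regression against ROI-level transcriptomic signatures.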

Protocol 2: MERFISH Image Processing with Deep Learning-Based Decoding

Objective: To achieve accurate, high-throughput decoding of single RNA molecules from MERFISH imaging data using a convolutional neural network (CNN).

Materials & Workflow:

- Sample Preparation & Imaging: Perform MERFISH library preparation and hybridization on cultured cells or tissue sections according to Vizgen’s protocol. Acquire images across multiple fields of view and hybridization rounds.

- Traditional Barcode Call vs. AI Decoding:

- Traditional: Apply pixel-based registration. For each candidate spot, extract fluorescence intensities across all rounds/bit channels. Decode by comparing to the reference codebook via Pearson correlation or Hamming distance.

- AI Protocol (DeepCODE):

- a. Training Data Generation: Use traditional methods to generate decoded RNA locations. Extract small 3D image patches (e.g., 16x16xR, where R is the number of rounds) centered on each identified RNA molecule, and pair each patch with its corresponding barcode label.

- b. Model Training: Train a 3D-CNN with a classification head (output layer size = number of genes in the codebook) on these patches. Use data augmentation (rotation, flipping, noise injection).

- c. Inference: Apply the trained model in a sliding-window fashion, or on candidate spots from a preliminary detector, to predict gene identity directly from the raw image stack.

- Validation: Compare AI-decoded results with traditional methods using metrics like decoding yield, calling accuracy (via positive/negative control genes), and robustness to image noise.
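
As a point of comparison for the AI decoder, the traditional Hamming-distance decoding step can be sketched as follows. This assumes spot intensities have already been thresholded into bits upstream; the three-gene, 8-bit codebook and the gene names are hypothetical, and the tie-rejection rule is one reasonable choice, not necessarily what any vendor pipeline does.

```python
import numpy as np

def decode_spots(spot_bits, codebook, max_distance=1):
    """Assign each binarized spot to the nearest barcode by Hamming distance.

    Returns a gene name per spot, or None when the nearest barcode is
    farther than max_distance or when two barcodes tie for nearest.
    """
    genes = list(codebook)
    codes = np.array([codebook[g] for g in genes], dtype=np.uint8)  # (n_genes, n_bits)
    spots = np.asarray(spot_bits, dtype=np.uint8)                   # (n_spots, n_bits)
    # Pairwise Hamming distances: number of differing bits.
    distances = (spots[:, None, :] != codes[None, :, :]).sum(axis=2)
    best = distances.argmin(axis=1)
    best_dist = distances[np.arange(len(spots)), best]
    is_unique = (distances == best_dist[:, None]).sum(axis=1) == 1
    return [
        genes[i] if d <= max_distance and unique else None
        for i, d, unique in zip(best, best_dist, is_unique)
    ]

# Hypothetical codebook with pairwise Hamming distance 4 between barcodes.
codebook = {
    "GENE_A": [1, 1, 1, 1, 0, 0, 0, 0],
    "GENE_B": [1, 1, 0, 0, 1, 1, 0, 0],
    "GENE_C": [0, 0, 1, 1, 1, 1, 0, 0],
}
spots = [
    [1, 1, 1, 1, 0, 0, 0, 0],  # exact match to GENE_A
    [1, 1, 0, 0, 0, 1, 0, 0],  # GENE_B with one bit dropped
    [0, 0, 0, 0, 0, 0, 1, 1],  # far from every barcode
]

calls = decode_spots(spots, codebook)
```

Spots rejected here (returned as None) are the cases where a learned decoder working on raw patches might recover additional calls, which is what the decoding-yield comparison in the validation step measures.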

Visualizations

Title: AI-Driven Spatial Multi-Omics Integration Workflow

Title: MERFISH Image Analysis & AI Decoding Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Spatial/Image Analysis |
|---|---|
| 10x Genomics Visium Spatial Gene Expression Slide | Barcoded oligo-dT capture array for whole transcriptome mapping from tissue sections. |
| NanoString GeoMx Protein & RNA Panels | Pre-designed, validated antibody (Protein) or RNA probe (Cancer Transcriptome Atlas) sets for targeted spatial profiling. |
| Akoya Phenocycler/PhenoImager Antibody Conjugation Kit | Enables labeling of user-defined antibodies with oligonucleotide barcodes for cyclic multiplexed imaging (CODEX). |
| Vizgen MERSCOPE Gene Panel & Hybridization Kit | Optimized probe sets and reagents for high-efficiency, multiplexed FISH imaging. |
| Cellpose 2.0 (Software) | Deep learning-based, generalist algorithm for cell and nucleus segmentation adaptable to diverse image types. |
| QuPath (Open-Source Software) | Digital pathology platform supporting multiplexed image analysis, machine learning, and spatial statistics. |
| Squidpy (Python Package) | Facilitates scalable analysis and integration of spatial omics data, including graph-based analyses. |
| Illumina DNA/RNA UD Indexes | Used for sample multiplexing in NGS-based spatial transcriptomics library preparation (e.g., for Visium, GeoMx DSP). |
| DAPI (4',6-diamidino-2-phenylindole) | Nuclear counterstain essential for cell segmentation across all imaging platforms. |
| Antibody Diluent/Blocking Buffer (e.g., BSA, ScyTek) | Reduces non-specific antibody binding in multiplexed immunofluorescence protocols, critical for signal-to-noise ratio. |

Overcoming Hurdles: Best Practices for Robust and Scalable AI Implementation

In AI-driven image analysis for optical data processing in drug development, three pervasive challenges compromise model reliability: Data Scarcity, Imaging Artifacts, and Batch Effects. This document provides detailed application notes and protocols to identify, mitigate, and control these issues, ensuring robust analytical pipelines.

Data Scarcity: Mitigation Protocols

Quantitative Impact Assessment

Data scarcity leads to overfitting and poor generalization. The following table summarizes performance degradation with reduced dataset sizes in a typical high-content screening (HCS) analysis.

Table 1: Model Performance vs. Training Set Size in Phenotypic Profiling

| Training Images per Class | Validation Accuracy (%) | F1-Score | Overfitting Gap (Train-Val %) |
|---|---|---|---|
| 50 | 58.2 ± 3.1 | 0.55 | 28.5 |
| 200 | 75.6 ± 2.4 | 0.73 | 18.2 |
| 1000 | 88.9 ± 1.1 | 0.87 | 7.3 |
| 5000 | 93.4 ± 0.6 | 0.92 | 3.1 |

Protocol: Advanced Data Augmentation for Microscopy

Objective: Synthetically expand training datasets while preserving biological validity.

Materials: Raw image sets, augmentation library (e.g., Albumentations, TorchIO).

Procedure:

- Load & Normalize: Load 16-bit TIFF images. Apply percentile-based intensity normalization (e.g., 1st-99th percentile) to minimize background variance.

- Spatial Transformations: Apply random rotations (±15°), horizontal/vertical flips (p=0.5), and elastic deformations (α=100, σ=10) using B-spline interpolation.

- Photometric Augmentation: Introduce realistic noise: Add Gaussian noise (σ=0.01 * intensity range) and Poisson noise to simulate photon shot noise. Randomly adjust gamma contrast (range 0.7-1.3).

- Microscopy-Specific Artifacts: Simulate out-of-focus blur using random 2D Gaussian filtering (kernel size 1-3px). Apply pseudo-bleaching by linearly reducing intensity in a random quadrant by up to 20%.

- Validation: Visually inspect augmented images with a biologist to ensure phenotypic features remain plausible. Retrain the model on the augmented set and confirm that the gap between training and validation loss narrows.
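
Part of this procedure can be sketched with NumPy alone. The example below covers percentile normalization, flips, gamma adjustment, Poisson and Gaussian noise, and pseudo-bleaching; rotations, elastic deformation, and defocus blur are left to a dedicated library such as Albumentations and are not shown. The function names and the `photons_at_max` parameter are assumptions, and bleaching is approximated as a uniform dimming of one quadrant rather than a spatial gradient.

```python
import numpy as np

def percentile_normalize(image, low=1.0, high=99.0):
    """Map the low-high percentile range to 0-1 and clip the tails."""
    image = image.astype(np.float32)
    lo, hi = np.percentile(image, [low, high])
    if hi <= lo:
        return np.zeros_like(image)
    return np.clip((image - lo) / (hi - lo), 0.0, 1.0).astype(np.float32)

def augment(image, rng, photons_at_max=500.0):
    """Apply one random augmentation draw to a 2D image scaled to 0-1."""
    out = image.copy()
    if rng.random() < 0.5:
        out = np.flip(out, axis=1)  # horizontal flip
    if rng.random() < 0.5:
        out = np.flip(out, axis=0)  # vertical flip
    out = out ** rng.uniform(0.7, 1.3)  # gamma contrast
    # Shot noise: photons_at_max is an assumed photon count at intensity 1.0.
    out = rng.poisson(out * photons_at_max) / photons_at_max
    out = out + rng.normal(0.0, 0.01, size=out.shape)  # sigma = 1% of range
    # Pseudo-bleaching: dim one random quadrant by up to 20%.
    h, w = out.shape
    rows = slice(0, h // 2) if rng.random() < 0.5 else slice(h // 2, h)
    cols = slice(0, w // 2) if rng.random() < 0.5 else slice(w // 2, w)
    out[rows, cols] *= 1.0 - rng.uniform(0.0, 0.2)
    return np.clip(out, 0.0, 1.0).astype(np.float32)

# Synthetic 16-bit image standing in for a loaded TIFF.
rng = np.random.default_rng(0)
raw = rng.integers(0, 60000, size=(64, 64)).astype(np.uint16)
normalized = percentile_normalize(raw)
augmented = augment(normalized, rng)
```

Passing the random generator explicitly makes each augmented set reproducible from a seed, which helps when a biologist flags an implausible image and the exact draw has to be regenerated.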

Protocol: Strategic Cross-Dataset Pre-training

Objective: Leverage public datasets to initialize models.

Procedure:

- Source Selection: Identify relevant large-scale public datasets (e.g., ImageNet, Human Protein Atlas, RxRx1 for cell morphology).

- Domain Adaptation Pre-training: Train a CNN (e.g., ResNet50) on the source dataset. Replace final layer with a project-specific head (e.g., for cell count/classification).

- Feature Extraction & Fine-tuning: Freeze all convolutional layers. Train only the new head on your scarce target data (e.g., 200 images/class) for 50 epochs. Unfreeze all layers and conduct low-learning-rate (1e-5) fine-tuning for an additional 20 epochs.