AI in Retinal Imaging: Revolutionizing Disease Screening, Drug Discovery, and Personalized Medicine

This article provides a comprehensive analysis of AI-enhanced retinal imaging for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive analysis of AI-enhanced retinal imaging for researchers, scientists, and drug development professionals. It explores the foundational principles of AI and the retina as a biomarker window, details advanced methodologies for data processing and feature extraction, addresses key challenges in model robustness and clinical integration, and evaluates validation frameworks against traditional diagnostics. The review synthesizes how AI is transforming retinal analysis from a diagnostic tool into a quantitative, predictive, and scalable platform for systemic disease management and therapeutic development.

The Eye as a Window: Foundational Principles of AI in Retinal Biomarker Discovery

Application Notes: AI-Enhanced Retinal Biomarker Detection for Systemic Diseases

Recent advances in high-resolution retinal imaging and artificial intelligence have established the retina as a critical biomarker discovery platform for systemic health. AI models, particularly deep learning algorithms, can now quantify subtle, subclinical retinal vascular and neuronal changes that correlate with systemic disease progression and therapeutic response. This non-invasive approach is accelerating research in neurology, cardiology, and endocrinology.

Table 1: Quantitative Correlations Between Retinal Features and Systemic Diseases (Recent Meta-Analysis Findings)

| Systemic Disease | Retinal Feature | Quantitative Metric | Correlation Strength (Effect Size/OR/HR) | Primary Study Type |

|---|---|---|---|---|

| Alzheimer's Disease | Retinal Nerve Fiber Layer (RNFL) Thinning | Mean Thickness Reduction (μm) | -7.32 μm (95% CI: -10.99 to -3.65) | Cross-Sectional Meta-Analysis |

| Cognitive Decline | Fractal Dimension (FD) of Vasculature | Decrease in FD (unitless) | β = 0.12, p<0.001 per 0.01 FD decrease | Longitudinal Cohort |

| Diabetic Kidney Disease | Wider Retinal Venular Caliber | Central Retinal Venular Equivalent (CRVE) increase (μm) | HR 1.16 (1.08–1.25) per 5μm increase | Prospective Cohort |

| Cardiovascular Risk | Arteriolar-to-Venular Ratio (AVR) | Decrease in AVR (unitless) | OR 2.31 (1.45–3.67) for low AVR | Population-Based Study |

| Hypertension | Focal Arteriolar Narrowing | Arteriolar Caliber Reduction (μm) | β = -2.1 μm per 10mmHg SBP increase | Cross-Sectional Analysis |

| Multiple Sclerosis | Ganglion Cell-Inner Plexiform Layer (GCIPL) Thinning | Volume Reduction (mm³) | -0.03 mm³ (p=0.004) vs. controls | Case-Control Study |

Table 2: Performance of Representative AI Models for Systemic Disease Prediction from Retinal Images

| AI Model Architecture | Target Condition | Data Modality | Performance (AUC) | Key Biomarkers Identified |

|---|---|---|---|---|

| Deep Ensemble CNN | Chronic Kidney Disease (CKD) | Color Fundus Photography (CFP) | 0.82 (0.79–0.85) | Vascular tortuosity, exudates, hemorrhages |

| 3D Convolutional Neural Network | Alzheimer's Disease Progression | Optical Coherence Tomography (OCT) Volumes | 0.89 (0.86–0.92) | Inner plexiform layer thickness, drusen-like deposits |

| Transformer-based Network | Cardiovascular Mortality Risk | Ultra-Widefield CFP | 0.75 (0.72–0.78) | Peripheral vascular lesions, ischemic signs |

| Multimodal Fusion Network | Diabetic Complications (Neuropathy/Nephropathy) | CFP + OCT-Angiography (OCT-A) | 0.91 (0.88–0.94) | Perfused vessel density, foveal avascular zone geometry |

| Graph Neural Network | Stroke Risk | OCT-A Vessel Graphs | 0.78 (0.75–0.81) | Capillary network connectivity, bifurcation angles |

Experimental Protocols

Protocol 2.1: Acquisition of Multimodal Retinal Imaging Data for AI Biomarker Discovery

Objective: To standardize the acquisition of high-quality, annotated retinal imaging datasets for training and validating AI models predicting systemic health outcomes.

Materials: See "Research Reagent Solutions" table. Software: DICOM viewers, image registration toolkits (e.g., ANTs, Elastix).

Procedure:

- Participant Recruitment & Consent: Recruit participants with confirmed systemic disease diagnoses (e.g., via serum creatinine, brain MRI, cardiac echo) and matched controls. Obtain IRB-approved informed consent.

- Systemic Data Collection: Record relevant systemic variables: blood pressure, HbA1c, lipid panel, estimated glomerular filtration rate (eGFR), cognitive scores (e.g., MMSE).

- Pupillary Dilation: Instill 1% tropicamide per institutional protocol.

- Multimodal Imaging Session:

  a. Color Fundus Photography (CFP): Acquire 45°–50° macula-centered and optic disc-centered images per eye using a standardized fundus camera. Ensure even illumination and focus.

  b. Spectral-Domain Optical Coherence Tomography (SD-OCT): Perform a macular cube scan (e.g., 6x6mm, 512x128 scans) and a disc-centered scan. Check signal strength (>7/10).

  c. OCT-Angiography (OCT-A): Acquire 3x3mm and 6x6mm scans centered on the fovea. Use motion correction technology.

- Image De-identification & Annotation: Remove all PHI. Annotate images with ground truth labels (systemic diagnosis, clinical metrics) by certified graders masked to participant data.

- Quality Control & Preprocessing: Reject images with media opacities, poor fixation, or artifacts. Align multimodal images (e.g., register CFP to OCT) using feature-based algorithms.

- Data Curation for AI: Partition data into training (70%), validation (15%), and test (15%) sets, ensuring no patient overlap between sets.
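
The patient-level partition in the final step can be sketched as follows. This is a minimal illustration using only the Python standard library; the function name `patient_level_split`, the image identifiers, and the seed are hypothetical and not part of the protocol. The key idea is that patients, not images, are shuffled and assigned, so both eyes of one participant always land in the same set.

```python
import random
from collections import defaultdict

def patient_level_split(image_to_patient, fractions=(0.70, 0.15, 0.15), seed=42):
    """Assign whole patients (not individual images) to train/val/test."""
    patients = sorted(set(image_to_patient.values()))
    rng = random.Random(seed)
    rng.shuffle(patients)

    n_train = int(round(fractions[0] * len(patients)))
    n_val = int(round(fractions[1] * len(patients)))

    # Map each patient to exactly one partition.
    assignment = {}
    for i, pid in enumerate(patients):
        if i < n_train:
            assignment[pid] = "train"
        elif i < n_train + n_val:
            assignment[pid] = "val"
        else:
            assignment[pid] = "test"

    # Every image follows its patient's partition.
    splits = defaultdict(list)
    for image_id, pid in image_to_patient.items():
        splits[assignment[pid]].append(image_id)
    return dict(splits)

# Hypothetical cohort: 20 patients, one image per eye (OD/OS).
images = {f"P{p:02d}_{eye}": f"P{p:02d}" for p in range(20) for eye in ("OD", "OS")}
splits = patient_level_split(images)
print({name: len(ids) for name, ids in splits.items()})
```

In practice a stratified variant (balancing disease status across sets) may be preferable; this sketch only enforces the no-overlap constraint.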

Protocol 2.2: Development and Validation of a Deep Learning Model for Systemic Risk Prediction

Objective: To train and evaluate a convolutional neural network (CNN) for predicting a systemic outcome (e.g., reduced eGFR) from retinal images.

Materials: Curated dataset from Protocol 2.1, high-performance computing cluster with GPUs. Software: Python, PyTorch/TensorFlow, scikit-learn, OpenCV.

Procedure:

- Model Architecture Design: Implement a CNN (e.g., ResNet-50) with a modified final fully connected layer to output a binary or continuous prediction (e.g., eGFR <60 ml/min/1.73m²).

- Input Preprocessing: Resize all CFP images to a uniform resolution (e.g., 512x512 pixels). Apply normalization using ImageNet mean and standard deviation. Use data augmentation (random rotation, flipping, brightness adjustment) on the training set only.

- Model Training:

  a. Initialize model with pre-trained weights (ImageNet).

  b. Define loss function (e.g., cross-entropy for classification, mean squared error for regression) and optimizer (Adam).

  c. Train for up to a maximum number of epochs (e.g., 100) using mini-batches, stopping early if the validation loss stops improving.

- Interpretability Analysis: Apply Gradient-weighted Class Activation Mapping (Grad-CAM) to visualize retinal regions most influential for the model's prediction.

- Model Validation:

  a. Internal Validation: Evaluate on the held-out test set. Report AUC, sensitivity, specificity, precision, and calibration plots.

  b. External Validation: Test the finalized model on a completely independent dataset from a different institution or population to assess generalizability.

- Statistical Analysis: Calculate 95% confidence intervals for performance metrics via bootstrapping (n=1000 iterations).
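
The bootstrapped confidence interval in the last step can be illustrated with a short, dependency-free sketch. It assumes a percentile bootstrap over test-set cases and computes AUC as the fraction of positive/negative pairs ranked correctly; the function names, toy labels, and scores are hypothetical. A real analysis would more likely use scikit-learn or a dedicated statistics package.

```python
import random

def auc_score(labels, scores):
    """AUC as the probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def bootstrap_auc_ci(labels, scores, n_iter=1000, alpha=0.05, seed=0):
    """Percentile bootstrap CI: resample cases with replacement, recompute AUC each time."""
    rng = random.Random(seed)
    n = len(labels)
    stats = []
    while len(stats) < n_iter:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if len(set(ys)) < 2:
            continue  # skip resamples that contain only one class
        stats.append(auc_score(ys, [scores[i] for i in idx]))
    stats.sort()
    lo = stats[int(round((alpha / 2) * (n_iter - 1)))]
    hi = stats[int(round((1 - alpha / 2) * (n_iter - 1)))]
    return lo, hi

# Toy test set (hypothetical values, far smaller than a real cohort).
labels = [0, 0, 1, 1, 0, 1, 0, 1, 1, 0]
scores = [0.1, 0.4, 0.35, 0.8, 0.2, 0.9, 0.3, 0.7, 0.6, 0.5]
auc = auc_score(labels, scores)
ci_low, ci_high = bootstrap_auc_ci(labels, scores, n_iter=1000)
print(f"AUC = {auc:.2f}, 95% CI = ({ci_low:.2f}, {ci_high:.2f})")
```

When several images come from the same patient, resampling should be done at the patient level rather than the image level so the interval is not artificially narrow.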

Visualizations

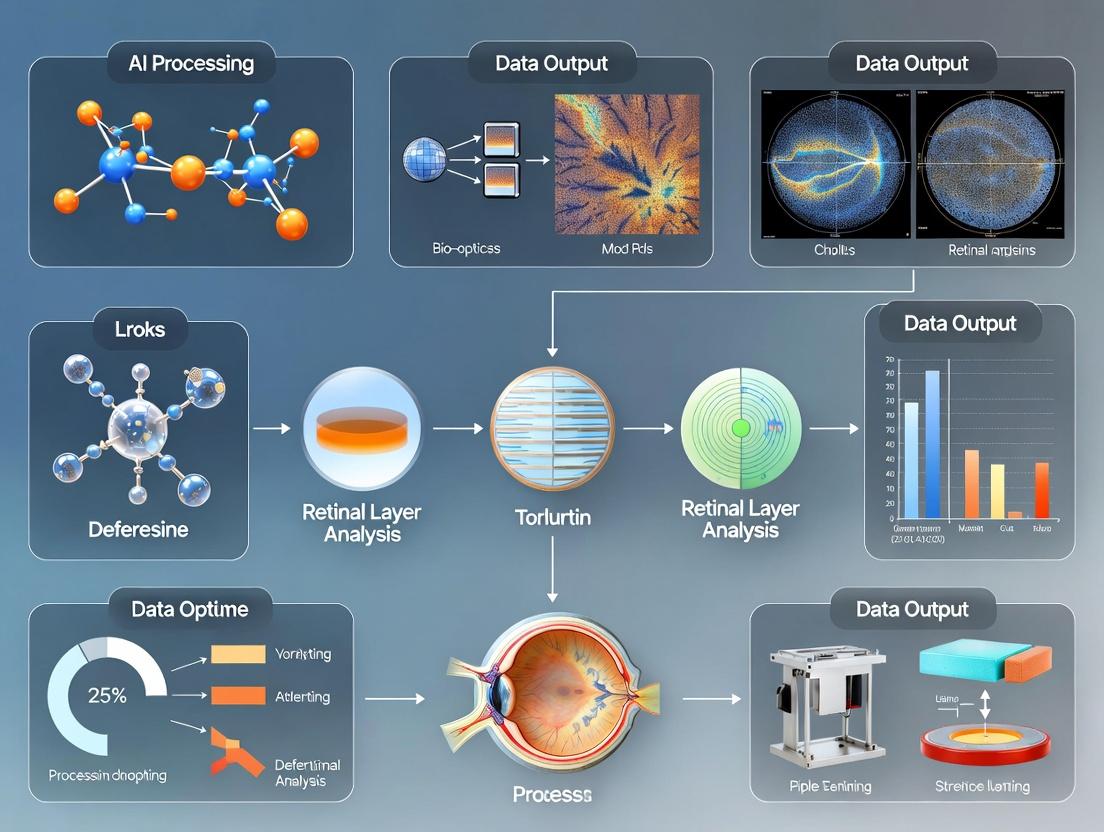

Figure: AI Links Retina to Systemic Health

Figure: Retinal Biomarker AI Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Enhanced Retinal Biomarker Research

| Item / Solution | Supplier Examples | Function in Research |

|---|---|---|

| Dilating Eye Drops (1% Tropicamide) | Alcon, Bausch + Lomb | Induces mydriasis for consistent, high-quality retinal image acquisition across modalities. |

| Spectral-Domain OCT System | Heidelberg Engineering, Zeiss, Topcon | Provides high-resolution, cross-sectional scans of retinal layers for quantitative thickness and reflectance analysis. |

| Ultra-Widefield Fundus Camera | Optos, Heidelberg Engineering | Captures peripheral retinal pathology, crucial for systemic conditions like sickle cell or autoimmune diseases. |

| OCT-Angiography Module | Zeiss, Nidek, Optovue | Enables non-invasive visualization of retinal vasculature and quantification of perfusion density, a key biomarker. |

| Validated Deep Learning Framework (PyTorch/TensorFlow) | Meta, Google | Open-source libraries for developing, training, and deploying custom AI models on retinal image data. |

| High-Performance Computing Cluster with GPUs (NVIDIA) | Various institutional providers | Provides the computational power necessary for training complex deep learning models on large imaging datasets. |

| DICOM & Image Management Database (e.g., OMERO) | Glencoe Software, Open Source | Securely stores, organizes, and annotates large-scale multimodal retinal imaging datasets for AI research. |

| Image Registration & Preprocessing Toolkit (ANTs) | Penn, Open Source | Aligns images from different modalities (CFP, OCT) to enable correlative, pixel-level biomarker analysis. |

This document, framed within a thesis on AI-enhanced retinal imaging applications research, details the evolution, application, and experimental protocols of core artificial intelligence (AI) paradigms. It serves as a technical reference for researchers, scientists, and drug development professionals working on quantitative analysis of retinal images for disease biomarker discovery and therapeutic efficacy assessment.

Evolution of AI Paradigms in Image Analysis

The analysis of medical images, particularly retinal fundus and optical coherence tomography (OCT) scans, has transitioned from manual feature engineering to automated deep feature learning.

Table 1: Comparative Analysis of AI Paradigms in Retinal Imaging

| Paradigm | Key Characteristics | Typical Accuracy on DR Detection | Data Efficiency | Interpretability | Primary Use Case in Retinal Imaging |

|---|---|---|---|---|---|

| Traditional Machine Learning (e.g., SVM, Random Forest) | Relies on handcrafted features (vessel tortuosity, exudate area). | 85-92% (Fundus) | High (100s of images) | High | Epidemiological studies, focused phenotype quantification. |

| Convolutional Neural Networks (CNNs) (e.g., ResNet, VGG) | Learns hierarchical features automatically from pixel data. | 93-98% (Fundus/OCT) | Medium (1000s of images) | Medium | Screening for Diabetic Retinopathy (DR), Age-related Macular Degeneration (AMD) classification. |

| Vision Transformers (ViTs) | Uses self-attention mechanisms to model global image dependencies. | 95-99% (OCT) | Low (10,000s+ images) | Low | Detailed segmentation of retinal layers, detection of novel biomarkers. |

| Multimodal Learning | Fuses data from different sources (e.g., OCT + Fundus + EHR). | N/A (Application-specific) | Very Low | Low | Predicting systemic disease (e.g., cardiovascular risk) from retinal images. |

Application Notes for Retinal Imaging

Traditional ML for Vessel Segmentation

- Application: Quantification of retinal vessel caliber as a biomarker for hypertensive retinopathy.

- Protocol: The Frangi filter is applied to a green channel fundus image to enhance tubular structures. Thresholding and skeletonization are followed by feature extraction (width, branching points). A Random Forest classifier distinguishes arteries from veins.

Deep Learning for Pathological Feature Detection

- Application: Automated grading of Diabetic Retinopathy (DR) severity.

- Protocol: A dataset of fundus images graded by clinicians is used. A pre-trained ResNet-50 model is fine-tuned using transfer learning. Data augmentation (rotation, flipping, brightness adjustment) is applied to prevent overfitting. Performance is evaluated on a hold-out test set against expert gradings.

Segmentation of OCT Biomarkers

- Application: Precise segmentation of retinal fluid (intraretinal fluid - IRF, subretinal fluid - SRF) in neovascular AMD.

- Protocol: A U-Net architecture is trained on pixel-wise annotated OCT B-scans. The model outputs probability maps for IRF, SRF, and retinal layers. Volumetric quantification of fluid over time serves as a primary endpoint in anti-VEGF therapy trials.
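
As a rough illustration of the volumetric quantification step, the sketch below converts a binary fluid mask into a volume in nanolitres. It assumes the probability map has already been thresholded to 0/1 and that the voxel spacing is known; the function name `fluid_volume_nl`, the spacing of 10 x 10 x 50 µm, and the toy masks are hypothetical.

```python
def fluid_volume_nl(mask, voxel_size_um):
    """Volume of a binary mask in nanolitres.

    mask: nested lists indexed [b_scan][row][col] with values 0 or 1.
    voxel_size_um: (dx, dy, dz) voxel spacing in micrometres.
    """
    n_voxels = sum(v for bscan in mask for row in bscan for v in row)
    dx, dy, dz = voxel_size_um
    return n_voxels * dx * dy * dz / 1e6  # 1 nL = 1e6 cubic micrometres

# Hypothetical masks: 2 B-scans of 10 x 10 pixels at two visits.
spacing = (10.0, 10.0, 50.0)
baseline = [[[1] * 10 for _ in range(10)] for _ in range(2)]   # 200 fluid voxels
followup = [[[1] * 10 for _ in range(10)],                     # 100 fluid voxels
            [[0] * 10 for _ in range(10)]]

v0 = fluid_volume_nl(baseline, spacing)
v1 = fluid_volume_nl(followup, spacing)
change_pct = 100.0 * (v1 - v0) / v0
print(f"baseline {v0:.2f} nL, follow-up {v1:.2f} nL, change {change_pct:.0f}%")
```

Tracking this value per visit gives the longitudinal fluid curve referred to above; scanner-specific spacing should be read from the image metadata rather than assumed.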

Experimental Protocols

Protocol 3.1: Benchmarking Classifiers for DR Detection

Objective: Compare the performance of a traditional ML pipeline vs. a CNN on a public dataset (e.g., Messidor-2).

Materials:

- Messidor-2 fundus image dataset.

- Pre-processed images (resized, normalized).

- For Traditional ML: Extracted features (Microaneurysm count, exudate area via morphological ops).

- For CNN: Raw pre-processed images.

Procedure:

- Data Partition: Split data into Training (70%), Validation (15%), Test (15%). Ensure stratification by DR grade.

- Traditional ML Pipeline:

  - Extract handcrafted features from the training set.

  - Train a Support Vector Machine (SVM) with RBF kernel using 5-fold cross-validation on the training set.

  - Tune hyperparameters (C, gamma) on the validation set.

  - Evaluate final model on the held-out test set.

- CNN Pipeline:

  - Load a pre-trained ResNet-34 model, replace the final fully connected layer.

  - Train the model using the training set images, with validation set monitoring for early stopping. Use Adam optimizer, cross-entropy loss.

  - Evaluate on the held-out test set.

- Analysis: Compare AUC-ROC, sensitivity, specificity, and F1-score for referable DR (≥ moderate DR).
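
The threshold-based metrics in the analysis step can be computed with a few lines of plain Python. The sketch assumes DR grades are coded 0 to 4 with grade 2 meaning moderate DR, which is a common convention but should be checked against the dataset's own documentation; the function names and toy grades are hypothetical.

```python
def binarize_referable(grades, threshold=2):
    """Map DR grades to referable (1) vs non-referable (0); grade >= 2 assumed to mean moderate or worse."""
    return [1 if g >= threshold else 0 for g in grades]

def screening_metrics(y_true, y_pred):
    """Sensitivity, specificity, precision and F1 from binary labels."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))

    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "precision": ratio(tp, tp + fp),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
    }

# Toy example: expert grades vs model-predicted grades for 8 images.
expert = [0, 1, 2, 3, 4, 0, 2, 1]
model = [0, 2, 2, 3, 1, 0, 2, 0]
metrics = screening_metrics(binarize_referable(expert), binarize_referable(model))
print(metrics)
```

Applying the same function to the SVM and CNN predictions on the identical test set keeps the comparison like-for-like.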

Protocol 3.2: U-Net for Retinal Layer Segmentation in OCT

Objective: Train a model to segment 7 retinal layers from a macular OCT B-scan.

Materials:

- Publicly available Duke OCT dataset with layer annotations.

- Computing environment with GPU support (e.g., NVIDIA V100).

Procedure:

- Pre-processing: Apply Gaussian filtering to reduce speckle noise. Normalize pixel intensity to [0,1]. Resize all B-scans to a uniform dimension (e.g., 512x512).

- Annotation: Use provided manual tracings as ground truth masks (7-class label map).

- Model Architecture: Implement a standard U-Net with encoder (contracting path) and decoder (expanding path) with skip connections.

- Training: Use a Dice Loss + Cross-Entropy Loss combination. Optimize with Adam (lr=1e-4). Train for 150 epochs with batch size 8.

- Validation & Testing: Monitor Dice Similarity Coefficient (DSC) per layer on the validation set. Report mean DSC and per-layer DSC on the test set.
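
To make the per-layer evaluation concrete, here is a minimal sketch of the Dice Similarity Coefficient computed per class on integer label maps. It uses a 3-class toy example for brevity (the protocol itself uses 7 layers), and the function name and arrays are hypothetical.

```python
def dice_per_class(pred, truth, n_classes):
    """Dice = 2|P ∩ T| / (|P| + |T|) for each class id in 2D label maps (lists of rows)."""
    scores = {}
    for c in range(n_classes):
        inter = p_count = t_count = 0
        for prow, trow in zip(pred, truth):
            for p, t in zip(prow, trow):
                p_count += p == c
                t_count += t == c
                inter += p == c and t == c
        denom = p_count + t_count
        # Class absent from both maps: Dice is undefined, report NaN.
        scores[c] = 2 * inter / denom if denom else float("nan")
    return scores

# Toy 3 x 4 label maps with three "layers" (0, 1, 2).
truth = [[0, 0, 1, 1],
         [0, 1, 1, 2],
         [2, 2, 2, 2]]
pred = [[0, 0, 1, 1],
        [0, 0, 1, 2],
        [2, 2, 2, 2]]

per_layer = dice_per_class(pred, truth, n_classes=3)
mean_dsc = sum(per_layer.values()) / len(per_layer)
print(per_layer, round(mean_dsc, 3))
```

Reporting the per-layer values alongside the mean matters because thin layers tend to score lower than thick ones and can be hidden by the average.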

Diagrams

Figure: AI Pipeline Comparison for Retinal Analysis

Figure: OCT Analysis for Therapy Assessment

The Scientist's Toolkit

Table 2: Essential Research Reagents & Solutions for AI Retinal Imaging Research

| Item | Function/Description | Example/Note |

|---|---|---|

| Public Retinal Datasets | Benchmarks for training and validating models. | Messidor-2 (Fundus, DR), Duke OCT Dataset (OCT, layers), RETOUCH (OCT, fluid). |

| Annotation Software | For creating pixel-wise or image-level ground truth labels. | ITK-SNAP, VGG Image Annotator (VIA), custom web-based tools. |

| Deep Learning Framework | Library for building, training, and deploying models. | PyTorch, TensorFlow/Keras. Preferred for research flexibility. |

| Medical Image Processing Library | Provides standard pre-processing and evaluation functions. | ITK, SimpleITK, OpenCV (for fundamental ops). |

| Model Weights (Pre-trained) | Enables transfer learning, reducing data requirements. | Models pre-trained on ImageNet (e.g., ResNet, DenseNet) or medical images (e.g., Models Genesis). |

| Performance Metrics Suite | Code to calculate standardized metrics for comparison. | Includes functions for AUC-ROC, Dice Score, Sensitivity/Specificity, Mean Absolute Error. |

| Computational Environment | GPU-accelerated hardware/cloud platform for model training. | NVIDIA GPUs (e.g., A100, V100), Google Colab Pro, AWS EC2 (P3 instances). |

| Statistical Analysis Software | For rigorous analysis of model performance and clinical correlations. | R, Python (SciPy, statsmodels), SAS. |

Application Notes: Within the broader thesis on AI-enhanced retinal imaging, a critical research pathway involves the systematic identification and quantification of specific anatomical landmarks and pathological lesions. This document details the current state of feature detection by AI models, derived from a synthesis of recent literature, providing structured data, protocols, and resources for translational research and clinical trial endpoint development.

Quantified Anatomical & Pathological Features in AI Training

Table 1: Key Retinal Features for AI Detection in Research & Development

| Feature Category | Specific Feature | Clinical/Research Significance | Common Imaging Modality | Representative Prevalence in Datasets* |

|---|---|---|---|---|

| Anatomical Landmarks | Optic Disc (ONH) | Reference point for screening; glaucoma assessment. | Fundus Photo, OCT | ~100% |

| Anatomical Landmarks | Fovea | Central vision; AMD and DME reference. | Fundus Photo, OCT | ~100% |

| Anatomical Landmarks | Retinal Vessels (Arteries/Veins) | Cardiovascular risk; diabetic changes. | Fundus Photo | ~100% |

| Pathological Lesions | Drusen (Hard/Soft) | Early & Intermediate AMD hallmark. | Fundus Photo, OCT | 30-50% in aging populations |

| Pathological Lesions | Geographic Atrophy (GA) | Advanced AMD (non-neovascular). | Fundus Photo, OCT | 5-10% in AMD cohorts |

| Pathological Lesions | Choroidal Neovascularization (CNV) | Neovascular AMD; requires urgent treatment. | OCT, OCT-A | 10-15% in AMD cohorts |

| Pathological Lesions | Microaneurysms | Earliest sign of Diabetic Retinopathy (DR). | Fundus Photo | 20-70% in diabetic populations |

| Pathological Lesions | Hemorrhages (Dot, Blot, Flame) | Key marker for DR severity. | Fundus Photo | 10-40% in diabetic populations |

| Pathological Lesions | Exudates (Hard) | Diabetic macular edema indicator. | Fundus Photo | 5-20% in diabetic populations |

| Pathological Lesions | Cotton Wool Spots | Retinal nerve fiber layer infarcts. | Fundus Photo | <5% in general screening |

| Pathological Lesions | Retinal Pigment Epithelium (RPE) Changes | AMD progression, drug toxicity. | OCT, FAF | Varies by disease |

| Structural Changes | Retinal Fluid (SRF, IRF) | Active neovascularization or DME. | OCT | >50% in nAMD/DME trials |

| Structural Changes | Epiretinal Membrane (ERM) | Macular distortion, visual impairment. | OCT | ~10% in elderly |

| Structural Changes | Macular Hole | Full-thickness retinal defect. | OCT | ~0.2% in adults |

*Prevalence estimates are generalized from recent public dataset analyses (e.g., Kaggle EyePACS, AREDS, UK Biobank) and are cohort-dependent.

Experimental Protocol: Validating AI Feature Detection for Clinical Trial Endpoints

Protocol Title: Independent Validation of a Novel AI Retinal Feature Quantifier Against Expert Grading in a Phase II AMD Study.

Objective: To assess the agreement and efficacy of an AI model (e.g., a multi-task segmentation network) in quantifying geographic atrophy (GA) area and intraretinal fluid (IRF) volume from OCT scans, compared to manual grading by a certified reading center.

Materials:

- Dataset: Retrospective SD-OCT volumes (e.g., Cirrus HD-OCT) from a Phase II AMD trial cohort (n=150 patients, ~2000 B-scans).

- AI Model: Pre-trained nnU-Net or custom DeepLabV3+ model for simultaneous GA and IRF segmentation.

- Ground Truth: Manually annotated masks by at least two independent retinal specialists, with adjudication.

- Software: Python (PyTorch), ITK-SNAP for manual correction, statistical analysis software (R, Python SciPy).

Methodology:

- Data Curation & Preprocessing:

  - De-identify all OCT volumes.

  - Apply standard preprocessing: normalization of pixel intensity to [0,1], resampling to uniform voxel size (e.g., 10µm x 10µm x 50µm).

  - Split data into training (60%), validation (20%), and hold-out test (20%) sets at the patient level.

- AI Model Inference & Post-processing:

  - Load the pre-trained model weights.

  - Run inference on the hold-out test set to generate binary segmentation masks for GA and IRF.

  - Apply connected-component analysis to remove spurious predictions below 5 contiguous pixels for GA and 3 for IRF.

- Quantitative Analysis & Validation:

  - Compute pixel-wise metrics (Dice Similarity Coefficient - DSC) and region-based metrics (Absolute Area/Volume Difference).

  - Perform statistical analysis: Bland-Altman plots for agreement on GA area (mm²) and IRF volume (nL), and linear regression against reading center grades.

  - Pre-defined success criterion: Mean DSC > 0.85 and mean absolute volume error < 15% for both features.
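
The agreement statistics behind a Bland-Altman plot, and the check against the pre-defined success criterion, can be sketched as below. The 1.96 multiplier assumes approximately normally distributed differences; the function names and the GA area values are hypothetical and serve only to show the arithmetic.

```python
from statistics import mean, stdev

def bland_altman(ai_values, reference_values):
    """Bias (mean difference) and 95% limits of agreement between two measurement methods."""
    diffs = [a - r for a, r in zip(ai_values, reference_values)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return {"bias": bias, "loa_lower": bias - 1.96 * sd, "loa_upper": bias + 1.96 * sd}

def mean_abs_pct_error(ai_values, reference_values):
    """Mean absolute error as a percentage of the reference measurement."""
    return 100.0 * mean(abs(a - r) / r for a, r in zip(ai_values, reference_values))

def meets_criterion(mean_dsc, abs_pct_error, dsc_min=0.85, error_max=15.0):
    """Success criterion from the protocol: mean DSC > 0.85 and mean absolute error < 15%."""
    return mean_dsc > dsc_min and abs_pct_error < error_max

# Hypothetical GA areas (mm^2): AI output vs reading-center grades for 4 eyes.
ai_area = [2.1, 3.9, 6.2, 8.0]
rc_area = [2.0, 4.0, 6.0, 8.0]

agreement = bland_altman(ai_area, rc_area)
error_pct = mean_abs_pct_error(ai_area, rc_area)
print(agreement, round(error_pct, 2), meets_criterion(mean_dsc=0.88, abs_pct_error=error_pct))
```

The plot itself is then the per-eye difference against the per-eye mean of the two methods, with horizontal lines at the bias and the two limits.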

Expected Outcomes: A validated AI tool capable of providing precise, reproducible quantifications of key pathological features, potentially serving as a secondary endpoint in subsequent clinical trials.

Visualizing the AI Retinal Analysis Workflow

Figure: AI Retinal Image Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI Retinal Feature Research

| Item / Solution | Function / Application | Example/Provider |

|---|---|---|

| Public Retinal Image Datasets | Provides standardized, often annotated data for training and benchmarking AI models. | Kaggle Diabetic Retinopathy, AREDS, UK Biobank, RETOUCH Challenge. |

| Annotation Software | Enables expert manual labeling of anatomical and pathological features to create ground truth data. | ITK-SNAP, VGG Image Annotator (VIA), Labelbox. |

| Deep Learning Frameworks | Provides libraries and tools to build, train, and validate custom AI detection models. | PyTorch, TensorFlow, MONAI (for medical imaging). |

| Cloud GPU Compute Platform | Offers scalable computational power for training large AI models on extensive image datasets. | Google Cloud AI Platform, Amazon SageMaker, Azure Machine Learning. |

| Medical Image Processing Libraries | Facilitates domain-specific preprocessing, augmentation, and evaluation of retinal images. | Python: OpenCV, SimpleITK, NumPy, SciKit-Image. |

| Statistical Analysis Software | Used for rigorous validation of AI model performance against clinical benchmarks. | R, Python (SciPy, Statsmodels), GraphPad Prism. |

| DICOM & Image Format Converters | Ensures interoperability between clinical imaging systems and research pipelines. | dcm4che, PyDicom, ImageJ. |

Within AI-enhanced retinal imaging research, the retina is established as a unique, accessible window to systemic health. This document provides Application Notes and Protocols for investigating established retinal biomarkers of neurodegenerative (e.g., Alzheimer's, Parkinson's), cardiovascular (e.g., hypertension, stroke), and metabolic (e.g., diabetes) diseases. The integration of multimodal imaging with AI analysis is central to quantifying these signs and discovering novel biomarkers.

Application Notes: Key Biomarkers and Quantitative Findings

The following table summarizes key quantitative retinal changes associated with systemic diseases, derived from recent meta-analyses and cohort studies.

Table 1: Quantitative Retinal Biomarkers in Systemic Diseases

| Disease Category | Specific Condition | Retinal Layer/Biomarker | Quantitative Change (vs. Healthy) | Imaging Modality |

|---|---|---|---|---|

| Neurodegenerative | Alzheimer's Disease | Macular Ganglion Cell-Inner Plexiform Layer (GC-IPL) Thickness | ↓ 5.1 μm (95% CI: -6.7 to -3.5) | SD-OCT |

| Neurodegenerative | Alzheimer's Disease | Retinal Nerve Fiber Layer (RNFL) Thickness | ↓ 4.6 μm (95% CI: -6.1 to -3.1) | SD-OCT |

| Neurodegenerative | Alzheimer's Disease | Retinal Amyloid-β Plaque Burden | ↑ 2.3-fold fluorescence intensity | CURIO Amyloid Imaging |

| Neurodegenerative | Parkinson's Disease | Foveal Pit Volume | ↓ 0.003 mm³ (p<0.01) | HD-OCT |

| Neurodegenerative | Parkinson's Disease | Peripapillary RNFL Thickness | ↓ 7.2 μm in temporal quadrant | SD-OCT |

| Cardiovascular | Hypertension | Arteriolar-to-Venular Ratio (AVR) | ↓ 0.15 units (per 10mmHg ↑) | Fundus Photography |

| Cardiovascular | Hypertension | Retinal Artery Wall Thickness | ↑ 4.8 μm (95% CI: 3.2-6.4) | Adaptive Optics |

| Cardiovascular | Stroke & Cognitive Decline | Retinal Fractal Dimension (Vessel Complexity) | ↓ 0.02 units (Df) | AI-assisted Vessel Analysis |

| Metabolic | Diabetic Retinopathy (DR) | DR Prevalence (moderate+) in Type 2 Diabetes | 28.5% (global prevalence) | Multimodal |

| Metabolic | Diabetic Retinopathy (DR) | Retinal Venular Diameter | ↑ 6.4% in pre-diabetes | Dynamic Vessel Analysis |

| Metabolic | Diabetic Macular Edema | Central Subfield Thickness (CST) | > 320 μm threshold for CSME | OCT |

| Metabolic | Diabetic Macular Edema | Hyperreflective Foci Count | > 20 foci correlates with HbA1c >8% | OCT |

Detailed Experimental Protocols

Protocol 2.1: Multimodal Retinal Biomarker Acquisition for AI Model Training

Objective: To standardize the acquisition of retinal images for developing AI models that predict systemic disease risk.

Materials: Spectral-Domain OCT (SD-OCT), Color Fundus Camera, Adaptive Optics Scanning Laser Ophthalmoscope (AOSLO), Dedicated Amyloid Fluorescence Imaging System (e.g., CURIO), Pupil Dilation Drops.

- Participant Preparation & Ethics: Obtain informed consent. Dilate pupils (Tropicamide 1% + Phenylephrine 2.5%). Document medical history.

- Synchronized Multimodal Imaging:

- Step A (Fundus & Vessel Maps): Acquire 50° FOV centered on macula and optic disc. Ensure clarity for vessel segmentation.

- Step B (SD-OCT Volumes): Acquire 6x6mm macular cube (512x128 scans) and peripapillary circle scan (3.4mm diameter, 100 avg.). Ensure signal strength >7.

- Step C (Functional/Advanced Imaging): If applicable, perform AOSLO for photoreceptor/vasculature metrics or fluorescence imaging post-injection of targeting probe.

- Data Preprocessing for AI: Anonymize all images. Co-register fundus, OCT, and AOSLO images using fiducial points. Have two independent graders manually label ground truth (e.g., layer boundaries, vessel classes, lesions), and resolve discrepancies with a third senior grader. Export in standardized format (e.g., .nii for volumes, .png for fundus).

Protocol 2.2: Quantifying Retinal Neurovascular Coupling (RNC) in Hypertension

Objective: To assess dynamic RNC as an early biomarker of cerebral microvascular dysfunction.

Materials: Dynamic Vessel Analyzer (DVA), 530nm & 660nm light sources, Gas Challenge Unit (5% CO₂, 95% O₂), Analysis Software.

- Baseline Calibration: Seat participant, dark adapt for 10 mins. Focus DVA on superior temporal arteriole and adjacent venule. Record baseline diameters for 1 minute.

- Flicker Light Stimulus & Gas Challenge: Apply 530nm diffuse flicker light (12.5Hz, 20 secs). Record vessel diameters for 60 secs post-stimulus. After 10-min rest, administer a hypercapnic gas challenge (5% CO₂) via mask for 2 mins while recording.

- Analysis: Calculate % diameter change from baseline for arteriole and venule. Compute RNC ratio: (Arteriolar Dilation %)/(Venular Dilation %). Compare to normative database (Hypertensive: RNC Ratio typically <1.8 vs. Normotensive: ~2.3).
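
The arithmetic in the analysis step is simple enough to show directly. The sketch below follows the RNC ratio as defined in this protocol (arteriolar dilation % divided by venular dilation %); the function names and the diameter values are hypothetical, chosen so the numbers are easy to follow.

```python
def percent_change(baseline_um, peak_um):
    """Percent diameter change from baseline."""
    return 100.0 * (peak_um - baseline_um) / baseline_um

def rnc_ratio(art_baseline, art_peak, ven_baseline, ven_peak):
    """RNC ratio as defined above: arteriolar dilation (%) / venular dilation (%)."""
    arteriolar = percent_change(art_baseline, art_peak)
    venular = percent_change(ven_baseline, ven_peak)
    if venular == 0:
        raise ValueError("Venular dilation is zero; the ratio is undefined")
    return arteriolar / venular

# Hypothetical flicker response: arteriole 100 -> 104 um, venule 125 -> 127.5 um.
art_pct = percent_change(100.0, 104.0)   # 4% dilation
ven_pct = percent_change(125.0, 127.5)   # 2% dilation
ratio = rnc_ratio(100.0, 104.0, 125.0, 127.5)
print(f"arteriolar {art_pct:.1f}%, venular {ven_pct:.1f}%, RNC ratio {ratio:.2f}")
```

Small venular responses make the ratio unstable, so it is worth reporting the two percentage changes alongside it.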

Protocol 2.3: Ex Vivo Retinal Tissue Analysis for Neurodegenerative Proteinopathy

Objective: To validate in vivo amyloid imaging via histopathological correlation in post-mortem retinal tissue.

Materials: Donor eye globes, 4% PFA, Cryostat, Antibodies (Anti-Aβ, Anti-pTau, Anti-GFAP), Confocal Microscope.

- Tissue Processing: Fix globe in 4% PFA for 24h. Dissect the retina, then either flat-mount it or embed it in OCT (optimal cutting temperature) compound for 12μm cryostat cross-sections.

- Immunohistochemistry: Permeabilize with 0.3% Triton X-100. Block with 5% BSA/10% normal goat serum. Incubate with primary antibodies (1:200, 24h, 4°C). Apply fluorescent secondary antibodies (1:500, 2h). Counterstain with DAPI.

- Imaging & Quantification: Image using confocal microscope (20x, 63x). Co-localize fluorescence with OCT landmarks. Quantify plaque count/mm² or fluorescence intensity per region using ImageJ/FIJI software.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials for Retinal-Systemic Disease Studies

| Item / Reagent | Supplier Examples | Function in Research |

|---|---|---|

| Spectralis HRA+OCT | Heidelberg Engineering | Gold-standard multimodal platform for simultaneous OCT and angiography; critical for longitudinal biomarker tracking. |

| CURIO Imaging Agent | NeuroVision Imaging | Fluorescent ligand that binds retinal amyloid-β; enables in vivo quantification of Alzheimer's-related pathology. |

| Anti-Amyloid-β (Clone 6E10) | BioLegend, Covance | Primary antibody for detecting and quantifying amyloid-β plaques in ex vivo retinal tissue via IHC. |

| Dynamic Vessel Analyzer (DVA) | Imedos Systems | Measures real-time retinal vessel diameter changes in response to stimuli; assesses neurovascular coupling health. |

| Adaptive Optics | Canon, Physical Sciences Inc. | Enables cellular-resolution imaging of retinal neurons (ganglion cells) and capillaries for subtle metric analysis. |

| AI Model Development Suite | NVIDIA Clara, TensorFlow | Provides infrastructure for training deep learning models on large retinal image datasets for biomarker discovery. |

| Human Retinal Tissue Biobank | NDRI, Eye-Bank for Sight Restoration | Provides post-mortem retinal tissues essential for histological validation of in vivo imaging biomarkers. |

The development of robust, generalizable AI models for retinal imaging analysis is critically dependent on large-scale, well-annotated datasets. Within the context of a thesis on AI-enhanced retinal imaging applications research, access to standardized public repositories for Optical Coherence Tomography (OCT), Fundus Photography, and Angiography is foundational. These repositories enable benchmarking, facilitate transfer learning, and accelerate translational research for scientists and drug development professionals.

Optical Coherence Tomography (OCT) Datasets

OCT provides high-resolution cross-sectional and volumetric imagery of retinal layers, crucial for diagnosing age-related macular degeneration (AMD), diabetic macular edema (DME), and glaucoma.

Table 1: Major Public OCT Datasets

| Dataset Name | Source/Institution | Volume (Images/Scans) | Key Pathologies | Annotation Type | Primary Use Case |

|---|---|---|---|---|---|

| Kermany 2018 (OCT2017) | UCSD, Shiley Eye Institute | 108,312 images | CNV, DME, Drusen, Normal | Image-level classification | Disease classification, model pre-training |

| Duke OCT Dataset | Duke University | 384,000 B-scans from 1,351 patients | AMD, DME, RVO | Fluid segmentation, retinal layer maps | Segmentation, biomarker quantification |

| AIROGS | Multiple EU centers | > 110,000 images (color fundus photographs, not OCT scans) | Referable Glaucoma | Referability grading (normal/abnormal) | Glaucoma screening AI |

| OCTID | Isfahan University of Medical Sciences | 500+ volumes | AMD, DME, CSR, Normal | Volume-level classification | 3D OCT analysis |

Fundus Photography Datasets

Fundus photography captures 2D color images of the posterior pole, essential for screening diabetic retinopathy (DR), glaucoma, and other vascular pathologies.

Table 2: Major Public Fundus Photography Datasets

| Dataset Name | Source/Institution | Volume (Images) | Key Pathologies/Grades | Annotation Type | Notable Features |

|---|---|---|---|---|---|

| EyePACS | Kaggle/California Screening Program | 88,702 images | DR (5-scale severity) | Image-level grading | Large-scale, real-world variability |

| APTOS 2019 | Asia Pacific Tele-Ophthalmology Society | 3,662 images | DR (5-scale severity) | Image-level grading | High-quality, expert-graded |

| RFMiD | Kasturba Medical College, India | 3,200 images | 46 retinal diseases | Multi-label classification | Broad multi-disease scope |

| REFUGE Challenge | Multiple (MESSIDOR, etc.) | 1,200 images | Glaucoma, Optic Disc/Cup | Disc/cup segmentation, glaucoma classification | Paired fundus & OCT, standard benchmarks |

Angiography Datasets (OCTA & FA/ICGA)

Angiography, including OCT Angiography (OCTA) and traditional Fluorescein/Indocyanine Green Angiography (FA/ICGA), visualizes retinal and choroidal vasculature.

Table 3: Major Public Angiography Datasets

| Dataset Name | Modality | Volume | Key Pathologies | Annotation Type | Application Focus |

|---|---|---|---|---|---|

| ROSE Projects | OCTA | 229 subjects (both eyes) | Diabetic Retinopathy | Vessel segmentation, FAZ quantification | Vascular network analysis |

| OCTA-500 | OCTA | 500 subjects | Multiple (Normal, DR, AMD, etc.) | Vessel, FAZ, Retinal Layer | Comprehensive 3D OCTA |

| AFIO | FA | 106 subjects | Uveitis, Vasculitis | Image-level diagnosis, lesion marking | Inflammatory disease analysis |

Detailed Experimental Protocols for Utilizing Public Datasets

Protocol: Training a Multi-Disease Classifier Using Pooled Public Datasets

Aim: To develop a robust CNN model for simultaneous detection of DR, AMD, and Glaucoma from fundus images using multiple public sources.

Materials:

- Data Sources: EyePACS (DR), REFUGE (Glaucoma), ODIR (mixed diseases).

- Software: Python 3.8+, PyTorch/TensorFlow, OpenCV, scikit-learn.

- Hardware: GPU with ≥8GB VRAM (e.g., NVIDIA V100, RTX 3090).

Procedure:

Data Harmonization:

- Download datasets from official sources.

- Standardize image resolution to 512x512 pixels using bilinear interpolation.

- Apply uniform color normalization (e.g., per-channel histogram matching to a reference image) to correct for inter-device variability.

- Convert all diagnosis labels to a unified multi-hot encoding scheme [DR, AMD, Glaucoma, Normal].
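The label unification step can be sketched as follows. This is a minimal illustration, not the protocol's own code: the helper names and the rule that maps an EyePACS DR grade to a finding are assumptions, and a grade of 0 only means "no DR", so treating it as "Normal" is a simplification that should be reviewed for each source dataset.

```python
import numpy as np

# Unified label order taken from the protocol above.
CLASSES = ["DR", "AMD", "Glaucoma", "Normal"]


def to_multi_hot(findings):
    """Map a collection of class names to a multi-hot vector in CLASSES order."""
    unknown = set(findings) - set(CLASSES)
    if unknown:
        raise ValueError(f"Unmapped labels: {sorted(unknown)}")
    vec = np.zeros(len(CLASSES), dtype=np.float32)
    for name in findings:
        vec[CLASSES.index(name)] = 1.0
    return vec


def eyepacs_grade_to_findings(grade, dr_threshold=1):
    """Hypothetical mapping from a 0-4 DR grade to findings (threshold is an assumption)."""
    return ["DR"] if grade >= dr_threshold else ["Normal"]


# Example: an image labeled with both DR and glaucoma.
example = to_multi_hot(["DR", "Glaucoma"])
```

Keeping the class order in a single constant makes it harder for per-dataset converters to drift out of sync.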

Data Partitioning:

- Create a patient-wise split (70% training, 15% validation, 15% testing) to prevent data leakage. Ensure no patient's images appear in more than one set.
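A patient-wise split can be sketched with the standard library alone. The function name, the `(patient_id, image_path)` record layout, and the seed are illustrative assumptions. Because whole patients are assigned to a split, the image-level proportions will only approximate 70/15/15.

```python
import random
from collections import defaultdict


def patient_wise_split(records, fractions=(0.70, 0.15, 0.15), seed=42):
    """Split (patient_id, image_path) records so each patient lands in exactly one set."""
    by_patient = defaultdict(list)
    for patient_id, image_path in records:
        by_patient[patient_id].append(image_path)

    patients = sorted(by_patient)            # sort first so the shuffle is reproducible
    random.Random(seed).shuffle(patients)

    n_train = int(round(fractions[0] * len(patients)))
    n_val = int(round(fractions[1] * len(patients)))
    groups = {
        "train": patients[:n_train],
        "val": patients[n_train:n_train + n_val],
        "test": patients[n_train + n_val:],
    }
    # Expand patient IDs back to their images.
    return {
        name: [(pid, path) for pid in ids for path in by_patient[pid]]
        for name, ids in groups.items()
    }


# Example with 20 synthetic patients, two images each.
demo_records = [(f"P{i:03d}", f"P{i:03d}_{eye}.png") for i in range(20) for eye in ("OD", "OS")]
demo_split = patient_wise_split(demo_records)
```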

Model Training (EfficientNet-B4):

- Preprocessing: Apply on-the-fly augmentation: random rotation (±15°), horizontal/vertical flip, brightness/contrast adjustment (±10%).

- Initialization: Load weights pre-trained on ImageNet.

- Loss Function: Use Binary Cross-Entropy loss for multi-label classification.

- Optimization: Train for 50 epochs using AdamW optimizer (lr=1e-4, weight_decay=1e-5) with a cosine annealing scheduler.

- Validation: Monitor validation loss and per-pathology AUC. Implement early stopping with patience=10 epochs.
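The schedule and stopping rule named above can be written framework-independently. The sketch below assumes a minimum learning rate of 0 and a single cosine cycle over the 50 epochs, neither of which the protocol specifies. Deep learning frameworks ship their own schedulers, and this only illustrates the arithmetic.

```python
import math


def cosine_annealing_lr(epoch, total_epochs=50, lr_max=1e-4, lr_min=0.0):
    """Learning rate at a given epoch for one cosine cycle (lr_min is an assumption)."""
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * epoch / total_epochs))


class EarlyStopping:
    """Signal a stop after `patience` consecutive epochs without validation improvement."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a training loop, `step` would be called once per epoch with the validation loss, and the best checkpoint saved whenever `bad_epochs` resets to zero.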

Evaluation:

- Report per-class and macro-average Precision, Recall, F1-Score, and AUC-ROC on the held-out test set.

- Perform Grad-CAM visualization to ensure model focuses on anatomically plausible regions.
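Per-class and macro-averaged metrics for multi-label predictions can be computed directly from binarized outputs. The sketch below assumes predictions have already been thresholded (the threshold itself is a separate design choice), and the function name is illustrative.

```python
import numpy as np


def per_class_prf(y_true, y_pred):
    """Precision, recall, and F1 per class for (n_samples, n_classes) binary arrays."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    tp = (y_true & y_pred).sum(axis=0).astype(float)
    fp = (~y_true & y_pred).sum(axis=0).astype(float)
    fn = (y_true & ~y_pred).sum(axis=0).astype(float)

    # Classes with no predictions or no positives get a score of 0 instead of NaN.
    precision = np.divide(tp, tp + fp, out=np.zeros_like(tp), where=(tp + fp) > 0)
    recall = np.divide(tp, tp + fn, out=np.zeros_like(tp), where=(tp + fn) > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "macro_f1": float(f1.mean()),
    }
```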

Protocol: Quantitative Biomarker Extraction from OCT Angiography

Aim: To quantify retinal ischemia by segmenting the Foveal Avascular Zone (FAZ) and measuring vessel density from OCTA scans.

Materials:

- Dataset: ROSE-1 (OCTA for DR).

- Software: Python, ITK-SNAP for manual correction, custom vessel segmentation scripts (U-Net based).

- Hardware: Workstation with GPU.

Procedure:

Preprocessing of OCTA Volumes:

- Load 3x3mm or 6x6mm en face OCTA projections.

- Apply contrast-limited adaptive histogram equalization (CLAHE) to enhance vessel contrast.

- Use a median filter (3x3 kernel) to reduce speckle noise.

FAZ Segmentation:

- Train a U-Net model on manually annotated FAZ masks.

- Input: Preprocessed en face image. Output: Binary FAZ mask.

- Post-process prediction using morphological closing to smooth boundaries.

- Quantification: Calculate FAZ area (mm²), perimeter, and circularity index.
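The FAZ metrics can be derived from the binary mask once the pixel spacing is known. The sketch below assumes isotropic pixels and uses the common circularity definition 4πA/P². Its perimeter estimate counts exposed pixel edges, which is exact for axis-aligned shapes but overestimates curved boundaries, so a contour-based estimator would usually be preferred in practice.

```python
import math

import numpy as np


def faz_metrics(mask, pixel_size_mm):
    """Area (mm^2), perimeter (mm), and circularity of a binary FAZ mask."""
    mask = np.asarray(mask).astype(bool)
    area_mm2 = float(mask.sum()) * pixel_size_mm ** 2

    # Count foreground pixel edges that face a background 4-neighbour.
    padded = np.pad(mask, 1, constant_values=False)
    core = padded[1:-1, 1:-1]
    neighbours = (padded[:-2, 1:-1], padded[2:, 1:-1], padded[1:-1, :-2], padded[1:-1, 2:])
    exposed_edges = sum(int((core & ~n).sum()) for n in neighbours)
    perimeter_mm = exposed_edges * pixel_size_mm

    circularity = (
        4.0 * math.pi * area_mm2 / perimeter_mm ** 2 if perimeter_mm > 0 else float("nan")
    )
    return {"area_mm2": area_mm2, "perimeter_mm": perimeter_mm, "circularity": circularity}
```

The pixel size should come from the scan metadata (for example, 3 mm divided by the en face image width in pixels for a 3x3 mm scan).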

Vessel Density Calculation:

- Apply a second U-Net for vessel segmentation (capillaries and larger vessels).

- Binarize the output. Optionally skeletonize the vessel map to derive a vessel length (skeleton) density as a separate metric.

- Quantification: Vessel Density = (Total white pixels in binarized vessel mask / Total pixels in ROI) * 100%.

- Calculate density in specific zones (e.g., foveal, parafoveal) defined by ETDRS grid overlay.
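The density formula and zone-wise calculation can be sketched with NumPy. The ring radii below follow the standard ETDRS grid (1 mm diameter central circle, 3 mm diameter inner ring). The fovea center and pixel size are assumed to come from upstream steps, and the function names are illustrative.

```python
import numpy as np


def annulus_mask(shape, center_rc, inner_mm, outer_mm, pixel_size_mm):
    """Boolean mask of pixels whose distance from center lies in [inner_mm, outer_mm)."""
    rows, cols = np.indices(shape)
    dist_mm = np.hypot(rows - center_rc[0], cols - center_rc[1]) * pixel_size_mm
    return (dist_mm >= inner_mm) & (dist_mm < outer_mm)


def vessel_density_percent(vessel_mask, roi_mask):
    """Percentage of ROI pixels that are vessel pixels in the binarized mask."""
    vessel_mask = np.asarray(vessel_mask).astype(bool)
    roi_mask = np.asarray(roi_mask).astype(bool)
    roi_pixels = int(roi_mask.sum())
    if roi_pixels == 0:
        raise ValueError("ROI mask is empty")
    return 100.0 * float((vessel_mask & roi_mask).sum()) / roi_pixels


def etdrs_zone_densities(vessel_mask, fovea_rc, pixel_size_mm):
    """Vessel density in the foveal (radius < 0.5 mm) and parafoveal (0.5-1.5 mm) zones."""
    shape = np.asarray(vessel_mask).shape
    foveal = annulus_mask(shape, fovea_rc, 0.0, 0.5, pixel_size_mm)
    parafoveal = annulus_mask(shape, fovea_rc, 0.5, 1.5, pixel_size_mm)
    return {
        "foveal": vessel_density_percent(vessel_mask, foveal),
        "parafoveal": vessel_density_percent(vessel_mask, parafoveal),
    }
```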

Statistical Correlation:

- Correlate FAZ area and vessel density metrics with DR severity grade using Spearman's rank correlation.
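Spearman's rank correlation is the Pearson correlation of the ranks, with tied values (common for ordinal DR grades) assigned their average rank. The NumPy sketch below illustrates that definition. It does not compute a p-value, for which a statistics package would normally be used.

```python
import numpy as np


def average_ranks(values):
    """1-based ranks, with ties assigned the mean of the ranks they span."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(values, kind="mergesort")
    sorted_vals = values[order]
    ranks = np.empty(len(values), dtype=float)
    i = 0
    while i < len(values):
        j = i
        while j + 1 < len(values) and sorted_vals[j + 1] == sorted_vals[i]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman rank correlation coefficient between two equal-length sequences."""
    rx, ry = average_ranks(x), average_ranks(y)
    return float(np.corrcoef(rx, ry)[0, 1])
```

For example, `spearman_rho(dr_grades, faz_areas)` would take one DR grade and one FAZ area per eye, where both variable names are placeholders.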

Diagrams & Workflows

Title: AI Retinal Research Workflow Using Public Data

Title: OCTA Biomarker Quantification Pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Research Reagent Solutions for Retinal AI Experimentation

| Item/Category | Function & Purpose | Example/Note |

|---|---|---|

| Public Dataset Suites | Provides standardized, annotated data for training and benchmarking. | Kaggle Diabetic Retinopathy, OCT2017. Essential for reproducibility. |

| Deep Learning Frameworks | Infrastructure for building, training, and deploying neural network models. | PyTorch, TensorFlow/Keras. Enable custom architecture design. |

| Medical Image Libraries | Specialized tools for reading, preprocessing, and augmenting medical images. | MONAI, ITK, OpenCV. Handle DICOM, NIfTI formats and spatial transforms. |

| Annotation & QC Platforms | Facilitate expert labeling and review of ground truth data. | CVAT, QuPath, ITK-SNAP. Critical for segmentation tasks. |

| High-Performance Computing (HPC) | Accelerates model training on large volumetric datasets (OCT, OCTA). | Cloud GPUs (AWS, GCP), On-premise Clusters. Necessary for 3D CNN training. |

| Statistical Analysis Software | For rigorous evaluation of model performance and biomarker correlations. | R, Python (SciPy, statsmodels). Compute p-values, AUC, confidence intervals. |

| Model Explainability Toolkits | Generates visual explanations of model predictions to build clinical trust. | Grad-CAM, SHAP, Captum. Highlights influential image regions for diagnosis. |

From Pixels to Predictions: Methodologies and Translational Applications in Research & Pharma

Within the broader thesis on AI-enhanced retinal imaging applications research, a robust and reproducible pipeline is fundamental. This protocol details the integrated workflow for acquiring, preparing, augmenting, and qualifying retinal image data to train and validate diagnostic AI models. This pipeline ensures data integrity, mitigates bias, and is critical for applications in clinical research and therapeutic development.

Application Notes & Protocols

Image Acquisition Protocol

Objective: Standardize the capture of high-quality retinal fundus and OCT images from human subjects.

Instruments: Table-top fundus camera (e.g., Zeiss Visucam), Spectral-Domain OCT device (e.g., Heidelberg Spectralis).

Protocol:

- Patient Preparation & Consent: Obtain IRB-approved informed consent. Dilate pupil using 1% tropicamide.

- Device Calibration: Perform daily built-in calibration routines. Set device to "Research Mode" for raw data output.

- Image Capture Sequence:

a. Fundus Imaging: Capture 50° FOV centered on macula and optic disc. Minimum of 3 images per eye (macula-centered, disc-centered, and an additional for redundancy). Save in lossless format (.tiff or proprietary .e2e).

b. OCT Imaging: Perform volumetric macular scan (30°x25°, 61 B-scans). Ensure signal strength index (SSI) > 25 (Heidelberg) or equivalent.

- Data Export & Anonymization: Use vendor software to export de-identified images with structured filename (e.g., StudyID_Eye_Date_Modality.tiff). Store associated metadata in a separate, secure, pseudonymized database.
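One way to build the structured filename with a pseudonymous study ID is a keyed hash. The sketch below is an assumption-laden illustration: the function names, the 12-character ID length, and the use of HMAC-SHA256 are choices made here and not part of the protocol. The secret key must be stored separately from the images. Whether an exact acquisition date may appear in a filename depends on the applicable de-identification policy.

```python
import hashlib
import hmac


def pseudonymize_id(patient_id, secret_key, length=12):
    """Derive a stable pseudonymous study ID from a patient ID with a keyed hash."""
    digest = hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:length]


def export_filename(patient_id, eye, date_yyyymmdd, modality, secret_key):
    """Build 'StudyID_Eye_Date_Modality.tiff' without exposing the original patient ID."""
    if eye not in ("OD", "OS"):
        raise ValueError("eye must be 'OD' or 'OS'")
    study_id = pseudonymize_id(patient_id, secret_key)
    return f"{study_id}_{eye}_{date_yyyymmdd}_{modality}.tiff"


# Example with a placeholder key; a real key would come from a secrets store.
example_name = export_filename("MRN-0012345", "OD", "20240115", "FUNDUS", b"demo-key")
```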

Image Preprocessing Protocol

Objective: Normalize images to reduce inter-device and inter-patient variability, enhancing model generalizability.

Input: Raw retinal images (Fundus, OCT volumes).

Software: Python with OpenCV, NumPy, and custom scripts.

Methodology for Fundus Images:

- Green Channel Extraction: Extract the green channel from RGB fundus images for optimal vessel contrast.

- Illumination Correction: Apply Contrast Limited Adaptive Histogram Equalization (CLAHE) with a clip limit of 2.0 and tile grid size of 8x8.

- Vignetting Removal: Model background via morphological opening (disk radius=30) and subtract.

- Intensity Normalization: Scale pixel intensities to the range [0, 1] using whole-dataset percentile normalization (1st and 99th percentiles as anchors).

- Resizing: Resize all images to a uniform 512x512 pixels using bilinear interpolation.
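The green-channel and percentile-normalization steps can be sketched in NumPy. CLAHE, vignetting removal, and resizing are omitted here because they rely on an image library such as OpenCV. The helper names are illustrative, and pooling every pixel to estimate dataset percentiles is an assumption that may need subsampling for large datasets.

```python
import numpy as np


def green_channel(image):
    """Return the green channel as float32 (index 1 in both RGB and BGR layouts)."""
    return np.asarray(image)[..., 1].astype(np.float32)


def dataset_anchors(images, low=1.0, high=99.0):
    """Pooled low/high percentiles over a list of single-channel images."""
    pooled = np.concatenate([np.ravel(img) for img in images])
    return float(np.percentile(pooled, low)), float(np.percentile(pooled, high))


def percentile_normalize(image, p_low, p_high):
    """Scale intensities so p_low maps to 0 and p_high to 1, clipping values outside."""
    if p_high <= p_low:
        raise ValueError("p_high must exceed p_low")
    scaled = (np.asarray(image, dtype=np.float32) - p_low) / (p_high - p_low)
    return np.clip(scaled, 0.0, 1.0)
```

The anchors would be computed once on the training set and then reused unchanged for validation and test images.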

Methodology for OCT B-scans:

- Speckle Noise Reduction: Apply a non-local means denoising filter.

- Intra-volume Alignment: Register sequential B-scans using a rigid transformation based on the retinal pigment epithelium (RPE) layer.

- Flattening: Flatten each B-scan to align the RPE band horizontally.

- Region-of-Interest (ROI) Crop: Automatically crop to retain retina region, removing vitreous and choroid.
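Flattening amounts to shifting each A-scan (image column) vertically so the RPE lands on a common row. The sketch below assumes the per-column RPE row positions are already available from an upstream layer segmentation, which is not shown, and it fills vacated pixels with zeros.

```python
import numpy as np


def flatten_bscan(bscan, rpe_rows, target_row=None):
    """Shift each column so its RPE position moves to target_row (default: median RPE row)."""
    bscan = np.asarray(bscan)
    rpe_rows = np.asarray(rpe_rows, dtype=int)
    if rpe_rows.shape[0] != bscan.shape[1]:
        raise ValueError("rpe_rows needs one entry per column")
    if target_row is None:
        target_row = int(np.median(rpe_rows))

    height = bscan.shape[0]
    src = np.arange(height)
    flat = np.zeros_like(bscan)
    for col, rpe in enumerate(rpe_rows):
        dst = src + (target_row - rpe)          # destination row for every source row
        valid = (dst >= 0) & (dst < height)     # drop rows shifted outside the image
        flat[dst[valid], col] = bscan[src[valid], col]
    return flat
```

After flattening, the ROI crop reduces to slicing a fixed band of rows around `target_row`.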

Table 1: Preprocessing Parameters Summary

| Step | Fundus Parameter | Value | OCT Parameter | Value |

|---|---|---|---|---|

| Contrast Enh. | CLAHE Clip Limit | 2.0 | Denoising Strength (h) | 10 |

| Color Norm. | Percentile Range | [1, 99] | Intensity Scale | [0, 1] |

| Output Size | Pixels | 512x512 | ROI Dimensions | 512x256 |

Image Augmentation Protocol

Objective: Artificially expand and diversify the training dataset to improve model robustness.

Application: Applied only to the training set, on the fly during model training.

Techniques (Implemented via Albumentations or Torchvision):

- Geometric: Random rotation (±15°), horizontal/vertical flip, affine scaling (0.9-1.1x).

- Photometric (Fundus): Random adjustments to brightness/contrast (±10% limit), additive Gaussian noise (σ=0.01*intensity range), and simulated vignetting.

- Advanced & Pathology-Specific: Use generative models (e.g., Diffusion Models) to synthesize rare pathological features (microaneurysms, drusen) in healthy backgrounds, conditional on expert labels.

Image Quality Assessment (IQA) Protocol

Objective: Automatically filter out poor-quality images that could compromise model performance.

Method: Implement a binary classifier (Pass/Fail) based on established criteria.

Experimental Protocol for IQA Model Training:

- Ground Truth Labeling: Two retinal specialists independently grade 5000 images as "Gradable" or "Ungradable" based on criteria in Table 2. Resolve disagreements with a third grader.

- Model Architecture: Train a lightweight CNN (e.g., MobileNetV2) on preprocessed images.

- Training: Use 80/10/10 train/validation/test split. Optimizer: Adam (lr=1e-4). Loss: Weighted binary cross-entropy.

- Deployment: Integrate the trained model as a gatekeeper at the start of the pipeline. Images scoring below 0.8 probability of being "Gradable" are flagged for re-acquisition or manual review.
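The weighted loss and the gatekeeper rule can be illustrated without a deep learning framework. In the sketch below, the positive-class weight is a free parameter (the protocol does not give a value), and the function and label names are assumptions.

```python
import numpy as np


def weighted_bce(y_true, p_pred, pos_weight=1.0, eps=1e-7):
    """Mean binary cross-entropy with the positive-class term scaled by pos_weight."""
    y = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p_pred, dtype=float), eps, 1.0 - eps)
    loss = -(pos_weight * y * np.log(p) + (1.0 - y) * np.log(1.0 - p))
    return float(loss.mean())


def route_image(p_gradable, threshold=0.8):
    """Accept images at or above the threshold; flag the rest for re-acquisition or review."""
    return "accept" if p_gradable >= threshold else "flag_for_review"
```

A typical choice for `pos_weight` is the ratio of negative to positive training examples, which would be estimated from the 5000 graded images.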

Table 2: Quality Assessment Criteria

| Criteria | Gradable (Pass) | Ungradable (Fail) |

|---|---|---|

| Focus/Sharpness | Vessels sharp at optic disc. | Blurred vessels, unclear boundaries. |

| Illumination | Even, no extreme shadows. | Severe central vignetting or overexposure. |

| Field Definition | Optic disc and macula visible. | Key anatomical landmarks missing. |

| Artifacts | Minimal eyelash or dust artifacts. | Large obscuring artifacts or blur. |

| OCT Signal Strength | SSI > 25. | SSI ≤ 25. |

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions & Materials

| Item | Function/Application | Example/Details |

|---|---|---|

| Dilating Agent (Tropicamide 1%) | Induces pupil mydriasis for wider retinal view. | Essential for consistent, high-quality image acquisition. |

| Lossless Image Export Software | Extracts raw image data from proprietary devices. | Heidelberg Eye Explorer, Zeiss FORUM. |

| Pseudonymization Scripts | De-identifies images while maintaining study linkage. | Custom Python scripts using hash functions. |

| CLAHE Algorithm | Corrects uneven illumination in fundus images. | Available in OpenCV (cv2.createCLAHE). |

| Non-local Means Denoiser | Reduces speckle noise in OCT B-scans. | Available in OpenCV (cv2.fastNlMeansDenoising). |

| Albumentations Library | Provides optimized, real-time image augmentation. | Supports complex spatial & pixel-level transforms. |

| Pre-trained IQA Model | Automatically filters out low-quality data. | Can be fine-tuned from models trained on EyeQ dataset. |

| Diffusion Model Framework | Generates synthetic pathological features for data augmentation. | E.g., Stable Diffusion fine-tuned on retinal images. |

Pipeline Visualization

Title: End-to-End Retinal Image Analysis Pipeline

Title: IQA Model Development & Deployment Workflow

Within the thesis "AI-Enhanced Retinal Imaging Applications for Disease Diagnosis and Therapeutic Monitoring," this document provides detailed application notes and protocols. Retinal analysis presents unique challenges: fine anatomical structures, subtle pathological features, and multi-modal imaging data. This deep dive examines the core architectures enabling state-of-the-art performance.

Table 1: Quantitative Performance of Model Architectures on Common Retinal Tasks (2023-2024 Benchmark Studies)

| Model Type | Exemplar Architecture | Primary Task (Dataset) | Key Metric | Reported Score | Key Strength | Computational Cost (GPU VRAM) |

|---|---|---|---|---|---|---|

| CNN | Custom U-Net variant | Vessel Segmentation (DRIVE) | F1-Score | 0.830 | Local feature extraction, translation invariance | ~4 GB |

| CNN | DenseNet-121 | Diabetic Retinopathy Grading (APTOS/EyePACS) | Quadratic Weighted Kappa | 0.925 | Parameter efficiency, feature reuse | ~2 GB |

| Transformer | ViT-Base (pre-trained) | AMD Classification (AREDS) | AUC-ROC | 0.945 | Global context, superior scalability with data | ~8 GB |

| Transformer | Swin Transformer | Multi-disease classification (RFMiD) | Macro F1-Score | 0.748 | Hierarchical processing, computational efficiency | ~6 GB |

| Hybrid | TransFuse (CNN+Transformer) | Optic Disc/Cup Segmentation (REFUGE) | Dice Coefficient | 0.928 | Fuses local precision & global relationships | ~7 GB |

| Hybrid | CNN-Transformer Encoder | Retinal OCT Classification (Kermany) | Accuracy | 0.992 | Robust feature learning from limited data | ~5 GB |

Detailed Experimental Protocols

Protocol 3.1: Training a Hybrid Model for Geographic Atrophy (GA) Segmentation

- Objective: To segment GA regions from fundus autofluorescence (FAF) images using a CNN-Transformer hybrid encoder with a U-Net decoder.

- Materials: As per "The Scientist's Toolkit" below.

- Dataset Pre-processing:

- Obtain FAF images with expert GA segmentations (e.g., from AREDS2 or a proprietary cohort).

- Apply standardization: Resize to 512x512 pixels. Normalize pixel intensities to [0, 1] range.

- Apply data augmentation in real-time: random rotation (±15°), horizontal/vertical flips, brightness/contrast variation (±10%).

- Model Configuration:

- Encoder: Use a ResNet-34 backbone for initial feature maps. The feature map from the third ResNet block is fed into a Transformer module with 4 attention heads and embedding dimension of 256.

- Decoder: A symmetrical U-Net decoder with skip connections from both the CNN and Transformer encoder stages.

- Loss Function: Use a combination of Dice Loss (0.7 weight) and Binary Cross-Entropy Loss (0.3 weight) to handle class imbalance.

- Training Procedure:

- Initialize the CNN backbone with ImageNet pre-trained weights. Initialize Transformer weights randomly (He initialization).

- Use the AdamW optimizer with an initial learning rate of 1e-4 and a weight decay of 1e-5.

- Train for 150 epochs with a batch size of 16. Use a learning rate scheduler that reduces LR by half on plateau (patience=10 epochs).

- Monitor validation Dice score for early stopping (patience=20 epochs).

- Evaluation: Report pixel-wise sensitivity, specificity, Dice coefficient, and area under the precision-recall curve (AUPRC) on a held-out test set.
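The weighted Dice plus binary cross-entropy loss from the model configuration can be written out in NumPy to make the arithmetic explicit. This is a sketch of the formula only: the smoothing constant is an assumption, and actual training would use a differentiable framework implementation.

```python
import numpy as np


def dice_bce_loss(prob, target, w_dice=0.7, w_bce=0.3, smooth=1.0, eps=1e-7):
    """0.7 * (1 - soft Dice) + 0.3 * BCE for predicted probabilities and a binary mask."""
    prob = np.clip(np.asarray(prob, dtype=float), eps, 1.0 - eps)
    target = np.asarray(target, dtype=float)

    intersection = (prob * target).sum()
    dice = (2.0 * intersection + smooth) / (prob.sum() + target.sum() + smooth)
    bce = -(target * np.log(prob) + (1.0 - target) * np.log(1.0 - prob)).mean()
    return float(w_dice * (1.0 - dice) + w_bce * bce)
```

The Dice term is insensitive to the large number of background pixels, which is why it carries the larger weight when GA lesions occupy a small fraction of the image.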

Protocol 3.2: Fine-tuning a Vision Transformer for DR and DME Joint Assessment

- Objective: To adapt a pre-trained Vision Transformer (ViT) for simultaneous grading of Diabetic Retinopathy (DR) and detection of Diabetic Macular Edema (DME) from color fundus photographs.

- Dataset: Curated dataset with grades for DR (0-4) and DME (0/1).

- Fine-tuning Steps:

- Head Replacement: Replace the pre-trained ViT classification head with a new multi-task head: two parallel linear layers for DR (5-class) and DME (2-class) outputs.

- Progressive Unfreezing: Initially, freeze all ViT encoder blocks and train only the new head for 5 epochs. Subsequently, unfreeze the last 4 Transformer blocks and train for 15 epochs. Finally, unfreeze the entire model and train for 30 epochs with a 10x lower learning rate.

- Optimization: Use Adam optimizer with a cosine annealing learning rate schedule from 3e-5 to 1e-6.

- Outcome Metrics: Weighted Kappa for DR grading, AUC-ROC for DME detection, and confusion matrices for both tasks.
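The weighted kappa for DR grading is commonly computed with quadratic weights, which penalize a disagreement by the squared distance between grades. The sketch below assumes that variant and integer grades 0-4. The function name is illustrative.

```python
import numpy as np


def quadratic_weighted_kappa(rater_a, rater_b, n_classes=5):
    """Quadratic weighted kappa between two sequences of integer grades in [0, n_classes)."""
    a = np.asarray(rater_a, dtype=int)
    b = np.asarray(rater_b, dtype=int)

    observed = np.zeros((n_classes, n_classes), dtype=float)
    np.add.at(observed, (a, b), 1.0)            # confusion matrix of grade pairs

    idx = np.arange(n_classes)
    weights = (idx[:, None] - idx[None, :]) ** 2 / float((n_classes - 1) ** 2)

    # Agreement expected by chance from the two marginal grade distributions.
    expected = np.outer(observed.sum(axis=1), observed.sum(axis=0)) / observed.sum()

    chance = (weights * expected).sum()
    if chance == 0:
        return float("nan")                     # undefined when both raters use one grade
    return float(1.0 - (weights * observed).sum() / chance)
```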

Visualizations of Architectures and Workflows

CNN Feature Extraction Pipeline

Transformer Self-Attention Mechanism

Hybrid CNN-Transformer Model Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Developing Retinal AI Models

| Item / Solution | Function in Research | Example Vendor/Product |

|---|---|---|

| Public Retinal Image Datasets | Benchmarking & pre-training models. | Kaggle EyePACS, RETFound benchmark suite (Moorfields), AREDS database (NIH). |

| Annotation Software | Creating ground truth labels for segmentation/detection. | ITK-SNAP, VGG Image Annotator (VIA), proprietary clinical grader interfaces. |

| Deep Learning Framework | Model architecture, training, and evaluation. | PyTorch, TensorFlow with Keras, MONAI for medical imaging. |

| Pre-trained Model Weights | Transfer learning to overcome limited dataset sizes. | TorchVision models, RETFound (Nature), Google ViT checkpoints. |

| High-Memory GPU Compute Instance | Training large models (esp. Transformers) on high-resolution images. | NVIDIA A100/A6000 (40GB+ VRAM) via cloud providers (AWS, GCP, Azure). |

| Gradient Accumulation Script | Simulates larger batch sizes when hardware memory is limited. | Custom training loop in PyTorch. |

| Explainability Toolkit | Generating saliency maps (Grad-CAM) for model interpretability. | Captum (for PyTorch), tf-keras-vis (for TensorFlow). |

| DICOM / Medical Image Reader | Standardized handling of clinical OCT and fundus data. | pydicom, SimpleITK, OCT-Converter (for proprietary formats). |

1. Introduction in Thesis Context

Within the broader thesis on AI-enhanced retinal imaging, this document details protocols for leveraging AI not merely for diagnostic classification but for the continuous, quantitative measurement of disease biomarkers. This shift enables granular tracking of progression and sensitive evaluation of therapeutic efficacy in clinical trials and research.

2. AI Model Development & Validation Protocol

2.1. Data Curation Pipeline

- Source: Multi-center, longitudinal studies (e.g., NIH AREDS2, UK Biobank) and interventional clinical trial archives.

- Standardization: All images undergo quality assessment, illumination correction, and registration to a baseline visit.

- Annotation: Expert graders segment key features (e.g., geographic atrophy area, fluid volume, drusen volume) to generate ground truth maps.

2.2. Model Architecture & Training

- Core Architecture: Hybrid CNN-Transformer network.

- Input: Registered image pairs (baseline vs. follow-up).

- Output: Pixel-wise change maps and quantitative biomarkers (e.g., Δ in lesion area, fluid volume).

- Loss Function: Combined Dice loss for segmentation and Mean Absolute Error (MAE) for regression of biomarker values.

- Validation: 5-fold cross-validation with hold-out test set from separate clinical trial data.

3. Key Experimental Protocol: Quantifying Geographic Atrophy (GA) Progression in AMD

3.1. Objective: To automatically measure the monthly rate of GA lesion growth from serial Spectral-Domain Optical Coherence Tomography (SD-OCT) volumes.

3.2. Materials & Workflow

- Input: Two SD-OCT cube scans (512 x 128 x 1024 voxels) from the same patient, spaced 6-12 months apart.

- Step 1: Pre-processing with intensity normalization and intra-retinal layer segmentation using a pre-trained layer segmentation model.

- Step 2: AI-based GA segmentation on each volume using a U-Net model trained on expert annotations.

- Step 3: 3D registration of follow-up scan to baseline using an affine then deformable algorithm.

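- Step 4: Calculation of absolute GA area growth (in mm²) and square-root-transformed growth (change in √area, in mm) as per historical consensus; the transformation reduces the dependence of growth rate on baseline lesion size.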

- Step 5: Statistical comparison of AI-measured growth rates between treatment and placebo arms in a clinical trial setting.
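The growth calculation in Step 4 reduces to simple arithmetic once each visit's GA lesion is summarized as an en face area in mm². The sketch below assumes that summary and a visit interval expressed in months. The function and key names are illustrative.

```python
import math


def ga_growth_rates(area_baseline_mm2, area_followup_mm2, interval_months):
    """Monthly GA growth as absolute area change and as change in square-root area."""
    if interval_months <= 0:
        raise ValueError("interval_months must be positive")
    if area_baseline_mm2 < 0 or area_followup_mm2 < 0:
        raise ValueError("areas must be non-negative")
    absolute = (area_followup_mm2 - area_baseline_mm2) / interval_months
    sqrt_rate = (math.sqrt(area_followup_mm2) - math.sqrt(area_baseline_mm2)) / interval_months
    return {"absolute_mm2_per_month": absolute, "sqrt_mm_per_month": sqrt_rate}


# Example: a lesion growing from 4 mm^2 to 9 mm^2 over 10 months.
example_growth = ga_growth_rates(4.0, 9.0, 10.0)
```

The square root of an area in mm² has units of mm, so the transformed rate is reported in mm per month.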

3.3. Performance Data Summary

Table 1: Performance of AI Quantifier vs. Human Expert Graders in GA Progression Measurement

| Metric | AI Model (Mean ± SD) | Human Grader (Mean ± SD) | p-value |

|---|---|---|---|

| Dice Score (Baseline) | 0.92 ± 0.04 | 0.91 ± 0.05 | 0.15 |

| Dice Score (Follow-up) | 0.93 ± 0.03 | 0.92 ± 0.06 | 0.08 |

| Correlation of Square-Root-Transformed Growth | r = 0.98 | (Inter-grader r = 0.97) | <0.001 |

| Mean Absolute Error (Growth) | 0.032 mm²/month | 0.041 mm²/month (inter-grader) | 0.01 |

| Processing Time per Pair | ~45 seconds | ~20 minutes | N/A |

4. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Based Retinal Biomarker Quantification Experiments

| Item / Solution | Function & Explanation |

|---|---|

| Curated Longitudinal Datasets (e.g., AREDS2 DB) | Provides standardized, time-series retinal images with linked clinical outcomes for model training and biological validation. |

| Expert-Annotated Image Libraries (e.g., RETOUCH, FLUID-13) | Gold-standard ground truth for specific pathologies (fluid, GA) to train and benchmark segmentation models. |

| Cloud-based AI Training Platform (e.g., Google Vertex AI, AWS SageMaker) | Provides scalable GPU resources for developing and deploying large, complex deep learning models. |

| DICOM & OCT Visualization SDKs (e.g., Horos, Heidelberg Eye Explorer) | Enables raw data handling, visualization, and extraction of pixel-spacing metadata critical for accurate metric calculation. |

| Statistical Analysis Software (e.g., R, Python with SciPy/StatsModels) | For performing longitudinal mixed-effects models, calculating significance of treatment effects on AI-derived biomarkers. |

5. Visualization Diagrams

5.1. AI Quantification Workflow

5.2. CNN-Transformer Model Architecture

5.3. GA Progression Analysis Pathway

The integration of artificial intelligence (AI) in ophthalmic imaging is revolutionizing the identification and quantification of retinal biomarkers. Within the broader thesis of AI-enhanced retinal imaging applications, a critical translational pathway is their validation as surrogate endpoints in clinical trials for systemic and ocular diseases. This application note details the protocols and frameworks for utilizing AI-derived retinal biomarkers to accelerate and reduce the cost of drug development, providing sensitive, objective, and frequently measurable indicators of therapeutic efficacy and disease progression.

Quantitative Landscape of Retinal Biomarkers in Clinical Trials

Table 1: Key Retinal Biomarkers in Active Drug Development Pipelines (2023-2024)

| Biomarker (Imaging Modality) | Target Disease(s) | Clinical Trial Phase(s) | Primary Quantitative Measure | Correlation with Traditional Endpoints |

|---|---|---|---|---|

| Retinal Nerve Fiber Layer (RNFL) Thickness (OCT) | Multiple Sclerosis, Alzheimer's Disease, Glaucoma | II, III | Mean peri-papillary thickness (µm) | Strong correlation with brain atrophy (MRI) and cognitive decline. |

| Macular Volume / Thickness (OCT) | Diabetic Macular Edema, Uveitis, Neurodegenerative Diseases | III, IV | Central subfield thickness (CST) in µm | Validated surrogate for visual acuity; exploratory for CNS drug effects. |

| Drusen Volume & Hyperreflective Foci (OCT) | Age-related Macular Degeneration (AMD) | II, III | Total drusen volume (mm³) in defined grid | Predicts progression to geographic atrophy or neovascular AMD. |

| Retinal Vascular Caliber & Fractal Dimension (Fundus Photography) | Cardiovascular Disease, Diabetic Retinopathy, Hypertension | II, Observational | Central Retinal Artery/Venule Equivalent (CRAE/CRVE) in µm | Associated with systemic vascular events and mortality. |

| Choroidal Thickness & Vascularity Index (OCT/OCTA) | Central Serous Chorioretinopathy, Inflammatory Diseases, Myopia | II | Subfoveal choroidal thickness (µm), Choroidal Vascular Index (CVI) | Indicator of inflammatory activity and treatment response. |

Table 2: Performance Metrics of AI Algorithms for Biomarker Quantification

| Algorithm Task | Modality | Key Performance Metric (Mean ± SD or [Range]) | Validation Cohort Size (N) | Reference Standard |

|---|---|---|---|---|

| Automated RNFL Segmentation | OCT | Dice Coefficient: 0.94 ± 0.03 | > 1,000 scans | Manual grading by experts. |

| Drusen Volume Segmentation | OCT | Intraclass Correlation Coefficient (ICC): 0.98 [0.97–0.99] | 500 patients | Semi-automated software. |

| Vessel Caliber Measurement | Fundus Photo | Pearson's r vs. human: 0.92 for CRAE | 3,000 images | IVAN tool measurements. |

| OCTA Vessel Density Calculation | OCTA | Coefficient of Variation (Repeatability): < 2.5% | 150 subjects | Repeated scans. |

Experimental Protocols for Biomarker Validation & Application

Protocol 3.1: Longitudinal Analysis of OCT Biomarkers in Neurodegenerative Disease Trials

Objective: To quantify the rate of RNFL thinning as a surrogate for neuronal loss in a 24-month clinical trial for an Alzheimer's disease therapeutic.

Materials & Workflow:

- Image Acquisition: Perform spectral-domain OCT (e.g., Cirrus HD-OCT, Heidelberg Spectralis) on all participants at baseline, month 12, and month 24. Use internal fixation and eye-tracking. Acquire 3 scans per visit; use the highest-quality scan for analysis.

- AI-Powered Segmentation: Process scans through a validated, FDA-cleared AI segmentation software (e.g., AI-RNFL Analyzer v2.1). The algorithm outputs global and sectoral RNFL thickness maps and metrics.

- Quality Control (QC): Implement an automated QC flagging system (Signal Strength < 7, segmentation errors). Flagged scans undergo manual adjudication by a masked reading center grader.

- Data Aggregation: Export thickness data (µm) for the global average and four quadrants (superior, inferior, nasal, temporal) to a structured trial database.

- Statistical Endpoint Analysis: The primary surrogate endpoint is the mean change in global RNFL thickness from baseline to month 24. Use a mixed-effects model for repeated measures (MMRM) to compare treatment vs. placebo arms, adjusting for baseline age and disease severity.

Protocol 3.2: Dynamic Retinal Vessel Analysis as a Surrogate for Cardiovascular Outcomes

Objective: To assess changes in retinal vessel caliber in response to a novel anti-hypertensive drug over 6 months.

Materials & Workflow:

- Standardized Imaging: Obtain 45-degree digital fundus photographs centered on the optic disc (e.g., Topcon TRC-NW400) under standardized lighting and dilation.

- Centralized AI Analysis: Transmit de-identified images to a central reading center. Process images through a convolutional neural network (CNN)-based vessel analysis pipeline (e.g., DeepVesselNet).

- Caliber Measurement: The AI system identifies all vessels in the zone 0.5–1.0 disc diameters from the disc margin, classifies them as arteries or veins, and calculates the CRAE and CRVE using the revised Knudtson-Parr-Hubbard formula.

- Endpoint Definition: The surrogate endpoint is the treatment-induced difference in CRAE change from baseline to month 6. A positive change (arteriolar widening) indicates reduced vascular tone and improved microcirculatory health.
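The revised Knudtson formula combines the six largest vessel widths by repeatedly pairing the largest with the smallest and replacing each pair with a coefficient times the root sum of squares (0.88 for arterioles, 0.95 for venules), carrying any unpaired middle value into the next round. The sketch below assumes vessel widths in µm have already been measured and classified upstream. The function names are illustrative.

```python
import math


def knudtson_equivalent(widths_um, coefficient):
    """Iteratively combine the six largest widths into a single central equivalent."""
    widths = sorted(widths_um, reverse=True)[:6]
    if not widths:
        raise ValueError("at least one vessel width is required")
    while len(widths) > 1:
        widths.sort()
        combined = []
        while len(widths) >= 2:
            smallest = widths.pop(0)
            largest = widths.pop(-1)
            combined.append(coefficient * math.sqrt(smallest ** 2 + largest ** 2))
        combined.extend(widths)   # odd count: carry the middle width forward unchanged
        widths = combined
    return widths[0]


def crae(arteriole_widths_um):
    """Central retinal arteriolar equivalent (coefficient 0.88)."""
    return knudtson_equivalent(arteriole_widths_um, 0.88)


def crve(venule_widths_um):
    """Central retinal venular equivalent (coefficient 0.95)."""
    return knudtson_equivalent(venule_widths_um, 0.95)
```

The arteriole-to-venule ratio then follows as `crae(a) / crve(v)`, and the trial endpoint is the between-arm difference in CRAE change from baseline.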

Visualizing Workflows and Pathways

Diagram 1: AI-Enhanced Retinal Biomarker Pipeline in Clinical Trials

Diagram 2: Pathophysiological Link: Retinal Biomarkers to Systemic Disease

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Retinal Biomarker Research & Trials

| Item / Solution | Function in Protocol | Example Product / Specification |

|---|---|---|

| Validated AI Analysis Software | Core tool for automated, high-throughput, and objective quantification of retinal features from images. | DeepDR (for DR grading), Heidelberg Eye Explorer with AI modules, IRIS Registry analytics. |

| Standardized Imaging Phantoms | Ensures calibration and longitudinal consistency across different imaging devices and trial sites. | OCT phantom with certified layer thickness (e.g., from AMR). Fundus photography test targets. |

| Central Reading Center Platform | Secure, HIPAA/GDPR-compliant platform for image upload, storage, QC, blinded grading, and data management. | Medici (ICON plc), PIE (Digital Angiography Reading Center). |

| Synthetic Retinal Image Dataset | For training and validating AI algorithms where real clinical data with rare phenotypes is limited. | Images generated via Generative Adversarial Networks (GANs) trained on real annotated datasets such as RETOUCH (OCT fluid) or STARE (vessels). |

| Biomarker Data Aggregation Suite | Statistical software package pre-configured for longitudinal analysis of ophthalmic surrogate endpoints. | R with lme4 package; SAS PROC MIXED templates for MMRM analysis of OCT data. |

| QC Flagging Algorithm Library | Pre-defined digital rules to automatically detect and flag poor-quality scans for reacquisition or review. | Rules-based filters for signal strength, motion artifact, blinking, and incorrect segmentation. |

Application Notes and Protocols

1. Thesis Context: Integration into AI-Enhanced Retinal Imaging Research

This work contributes to the broader thesis that retinal imaging, enhanced by artificial intelligence (AI), serves as a non-invasive window into systemic health. The retina, as an embryological extension of the central nervous system, offers a unique opportunity to visualize microvasculature, neural tissue, and inflammatory processes in vivo. The core hypothesis is that systemic pathologies imprint quantitative and qualitative signatures on the retinal architecture, which can be decoded via deep learning to predict future disease risk, stratify patient populations, and monitor therapeutic efficacy in drug development.

2. Quantitative Data Synthesis: Performance of Recent AI Models

Table 1: Performance of Select AI Models for Systemic Disease Prediction from Retinal Images

| Target Disease / Risk Factor | Model Architecture | Dataset Size (Images) | Primary Metric | Reported Performance | Key Biomarkers Identified |

|---|---|---|---|---|---|

| Cardiovascular Disease (CVD) Risk (e.g., CVD event, stroke) | Deep Learning (CNN with Attention) | ~150,000 (UK Biobank, EyePACS) | AUC-ROC | 0.70-0.80 for 5-year risk | Vessel caliber, tortuosity, fractal dimension, AV nicking |

| Chronic Kidney Disease (CKD) Progression | Ensemble (ResNet + Vascular Features) | ~35,000 (SEED, Singapore) | AUC-ROC | 0.73 for predicting 3-year progression | Retinal arteriolar narrowing, enhanced venular curvature |

| Alzheimer's Disease & Cognitive Decline | Multimodal CNN (Image + Demographics) | ~3,000 (ADNI, MemoRY) | AUC-ROC | 0.82-0.88 for AD detection | Reduced retinal nerve fiber layer thickness, foveal avascular zone enlargement, altered vessel density |

| Hemoglobin A1c & Dysglycemia | Regression CNN | ~120,000 (UK Biobank) | Mean Absolute Error (MAE) | MAE ~0.44% for HbA1c | Vessel density, hemorrhages/exudates, optic disc features |

| Liver Function & Cirrhosis Risk | Transfer Learning (ImageNet to Retina) | ~66,000 (UK Biobank) | Hazard Ratio (HR) | HR 2.17 for high-risk vs low-risk retina phenotype | Arcus lipoides, specific vessel tortuosity patterns |

3. Experimental Protocols

Protocol 3.1: End-to-End Model Development for CVD Risk Prediction

Objective: To develop and validate a deep learning model that predicts 5-year major adverse cardiovascular events (MACE) from color fundus photographs.

Materials:

- Datasets: Paired retinal images and longitudinal health records (e.g., UK Biobank).

- Software: Python 3.9+, PyTorch/TensorFlow, OpenCV, scikit-learn.

- Hardware: GPU cluster (e.g., NVIDIA A100).

Methodology:

- Data Curation & Labeling: Link retinal images to electronic health records. Define MACE endpoint (myocardial infarction, stroke, cardiovascular death). Split at the participant level into training (70%), validation (15%), and held-out testing (15%) cohorts so that no participant contributes images to more than one cohort.

- Preprocessing: Standardize image resolution to 512x512 pixels. Apply illumination correction (CLAHE). Normalize pixel intensity.

- Model Architecture: Implement a Dual-Stream Neural Network.

  - Stream A (Anatomical): A pre-trained ResNet-50 backbone to extract global features.

  - Stream B (Vascular): A custom U-Net to segment vasculature, followed by a CNN to extract quantitative vascular features (caliber, fractal dimension).

  - Fusion: Concatenate feature vectors from Stream A and B. Pass through fully connected layers with dropout (0.5).

- Training: Use Adam optimizer (lr=1e-4), binary cross-entropy loss. Employ 5-fold cross-validation on the training set.

- Validation & Interpretation: Evaluate on the validation set using AUC-ROC, precision-recall. Apply Gradient-weighted Class Activation Mapping (Grad-CAM) to highlight predictive regions (e.g., specific vascular beds).

- External Testing: Final evaluation on the completely held-out test set and any available external datasets (e.g., EyePACS).
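
The 70/15/15 cohort split in the Data Curation step can be sketched as follows. This is a minimal illustration, not the protocol's reference implementation: it assumes the split is made at the patient level (so that both eyes of one participant never land in different cohorts), which the protocol does not state explicitly. The function name `split_by_patient` and the toy identifiers are hypothetical.

```python
import random
from collections import defaultdict


def split_by_patient(image_to_patient, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign whole patients to train/val/test so no patient spans two cohorts.

    Assumption: grouping by patient is our choice to avoid leakage between
    eyes or repeat visits; the protocol only specifies the 70/15/15 ratio.
    """
    patients = sorted(set(image_to_patient.values()))
    random.Random(seed).shuffle(patients)

    n_train = round(fractions[0] * len(patients))
    n_val = round(fractions[1] * len(patients))

    cohort_of = {}
    for i, pid in enumerate(patients):
        if i < n_train:
            cohort_of[pid] = "train"
        elif i < n_train + n_val:
            cohort_of[pid] = "val"
        else:
            cohort_of[pid] = "test"  # held-out set, untouched until final evaluation

    splits = defaultdict(list)
    for image_id, pid in image_to_patient.items():
        splits[cohort_of[pid]].append(image_id)
    return dict(splits)


# Toy data: 100 hypothetical patients with a left and a right eye image each.
image_to_patient = {
    f"img_{p}_{eye}": f"patient_{p}" for p in range(100) for eye in ("L", "R")
}
splits = split_by_patient(image_to_patient)
print({name: len(ids) for name, ids in splits.items()})
```

The 5-fold cross-validation in the Training step would then be run inside the `train` cohort only, again grouping folds by patient if this assumption is adopted.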

Protocol 3.2: Biomarker Discovery via Explainable AI (XAI)

Objective: To identify and quantify novel retinal biomarkers associated with systemic disease.

Materials: Trained prediction model, segmented retinal images, statistical software (R, Python).

Methodology:

- Feature Attribution: Apply SHAP (SHapley Additive exPlanations) or integrated gradients to the trained model from Protocol 3.1 to rank image regions and input features by their contribution to the prediction.

- Phenotype Extraction: Use optimized segmentation models (e.g., DRUNET for vessels, TransUNet for lesions) to extract quantifiable phenotypes: arteriole-to-venule ratio (AVR), vessel density (VD) in concentric zones, foveal avascular zone (FAZ) area/perimeter.

- Association Analysis: Perform multivariate linear/logistic regression in epidemiological cohorts, linking extracted phenotypes to systemic biomarkers (e.g., serum creatinine, HbA1c, CRP), adjusting for confounders (age, sex, BMI).

- Pathway Correlation: Correlate significant retinal phenotypes with known disease pathways using genomic/proteomic data where available.
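
As an illustration of the Phenotype Extraction step, the sketch below computes vessel density (VD) in concentric zones from a binary vessel mask. It is a simplified, assumption-laden example: the mask is a plain nested list, the zone radii are in pixels, and the zone centre (e.g., the fovea or optic disc) is supplied by the caller. The function name `vessel_density_by_zone` and the toy mask are hypothetical and not taken from any of the segmentation tools named above.

```python
import math


def vessel_density_by_zone(mask, center, zone_edges):
    """Fraction of vessel pixels in each concentric ring around `center`.

    mask       : 2D list of 0/1 values (1 = vessel pixel)
    center     : (row, col) of the zone centre, in pixels
    zone_edges : increasing radii in pixels; ring z covers
                 zone_edges[z] <= distance < zone_edges[z + 1]
    """
    n_zones = len(zone_edges) - 1
    vessel = [0] * n_zones
    total = [0] * n_zones
    for r, row in enumerate(mask):
        for c, value in enumerate(row):
            dist = math.hypot(r - center[0], c - center[1])
            for z in range(n_zones):
                if zone_edges[z] <= dist < zone_edges[z + 1]:
                    total[z] += 1
                    vessel[z] += 1 if value else 0
                    break
    # Rings that contain no pixels are reported as NaN rather than 0.
    return [v / t if t else float("nan") for v, t in zip(vessel, total)]


# Toy 9x9 mask with a single horizontal "vessel" through the centre row.
mask = [[1 if r == 4 else 0 for _ in range(9)] for r in range(9)]
densities = vessel_density_by_zone(mask, center=(4, 4), zone_edges=[0, 2, 4])
print(densities)
```

The per-zone densities produced this way are the kind of tabular phenotype that the Association Analysis step would enter into a regression alongside age, sex, and BMI.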

4. Visualizations

AI Workflow: Retinal Image to Systemic Risk

Systemic Disease Pathways Mirrored in Retina

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Retinal Systemic Risk Research

| Item | Function & Relevance |

|---|---|

| Curated Paired Datasets (e.g., UK Biobank, ARIC, SEED) | Large-scale, longitudinal datasets with retinal images linked to systemic health outcomes are foundational for model training and validation. |

| Pre-trained Segmentation Models (e.g., IOWA Reference Algorithms, IRISToolbox) | High-performance models for segmenting vessels, optic disc, and fovea provide crucial quantitative input features for prediction models. |

| Explainable AI (XAI) Libraries (SHAP, Captum, LIME) | Essential for moving beyond a "black box" to identify which retinal regions/features drive predictions, enabling biomarker discovery and clinical trust. |

| Standardized Image Preprocessing Pipelines (e.g., Python libraries for CLAHE, registration, quality assessment) | Ensures consistency in input data, reducing technical variance and improving model generalizability across different imaging devices. |

| Biobanking & OMICS Linkage | The ability to correlate retinal imaging phenotypes with genomic, proteomic, and metabolomic data from the same patients is key to validating biological pathways. |

Navigating the Challenges: Optimizing AI Models for Robustness and Clinical Workflow Integration

In AI-enhanced retinal imaging research, with applications including disease screening, prognosis, and efficacy biomarkers for drug development, model performance is critically limited by three ubiquitous data challenges: scarcity of high-quality annotated images, severe class imbalance (e.g., rare pathologies vs. normal scans), and variability in annotations from multiple expert graders. This document provides application notes and protocols to address these pitfalls.

Table 1: Common Data Challenges in Public Retinal Imaging Datasets

| Dataset | Total Images | Pathology Class Prevalence (%) | Number of Annotators | Inter-Grader Variability (Kappa) |

|---|---|---|---|---|

| EyePACS (Diabetic Retinopathy) | 88,702 | Mild: 26%, Mod: 13%, Severe: 3%, PDR: 1% | 1-3 | 0.70 - 0.85 (weighted) |

| MESSIDOR (DR & DME) | 1,200 | DR0: 55%, DR1: 21%, DR2: 15%, DR3: 9% | 1 | N/A |

| RFMiD (Multi-Disease) | 3,200 | Glaucoma Suspect: 8%, AMD: 7%, DR: 12% | 3 | 0.65 - 0.80 |

| ODIR-5K (Multi-Label) | 5,000 | Cataract: 11%, Glaucoma: 4%, Myopia: 7% | Multiple | Reported as "Moderate" |

Table 2: Impact of Mitigation Techniques on Model Performance (AUC)

| Technique | Baseline AUC (No Mitigation) | Post-Mitigation AUC | Primary Dataset Used |

|---|---|---|---|

| Synthetic Data (GANs) | 0.81 | 0.87 (+0.06) | RFMiD |

| Weighted Loss Function | 0.78 | 0.84 (+0.06) | EyePACS |

| Test-Time Augmentation | 0.85 | 0.88 (+0.03) | MESSIDOR |

| Consensus Annotation | 0.83 | 0.89 (+0.06) | ODIR-5K |

Application Notes & Protocols

Protocol for Addressing Data Scarcity

Protocol Title: Generation and Integration of Synthetic Retinal Fundus Images via StyleGAN2-ADA

1. Objective: To augment limited training data with high-fidelity synthetic retinal images conditioned on disease class.

2. Materials:

- Source Dataset (e.g., RFMiD subset with >100 images per target class).

- StyleGAN2-ADA framework (PyTorch).

- GPU with >12GB VRAM (e.g., NVIDIA V100, A100).

3. Procedure:

- Step 1 - Data Curation: Isolate and preprocess all images for the target scarce class (e.g., "Choroidal Neovascularization"). Apply standard resizing to 1024x1024, vignetting correction, and luminosity normalization.

- Step 2 - Model Training: Train StyleGAN2-ADA for 25k iterations with ADA (adaptive discriminator augmentation) target set to 0.6. Use a conditional label to ensure class-specific generation.

- Step 3 - Quality Filtering: Generate a pool of 5,000 synthetic images. Filter them with an Inception-v3 classifier (pretrained, then fine-tuned on the real images so that it outputs the disease classes), retaining only images whose confidence score for the target class exceeds 0.7.

- Step 4 - Integration: Mix the filtered synthetic images (recommended ratio: 1:1 synthetic:real) with the original training set. Ensure shuffle at the beginning of each epoch.
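
Steps 3 and 4 can be sketched as below. This is a minimal illustration under stated assumptions: the classifier confidences are taken as already computed and passed in as a dictionary, and the function name `filter_and_mix`, the identifiers, and the toy scores are hypothetical. Only the 0.7 threshold and the 1:1 ratio come from the protocol.

```python
import random


def filter_and_mix(real_ids, synthetic_scores, threshold=0.7, ratio=1.0, seed=0):
    """Keep confident synthetic images, cap them at `ratio` x real, and shuffle.

    real_ids         : list of real image identifiers
    synthetic_scores : dict mapping synthetic image id -> classifier confidence
                       for the target class (assumed to be precomputed)
    """
    rng = random.Random(seed)
    kept = [img for img, score in synthetic_scores.items() if score > threshold]

    # Enforce the synthetic:real ratio (1:1 by default) by subsampling.
    max_synth = int(ratio * len(real_ids))
    if len(kept) > max_synth:
        kept = rng.sample(kept, max_synth)

    combined = [(i, "real") for i in real_ids] + [(i, "synthetic") for i in kept]
    rng.shuffle(combined)  # in training, reshuffle at the start of every epoch
    return combined


# Toy example: 100 real images and 500 synthetic candidates with made-up scores.
real_ids = [f"real_{i}" for i in range(100)]
synthetic_scores = {f"syn_{i}": i / 500 for i in range(500)}
training_set = filter_and_mix(real_ids, synthetic_scores)
print(len(training_set))
```

Keeping the `"real"`/`"synthetic"` tag on each item makes it straightforward to exclude synthetic images from any validation or test split, as the Validation section requires.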

4. Validation:

- Train two identical ResNet-50 models: one on the original dataset and one on the augmented dataset.

- Compare AUC, together with per-class recall and specificity, on a held-out, real-only validation set. Expect a significant increase in recall for the scarce class without degrading specificity.

Diagram Title: Synthetic Data Augmentation Workflow

Protocol for Addressing Class Imbalance

Protocol Title: Class-Balanced Training Using Focal Loss and Strategic Batch Sampling

1. Objective: To mitigate bias towards the majority class (e.g., No DR) during model optimization.

2. Materials:

- Imbalanced training dataset (e.g., EyePACS).

- Deep learning framework (TensorFlow/PyTorch).

- Implementation of Focal Loss.

3. Procedure:

- Step 1 - Batch Composition: Implement a batch sampler that ensures each mini-batch of size N has at least M examples from each minority class (e.g., for batch size 32, M=4). This is not pure oversampling.

- Step 2 - Loss Function: Use Focal Loss instead of standard Cross-Entropy.

- Formula: $\mathrm{FL}(p_t) = -\alpha_t (1 - p_t)^{\gamma} \log(p_t)$

- Recommended starting parameters: γ (gamma) = 2.0; α (alpha) set inversely proportional to class frequency.

- Step 3 - Optimization: Train the model using the balanced batches and Focal Loss. Monitor per-class precision and recall on the validation set every epoch.

- Step 4 - Threshold Tuning: After training, tune the decision threshold for each minority class on the validation set to maximize the F1-score, moving away from the default 0.5.
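
To make the Focal Loss in Step 2 concrete, here is a small pure-Python sketch of the per-sample loss and of one possible way to set α inversely proportional to class frequency. The normalisation of α (weights averaging to 1) is an assumption made for this example, and the class counts are invented; in practice the same expression would be written in the chosen deep learning framework.

```python
import math


def inverse_frequency_alpha(class_counts):
    """Per-class weights proportional to 1 / frequency.

    Assumption: weights are rescaled so that they average to 1; the protocol
    only says alpha is set inversely proportional to class frequency.
    """
    inv = [1.0 / c for c in class_counts]
    scale = len(inv) / sum(inv)
    return [w * scale for w in inv]


def focal_loss(probs, target, alpha, gamma=2.0, eps=1e-12):
    """FL(p_t) = -alpha_t * (1 - p_t)**gamma * log(p_t) for a single sample.

    probs  : predicted class probabilities (summing to 1)
    target : index of the true class
    """
    p_t = min(max(probs[target], eps), 1.0)  # clamp to avoid log(0)
    return -alpha[target] * (1.0 - p_t) ** gamma * math.log(p_t)


# Invented counts: a majority class and a rare class.
alpha = inverse_frequency_alpha([9000, 1000])

easy = focal_loss([0.9, 0.1], target=0, alpha=[1.0, 1.0])  # well classified
hard = focal_loss([0.3, 0.7], target=0, alpha=[1.0, 1.0])  # misclassified
print(alpha, easy, hard)
```

With γ = 0 and all α equal to 1 the expression reduces to standard cross-entropy, which is a convenient sanity check when porting it to a framework.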

4. Validation:

- Report macro-average F1-score in addition to overall accuracy.

- Generate confusion matrices for the test set to verify improved minority class performance.
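
The threshold tuning in Step 4 can be illustrated with a simple grid search for the F1-maximising cut-off of one minority class. This is a sketch only: the candidate grid (0.01 to 0.99), the function names, and the toy labels and scores are assumptions for the example. The threshold should be selected on the validation set and then applied unchanged to the test set.

```python
def f1_score(y_true, y_pred):
    """Binary F1 for the positive class (label 1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def best_threshold(y_true, scores, candidates=None):
    """Return (threshold, F1) maximising F1 over a grid of candidate cut-offs."""
    if candidates is None:
        candidates = [i / 100 for i in range(1, 100)]
    best_t, best_f1 = 0.5, -1.0
    for t in candidates:
        preds = [1 if s >= t else 0 for s in scores]
        f1 = f1_score(y_true, preds)
        if f1 > best_f1:  # ties keep the lowest threshold encountered
            best_t, best_f1 = t, f1
    return best_t, best_f1


# Toy validation data: the rare class (1) receives systematically low scores.
y_val = [0, 0, 0, 0, 0, 0, 1, 1]
scores_val = [0.05, 0.10, 0.10, 0.20, 0.20, 0.45, 0.30, 0.40]

f1_default = f1_score(y_val, [1 if s >= 0.5 else 0 for s in scores_val])
tuned_t, tuned_f1 = best_threshold(y_val, scores_val)
print(f1_default, tuned_t, tuned_f1)
```

In this toy case the default 0.5 cut-off misses every minority example, while a lower threshold recovers both at the cost of one false positive.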

Diagram Title: Class Imbalance Mitigation Protocol

Protocol for Addressing Annotation Variability

Protocol Title: Establishing Consensus Ground Truth via Multi-Grader Aggregation and Uncertainty Estimation

1. Objective: To create a robust ground truth label from multiple noisy annotations and enable models to estimate prediction uncertainty.

2. Materials:

- Dataset with multiple independent annotations per image (e.g., from ODIR-5K or in-house labeled data).

- Access to annotation software (e.g., Labelbox, CVAT) or records.

3. Procedure:

- Step 1 - Annotation Collection: For a subset of critical or ambiguous cases, procure annotations from a minimum of 3 expert graders (retinal specialists, optometrists).

- Step 2 - Consensus Method:

- For binary tasks: Use majority vote. Ties can be resolved by a senior adjudicator.

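- For multi-class or severity grades: Use the soft-label approach. Calculate the probability vector over classes as the fraction of graders choosing each class (e.g., for five-level DR grading with three graders, one assigning grade 1 and two assigning grade 2: [0.0, 0.33, 0.67, 0.0, 0.0]).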

- For segmentation tasks: Use the STAPLE algorithm to combine pixel-level masks.

- Step 3 - Model Training with Uncertainty: Train the model using the consensus labels (hard or soft). At inference, keep the dropout layers active (Monte Carlo Dropout) and run several stochastic forward passes per image to obtain an approximate Bayesian estimate of model uncertainty.

- Step 4 - Flagging System: Implement a post-processing rule to flag predictions where the model's uncertainty (e.g., entropy of predictions across dropout runs) exceeds a set threshold for expert review.
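
The soft-label consensus in Step 2 and the entropy-based flag in Step 4 can be sketched as follows. This is an illustrative example rather than a prescribed implementation: the dropout passes are represented as precomputed probability lists, the entropy is taken over the mean prediction across passes (one common choice), and the review threshold of 0.5 nats is an arbitrary placeholder that would need tuning on validation data.

```python
import math


def soft_label(grades, num_classes):
    """Fraction of graders choosing each class, e.g. [1, 2, 2] -> [0, 1/3, 2/3, 0, 0]."""
    counts = [0] * num_classes
    for g in grades:
        counts[g] += 1
    return [c / len(grades) for c in counts]


def predictive_entropy(mc_probs):
    """Entropy (in nats) of the mean class distribution over dropout passes.

    mc_probs : list of per-pass probability vectors for one image
    """
    n_runs = len(mc_probs)
    n_classes = len(mc_probs[0])
    mean = [sum(run[k] for run in mc_probs) / n_runs for k in range(n_classes)]
    return -sum(p * math.log(p) for p in mean if p > 0)


def needs_review(mc_probs, threshold):
    """Flag an image for expert review when uncertainty exceeds `threshold`."""
    return predictive_entropy(mc_probs) > threshold


# Three graders on the five-level DR scale: one says grade 1, two say grade 2.
label = soft_label([1, 2, 2], num_classes=5)

# Hypothetical dropout passes for a confident and an unstable prediction.
confident = [[0.98, 0.02], [0.97, 0.03]]
unstable = [[0.90, 0.10], [0.10, 0.90]]
REVIEW_THRESHOLD = 0.5  # placeholder value, not from the protocol
print(label, needs_review(confident, REVIEW_THRESHOLD), needs_review(unstable, REVIEW_THRESHOLD))
```

When training on soft labels, the loss is computed against the full probability vector rather than a single class index.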

4. Validation:

- Measure the agreement (Cohen's Kappa) between the model's predictions and the consensus ground truth versus any single grader.

- Assess the correlation between the model's predicted uncertainty and its error rate.
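
For the agreement measurement above, unweighted Cohen's Kappa can be computed directly from two label sequences, as in the sketch below. The labels are invented for illustration. For ordinal severity grades a weighted Kappa is often preferred, which this minimal version does not implement.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Unweighted Cohen's Kappa between two equally long label sequences."""
    n = len(labels_a)
    observed = sum(1 for a, b in zip(labels_a, labels_b) if a == b) / n

    # Chance agreement from each rater's marginal label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    classes = set(labels_a) | set(labels_b)
    expected = sum(count_a[k] * count_b[k] for k in classes) / (n * n)

    if expected == 1.0:  # both raters used a single identical label throughout
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Invented example: consensus labels vs. model predictions on ten images.
consensus = [0, 0, 1, 1, 0, 1, 0, 0, 1, 1]
model = [0, 0, 1, 1, 0, 1, 0, 1, 1, 1]
kappa_model = cohen_kappa(consensus, model)
print(kappa_model)
```

Running the same function for each individual grader against the consensus gives the comparison baseline described in the first validation bullet.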

Diagram Title: Multi-Grader Consensus & Uncertainty Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI Retinal Imaging Research

| Item / Reagent | Function & Application | Example/Note |

|---|---|---|

| Public Retinal Datasets | Foundation for training and benchmarking models. | EyePACS, MESSIDOR, RFMiD, ODIR-5K. Ensure proper data use agreements. |

| Synthetic Data Generation Tools | Augment scarce classes, simulate pathologies. | StyleGAN2-ADA, Diffusion Models (e.g., Stable Diffusion fine-tunes). |

| Annotation Platforms | Facilitate multi-grader labeling campaigns and consensus. | Labelbox, CVAT, Supervisely. Critical for generating high-quality ground truth. |

| Class-Balanced Loss Functions | Directly counter class imbalance during backpropagation. | Focal Loss, Class-Balanced Loss, LDAM Loss. Implement in PyTorch/TF. |

| Monte Carlo Dropout Module | Enables model uncertainty estimation at inference time. | Standard dropout layer activated during both training and inference passes. |

| Reference Standards (Graders) | The "gold standard" for validation and consensus. | Access to 2-3 certified retinal specialists for adjudication. |

| Metric Suites (beyond Accuracy) | Comprehensive evaluation of model performance. | Scikit-learn for per-class Precision, Recall, F1, Macro-Averages, AUC-ROC. |

A critical bottleneck in AI-enhanced retinal imaging research is the translation of high-performing research models into robust clinical tools. The central challenge is model generalizability: ensuring that diagnostic algorithms maintain accuracy across the inherent variability of real-world data sources. This document provides application notes and experimental protocols to systematically quantify and mitigate generalization gaps across imaging devices, demographic populations, and image quality spectra.

Recent studies highlight performance degradation when models encounter distribution shifts.

Table 1: Reported Model Performance Degradation Across Domains

| Domain Shift Type | Original Test Performance (AUC) | External/Shifted Test Performance (AUC) | Performance Drop (ΔAUC) | Key Variable |

|---|---|---|---|---|

| Imaging Device (Fundus Camera) | 0.98 (Canon CR-2) | 0.87 (Zeiss Visucam 500) | -0.11 | Camera manufacturer, lens optics, FOV |

| Population Demographics (DR Detection) | 0.95 (Multi-ethnic U.S. cohort) | 0.82 (African clinical cohort) | -0.13 | Skin pigmentation, disease prevalence |

| Image Quality (Grading Readability) | 0.96 (High-quality images) | 0.78 (Low-quality, gradable images) | -0.18 | Illumination, clarity, artifact presence |

Experimental Protocols